mirror of

https://github.com/huggingface/diffusers.git

synced 2026-02-22 10:50:35 +08:00

Compare commits

83 Commits

v0.13.1-pa

...

multi_cont

| Author | SHA1 | Date |
|---|---|---|
| | 6b2d9e6acd | |
| | 78507bda24 | |
| | d2a5247a1f | |
| | 309d8cf9ab | |
| | b285d94e10 | |
| | 55660cfb6d | |
| | 46bef6e31d | |
| | 22a31760c4 | |
| | f0b661b8fb | |
| | 8552fd7efa | |
| | e09a7d01c8 | |
| | d3ce6f4b1e | |
| | ff91f154ee | |
| | 62bea2df36 | |
| | 9136be14a7 | |
| | 7004ff55d5 | |
| | ca7ca11bcd | |
| | c7da8fd233 | |
| | b8bfef2ab9 | |
| | f3f626d556 | |
| | b7b4683bdc | |
| | 56958e1177 | |
| | ec021923d2 | |
| | 1598a57958 | |
| | 63805f8af7 | |
| | 9920c333c6 | |
| | f38e3626cd | |
| | 5f826a35fb | |
| | f7278638e4 | |
| | b36cbd4fba | |
| | 2e3541d7f4 | |
| | 2b4f849db9 | |
| | e4c356d3f6 | |
| | 2ea1da89ab | |
| | fa6d52d594 | |
| | a72a057d62 | |
| | 2f489571a7 | |
| | e75eae3711 | |
| | 5e5ce13e2f | |
| | 7f0f7e1e91 | |
| | 3d2648d743 | |
| | 1f4deb697f | |
| | f20c8f5a1a | |
| | 5b6582cf73 | |
| | 4f0141a67d | |
| | 1021929313 | |
| | 8f21a9f0e2 | |
| | d9b9533c7e | |
| | 801484840a | |
| | 8dfff7c015 | |
| | 1a6fa69ab6 | |
| | 664b4de9e2 | |
| | e4a9fb3b74 | |
| | eadf0e2555 | |
| | 856dad57bb | |
| | a75ac3fa8d | |
| | 477aaa96d0 | |
| | e3a2c7f02c | |
| | 1586186eea | |
| | 42beaf1d23 | |
| | 824cb538b1 | |
| | a0549fea44 | |
| | 1c36a1239e | |
| | 48a2eb33f9 | |
| | 0e975e5ff6 | |
| | 7f43f65235 | |
| | 6960e72225 | |
| | 5de4347663 | |
| | 54bc882d96 | |
| | 589faa8c88 | |
| | 39a3c77e0d | |
| | 17ecf72d44 | |
| | f3fac68c55 | |
| | 8f1fe75b4c | |
| | 2ab4fcdb43 | |
| | d7cfa0baa2 | |
| | 4135558a78 | |
| | 45572c2485 | |
| | 5f65ef4d0a | |
| | c85efbb9ff | |
| | 1e5eaca754 | |
| | 55de50921f | |
| | 3231712b7d | |

1

.github/workflows/build_documentation.yml

vendored


@@ -13,6 +13,7 @@ jobs:

|

||||

with:

|

||||

commit_sha: ${{ github.sha }}

|

||||

package: diffusers

|

||||

notebook_folder: diffusers_doc

|

||||

languages: en ko

|

||||

secrets:

|

||||

token: ${{ secrets.HUGGINGFACE_PUSH }}

|

||||

|

||||

1

.github/workflows/pr_quality.yml

vendored


@@ -47,3 +47,4 @@ jobs:

|

||||

run: |

|

||||

python utils/check_copies.py

|

||||

python utils/check_dummies.py

|

||||

make deps_table_check_updated

|

||||

|

||||

@@ -1,5 +1,5 @@

|

||||

<!---

|

||||

Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License");

|

||||

you may not use this file except in compliance with the License.

|

||||

|

||||

@@ -467,12 +467,12 @@ image.save("ddpm_generated_image.png")

|

||||

- [Unconditional Diffusion with continuous scheduler](https://huggingface.co/google/ncsnpp-ffhq-1024)

|

||||

|

||||

**Other Image Notebooks**:

|

||||

* [image-to-image generation with Stable Diffusion](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/image_2_image_using_diffusers.ipynb) ,

|

||||

* [tweak images via repeated Stable Diffusion seeds](https://colab.research.google.com/github/pcuenca/diffusers-examples/blob/main/notebooks/stable-diffusion-seeds.ipynb) ,

|

||||

* [image-to-image generation with Stable Diffusion](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/image_2_image_using_diffusers.ipynb) ,

|

||||

* [tweak images via repeated Stable Diffusion seeds](https://colab.research.google.com/github/pcuenca/diffusers-examples/blob/main/notebooks/stable-diffusion-seeds.ipynb) ,

|

||||

|

||||

**Diffusers for Other Modalities**:

|

||||

* [Molecule conformation generation](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/geodiff_molecule_conformation.ipynb) ,

|

||||

* [Model-based reinforcement learning](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/reinforcement_learning_with_diffusers.ipynb) ,

|

||||

* [Molecule conformation generation](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/geodiff_molecule_conformation.ipynb) ,

|

||||

* [Model-based reinforcement learning](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/reinforcement_learning_with_diffusers.ipynb) ,

|

||||

|

||||

### Web Demos

|

||||

If you just want to play around with some web demos, you can try out the following 🚀 Spaces:

|

||||

|

||||

@@ -1,5 +1,5 @@

|

||||

<!---

|

||||

Copyright 2022- The HuggingFace Team. All rights reserved.

|

||||

Copyright 2023- The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License");

|

||||

you may not use this file except in compliance with the License.

|

||||

|

||||

9

docs/source/_config.py

Normal file


@@ -0,0 +1,9 @@

|

||||

# docstyle-ignore
INSTALL_CONTENT = """
# Diffusers installation
! pip install diffusers transformers datasets accelerate
# To install from source instead of the last release, comment the command above and uncomment the following one.
# ! pip install git+https://github.com/huggingface/diffusers.git
"""

notebook_first_cells = [{"type": "code", "content": INSTALL_CONTENT}]
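As a rough illustration of how a documentation builder might consume this configuration, the sketch below prepends the configured cells to a notebook's cell list. The helper `prepend_first_cells` and the example notebook body are hypothetical and are not the actual doc-builder API; only `INSTALL_CONTENT` and `notebook_first_cells` come from the file above (the install text is shortened here).

```python
# Hypothetical sketch: this is NOT the real doc-builder implementation.
INSTALL_CONTENT = """
# Diffusers installation
! pip install diffusers transformers datasets accelerate
"""

notebook_first_cells = [{"type": "code", "content": INSTALL_CONTENT}]


def prepend_first_cells(cells, first_cells):
    """Return a new cell list with the configured first cells placed at the top."""
    # Copy each configured cell so the shared config is never mutated.
    return [dict(cell) for cell in first_cells] + list(cells)


# A made-up notebook body, used only for illustration.
body = [{"type": "markdown", "content": "# Train a diffusion model"}]
notebook = prepend_first_cells(body, notebook_first_cells)

print([cell["type"] for cell in notebook])
```

Returning a new list rather than modifying `body` in place keeps the configuration reusable across several generated notebooks.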

|

||||

@@ -8,6 +8,10 @@

|

||||

- local: installation

|

||||

title: Installation

|

||||

title: Get started

|

||||

- sections:

|

||||

- local: tutorials/basic_training

|

||||

title: Train a diffusion model

|

||||

title: Tutorials

|

||||

- sections:

|

||||

- sections:

|

||||

- local: using-diffusers/loading

|

||||

@@ -44,6 +48,8 @@

|

||||

title: How to contribute a Pipeline

|

||||

- local: using-diffusers/using_safetensors

|

||||

title: Using safetensors

|

||||

- local: using-diffusers/weighted_prompts

|

||||

title: Weighting Prompts

|

||||

title: Pipelines for Inference

|

||||

- sections:

|

||||

- local: using-diffusers/rl

|

||||

@@ -78,11 +84,11 @@

|

||||

   - local: training/text_inversion
     title: Textual Inversion
   - local: training/dreambooth
-    title: Dreambooth
+    title: DreamBooth
   - local: training/text2image
-    title: Text-to-image fine-tuning
+    title: Text-to-image
   - local: training/lora
-    title: LoRA Support in Diffusers
+    title: Low-Rank Adaptation of Large Language Models (LoRA)
   title: Training
 - sections:
   - local: conceptual/philosophy

|

||||

@@ -165,6 +171,8 @@

|

||||

title: Self-Attention Guidance

|

||||

- local: api/pipelines/stable_diffusion/panorama

|

||||

title: MultiDiffusion Panorama

|

||||

- local: api/pipelines/stable_diffusion/controlnet

|

||||

title: Text-to-Image Generation with ControlNet Conditioning

|

||||

title: Stable Diffusion

|

||||

- local: api/pipelines/stable_diffusion_2

|

||||

title: Stable Diffusion 2

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

@@ -64,6 +64,12 @@ The models are built on the base class ['ModelMixin'] that is a `torch.nn.module

|

||||

## PriorTransformerOutput

|

||||

[[autodoc]] models.prior_transformer.PriorTransformerOutput

|

||||

|

||||

## ControlNetOutput

|

||||

[[autodoc]] models.controlnet.ControlNetOutput

|

||||

|

||||

## ControlNetModel

|

||||

[[autodoc]] ControlNetModel

|

||||

|

||||

## FlaxModelMixin

|

||||

[[autodoc]] FlaxModelMixin

|

||||

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

@@ -46,6 +46,7 @@ available a colab notebook to directly try them out.

|

||||

|---|---|:---:|:---:|

|

||||

| [alt_diffusion](./alt_diffusion) | [**AltDiffusion**](https://arxiv.org/abs/2211.06679) | Image-to-Image Text-Guided Generation | -

|

||||

| [audio_diffusion](./audio_diffusion) | [**Audio Diffusion**](https://github.com/teticio/audio_diffusion.git) | Unconditional Audio Generation |

|

||||

| [controlnet](./api/pipelines/stable_diffusion/controlnet) | [**ControlNet with Stable Diffusion**](https://arxiv.org/abs/2302.05543) | Image-to-Image Text-Guided Generation | [](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/controlnet.ipynb)

|

||||

| [cycle_diffusion](./cycle_diffusion) | [**Cycle Diffusion**](https://arxiv.org/abs/2210.05559) | Image-to-Image Text-Guided Generation |

|

||||

| [dance_diffusion](./dance_diffusion) | [**Dance Diffusion**](https://github.com/williamberman/diffusers.git) | Unconditional Audio Generation |

|

||||

| [ddpm](./ddpm) | [**Denoising Diffusion Probabilistic Models**](https://arxiv.org/abs/2006.11239) | Unconditional Image Generation |

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -32,7 +32,7 @@ Resources

|

||||

|

||||

| Pipeline | Tasks | Colab | Demo

|

||||

|---|---|:---:|:---:|

|

||||

| [pipeline_semantic_stable_diffusion_attend_and_excite.py](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/stable_diffusion/pipeline_semantic_stable_diffusion_attend_and_excite) | *Text-to-Image Generation* | - | -

|

||||

| [pipeline_semantic_stable_diffusion_attend_and_excite.py](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/stable_diffusion/pipeline_semantic_stable_diffusion_attend_and_excite) | *Text-to-Image Generation* | - | https://huggingface.co/spaces/AttendAndExcite/Attend-and-Excite

|

||||

|

||||

|

||||

### Usage example

|

||||

|

||||

167

docs/source/en/api/pipelines/stable_diffusion/controlnet.mdx

Normal file


@@ -0,0 +1,167 @@

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

http://www.apache.org/licenses/LICENSE-2.0

|

||||

|

||||

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on

|

||||

an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the

|

||||

specific language governing permissions and limitations under the License.

|

||||

-->

|

||||

|

||||

# Text-to-Image Generation with ControlNet Conditioning

|

||||

|

||||

## Overview

|

||||

|

||||

[Adding Conditional Control to Text-to-Image Diffusion Models](https://arxiv.org/abs/2302.05543) by Lvmin Zhang and Maneesh Agrawala.

|

||||

|

||||

Using the pretrained models we can provide control images (for example, a depth map) to control Stable Diffusion text-to-image generation so that it follows the structure of the depth image and fills in the details.

|

||||

|

||||

The abstract of the paper is the following:

|

||||

|

||||

*We present a neural network structure, ControlNet, to control pretrained large diffusion models to support additional input conditions. The ControlNet learns task-specific conditions in an end-to-end way, and the learning is robust even when the training dataset is small (< 50k). Moreover, training a ControlNet is as fast as fine-tuning a diffusion model, and the model can be trained on a personal devices. Alternatively, if powerful computation clusters are available, the model can scale to large amounts (millions to billions) of data. We report that large diffusion models like Stable Diffusion can be augmented with ControlNets to enable conditional inputs like edge maps, segmentation maps, keypoints, etc. This may enrich the methods to control large diffusion models and further facilitate related applications.*

|

||||

|

||||

This model was contributed by the amazing community contributor [takuma104](https://huggingface.co/takuma104) ❤️ .

|

||||

|

||||

Resources:

|

||||

|

||||

* [Paper](https://arxiv.org/abs/2302.05543)

|

||||

* [Original Code](https://github.com/lllyasviel/ControlNet)

|

||||

|

||||

## Available Pipelines:

|

||||

|

||||

| Pipeline | Tasks | Demo |
|---|---|:---:|
| [StableDiffusionControlNetPipeline](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/stable_diffusion/pipeline_stable_diffusion_controlnet.py) | *Text-to-Image Generation with ControlNet Conditioning* | [Colab Example](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/controlnet.ipynb) |

|

||||

|

||||

## Usage example

|

||||

|

||||

The following is a simple example of how to use a *ControlNet* checkpoint with Diffusers for inference.
The inference procedure is the same for all checkpoints:

1. Take an image and run it through a pre-conditioning processor.
2. Run the pre-processed image through the [`StableDiffusionControlNetPipeline`].

|

||||

|

||||

Let's have a look at a simple example using the [Canny Edge ControlNet](https://huggingface.co/lllyasviel/sd-controlnet-canny).

|

||||

|

||||

```python
from diffusers import StableDiffusionControlNetPipeline
from diffusers.utils import load_image

# Let's load the popular vermeer image
image = load_image(
    "https://hf.co/datasets/huggingface/documentation-images/resolve/main/diffusers/input_image_vermeer.png"
)
```

|

||||

|

||||

|

||||

|

||||

Next, we process the image to get the canny image. This is step *1.* - running the pre-conditioning processor. The pre-conditioning processor is different for every ControlNet. Please see the model cards of the [official checkpoints](#controlnet-with-stable-diffusion-1.5) for more information about other models.

|

||||

|

||||

First, we need to install opencv:

```
pip install opencv-contrib-python
```

Next, let's also install all required Hugging Face libraries:

```
pip install diffusers transformers git+https://github.com/huggingface/accelerate.git
```

|

||||

|

||||

Then we can retrieve the canny edges of the image.

|

||||

|

||||

```python
import cv2
from PIL import Image
import numpy as np

# Convert the PIL image loaded above into a NumPy array for OpenCV
image = np.array(image)

# Lower and upper hysteresis thresholds for the Canny edge detector
low_threshold = 100
high_threshold = 200

# cv2.Canny returns a single-channel edge map
image = cv2.Canny(image, low_threshold, high_threshold)
# Add a channel axis and repeat the edge map three times to get an RGB-shaped array
image = image[:, :, None]
image = np.concatenate([image, image, image], axis=2)
canny_image = Image.fromarray(image)
```
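To make the last steps of the block above concrete, here is a small sketch of the channel replication on a synthetic array. The 4x4 `edges` array is made up and only stands in for the single-channel output of `cv2.Canny`; the sketch assumes nothing beyond NumPy being installed.

```python
import numpy as np

# Made-up 4x4 single-channel edge map standing in for the output of cv2.Canny
# (uint8, 255 on edge pixels and 0 elsewhere).
edges = np.zeros((4, 4), dtype=np.uint8)
edges[1, :] = 255

# Add a trailing channel axis: (4, 4) -> (4, 4, 1).
edges_hw1 = edges[:, :, None]

# Repeat the same map in three channels: (4, 4, 1) -> (4, 4, 3).
edges_rgb = np.concatenate([edges_hw1, edges_hw1, edges_hw1], axis=2)

print(edges.shape, edges_hw1.shape, edges_rgb.shape)
```

All three channels hold identical values, so the result is still a black-and-white edge image, only stored in an RGB-shaped array.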

|

||||

|

||||

Let's take a look at the processed image.

|

||||

|

||||

|

||||

|

||||

Now, we load the official [Stable Diffusion 1.5 Model](https://huggingface.co/runwayml/stable-diffusion-v1-5) as well as the ControlNet for canny edges.

|

||||

|

||||

```py
from diffusers import StableDiffusionControlNetPipeline, ControlNetModel
import torch

controlnet = ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-canny", torch_dtype=torch.float16)
pipe = StableDiffusionControlNetPipeline.from_pretrained(
    "runwayml/stable-diffusion-v1-5", controlnet=controlnet, torch_dtype=torch.float16
)
```

|

||||

|

||||

To speed things up and reduce memory usage, let's enable model offloading and use the fast [`UniPCMultistepScheduler`].

|

||||

|

||||

```py
from diffusers import UniPCMultistepScheduler

pipe.scheduler = UniPCMultistepScheduler.from_config(pipe.scheduler.config)

# this command loads the individual model components on GPU on-demand.
pipe.enable_model_cpu_offload()
```

|

||||

|

||||

Finally, we can run the pipeline:

|

||||

|

||||

```py
generator = torch.manual_seed(0)

out_image = pipe(
    "disco dancer with colorful lights", num_inference_steps=20, generator=generator, image=canny_image
).images[0]
```

|

||||

|

||||

This should take only around 3-4 seconds on GPU (depending on hardware). The output image then looks as follows:

|

||||

|

||||

|

||||

|

||||

|

||||

**Note**: To see how to run all other ControlNet checkpoints, please have a look at [ControlNet with Stable Diffusion 1.5](#controlnet-with-stable-diffusion-1.5)

|

||||

|

||||

<!-- TODO: add space -->

|

||||

|

||||

## Available checkpoints

|

||||

|

||||

ControlNet requires a *control image* in addition to the text-to-image *prompt*.

|

||||

Each pretrained model is trained using a different conditioning method that requires different images for conditioning the generated outputs. For example, Canny edge conditioning requires the control image to be the output of a Canny filter, while depth conditioning requires the control image to be a depth map. See the overview and image examples below to know more.

|

||||

|

||||

All checkpoints can be found under the authors' namespace [lllyasviel](https://huggingface.co/lllyasviel).

|

||||

|

||||

### ControlNet with Stable Diffusion 1.5

|

||||

|

||||

| Model Name | Control Image Overview| Control Image Example | Generated Image Example |

|

||||

|---|---|---|---|

|

||||

|[lllyasviel/sd-controlnet-canny](https://huggingface.co/lllyasviel/sd-controlnet-canny)<br/> *Trained with canny edge detection* | A monochrome image with white edges on a black background.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_bird_canny.png"><img width="64" style="margin:0;padding:0;" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_bird_canny.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_bird_canny_1.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_bird_canny_1.png"/></a>|

|

||||

|[lllyasviel/sd-controlnet-depth](https://huggingface.co/lllyasviel/sd-controlnet-depth)<br/> *Trained with Midas depth estimation* |A grayscale image with black representing deep areas and white representing shallow areas.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_vermeer_depth.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_vermeer_depth.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_vermeer_depth_2.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_vermeer_depth_2.png"/></a>|

|

||||

|[lllyasviel/sd-controlnet-hed](https://huggingface.co/lllyasviel/sd-controlnet-hed)<br/> *Trained with HED edge detection (soft edge)* |A monochrome image with white soft edges on a black background.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_bird_hed.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_bird_hed.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_bird_hed_1.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_bird_hed_1.png"/></a> |

|

||||

|[lllyasviel/sd-controlnet-mlsd](https://huggingface.co/lllyasviel/sd-controlnet-mlsd)<br/> *Trained with M-LSD line detection* |A monochrome image composed only of white straight lines on a black background.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_room_mlsd.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_room_mlsd.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_room_mlsd_0.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_room_mlsd_0.png"/></a>|

|

||||

|[lllyasviel/sd-controlnet-normal](https://huggingface.co/lllyasviel/sd-controlnet-normal)<br/> *Trained with normal map* |A [normal mapped](https://en.wikipedia.org/wiki/Normal_mapping) image.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_human_normal.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_human_normal.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_human_normal_1.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_human_normal_1.png"/></a>|

|

||||

|[lllyasviel/sd-controlnet-openpose](https://huggingface.co/lllyasviel/sd-controlnet-openpose)<br/> *Trained with OpenPose bone image* |An [OpenPose bone](https://github.com/CMU-Perceptual-Computing-Lab/openpose) image.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_human_openpose.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_human_openpose.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_human_openpose_0.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_human_openpose_0.png"/></a>|

|

||||

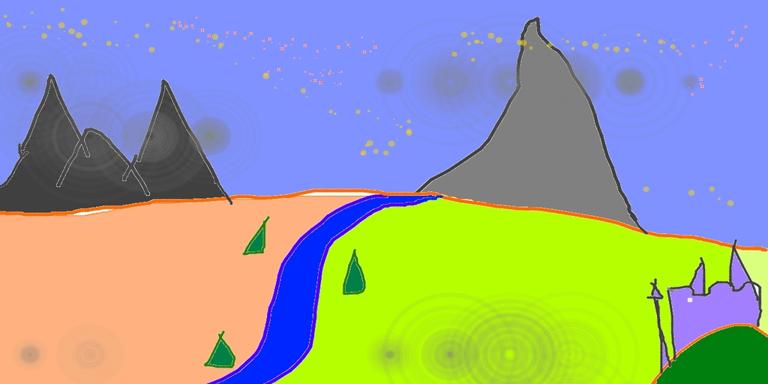

|[lllyasviel/sd-controlnet-scribble](https://huggingface.co/lllyasviel/sd-controlnet-scribble)<br/> *Trained with human scribbles* |A hand-drawn monochrome image with white outlines on a black background.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_vermeer_scribble.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_vermeer_scribble.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_vermeer_scribble_0.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_vermeer_scribble_0.png"/></a> |

|

||||

|[lllyasviel/sd-controlnet-seg](https://huggingface.co/lllyasviel/sd-controlnet-seg)<br/>*Trained with semantic segmentation* |An image following the [ADE20K](https://groups.csail.mit.edu/vision/datasets/ADE20K/) segmentation protocol.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_room_seg.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_room_seg.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_room_seg_1.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_room_seg_1.png"/></a> |

|

||||

|

||||

## StableDiffusionControlNetPipeline

|

||||

[[autodoc]] StableDiffusionControlNetPipeline

|

||||

- all

|

||||

- __call__

|

||||

- enable_attention_slicing

|

||||

- disable_attention_slicing

|

||||

- enable_vae_slicing

|

||||

- disable_vae_slicing

|

||||

- enable_xformers_memory_efficient_attention

|

||||

- disable_xformers_memory_efficient_attention

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

@@ -20,6 +20,9 @@ The original codebase can be found here: [CampVis/stable-diffusion](https://gith

|

||||

|

||||

[`StableDiffusionImg2ImgPipeline`] is compatible with all Stable Diffusion checkpoints for [Text-to-Image](./text2img)

|

||||

|

||||

The pipeline uses the diffusion-denoising mechanism proposed in [SDEdit: Guided Image Synthesis and Editing with Stochastic Differential Equations](https://arxiv.org/abs/2108.01073) by Chenlin Meng, Yutong He, Yang Song, Jiaming Song, Jiajun Wu, Jun-Yan Zhu, and Stefano Ermon.

|

||||

|

||||

[[autodoc]] StableDiffusionImg2ImgPipeline

|

||||

- all

|

||||

- __call__

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -60,7 +60,7 @@ def download_image(url):

|

||||

image = download_image(url)

|

||||

|

||||

prompt = "make the mountains snowy"

|

||||

edit = pipe(prompt, image=image, num_inference_steps=20, image_guidance_scale=1.5, guidance_scale=7).images[0]

|

||||

images = pipe(prompt, image=image, num_inference_steps=20, image_guidance_scale=1.5, guidance_scale=7).images

|

||||

images[0].save("snowy_mountains.png")

|

||||

```

|

||||

|

||||

|

||||

@@ -25,6 +25,7 @@ Resources:

|

||||

* [Project Page](https://pix2pixzero.github.io/).

|

||||

* [Paper](https://arxiv.org/abs/2302.03027).

|

||||

* [Original Code](https://github.com/pix2pixzero/pix2pix-zero).

|

||||

* [Demo](https://huggingface.co/spaces/pix2pix-zero-library/pix2pix-zero-demo).

|

||||

|

||||

## Tips

|

||||

|

||||

@@ -41,12 +42,13 @@ the above example, a valid input prompt would be: "a high resolution painting of

|

||||

* Change the input prompt to include "dog".

|

||||

* To learn more about how the source and target embeddings are generated, refer to the [original

|

||||

paper](https://arxiv.org/abs/2302.03027). Below, we also provide some directions on how to generate the embeddings.

|

||||

* Note that the quality of the outputs generated with this pipeline depends on the quality of the `source_embeds` and `target_embeds`. Please refer to [this discussion](#generating-source-and-target-embeddings) for some suggestions on the topic.

|

||||

|

||||

## Available Pipelines:

|

||||

|

||||

| Pipeline | Tasks | Demo |

|

||||

|---|---|:---:|

|

||||

| [StableDiffusionPix2PixZeroPipeline](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/stable_diffusion/pipeline_stable_diffusion_pix2pix_zero.py) | *Text-Based Image Editing* | [🤗 Space] (soon) |

|

||||

| [StableDiffusionPix2PixZeroPipeline](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/stable_diffusion/pipeline_stable_diffusion_pix2pix_zero.py) | *Text-Based Image Editing* | [🤗 Space](https://huggingface.co/spaces/pix2pix-zero-library/pix2pix-zero-demo) |

|

||||

|

||||

<!-- TODO: add Colab -->

|

||||

|

||||

@@ -74,7 +76,7 @@ pipeline = StableDiffusionPix2PixZeroPipeline.from_pretrained(

|

||||

pipeline.scheduler = DDIMScheduler.from_config(pipeline.scheduler.config)

|

||||

pipeline.to("cuda")

|

||||

|

||||

prompt = "a high resolution painting of a cat in the style of van gough"

|

||||

prompt = "a high resolution painting of a cat in the style of van gogh"

|

||||

src_embs_url = "https://github.com/pix2pixzero/pix2pix-zero/raw/main/assets/embeddings_sd_1.4/cat.pt"

|

||||

target_embs_url = "https://github.com/pix2pixzero/pix2pix-zero/raw/main/assets/embeddings_sd_1.4/dog.pt"

|

||||

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

@@ -36,4 +36,6 @@ Available Checkpoints are:

|

||||

- enable_vae_slicing

|

||||

- disable_vae_slicing

|

||||

- enable_xformers_memory_efficient_attention

|

||||

- disable_xformers_memory_efficient_attention

|

||||

- disable_xformers_memory_efficient_attention

|

||||

- enable_vae_tiling

|

||||

- disable_vae_tiling

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

http://www.apache.org/licenses/LICENSE-2.0

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

@@ -36,6 +36,7 @@ available a colab notebook to directly try them out.

|

||||

|---|---|:---:|:---:|

|

||||

| [alt_diffusion](./api/pipelines/alt_diffusion) | [**AltDiffusion**](https://arxiv.org/abs/2211.06679) | Image-to-Image Text-Guided Generation |

|

||||

| [audio_diffusion](./api/pipelines/audio_diffusion) | [**Audio Diffusion**](https://github.com/teticio/audio-diffusion.git) | Unconditional Audio Generation | [](https://colab.research.google.com/github/teticio/audio-diffusion/blob/master/notebooks/audio_diffusion_pipeline.ipynb)

|

||||

| [controlnet](./api/pipelines/stable_diffusion/controlnet) | [**ControlNet with Stable Diffusion**](https://arxiv.org/abs/2302.05543) | Image-to-Image Text-Guided Generation | [

|

||||

| [cycle_diffusion](./api/pipelines/cycle_diffusion) | [**Cycle Diffusion**](https://arxiv.org/abs/2210.05559) | Image-to-Image Text-Guided Generation |

|

||||

| [dance_diffusion](./api/pipelines/dance_diffusion) | [**Dance Diffusion**](https://github.com/williamberman/diffusers.git) | Unconditional Audio Generation |

|

||||

| [ddpm](./api/pipelines/ddpm) | [**Denoising Diffusion Probabilistic Models**](https://arxiv.org/abs/2006.11239) | Unconditional Image Generation |

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

@@ -133,6 +133,34 @@ images = pipe([prompt] * 32).images

|

||||

You may see a small performance boost in VAE decode on multi-image batches. There should be no performance impact on single-image batches.

|

||||

|

||||

|

||||

## Tiled VAE decode and encode for large images

|

||||

|

||||

Tiled VAE processing makes it possible to work with large images on limited VRAM, for example generating 4k images with 8GB of VRAM. The tiled VAE decoder splits the image into overlapping tiles, decodes the tiles, and blends the outputs to make the final image.

|

||||

|

||||

You may want to combine this with [`~StableDiffusionPipeline.enable_attention_slicing`] or [`~StableDiffusionPipeline.enable_xformers_memory_efficient_attention`] to further reduce memory use.

|

||||

|

||||

To use tiled VAE processing, invoke [`~StableDiffusionPipeline.enable_vae_tiling`] in your pipeline before inference. For example:

|

||||

|

||||

```python
import torch
from diffusers import StableDiffusionPipeline, UniPCMultistepScheduler

pipe = StableDiffusionPipeline.from_pretrained(
    "runwayml/stable-diffusion-v1-5",
    torch_dtype=torch.float16,
)
pipe.scheduler = UniPCMultistepScheduler.from_config(pipe.scheduler.config)
pipe = pipe.to("cuda")
prompt = "a beautiful landscape photograph"
pipe.enable_vae_tiling()
pipe.enable_xformers_memory_efficient_attention()

image = pipe([prompt], width=3840, height=2224, num_inference_steps=20).images[0]
```

|

||||

|

||||

The output image will have some tile-to-tile tone variation because each tile is decoded separately, but you shouldn't see sharp seams between the tiles. Tiling is turned off for images that are 512x512 or smaller.
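
The blending of overlapping tiles described above can be illustrated with a small one-dimensional sketch. This is not the Diffusers implementation: `blend_tiles_1d` is a hypothetical helper, and the linear cross-fade over the overlap is an assumption chosen for simplicity. It only shows why overlapping and blending avoids a hard seam where two separately decoded tiles meet.

```python
def blend_tiles_1d(left, right, overlap):
    """Join two tiles that share `overlap` samples, cross-fading across the overlap.

    Hypothetical helper for illustration; a real tiled decoder works on 2D
    image tensors and blends along both axes.
    """
    if not 0 < overlap <= min(len(left), len(right)):
        raise ValueError("overlap must be positive and no longer than either tile")

    blended = []
    for i in range(overlap):
        # Weight of the right tile grows linearly from 0 across the overlap.
        w = i / overlap
        blended.append(left[len(left) - overlap + i] * (1 - w) + right[i] * w)

    # Non-overlapping part of the left tile, the cross-faded overlap,
    # then the non-overlapping part of the right tile.
    return left[:-overlap] + blended + right[overlap:]


# Two "tiles" with a different tone: the overlap ramps between them
# instead of jumping from one value to the other.
joined = blend_tiles_1d([1.0, 1.0, 1.0, 1.0], [3.0, 3.0, 3.0, 3.0], overlap=2)
print(joined)
```

With a wider overlap the transition between the two tones becomes more gradual, at the cost of decoding more redundant pixels per tile.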

|

||||

|

||||

|

||||

<a name="sequential_offloading"></a>

|

||||

## Offloading to CPU with accelerate for memory savings

|

||||

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

@@ -21,13 +21,13 @@ specific language governing permissions and limitations under the License.

|

||||

|

||||

## Stable Diffusion Inference

|

||||

|

||||

The snippet below demonstrates how to use the ONNX runtime. You need to use `StableDiffusionOnnxPipeline` instead of `StableDiffusionPipeline`. You also need to download the weights from the `onnx` branch of the repository, and indicate the runtime provider you want to use.

|

||||

The snippet below demonstrates how to use the ONNX runtime. You need to use `OnnxStableDiffusionPipeline` instead of `StableDiffusionPipeline`. You also need to download the weights from the `onnx` branch of the repository, and indicate the runtime provider you want to use.

|

||||

|

||||

```python

|

||||

# make sure you're logged in with `huggingface-cli login`

|

||||

from diffusers import StableDiffusionOnnxPipeline

|

||||

from diffusers import OnnxStableDiffusionPipeline

|

||||

|

||||

pipe = StableDiffusionOnnxPipeline.from_pretrained(

|

||||

pipe = OnnxStableDiffusionPipeline.from_pretrained(

|

||||

"runwayml/stable-diffusion-v1-5",

|

||||

revision="onnx",

|

||||

provider="CUDAExecutionProvider",

|

||||

@@ -37,6 +37,37 @@ prompt = "a photo of an astronaut riding a horse on mars"

|

||||

image = pipe(prompt).images[0]

|

||||

```

|

||||

|

||||

The snippet below demonstrates how to use the ONNX runtime with the Stable Diffusion upscaling pipeline.

|

||||

|

||||

```python
import torch  # needed for the seeded generator below
from diffusers import OnnxStableDiffusionPipeline, OnnxStableDiffusionUpscalePipeline

prompt = "a photo of an astronaut riding a horse on mars"
steps = 50

# Generate a small image with the ONNX text-to-image pipeline.
txt2img = OnnxStableDiffusionPipeline.from_pretrained(
    "runwayml/stable-diffusion-v1-5",
    revision="onnx",
    provider="CUDAExecutionProvider",
)
small_image = txt2img(
    prompt,
    num_inference_steps=steps,
).images[0]

# Upscale the small image with the ONNX upscaling pipeline.
generator = torch.manual_seed(0)
upscale = OnnxStableDiffusionUpscalePipeline.from_pretrained(
    "ssube/stable-diffusion-x4-upscaler-onnx",
    provider="CUDAExecutionProvider",
)
large_image = upscale(
    prompt,
    small_image,
    generator=generator,
    num_inference_steps=steps,
).images[0]
```

|

||||

|

||||

## Known Issues

|

||||

|

||||

- Generating multiple prompts in a batch seems to take too much memory. While we look into it, you may need to iterate instead of batching.

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

@@ -10,29 +10,29 @@ an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express o

|

||||

specific language governing permissions and limitations under the License.

|

||||

-->

|

||||

|

||||

# Torch2.0 support in Diffusers

|

||||

# Accelerated PyTorch 2.0 support in Diffusers

|

||||

|

||||

Starting from version `0.13.0`, Diffusers supports the latest optimizations from the upcoming [PyTorch 2.0](https://pytorch.org/get-started/pytorch-2.0/) release. These include:

|

||||

1. Support for native flash and memory-efficient attention without any extra dependencies.

|

||||

2. [torch.compile](https://pytorch.org/tutorials/intermediate/torch_compile_tutorial.html) support for compiling individual models for extra performance boost.

|

||||

1. Support for accelerated transformers implementation with memory-efficient attention – no extra dependencies required.

|

||||

2. [torch.compile](https://pytorch.org/tutorials/intermediate/torch_compile_tutorial.html) support for extra performance boost when individual models are compiled.

|

||||

|

||||

|

||||

## Installation

|

||||

To benefit from the native efficient attention and `torch.compile`, we will need to install the nightly version of PyTorch as the stable version is yet to be released. The first step is to install CUDA11.7 or CUDA11.8,

|

||||

as torch2.0 does not support the previous versions. Once CUDA is installed, torch nightly can be installed using:

|

||||

To benefit from the accelerated transformers implementation and `torch.compile`, we will need to install the nightly version of PyTorch, as the stable version is yet to be released. The first step is to install CUDA 11.7 or CUDA 11.8,

|

||||

as PyTorch 2.0 does not support the previous versions. Once CUDA is installed, torch nightly can be installed using:

|

||||

|

||||

```bash

|

||||

pip install --pre torch torchvision --index-url https://download.pytorch.org/whl/nightly/cu117

|

||||

```

|

||||

|

||||

## Using efficient attention and torch.compile.

|

||||

## Using accelerated transformers and torch.compile.

|

||||

|

||||

|

||||

1. **Efficient Attention**

|

||||

1. **Accelerated Transformers implementation**

|

||||

|

||||

Efficient attention is implemented via the [`torch.nn.functional.scaled_dot_product_attention`](https://pytorch.org/docs/master/generated/torch.nn.functional.scaled_dot_product_attention) function, which automatically enables flash/memory efficient attention, depending on the input and the GPU type. This is the same as the `memory_efficient_attention` from [xFormers](https://github.com/facebookresearch/xformers) but built natively into PyTorch.

|

||||

PyTorch 2.0 includes an optimized and memory-efficient attention implementation through the [`torch.nn.functional.scaled_dot_product_attention`](https://pytorch.org/docs/master/generated/torch.nn.functional.scaled_dot_product_attention) function, which automatically enables several optimizations depending on the inputs and the GPU type. This is similar to the `memory_efficient_attention` from [xFormers](https://github.com/facebookresearch/xformers), but built natively into PyTorch.

|

||||

|

||||

Efficient attention will be enabled by default in Diffusers if torch2.0 is installed and if `torch.nn.functional.scaled_dot_product_attention` is available. To use it, you can install torch2.0 as suggested above and use the pipeline. For example:

|

||||

These optimizations will be enabled by default in Diffusers if PyTorch 2.0 is installed and if `torch.nn.functional.scaled_dot_product_attention` is available. To use them, install PyTorch 2.0 as suggested above and use the pipeline as usual. For example:

|

||||

|

||||

```Python

|

||||

import torch

|

||||

@@ -59,12 +59,12 @@ pip install --pre torch torchvision --index-url https://download.pytorch.org/whl

|

||||

image = pipe(prompt).images[0]

|

||||

```

|

||||

|

||||

This should be as fast and memory efficient as `xFormers`.

|

||||

This should be as fast and memory efficient as `xFormers`. More details [in our benchmark](#benchmark).
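
For reference, the quantity that scaled dot-product attention computes can be written out in a few lines of plain Python. This is a naive sketch for small lists of floats, not PyTorch's implementation: `naive_attention` is a hypothetical name, and masking and dropout are left out. The optimized backends are expected to produce the same result while using less time and memory.

```python
import math


def naive_attention(q, k, v):
    """Compute softmax(q @ k^T / sqrt(d)) @ v for lists of row vectors.

    Hypothetical reference helper; no masking, no dropout, no batching.
    """
    d = len(q[0])
    out = []
    for q_row in q:
        # Scaled dot product of this query with every key.
        scores = [sum(a * b for a, b in zip(q_row, k_row)) / math.sqrt(d) for k_row in k]
        # Numerically stable softmax over the keys.
        top = max(scores)
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        weights = [w / total for w in weights]
        # Weighted average of the value rows.
        out.append([sum(w * v_row[c] for w, v_row in zip(weights, v)) for c in range(len(v[0]))])
    return out


# With identical keys every value gets the same weight,
# so the output is the mean of the value rows.
result = naive_attention([[1.0, 0.0]], [[0.0, 0.0], [0.0, 0.0]], [[1.0, 2.0], [3.0, 4.0]])
print(result)
```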

|

||||

|

||||

|

||||

2. **torch.compile**

|

||||

|

||||

To get an additional speedup, we can use the new `torch.compile` feature. To do so, we wrap our `unet` with `torch.compile`. For more information and different options, refer to the

|

||||

To get an additional speedup, we can use the new `torch.compile` feature. To do so, we simply wrap our `unet` with `torch.compile`. For more information and different options, refer to the

|

||||

[torch compile docs](https://pytorch.org/tutorials/intermediate/torch_compile_tutorial.html).

|

||||

|

||||

```python

|

||||