mirror of

https://github.com/huggingface/diffusers.git

synced 2026-03-05 16:20:39 +08:00

Compare commits

27 Commits

fix_custom

...

temp/debug

| Author | SHA1 | Date |
|---|---|---|
| | fe6c903373 | |
| | 7ba7c65700 | |
| | af7d5a6914 | |
| | aa58d7a570 | |
| | 1a60865487 | |
| | a1eb20c577 | |
| | eada18a8c2 | |
| | 66d38f6eaa | |
| | bc6b677a6a | |
| | 641e94da44 | |
| | a86aa73aa1 | |
| | 893ef35bf1 | |
| | 1d813f6ebe | |
| | ce4e6edefc | |
| | a202bb1fca | |
| | 74483b9f14 | |
| | dc42933feb | |
| | eba1df08fb | |
| | 8e76e1269d | |
| | a559b33eda | |
| | 3872e12d99 | |
| | c83935a716 | |
| | fe2501e540 | |
| | 5c3601b7a8 | |
| | 9658b24834 | |
| | a1b6e29288 | |
| | 9bd4fda920 | |

@@ -125,14 +125,14 @@ Awesome! Tell us what problem it solved for you.

|

||||

|

||||

You can open a feature request [here](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=&template=feature_request.md&title=).

|

||||

|

||||

#### 2.3 Feedback.

|

||||

#### 2.3 Feedback.

|

||||

|

||||

Feedback about the library design and why it is good or not good helps the core maintainers immensely to build a user-friendly library. To understand the philosophy behind the current design, please have a look [here](https://huggingface.co/docs/diffusers/conceptual/philosophy). If you feel that a certain design choice does not fit with the current design philosophy, please explain why and how it should be changed. If a certain design choice follows the design philosophy too closely and thereby restricts use cases, explain why and how it should be changed.

|

||||

If a certain design choice is very useful for you, please also leave a note as this is great feedback for future design decisions.

|

||||

|

||||

You can open an issue about feedback [here](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=&template=feedback.md&title=).

|

||||

|

||||

#### 2.4 Technical questions.

|

||||

#### 2.4 Technical questions.

|

||||

|

||||

Technical questions are mainly about why certain code of the library was written in a certain way, or what a certain part of the code does. Please make sure to link to the code in question and please provide detail on

|

||||

why this part of the code is difficult to understand.

|

||||

@@ -394,8 +394,8 @@ passes. You should run the tests impacted by your changes like this:

|

||||

```bash

|

||||

$ pytest tests/<TEST_TO_RUN>.py

|

||||

```

|

||||

|

||||

Before you run the tests, please make sure you install the dependencies required for testing. You can do so

|

||||

|

||||

Before you run the tests, please make sure you install the dependencies required for testing. You can do so

|

||||

with this command:

|

||||

|

||||

```bash

|

||||

|

||||

@@ -27,18 +27,18 @@ In a nutshell, Diffusers is built to be a natural extension of PyTorch. Therefor

|

||||

|

||||

## Simple over easy

|

||||

|

||||

As PyTorch states, **explicit is better than implicit** and **simple is better than complex**. This design philosophy is reflected in multiple parts of the library:

|

||||

As PyTorch states, **explicit is better than implicit** and **simple is better than complex**. This design philosophy is reflected in multiple parts of the library:

|

||||

- We follow PyTorch's API with methods like [`DiffusionPipeline.to`](https://huggingface.co/docs/diffusers/main/en/api/diffusion_pipeline#diffusers.DiffusionPipeline.to) to let the user handle device management.

|

||||

- Raising concise error messages is preferred to silently correct erroneous input. Diffusers aims at teaching the user, rather than making the library as easy to use as possible.

|

||||

- Complex model vs. scheduler logic is exposed instead of magically handled inside. Schedulers/Samplers are separated from diffusion models with minimal dependencies on each other. This forces the user to write the unrolled denoising loop. However, the separation allows for easier debugging and gives the user more control over adapting the denoising process or switching out diffusion models or schedulers.

|

||||

- Separately trained components of the diffusion pipeline, *e.g.* the text encoder, the unet, and the variational autoencoder, each have their own model class. This forces the user to handle the interaction between the different model components, and the serialization format separates the model components into different files. However, this allows for easier debugging and customization. Dreambooth or textual inversion training

|

||||

- Separately trained components of the diffusion pipeline, *e.g.* the text encoder, the unet, and the variational autoencoder, each have their own model class. This forces the user to handle the interaction between the different model components, and the serialization format separates the model components into different files. However, this allows for easier debugging and customization. Dreambooth or textual inversion training

|

||||

is very simple thanks to diffusers' ability to separate single components of the diffusion pipeline.

|

||||

|

||||

## Tweakable, contributor-friendly over abstraction

|

||||

|

||||

For large parts of the library, Diffusers adopts an important design principle of the [Transformers library](https://github.com/huggingface/transformers), which is to prefer copy-pasted code over hasty abstractions. This design principle is very opinionated and stands in stark contrast to popular design principles such as [Don't repeat yourself (DRY)](https://en.wikipedia.org/wiki/Don%27t_repeat_yourself).

|

||||

For large parts of the library, Diffusers adopts an important design principle of the [Transformers library](https://github.com/huggingface/transformers), which is to prefer copy-pasted code over hasty abstractions. This design principle is very opinionated and stands in stark contrast to popular design principles such as [Don't repeat yourself (DRY)](https://en.wikipedia.org/wiki/Don%27t_repeat_yourself).

|

||||

In short, just like Transformers does for modeling files, diffusers prefers to keep an extremely low level of abstraction and very self-contained code for pipelines and schedulers.

|

||||

Functions, long code blocks, and even classes can be copied across multiple files which at first can look like a bad, sloppy design choice that makes the library unmaintainable.

|

||||

Functions, long code blocks, and even classes can be copied across multiple files which at first can look like a bad, sloppy design choice that makes the library unmaintainable.

|

||||

**However**, this design has proven to be extremely successful for Transformers and makes a lot of sense for community-driven, open-source machine learning libraries because:

|

||||

- Machine Learning is an extremely fast-moving field in which paradigms, model architectures, and algorithms are changing rapidly, which therefore makes it very difficult to define long-lasting code abstractions.

|

||||

- Machine Learning practitioners like to be able to quickly tweak existing code for ideation and research and therefore prefer self-contained code over one that contains many abstractions.

|

||||

@@ -47,10 +47,10 @@ Functions, long code blocks, and even classes can be copied across multiple file

|

||||

At Hugging Face, we call this design the **single-file policy** which means that almost all of the code of a certain class should be written in a single, self-contained file. To read more about the philosophy, you can have a look

|

||||

at [this blog post](https://huggingface.co/blog/transformers-design-philosophy).

|

||||

|

||||

In diffusers, we follow this philosophy for both pipelines and schedulers, but only partly for diffusion models. The reason we don't follow this design fully for diffusion models is because almost all diffusion pipelines, such

|

||||

In diffusers, we follow this philosophy for both pipelines and schedulers, but only partly for diffusion models. The reason we don't follow this design fully for diffusion models is because almost all diffusion pipelines, such

|

||||

as [DDPM](https://huggingface.co/docs/diffusers/v0.12.0/en/api/pipelines/ddpm), [Stable Diffusion](https://huggingface.co/docs/diffusers/v0.12.0/en/api/pipelines/stable_diffusion/overview#stable-diffusion-pipelines), [UnCLIP (Dalle-2)](https://huggingface.co/docs/diffusers/v0.12.0/en/api/pipelines/unclip#overview) and [Imagen](https://imagen.research.google/) all rely on the same diffusion model, the [UNet](https://huggingface.co/docs/diffusers/api/models#diffusers.UNet2DConditionModel).

|

||||

|

||||

Great, now you should have generally understood why 🧨 Diffusers is designed the way it is 🤗.

|

||||

Great, now you should have generally understood why 🧨 Diffusers is designed the way it is 🤗.

|

||||

We try to apply these design principles consistently across the library. Nevertheless, there are some minor exceptions to the philosophy or some unlucky design choices. If you have feedback regarding the design, we would ❤️ to hear it [directly on GitHub](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=&template=feedback.md&title=).

|

||||

|

||||

## Design Philosophy in Details

|

||||

@@ -89,7 +89,7 @@ The following design principles are followed:

|

||||

- Models should by default have the highest precision and lowest performance setting.

|

||||

- To integrate new model checkpoints whose general architecture can be classified as an architecture that already exists in Diffusers, the existing model architecture shall be adapted to make it work with the new checkpoint. One should only create a new file if the model architecture is fundamentally different.

|

||||

- Models should be designed to be easily extendable to future changes. This can be achieved by limiting public function arguments, configuration arguments, and "foreseeing" future changes, *e.g.* it is usually better to add `string` "...type" arguments that can easily be extended to new future types instead of boolean `is_..._type` arguments. Only the minimum amount of changes shall be made to existing architectures to make a new model checkpoint work.

|

||||

- The model design is a difficult trade-off between keeping code readable and concise and supporting many model checkpoints. For most parts of the modeling code, classes shall be adapted for new model checkpoints, while there are some exceptions where it is preferred to add new classes to make sure the code is kept concise and

|

||||

- The model design is a difficult trade-off between keeping code readable and concise and supporting many model checkpoints. For most parts of the modeling code, classes shall be adapted for new model checkpoints, while there are some exceptions where it is preferred to add new classes to make sure the code is kept concise and

|

||||

readable longterm, such as [UNet blocks](https://github.com/huggingface/diffusers/blob/main/src/diffusers/models/unet_2d_blocks.py) and [Attention processors](https://github.com/huggingface/diffusers/blob/main/src/diffusers/models/cross_attention.py).
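The extensibility principle above (prefer a string "...type" argument over a boolean `is_..._type` flag) can be sketched as follows. This is a minimal illustration, not code from Diffusers: `ToyBlockConfig`, `attention_type`, and `SUPPORTED_ATTENTION_TYPES` are hypothetical names chosen for the example.

```python
from dataclasses import dataclass

# Hypothetical names for illustration only; not part of the Diffusers API.
SUPPORTED_ATTENTION_TYPES = ("default", "sliced")


@dataclass
class ToyBlockConfig:
    # A string argument can accept new values later without changing the
    # signature, unlike a boolean flag such as `is_sliced_attention`.
    attention_type: str = "default"

    def __post_init__(self):
        # Raise a concise error instead of silently correcting bad input.
        if self.attention_type not in SUPPORTED_ATTENTION_TYPES:
            raise ValueError(
                f"Unknown attention_type {self.attention_type!r}; "
                f"expected one of {SUPPORTED_ATTENTION_TYPES}."
            )


config = ToyBlockConfig(attention_type="sliced")
```

Supporting a third variant would then only require extending the tuple of supported values, while existing callers keep working unchanged.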

|

||||

|

||||

### Schedulers

|

||||

@@ -97,9 +97,9 @@ readable longterm, such as [UNet blocks](https://github.com/huggingface/diffuser

|

||||

Schedulers are responsible for guiding the denoising process for inference as well as for defining a noise schedule for training. They are designed as individual classes with loadable configuration files and strongly follow the **single-file policy**.

|

||||

|

||||

The following design principles are followed:

|

||||

- All schedulers are found in [`src/diffusers/schedulers`](https://github.com/huggingface/diffusers/tree/main/src/diffusers/schedulers).

|

||||

- Schedulers are **not** allowed to import from large utils files and shall be kept very self-contained.

|

||||

- One scheduler python file corresponds to one scheduler algorithm (as might be defined in a paper).

|

||||

- All schedulers are found in [`src/diffusers/schedulers`](https://github.com/huggingface/diffusers/tree/main/src/diffusers/schedulers).

|

||||

- Schedulers are **not** allowed to import from large utils files and shall be kept very self-contained.

|

||||

- One scheduler python file corresponds to one scheduler algorithm (as might be defined in a paper).

|

||||

- If schedulers share similar functionalities, we can make use of the `#Copied from` mechanism.

|

||||

- Schedulers all inherit from `SchedulerMixin` and `ConfigMixin`.

|

||||

- Schedulers can be easily swapped out with the [`ConfigMixin.from_config`](https://huggingface.co/docs/diffusers/main/en/api/configuration#diffusers.ConfigMixin.from_config) method as explained in detail [here](./using-diffusers/schedulers.mdx).
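The swap-by-config idea in the last bullet can be sketched with plain Python. This is a simplified stand-in under stated assumptions, not the library's implementation: `ToyConfigMixin`, `ToySchedulerA`, and `ToySchedulerB` are hypothetical classes, and the real `ConfigMixin.from_config` does considerably more (for example, handling arguments that the target class does not accept).

```python
class ToyConfigMixin:
    """Hypothetical stand-in for a config-carrying base class."""

    def __init__(self, **config):
        # Keep the constructor arguments around as a plain dict.
        self.config = dict(config)

    @classmethod
    def from_config(cls, config):
        # Build a new instance of *this* class from another object's config.
        return cls(**config)


class ToySchedulerA(ToyConfigMixin):
    pass


class ToySchedulerB(ToyConfigMixin):
    pass


# Start with one scheduler, then swap in another that reuses its config.
scheduler = ToySchedulerA(num_train_timesteps=1000, beta_start=0.0001)
scheduler = ToySchedulerB.from_config(scheduler.config)
```

In Diffusers itself, the analogous pattern is usually written as `pipeline.scheduler = SomeScheduler.from_config(pipeline.scheduler.config)`, where `SomeScheduler` is whichever scheduler class you want to switch to.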

|

||||

|

||||

40

README.md


@@ -1,6 +1,6 @@

|

||||

<p align="center">

|

||||

<br>

|

||||

<img src="https://github.com/huggingface/diffusers/blob/main/docs/source/en/imgs/diffusers_library.jpg" width="400"/>

|

||||

<img src="./docs/source/en/imgs/diffusers_library.jpg" width="400"/>

|

||||

<br>

|

||||

<p>

|

||||

<p align="center">

|

||||

@@ -30,7 +30,7 @@ We recommend installing 🤗 Diffusers in a virtual environment from PyPi or Con

|

||||

### PyTorch

|

||||

|

||||

With `pip` (official package):

|

||||

|

||||

|

||||

```bash

|

||||

pip install --upgrade diffusers[torch]

|

||||

```

|

||||

@@ -107,7 +107,7 @@ Check out the [Quickstart](https://huggingface.co/docs/diffusers/quicktour) to l

|

||||

| [Training](https://huggingface.co/docs/diffusers/training/overview) | Guides for how to train a diffusion model for different tasks with different training techniques. |

|

||||

## Contribution

|

||||

|

||||

We ❤️ contributions from the open-source community!

|

||||

We ❤️ contributions from the open-source community!

|

||||

If you want to contribute to this library, please check out our [Contribution guide](https://github.com/huggingface/diffusers/blob/main/CONTRIBUTING.md).

|

||||

You can look out for [issues](https://github.com/huggingface/diffusers/issues) you'd like to tackle to contribute to the library.

|

||||

- See [Good first issues](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22good+first+issue%22) for general opportunities to contribute

|

||||

@@ -128,70 +128,70 @@ just hang out ☕.

|

||||

</tr>

|

||||

<tr style="border-top: 2px solid black">

|

||||

<td>Unconditional Image Generation</td>

|

||||

<td><a href="https://huggingface.co/docs/diffusers/api/pipelines/ddpm"> DDPM </a></td>

|

||||

<td><a href="./api/pipelines/ddpm"> DDPM </a></td>

|

||||

<td><a href="https://huggingface.co/google/ddpm-ema-church-256"> google/ddpm-ema-church-256 </a></td>

|

||||

</tr>

|

||||

<tr style="border-top: 2px solid black">

|

||||

<td>Text-to-Image</td>

|

||||

<td><a href="https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion/text2img">Stable Diffusion Text-to-Image</a></td>

|

||||

<td><a href="./api/pipelines/stable_diffusion/text2img">Stable Diffusion Text-to-Image</a></td>

|

||||

<td><a href="https://huggingface.co/runwayml/stable-diffusion-v1-5"> runwayml/stable-diffusion-v1-5 </a></td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Text-to-Image</td>

|

||||

<td><a href="https://huggingface.co/docs/diffusers/api/pipelines/unclip">unclip</a></td>

|

||||

<td><a href="./api/pipelines/unclip">unclip</a></td>

|

||||

<td><a href="https://huggingface.co/kakaobrain/karlo-v1-alpha"> kakaobrain/karlo-v1-alpha </a></td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Text-to-Image</td>

|

||||

<td><a href="https://huggingface.co/docs/diffusers/api/pipelines/if">if</a></td>

|

||||

<td><a href="./api/pipelines/if">if</a></td>

|

||||

<td><a href="https://huggingface.co/DeepFloyd/IF-I-XL-v1.0"> DeepFloyd/IF-I-XL-v1.0 </a></td>

|

||||

</tr>

|

||||

<tr style="border-top: 2px solid black">

|

||||

<td>Text-guided Image-to-Image</td>

|

||||

<td><a href="https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion/controlnet">Controlnet</a></td>

|

||||

<td><a href="./api/pipelines/stable_diffusion/controlnet">Controlnet</a></td>

|

||||

<td><a href="https://huggingface.co/lllyasviel/sd-controlnet-canny"> lllyasviel/sd-controlnet-canny </a></td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Text-guided Image-to-Image</td>

|

||||

<td><a href="https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion/pix2pix">Instruct Pix2Pix</a></td>

|

||||

<td><a href="./api/pipelines/stable_diffusion/pix2pix">Instruct Pix2Pix</a></td>

|

||||

<td><a href="https://huggingface.co/timbrooks/instruct-pix2pix"> timbrooks/instruct-pix2pix </a></td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Text-guided Image-to-Image</td>

|

||||

<td><a href="https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion/img2img">Stable Diffusion Image-to-Image</a></td>

|

||||

<td><a href="./api/pipelines/stable_diffusion/img2img">Stable Diffusion Image-to-Image</a></td>

|

||||

<td><a href="https://huggingface.co/runwayml/stable-diffusion-v1-5"> runwayml/stable-diffusion-v1-5 </a></td>

|

||||

</tr>

|

||||

<tr style="border-top: 2px solid black">

|

||||

<td>Text-guided Image Inpainting</td>

|

||||

<td><a href="https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion/inpaint">Stable Diffusion Inpaint</a></td>

|

||||

<td><a href="./api/pipelines/stable_diffusion/inpaint">Stable Diffusion Inpaint</a></td>

|

||||

<td><a href="https://huggingface.co/runwayml/stable-diffusion-inpainting"> runwayml/stable-diffusion-inpainting </a></td>

|

||||

</tr>

|

||||

<tr style="border-top: 2px solid black">

|

||||

<td>Image Variation</td>

|

||||

<td><a href="https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion/image_variation">Stable Diffusion Image Variation</a></td>

|

||||

<td><a href="./stable_diffusion/image_variation">Stable Diffusion Image Variation</a></td>

|

||||

<td><a href="https://huggingface.co/lambdalabs/sd-image-variations-diffusers"> lambdalabs/sd-image-variations-diffusers </a></td>

|

||||

</tr>

|

||||

<tr style="border-top: 2px solid black">

|

||||

<td>Super Resolution</td>

|

||||

<td><a href="https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion/upscale">Stable Diffusion Upscale</a></td>

|

||||

<td><a href="./stable_diffusion/stable_diffusion/upscale">Stable Diffusion Upscale</a></td>

|

||||

<td><a href="https://huggingface.co/stabilityai/stable-diffusion-x4-upscaler"> stabilityai/stable-diffusion-x4-upscaler </a></td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Super Resolution</td>

|

||||

<td><a href="https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion/latent_upscale">Stable Diffusion Latent Upscale</a></td>

|

||||

<td><a href="./stable_diffusion/latent_upscale">Stable Diffusion Latent Upscale</a></td>

|

||||

<td><a href="https://huggingface.co/stabilityai/sd-x2-latent-upscaler"> stabilityai/sd-x2-latent-upscaler </a></td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

## Popular libraries using 🧨 Diffusers

|

||||

|

||||

- https://github.com/microsoft/TaskMatrix

|

||||

- https://github.com/invoke-ai/InvokeAI

|

||||

- https://github.com/apple/ml-stable-diffusion

|

||||

- https://github.com/Sanster/lama-cleaner

|

||||

- https://github.com/microsoft/TaskMatrix

|

||||

- https://github.com/invoke-ai/InvokeAI

|

||||

- https://github.com/apple/ml-stable-diffusion

|

||||

- https://github.com/Sanster/lama-cleaner

|

||||

- https://github.com/IDEA-Research/Grounded-Segment-Anything

|

||||

- https://github.com/ashawkey/stable-dreamfusion

|

||||

- https://github.com/deep-floyd/IF

|

||||

- https://github.com/ashawkey/stable-dreamfusion

|

||||

- https://github.com/deep-floyd/IF

|

||||

- https://github.com/bentoml/BentoML

|

||||

- https://github.com/bmaltais/kohya_ss

|

||||

- +3000 other amazing GitHub repositories 💪

|

||||

|

||||

@@ -38,8 +38,6 @@ RUN python3 -m pip install --no-cache-dir --upgrade pip && \

|

||||

scipy \

|

||||

tensorboard \

|

||||

transformers \

|

||||

omegaconf \

|

||||

pytorch-lightning \

|

||||

xformers

|

||||

omegaconf

|

||||

|

||||

CMD ["/bin/bash"]

|

||||

|

||||

@@ -6,4 +6,4 @@ INSTALL_CONTENT = """

|

||||

# ! pip install git+https://github.com/huggingface/diffusers.git

|

||||

"""

|

||||

|

||||

notebook_first_cells = [{"type": "code", "content": INSTALL_CONTENT}]

|

||||

notebook_first_cells = [{"type": "code", "content": INSTALL_CONTENT}]

|

||||

@@ -28,8 +28,8 @@

|

||||

title: Load community pipelines

|

||||

- local: using-diffusers/using_safetensors

|

||||

title: Load safetensors

|

||||

- local: using-diffusers/other-formats

|

||||

title: Load different Stable Diffusion formats

|

||||

- local: using-diffusers/kerascv

|

||||

title: Load KerasCV Stable Diffusion checkpoints

|

||||

title: Loading & Hub

|

||||

- sections:

|

||||

- local: using-diffusers/pipeline_overview

|

||||

@@ -132,8 +132,6 @@

|

||||

- sections:

|

||||

- local: api/models

|

||||

title: Models

|

||||

- local: api/attnprocessor

|

||||

title: Attention Processor

|

||||

- local: api/diffusion_pipeline

|

||||

title: Diffusion Pipeline

|

||||

- local: api/logging

|

||||

@@ -144,16 +142,12 @@

|

||||

title: Outputs

|

||||

- local: api/loaders

|

||||

title: Loaders

|

||||

- local: api/utilities

|

||||

title: Utilities

|

||||

title: Main Classes

|

||||

- sections:

|

||||

- local: api/pipelines/overview

|

||||

title: Overview

|

||||

- local: api/pipelines/alt_diffusion

|

||||

title: AltDiffusion

|

||||

- local: api/pipelines/attend_and_excite

|

||||

title: Attend and Excite

|

||||

- local: api/pipelines/audio_diffusion

|

||||

title: Audio Diffusion

|

||||

- local: api/pipelines/audioldm

|

||||

@@ -168,32 +162,22 @@

|

||||

title: DDIM

|

||||

- local: api/pipelines/ddpm

|

||||

title: DDPM

|

||||

- local: api/pipelines/diffedit

|

||||

title: DiffEdit

|

||||

- local: api/pipelines/dit

|

||||

title: DiT

|

||||

- local: api/pipelines/if

|

||||

title: IF

|

||||

- local: api/pipelines/pix2pix

|

||||

title: InstructPix2Pix

|

||||

- local: api/pipelines/kandinsky

|

||||

title: Kandinsky

|

||||

- local: api/pipelines/latent_diffusion

|

||||

title: Latent Diffusion

|

||||

- local: api/pipelines/panorama

|

||||

title: MultiDiffusion Panorama

|

||||

- local: api/pipelines/paint_by_example

|

||||

title: PaintByExample

|

||||

- local: api/pipelines/pix2pix_zero

|

||||

title: Pix2Pix Zero

|

||||

- local: api/pipelines/pndm

|

||||

title: PNDM

|

||||

- local: api/pipelines/repaint

|

||||

title: RePaint

|

||||

- local: api/pipelines/stable_diffusion_safe

|

||||

title: Safe Stable Diffusion

|

||||

- local: api/pipelines/score_sde_ve

|

||||

title: Score SDE VE

|

||||

- local: api/pipelines/self_attention_guidance

|

||||

title: Self-Attention Guidance

|

||||

- local: api/pipelines/semantic_stable_diffusion

|

||||

title: Semantic Guidance

|

||||

- local: api/pipelines/spectrogram_diffusion

|

||||

@@ -211,21 +195,31 @@

|

||||

title: Depth-to-Image

|

||||

- local: api/pipelines/stable_diffusion/image_variation

|

||||

title: Image-Variation

|

||||

- local: api/pipelines/stable_diffusion/stable_diffusion_safe

|

||||

title: Safe Stable Diffusion

|

||||

- local: api/pipelines/stable_diffusion/stable_diffusion_2

|

||||

title: Stable Diffusion 2

|

||||

- local: api/pipelines/stable_diffusion/latent_upscale

|

||||

title: Stable-Diffusion-Latent-Upscaler

|

||||

- local: api/pipelines/stable_diffusion/upscale

|

||||

title: Super-Resolution

|

||||

- local: api/pipelines/stable_diffusion/latent_upscale

|

||||

title: Stable-Diffusion-Latent-Upscaler

|

||||

- local: api/pipelines/stable_diffusion/pix2pix

|

||||

title: InstructPix2Pix

|

||||

- local: api/pipelines/stable_diffusion/attend_and_excite

|

||||

title: Attend and Excite

|

||||

- local: api/pipelines/stable_diffusion/pix2pix_zero

|

||||

title: Pix2Pix Zero

|

||||

- local: api/pipelines/stable_diffusion/self_attention_guidance

|

||||

title: Self-Attention Guidance

|

||||

- local: api/pipelines/stable_diffusion/panorama

|

||||

title: MultiDiffusion Panorama

|

||||

- local: api/pipelines/stable_diffusion/model_editing

|

||||

title: Text-to-Image Model Editing

|

||||

- local: api/pipelines/stable_diffusion/diffedit

|

||||

title: DiffEdit

|

||||

title: Stable Diffusion

|

||||

- local: api/pipelines/stable_diffusion_2

|

||||

title: Stable Diffusion 2

|

||||

- local: api/pipelines/stable_unclip

|

||||

title: Stable unCLIP

|

||||

- local: api/pipelines/stochastic_karras_ve

|

||||

title: Stochastic Karras VE

|

||||

- local: api/pipelines/model_editing

|

||||

title: Text-to-Image Model Editing

|

||||

- local: api/pipelines/text_to_video

|

||||

title: Text-to-Video

|

||||

- local: api/pipelines/text_to_video_zero

|

||||

@@ -234,8 +228,6 @@

|

||||

title: UnCLIP

|

||||

- local: api/pipelines/latent_diffusion_uncond

|

||||

title: Unconditional Latent Diffusion

|

||||

- local: api/pipelines/unidiffuser

|

||||

title: UniDiffuser

|

||||

- local: api/pipelines/versatile_diffusion

|

||||

title: Versatile Diffusion

|

||||

- local: api/pipelines/vq_diffusion

|

||||

|

||||

@@ -1,42 +0,0 @@

|

||||

# Attention Processor

|

||||

|

||||

An attention processor is a class for applying different types of attention mechanisms.

|

||||

|

||||

## AttnProcessor

|

||||

[[autodoc]] models.attention_processor.AttnProcessor

|

||||

|

||||

## AttnProcessor2_0

|

||||

[[autodoc]] models.attention_processor.AttnProcessor2_0

|

||||

|

||||

## LoRAAttnProcessor

|

||||

[[autodoc]] models.attention_processor.LoRAAttnProcessor

|

||||

|

||||

## LoRAAttnProcessor2_0

|

||||

[[autodoc]] models.attention_processor.LoRAAttnProcessor2_0

|

||||

|

||||

## CustomDiffusionAttnProcessor

|

||||

[[autodoc]] models.attention_processor.CustomDiffusionAttnProcessor

|

||||

|

||||

## AttnAddedKVProcessor

|

||||

[[autodoc]] models.attention_processor.AttnAddedKVProcessor

|

||||

|

||||

## AttnAddedKVProcessor2_0

|

||||

[[autodoc]] models.attention_processor.AttnAddedKVProcessor2_0

|

||||

|

||||

## LoRAAttnAddedKVProcessor

|

||||

[[autodoc]] models.attention_processor.LoRAAttnAddedKVProcessor

|

||||

|

||||

## XFormersAttnProcessor

|

||||

[[autodoc]] models.attention_processor.XFormersAttnProcessor

|

||||

|

||||

## LoRAXFormersAttnProcessor

|

||||

[[autodoc]] models.attention_processor.LoRAXFormersAttnProcessor

|

||||

|

||||

## CustomDiffusionXFormersAttnProcessor

|

||||

[[autodoc]] models.attention_processor.CustomDiffusionXFormersAttnProcessor

|

||||

|

||||

## SlicedAttnProcessor

|

||||

[[autodoc]] models.attention_processor.SlicedAttnProcessor

|

||||

|

||||

## SlicedAttnAddedKVProcessor

|

||||

[[autodoc]] models.attention_processor.SlicedAttnAddedKVProcessor

|

||||

@@ -12,25 +12,36 @@ specific language governing permissions and limitations under the License.

|

||||

|

||||

# Pipelines

|

||||

|

||||

The [`DiffusionPipeline`] is the easiest way to load any pretrained diffusion pipeline from the [Hub](https://huggingface.co/models?library=diffusers) and use it for inference.

|

||||

The [`DiffusionPipeline`] is the easiest way to load any pretrained diffusion pipeline from the [Hub](https://huggingface.co/models?library=diffusers) and to use it in inference.

|

||||

|

||||

<Tip>

|

||||

|

||||

You shouldn't use the [`DiffusionPipeline`] class for training or finetuning a diffusion model. Individual

|

||||

components (for example, [`UNetModel`] and [`UNetConditionModel`]) of diffusion pipelines are usually trained individually, so we suggest working with them directly instead.

|

||||

One should not use the Diffusion Pipeline class for training or fine-tuning a diffusion model. Individual

|

||||

components of diffusion pipelines are usually trained individually, so we suggest working directly

|

||||

with [`UNetModel`] and [`UNetConditionModel`].

|

||||

|

||||

</Tip>

|

||||

|

||||

The pipeline type (for example [`StableDiffusionPipeline`]) of any diffusion pipeline loaded with [`~DiffusionPipeline.from_pretrained`] is automatically

|

||||

detected and pipeline components are loaded and passed to the `__init__` function of the pipeline.

|

||||

Any diffusion pipeline that is loaded with [`~DiffusionPipeline.from_pretrained`] will automatically

|

||||

detect the pipeline type, *e.g.* [`StableDiffusionPipeline`] and consequently load each component of the

|

||||

pipeline and pass them into the `__init__` function of the pipeline, *e.g.* [`~StableDiffusionPipeline.__init__`].

|

||||

|

||||

Any pipeline object can be saved locally with [`~DiffusionPipeline.save_pretrained`].

|

||||

|

||||

## DiffusionPipeline

|

||||

|

||||

[[autodoc]] DiffusionPipeline

|

||||

- all

|

||||

- __call__

|

||||

- device

|

||||

- to

|

||||

- components

|

||||

|

||||

## ImagePipelineOutput

|

||||

By default diffusion pipelines return an object of class

|

||||

|

||||

[[autodoc]] pipelines.ImagePipelineOutput

|

||||

|

||||

## AudioPipelineOutput

|

||||

By default diffusion pipelines return an object of class

|

||||

|

||||

[[autodoc]] pipelines.AudioPipelineOutput

|

||||

|

||||

@@ -13,7 +13,7 @@ specific language governing permissions and limitations under the License.

|

||||

# Models

|

||||

|

||||

Diffusers contains pretrained models for popular algorithms and modules for creating the next set of diffusion models.

|

||||

The primary function of these models is to denoise an input sample, by modeling the distribution \\(p_{\theta}(x_{t-1}|x_{t})\\).

|

||||

The primary function of these models is to denoise an input sample, by modeling the distribution $p_\theta(\mathbf{x}_{t-1}|\mathbf{x}_t)$.

|

||||

The models are built on the base class [`ModelMixin`], which is a `torch.nn.Module` with basic functionality for saving and loading models both locally and from the Hugging Face Hub.

|

||||

|

||||

## ModelMixin

|

||||

|

||||

@@ -12,11 +12,11 @@ specific language governing permissions and limitations under the License.

|

||||

|

||||

# BaseOutputs

|

||||

|

||||

All models have outputs that are subclasses of [`~utils.BaseOutput`]. Those are

|

||||

data structures containing all the information returned by the model, but they can also be used as tuples or

|

||||

All models have outputs that are instances of subclasses of [`~utils.BaseOutput`]. Those are

|

||||

data structures containing all the information returned by the model, but that can also be used as tuples or

|

||||

dictionaries.

|

||||

|

||||

For example:

|

||||

Let's see how this looks in an example:

|

||||

|

||||

```python

|

||||

from diffusers import DDIMPipeline

|

||||

@@ -25,45 +25,31 @@ pipeline = DDIMPipeline.from_pretrained("google/ddpm-cifar10-32")

|

||||

outputs = pipeline()

|

||||

```

|

||||

|

||||

The `outputs` object is a [`~pipelines.ImagePipelineOutput`] which means it has an image attribute.

|

||||

The `outputs` object is a [`~pipelines.ImagePipelineOutput`]; as the documentation of that class below shows, this means it has an `images` attribute.

|

||||

|

||||

You can access each attribute as you normally would or with a keyword lookup, and if that attribute is not returned by the model, you will get `None`:

|

||||

You can access each attribute as you would usually do, and if that attribute has not been returned by the model, you will get `None`:

|

||||

|

||||

```python

|

||||

outputs.images

|

||||

```

|

||||

|

||||

or via keyword lookup

|

||||

|

||||

```python

|

||||

outputs["images"]

|

||||

```

|

||||

|

||||

When considering the `outputs` object as a tuple, it only considers the attributes that don't have `None` values.

|

||||

For instance, retrieving the images by slicing returns the one-element tuple `(outputs.images,)`:

|

||||

When considering our `outputs` object as tuple, it only considers the attributes that don't have `None` values.

|

||||

Here for instance, we could retrieve images via indexing:

|

||||

|

||||

```python

|

||||

outputs[:1]

|

||||

```
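
To make the three access styles concrete without downloading a pipeline, here is a minimal sketch that uses only the standard library. `SketchOutput` is a hypothetical stand-in written for illustration, not the actual `BaseOutput` implementation; the field names are assumptions chosen to resemble an image pipeline output.

```python
from dataclasses import dataclass, fields
from typing import Any, Optional, Tuple


@dataclass
class SketchOutput:
    """Hypothetical stand-in that mimics the access patterns described above."""

    images: Optional[list] = None
    nsfw_content_detected: Optional[list] = None

    def to_tuple(self) -> Tuple[Any, ...]:
        # Keep only the fields whose value is not None, in declaration order.
        return tuple(
            getattr(self, f.name)
            for f in fields(self)
            if getattr(self, f.name) is not None
        )

    def __getitem__(self, key):
        # String keys behave like a dictionary lookup.
        if isinstance(key, str):
            return getattr(self, key)
        # Integers and slices index into the tuple of non-None fields.
        return self.to_tuple()[key]


outputs = SketchOutput(images=["img0", "img1"])

by_attribute = outputs.images      # attribute access
by_key = outputs["images"]         # keyword lookup
as_tuple = outputs[:1]             # tuple-style slicing skips None fields
missing = outputs.nsfw_content_detected  # unset attribute stays None
```

The unset field is simply `None` on attribute access and is left out of the tuple view, which is why slicing yields a one-element tuple here.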

|

||||

|

||||

<Tip>

|

||||

|

||||

To check a specific pipeline or model output, refer to its corresponding API documentation.

|

||||

|

||||

</Tip>

|

||||

which will return the tuple `(outputs.images,)` for instance.

|

||||

|

||||

## BaseOutput

|

||||

|

||||

[[autodoc]] utils.BaseOutput

|

||||

- to_tuple

|

||||

|

||||

## ImagePipelineOutput

|

||||

|

||||

[[autodoc]] pipelines.ImagePipelineOutput

|

||||

|

||||

## FlaxImagePipelineOutput

|

||||

|

||||

[[autodoc]] pipelines.pipeline_flax_utils.FlaxImagePipelineOutput

|

||||

|

||||

## AudioPipelineOutput

|

||||

|

||||

[[autodoc]] pipelines.AudioPipelineOutput

|

||||

|

||||

## ImageTextPipelineOutput

|

||||

|

||||

[[autodoc]] ImageTextPipelineOutput

|

||||

@@ -1,351 +0,0 @@

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

http://www.apache.org/licenses/LICENSE-2.0

|

||||

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on

|

||||

an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the

|

||||

specific language governing permissions and limitations under the License.

|

||||

-->

|

||||

|

||||

# Kandinsky

|

||||

|

||||

## Overview

|

||||

|

||||

Kandinsky 2.1 inherits best practices from [DALL-E 2](https://arxiv.org/abs/2204.06125) and [Latent Diffusion](https://huggingface.co/docs/diffusers/api/pipelines/latent_diffusion), while introducing some new ideas.

|

||||

|

||||

It uses [CLIP](https://huggingface.co/docs/transformers/model_doc/clip) for encoding images and text, and a diffusion image prior (mapping) between latent spaces of CLIP modalities. This approach enhances the visual performance of the model and unveils new horizons in blending images and text-guided image manipulation.

|

||||

|

||||

The Kandinsky model was created by [Arseniy Shakhmatov](https://github.com/cene555), [Anton Razzhigaev](https://github.com/razzant), [Aleksandr Nikolich](https://github.com/AlexWortega), [Igor Pavlov](https://github.com/boomb0om), [Andrey Kuznetsov](https://github.com/kuznetsoffandrey), and [Denis Dimitrov](https://github.com/denndimitrov). The original codebase can be found [here](https://github.com/ai-forever/Kandinsky-2).

|

||||

|

||||

## Available Pipelines:

|

||||

|

||||

| Pipeline | Tasks |

|

||||

|---|---|

|

||||

| [pipeline_kandinsky.py](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/kandinsky/pipeline_kandinsky.py) | *Text-to-Image Generation* |

|

||||

| [pipeline_kandinsky_inpaint.py](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/kandinsky/pipeline_kandinsky_inpaint.py) | *Image-Guided Image Generation* |

|

||||

| [pipeline_kandinsky_img2img.py](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/kandinsky/pipeline_kandinsky_img2img.py) | *Image-Guided Image Generation* |

|

||||

|

||||

## Usage example

|

||||

|

||||

In the following, we will walk you through some examples of how to use the Kandinsky pipelines to create some visually aesthetic artwork.

|

||||

|

||||

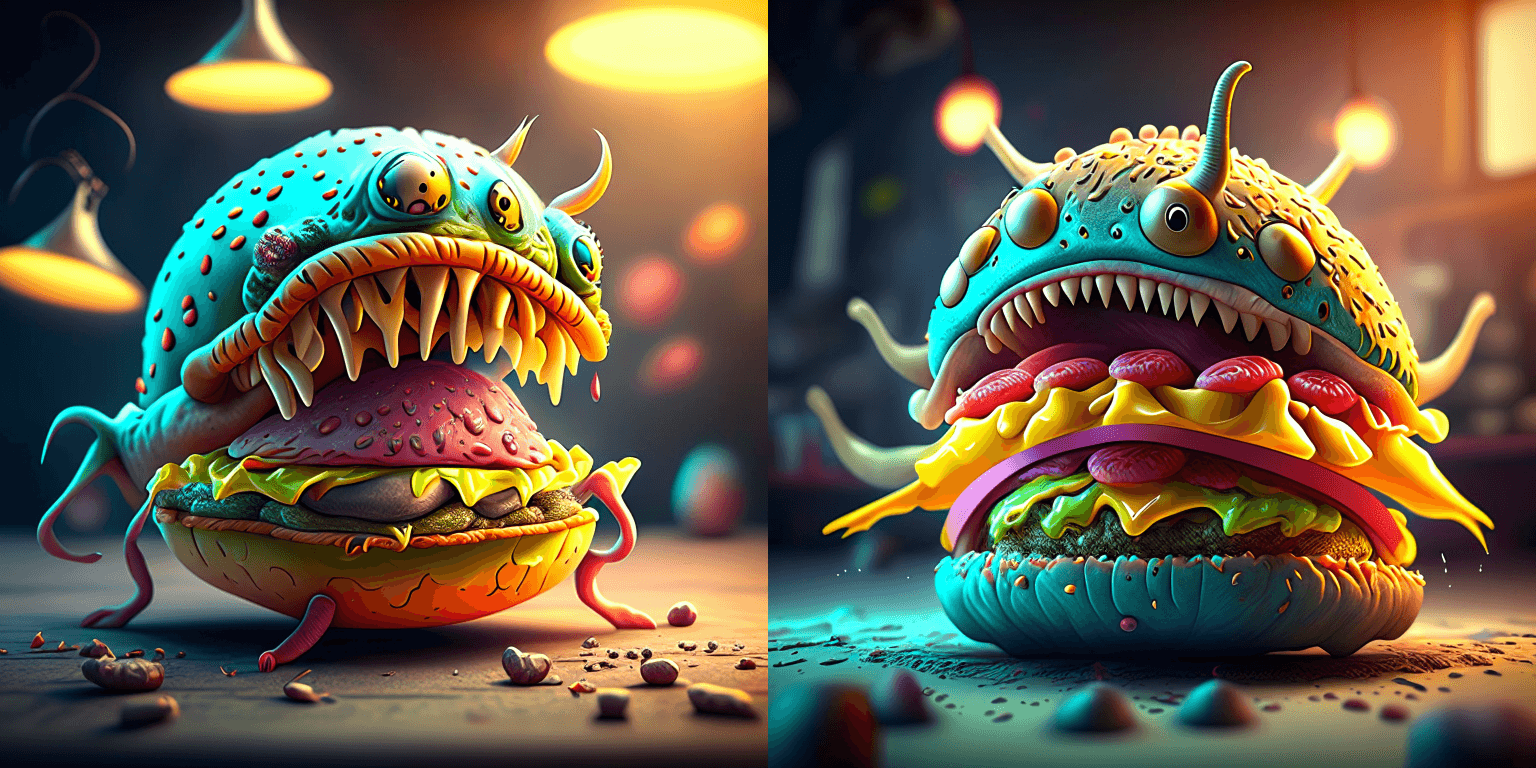

### Text-to-Image Generation

|

||||

|

||||

For text-to-image generation, we need to use both [`KandinskyPriorPipeline`] and [`KandinskyPipeline`].

|

||||

The first step is to encode text prompts with CLIP and then diffuse the CLIP text embeddings to CLIP image embeddings,

|

||||

as first proposed in [DALL-E 2](https://cdn.openai.com/papers/dall-e-2.pdf).

|

||||

Let's throw a fun prompt at Kandinsky to see what it comes up with.

|

||||

|

||||

```py

|

||||

prompt = "A alien cheeseburger creature eating itself, claymation, cinematic, moody lighting"

|

||||

```

|

||||

|

||||

First, let's instantiate the prior pipeline and the text-to-image pipeline. Both

|

||||

pipelines are diffusion models.

|

||||

|

||||

|

||||

```py

|

||||

from diffusers import DiffusionPipeline

|

||||

import torch

|

||||

|

||||

pipe_prior = DiffusionPipeline.from_pretrained("kandinsky-community/kandinsky-2-1-prior", torch_dtype=torch.float16)

|

||||

pipe_prior.to("cuda")

|

||||

|

||||

t2i_pipe = DiffusionPipeline.from_pretrained("kandinsky-community/kandinsky-2-1", torch_dtype=torch.float16)

|

||||

t2i_pipe.to("cuda")

|

||||

```

|

||||

|

||||

Now we pass the prompt through the prior to generate image embeddings. The prior

|

||||

returns both the image embeddings corresponding to the prompt and negative/unconditional image

|

||||

embeddings corresponding to an empty string.

|

||||

|

||||

```py

|

||||

generator = torch.Generator(device="cuda").manual_seed(12)

|

||||

image_embeds, negative_image_embeds = pipe_prior(prompt, generator=generator).to_tuple()

|

||||

```

|

||||

|

||||

<Tip warning={true}>

|

||||

|

||||

The text-to-image pipeline expects `image_embeds`, `negative_image_embeds`, and the original

|

||||

`prompt` as the text-to-image pipeline uses another text encoder to better guide the second diffusion

|

||||

process of `t2i_pipe`.

|

||||

|

||||

By default, the prior returns unconditioned negative image embeddings corresponding to the negative prompt of `""`.

|

||||

For better results, you can also pass a `negative_prompt` to the prior. This will increase the effective batch size

|

||||

of the prior by a factor of 2.

|

||||

|

||||

```py

|

||||

prompt = "A alien cheeseburger creature eating itself, claymation, cinematic, moody lighting"

|

||||

negative_prompt = "low quality, bad quality"

|

||||

|

||||

image_embeds, negative_image_embeds = pipe_prior(prompt, negative_prompt, generator=generator).to_tuple()

|

||||

```

|

||||

|

||||

</Tip>

|

||||

|

||||

|

||||

Next, we can pass the embeddings as well as the prompt to the text-to-image pipeline. Remember that

|

||||

if you use a customized negative prompt, you should also pass it to the text-to-image pipeline

|

||||

with `negative_prompt=negative_prompt`:

|

||||

|

||||

```py

|

||||

image = t2i_pipe(prompt, image_embeds=image_embeds, negative_image_embeds=negative_image_embeds).images[0]

|

||||

image.save("cheeseburger_monster.png")

|

||||

```

|

||||

|

||||

One cheeseburger monster coming up! Enjoy!

|

||||

|

||||

|

||||

|

||||

The Kandinsky model works extremely well with creative prompts. Here is some of the amazing art that can be created using the exact same process but with different prompts.

|

||||

|

||||

```python

|

||||

prompt = "bird eye view shot of a full body woman with cyan light orange magenta makeup, digital art, long braided hair her face separated by makeup in the style of yin Yang surrealism, symmetrical face, real image, contrasting tone, pastel gradient background"

|

||||

```

|

||||

|

||||

|

||||

```python

|

||||

prompt = "A car exploding into colorful dust"

|

||||

```

|

||||

|

||||

|

||||

```python

|

||||

prompt = "editorial photography of an organic, almost liquid smoke style armchair"

|

||||

```

|

||||

|

||||

|

||||

```python

|

||||

prompt = "birds eye view of a quilted paper style alien planet landscape, vibrant colours, Cinematic lighting"

|

||||

```

|

||||

|

||||

|

||||

|

||||

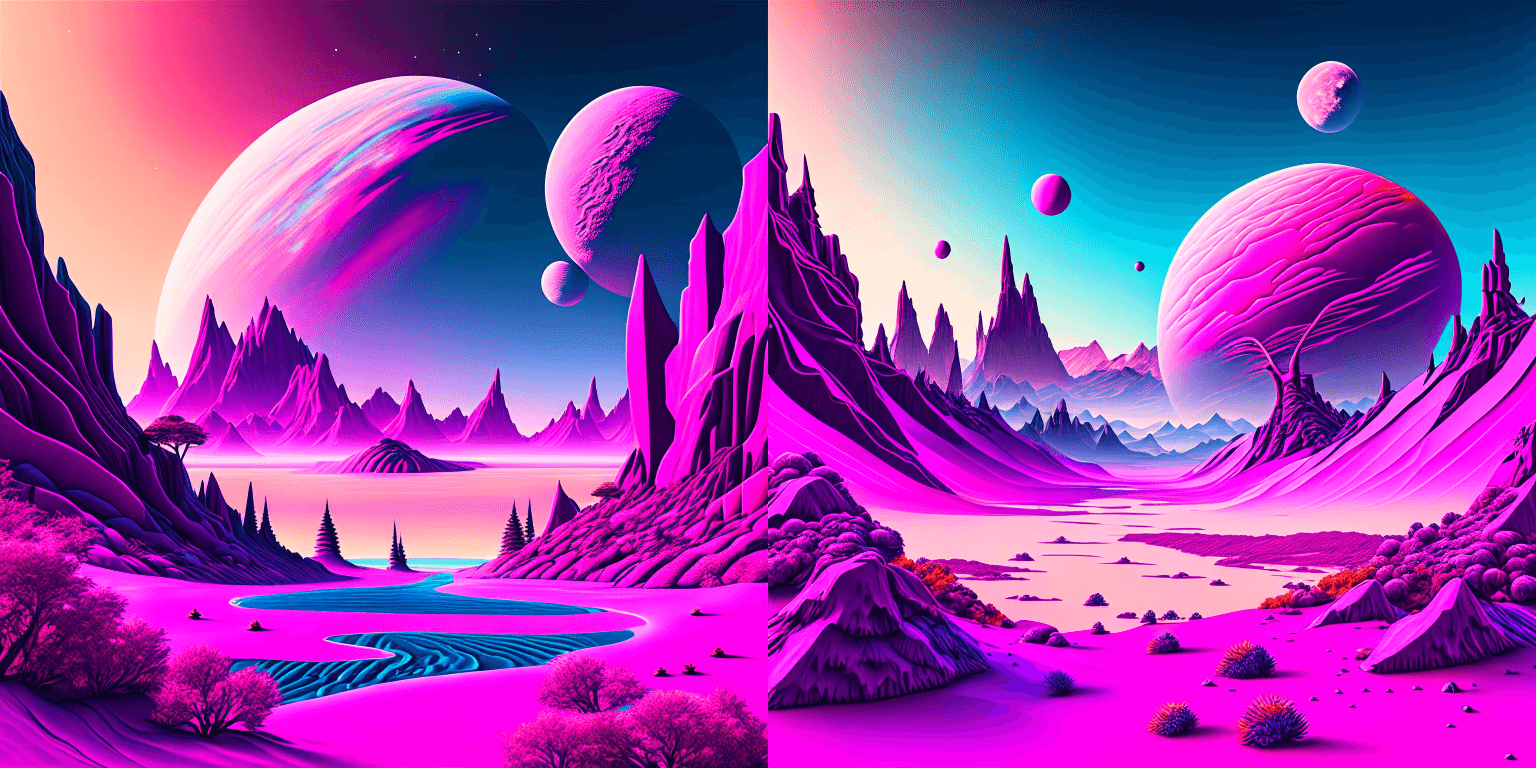

### Text Guided Image-to-Image Generation

|

||||

|

||||

The same Kandinsky model weights can be used for text-guided image-to-image translation. In this case, just make sure to load the weights using the [`KandinskyImg2ImgPipeline`] pipeline.

|

||||

|

||||

**Note**: You can also directly move the weights of the text-to-image pipelines to the image-to-image pipelines

|

||||

without loading them twice by making use of the [`~DiffusionPipeline.components`] function as explained [here](#converting-between-different-pipelines).

|

||||

|

||||

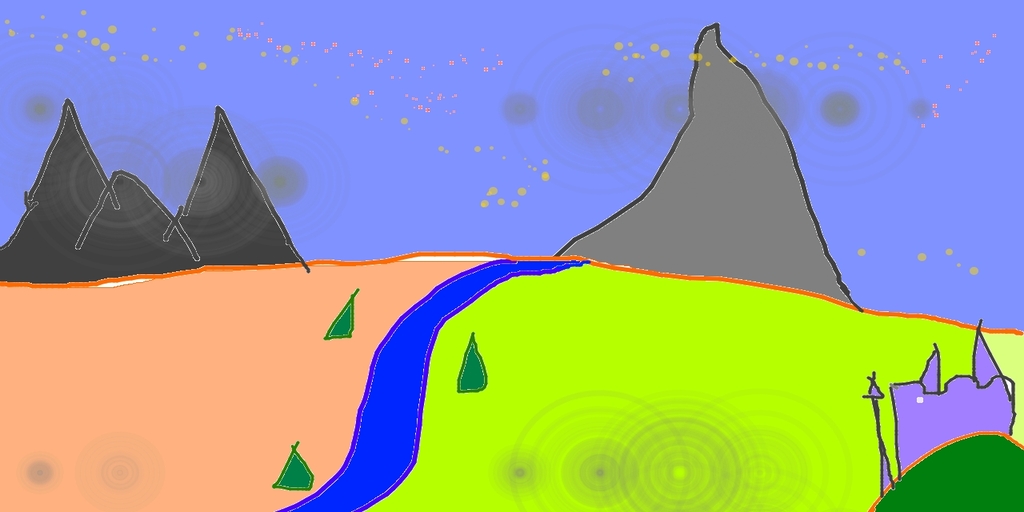

Let's download an image.

|

||||

|

||||

```python

|

||||

from PIL import Image

|

||||

import requests

|

||||

from io import BytesIO

|

||||

|

||||

# download image

|

||||

url = "https://raw.githubusercontent.com/CompVis/stable-diffusion/main/assets/stable-samples/img2img/sketch-mountains-input.jpg"

|

||||

response = requests.get(url)

|

||||

original_image = Image.open(BytesIO(response.content)).convert("RGB")

|

||||

original_image = original_image.resize((768, 512))

|

||||

```

|

||||

|

||||

|

||||

|

||||

```python

|

||||

import torch

|

||||

from diffusers import KandinskyImg2ImgPipeline, KandinskyPriorPipeline

|

||||

|

||||

# create prior

|

||||

pipe_prior = KandinskyPriorPipeline.from_pretrained(

|

||||

"kandinsky-community/kandinsky-2-1-prior", torch_dtype=torch.float16

|

||||

)

|

||||

pipe_prior.to("cuda")

|

||||

|

||||

# create img2img pipeline

|

||||

pipe = KandinskyImg2ImgPipeline.from_pretrained("kandinsky-community/kandinsky-2-1", torch_dtype=torch.float16)

|

||||

pipe.to("cuda")

|

||||

|

||||

prompt = "A fantasy landscape, Cinematic lighting"

|

||||

negative_prompt = "low quality, bad quality"

|

||||

|

||||

generator = torch.Generator(device="cuda").manual_seed(30)

|

||||

image_embeds, negative_image_embeds = pipe_prior(prompt, negative_prompt, generator=generator).to_tuple()

|

||||

|

||||

out = pipe(

|

||||

prompt,

|

||||

image=original_image,

|

||||

image_embeds=image_embeds,

|

||||

negative_image_embeds=negative_image_embeds,

|

||||

height=768,

|

||||

width=768,

|

||||

strength=0.3,

|

||||

)

|

||||

|

||||

out.images[0].save("fantasy_land.png")

|

||||

```

|

||||

|

||||

|

||||

|

||||

|

||||

### Text Guided Inpainting Generation

|

||||

|

||||

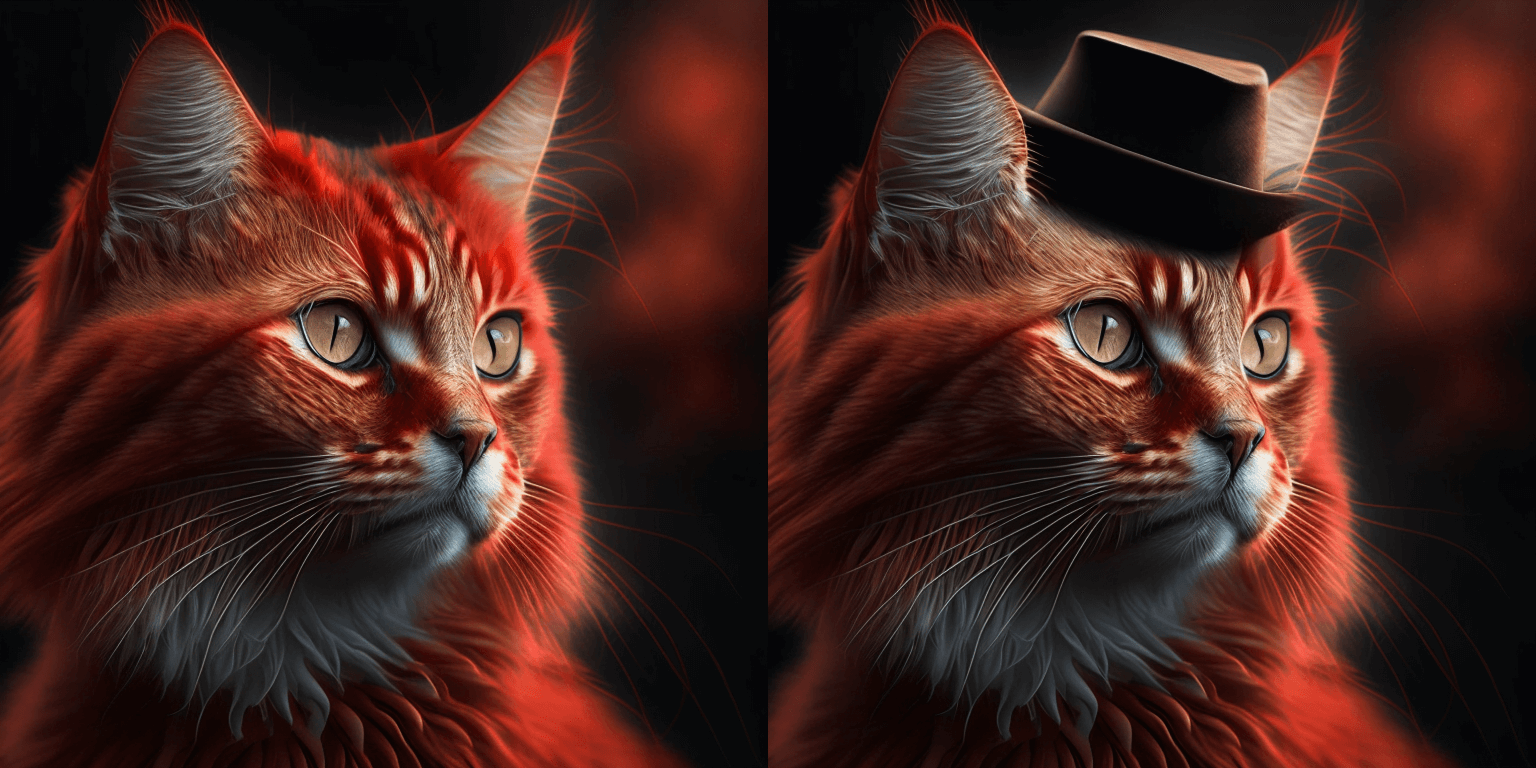

You can use [`KandinskyInpaintPipeline`] to edit images. In this example, we will add a hat to the portrait of a cat.

|

||||

|

||||

```py

|

||||

from diffusers import KandinskyInpaintPipeline, KandinskyPriorPipeline

|

||||

from diffusers.utils import load_image

|

||||

import torch

|

||||

import numpy as np

|

||||

|

||||

pipe_prior = KandinskyPriorPipeline.from_pretrained(

|

||||

"kandinsky-community/kandinsky-2-1-prior", torch_dtype=torch.float16

|

||||

)

|

||||

pipe_prior.to("cuda")

|

||||

|

||||

prompt = "a hat"

|

||||

prior_output = pipe_prior(prompt)

|

||||

|

||||

pipe = KandinskyInpaintPipeline.from_pretrained("kandinsky-community/kandinsky-2-1-inpaint", torch_dtype=torch.float16)

|

||||

pipe.to("cuda")

|

||||

|

||||

init_image = load_image(

|

||||

"https://huggingface.co/datasets/hf-internal-testing/diffusers-images/resolve/main" "/kandinsky/cat.png"

|

||||

)

|

||||

|

||||

mask = np.ones((768, 768), dtype=np.float32)

|

||||

# Let's mask out an area above the cat's head

|

||||

mask[:250, 250:-250] = 0

|

||||

|

||||

out = pipe(

|

||||

prompt,

|

||||

image=init_image,

|

||||

mask_image=mask,

|

||||

**prior_output,

|

||||

height=768,

|

||||

width=768,

|

||||

num_inference_steps=150,

|

||||

)

|

||||

|

||||

image = out.images[0]

|

||||

image.save("cat_with_hat.png")

|

||||

```
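
The slice `mask[:250, 250:-250] = 0` in the example can be hard to picture. The sketch below rebuilds the same rectangle with nested Python lists so it runs without NumPy, and counts how many pixels end up zeroed. It only illustrates the slicing; the variable names are chosen for this sketch, and zeros mark the area above the cat's head as in the example above.

```python
size = 768

# Start from a mask of ones, like np.ones((768, 768)).
mask = [[1.0] * size for _ in range(size)]

# Equivalent of mask[:250, 250:-250] = 0:
# the first 250 rows, columns 250 up to (but excluding) 768 - 250 = 518.
for row in mask[:250]:
    width = len(row[250:-250])
    row[250:-250] = [0.0] * width

zero_count = sum(row.count(0.0) for row in mask)
zero_fraction = zero_count / (size * size)
```

The zeroed rectangle is 250 rows by 268 columns, a little over a tenth of the image.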

|

||||

|

||||

|

||||

### Interpolate

|

||||

|

||||

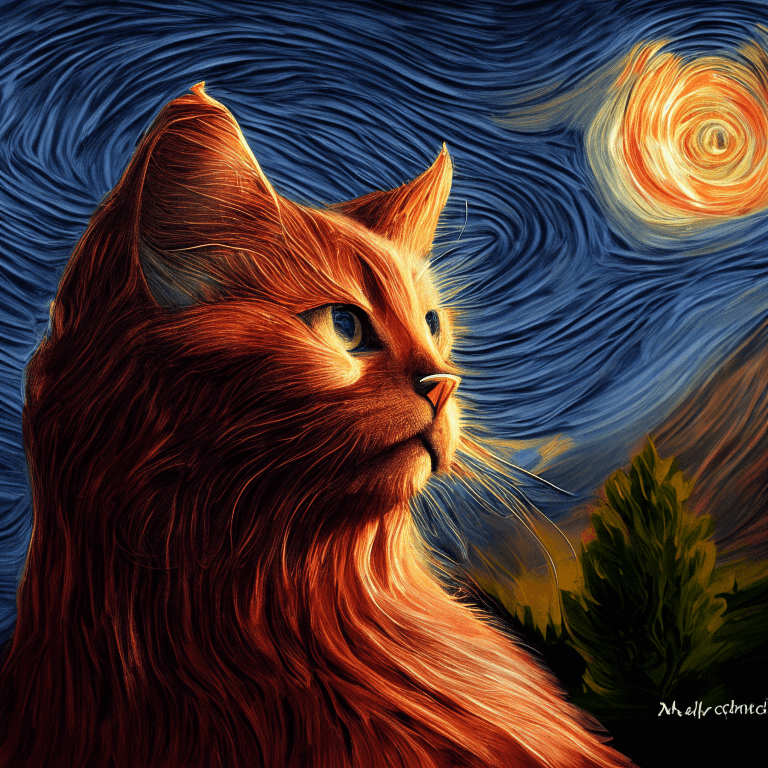

The [`KandinskyPriorPipeline`] also comes with a cool utility function that will allow you to interpolate the latent space of different images and texts super easily. Here is an example of how you can create an Impressionist-style portrait for your pet based on "The Starry Night".

|

||||

|

||||

Note that you can interpolate between texts and images. In the example below, we pass a text prompt "a cat" and two images to the `interpolate` function, along with a `weights` variable containing the corresponding weight for each condition we interpolate.

|

||||

|

||||

```python

|

||||

from diffusers import KandinskyPriorPipeline, KandinskyPipeline

|

||||

from diffusers.utils import load_image

|

||||

import PIL

|

||||

|

||||

import torch

|

||||

|

||||

pipe_prior = KandinskyPriorPipeline.from_pretrained(

|

||||

"kandinsky-community/kandinsky-2-1-prior", torch_dtype=torch.float16

|

||||

)

|

||||

pipe_prior.to("cuda")

|

||||

|

||||

img1 = load_image(

|

||||

"https://huggingface.co/datasets/hf-internal-testing/diffusers-images/resolve/main" "/kandinsky/cat.png"

|

||||

)

|

||||

|

||||

img2 = load_image(

|

||||

"https://huggingface.co/datasets/hf-internal-testing/diffusers-images/resolve/main" "/kandinsky/starry_night.jpeg"

|

||||

)

|

||||

|

||||

# add all the conditions we want to interpolate, can be either text or image

|

||||

images_texts = ["a cat", img1, img2]

|

||||

|

||||

# specify the weights for each condition in images_texts

|

||||

weights = [0.3, 0.3, 0.4]

|

||||

|

||||

# We can leave the prompt empty

|

||||

prompt = ""

|

||||

prior_out = pipe_prior.interpolate(images_texts, weights)

|

||||

|

||||

pipe = KandinskyPipeline.from_pretrained("kandinsky-community/kandinsky-2-1", torch_dtype=torch.float16)

|

||||

pipe.to("cuda")

|

||||

|

||||

image = pipe(prompt, **prior_out, height=768, width=768).images[0]

|

||||

|

||||

image.save("starry_cat.png")

|

||||

```
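
Conceptually, the `weights` list can be read as the coefficients of a weighted sum over one embedding per condition. The sketch below shows that idea with plain Python lists; it assumes this weighted-sum reading, uses made-up three-dimensional vectors in place of CLIP embeddings, and `weighted_blend` is a hypothetical helper rather than a diffusers function.

```python
from typing import List, Sequence


def weighted_blend(
    embeddings: Sequence[Sequence[float]], weights: Sequence[float]
) -> List[float]:
    """Return the weighted sum of equally sized embedding vectors."""
    if len(embeddings) != len(weights):
        raise ValueError("need exactly one weight per embedding")
    dim = len(embeddings[0])
    blended = [0.0] * dim
    for emb, weight in zip(embeddings, weights):
        if len(emb) != dim:
            raise ValueError("all embeddings must have the same length")
        for i, value in enumerate(emb):
            blended[i] += weight * value
    return blended


# Toy stand-ins for the embeddings of "a cat", the cat photo, and the painting.
text_emb = [1.0, 0.0, 0.0]
cat_emb = [0.0, 1.0, 0.0]
painting_emb = [0.0, 0.0, 1.0]

blended = weighted_blend([text_emb, cat_emb, painting_emb], [0.3, 0.3, 0.4])
```

Raising the last weight pulls the blended vector toward the painting, which matches the intuition of asking for more of the "Starry Night" style.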

|

||||

|

||||

|

||||

|

||||

## Optimization

|

||||

|

||||

Running inference with Kandinsky requires running both a first prior pipeline, [`KandinskyPriorPipeline`],

|

||||

and a second image decoding pipeline which is one of [`KandinskyPipeline`], [`KandinskyImg2ImgPipeline`], or [`KandinskyInpaintPipeline`].

|

||||

|

||||

The bulk of the computation time will always be spent in the second image decoding pipeline, so when looking

|

||||

into optimizing the model, one should look into the second image decoding pipeline.

|

||||

|

||||

When running with PyTorch < 2.0, we strongly recommend making use of [`xformers`](https://github.com/facebookresearch/xformers)

|

||||

to speed up inference. This can be done by simply running:

|

||||

|

||||

```py

|

||||

from diffusers import DiffusionPipeline

|

||||

import torch

|

||||

|

||||

t2i_pipe = DiffusionPipeline.from_pretrained("kandinsky-community/kandinsky-2-1", torch_dtype=torch.float16)

|

||||

t2i_pipe.enable_xformers_memory_efficient_attention()

|

||||

```

|

||||

|

||||

When running on PyTorch >= 2.0, PyTorch's SDPA attention will automatically be used. For more information on

|

||||

PyTorch's SDPA, feel free to have a look at [this blog post](https://pytorch.org/blog/accelerated-diffusers-pt-20/).

|

||||

|

||||

To have explicit control, you can also manually set the pipeline to use PyTorch 2.0's efficient attention:

|

||||

|

||||

```py

|

||||

from diffusers.models.attention_processor import AttnAddedKVProcessor2_0

|

||||

|

||||

t2i_pipe.unet.set_attn_processor(AttnAddedKVProcessor2_0())

|

||||

```

|

||||

|

||||

The slowest and most memory-intensive attention processor is the default `AttnAddedKVProcessor`.

|

||||

We do **not** recommend using it except for testing purposes or in cases where highly deterministic behaviour is desired.

|

||||

You can set it with:

|

||||

|

||||

```py

|

||||

from diffusers.models.attention_processor import AttnAddedKVProcessor

|

||||

|

||||

t2i_pipe.unet.set_attn_processor(AttnAddedKVProcessor())

|

||||

```
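
The three attention options above can be summarized as a small decision rule. The helper below is a hypothetical sketch, not part of diffusers: it takes a version string and a flag as plain arguments so it runs without PyTorch installed, and the returned labels are names invented for this example.

```python
def pick_attention_option(torch_version: str, xformers_installed: bool) -> str:
    """Suggest an attention option following the guidance in this section."""
    # Version strings look like "2.0.1" or "1.13.1+cu117"; only the major part matters here.
    major = int(torch_version.split(".")[0])
    if major >= 2:
        # PyTorch >= 2.0: SDPA attention is picked up automatically.
        return "sdpa"
    if xformers_installed:
        # PyTorch < 2.0: xformers memory-efficient attention is recommended.
        return "xformers"
    # Fallback: the default processor, slowest and most memory hungry.
    return "default"


choice_new = pick_attention_option("2.0.1", xformers_installed=False)
choice_old = pick_attention_option("1.13.1+cu117", xformers_installed=True)
choice_fallback = pick_attention_option("1.13.1", xformers_installed=False)
```

In a real script the version string would typically come from `torch.__version__`.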

|

||||

|

||||

With PyTorch >= 2.0, you can also use Kandinsky with `torch.compile` which depending

|

||||

on your hardware can significantly speed up your inference time once the model is compiled.

|

||||

To use Kandinsky with `torch.compile`, you can do:

|

||||

|

||||

```py

|

||||

t2i_pipe.unet.to(memory_format=torch.channels_last)

|

||||

t2i_pipe.unet = torch.compile(t2i_pipe.unet, mode="reduce-overhead", fullgraph=True)

|

||||

```

|

||||

|

||||

After compilation you should see a very fast inference time. For more information,

|

||||

feel free to have a look at [Our PyTorch 2.0 benchmark](https://huggingface.co/docs/diffusers/main/en/optimization/torch2.0).

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

## KandinskyPriorPipeline

|

||||

|

||||

[[autodoc]] KandinskyPriorPipeline

|

||||

- all

|

||||

- __call__

|

||||

- interpolate

|

||||

|

||||

## KandinskyPipeline

|

||||

|

||||

[[autodoc]] KandinskyPipeline

|

||||

- all

|

||||

- __call__

|

||||

|

||||

## KandinskyImg2ImgPipeline

|

||||

|

||||

[[autodoc]] KandinskyImg2ImgPipeline

|

||||

- all

|

||||

- __call__

|

||||

|

||||

## KandinskyInpaintPipeline

|

||||

|

||||

[[autodoc]] KandinskyInpaintPipeline

|

||||

- all

|

||||

- __call__

|

||||

@@ -113,3 +113,105 @@ each pipeline, one should look directly into the respective pipeline.

|

||||

|

||||

**Note**: All pipelines have PyTorch's autograd disabled by decorating the `__call__` method with a [`torch.no_grad`](https://pytorch.org/docs/stable/generated/torch.no_grad.html) decorator because pipelines should

|

||||

not be used for training. If you want to store the gradients during the forward pass, we recommend writing your own pipeline, see also our [community-examples](https://github.com/huggingface/diffusers/tree/main/examples/community).

|

||||

|

||||

## Contribution

|

||||

|

||||

We are more than happy about any contribution to the officially supported pipelines 🤗. We aspire for

|

||||

all of our pipelines to be **self-contained**, **easy-to-tweak**, **beginner-friendly** and for **one-purpose-only**.

|

||||

|

||||

- **Self-contained**: A pipeline shall be as self-contained as possible. More specifically, this means that all functionality should be either directly defined in the pipeline file itself, should be inherited from (and only from) the [`DiffusionPipeline` class](.../diffusion_pipeline) or be directly attached to the model and scheduler components of the pipeline.

|

||||

- **Easy-to-use**: Pipelines should be extremely easy to use - one should be able to load the pipeline and

|

||||

use it for its designated task, *e.g.* text-to-image generation, in just a couple of lines of code. Most

|

||||

logic including pre-processing, an unrolled diffusion loop, and post-processing should all happen inside the `__call__` method.

|

||||

- **Easy-to-tweak**: Certain pipelines will not be able to handle all use cases and tasks that you might like them to. If you want to use a certain pipeline for a specific use case that is not yet supported, you might have to copy the pipeline file and tweak the code to your needs. We try to make the pipeline code as readable as possible so that each part –from pre-processing to diffusing to post-processing– can easily be adapted. If you would like the community to benefit from your customized pipeline, we would love to see a contribution to our [community-examples](https://github.com/huggingface/diffusers/tree/main/examples/community). If you feel that an important pipeline should be part of the official pipelines but isn't, a contribution to the [official pipelines](./overview) would be even better.

|

||||

- **One-purpose-only**: Pipelines should be used for one task and one task only. Even if two tasks are very similar from a modeling point of view, *e.g.* image2image translation and in-painting, pipelines shall be used for one task only to keep them *easy-to-tweak* and *readable*.
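
As a rough illustration of the principles above, the sketch below keeps pre-processing, the unrolled loop, and post-processing inside a single `__call__`. `ToyPipeline` is a hypothetical, standard-library-only class invented for this example; it does not inherit from `DiffusionPipeline`, and the "denoising" step is just a halving of each value.

```python
from typing import List


class ToyPipeline:
    """Hypothetical single-purpose pipeline whose logic lives in __call__."""

    def __init__(self, num_steps: int = 4) -> None:
        self.num_steps = num_steps

    def __call__(self, values: List[float]) -> List[float]:
        # Pre-processing: normalise the input type.
        sample = [float(v) for v in values]

        # Unrolled loop: every step is visible here and easy to tweak.
        for _ in range(self.num_steps):
            sample = [0.5 * v for v in sample]

        # Post-processing: round for presentation.
        return [round(v, 4) for v in sample]


pipe = ToyPipeline(num_steps=2)
result = pipe([4.0, 8.0])
```

Because everything happens in one method of one file, adapting the loop for a new use case means copying the file and editing a few lines.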

|

||||

|

||||

## Examples

|

||||

|

||||

### Text-to-Image generation with Stable Diffusion

|

||||

|

||||

```python

|

||||

# make sure you're logged in with `huggingface-cli login`

|

||||

from diffusers import StableDiffusionPipeline, LMSDiscreteScheduler

|

||||

|

||||

pipe = StableDiffusionPipeline.from_pretrained("runwayml/stable-diffusion-v1-5")

|

||||

pipe = pipe.to("cuda")

|

||||

|

||||

prompt = "a photo of an astronaut riding a horse on mars"

|

||||

image = pipe(prompt).images[0]

|

||||

|

||||

image.save("astronaut_rides_horse.png")

|

||||

```

|

||||

|

||||

### Image-to-Image text-guided generation with Stable Diffusion

|

||||

|

||||

The `StableDiffusionImg2ImgPipeline` lets you pass a text prompt and an initial image to condition the generation of new images.

|

||||

|

||||

```python

|

||||

import requests
import torch

|

||||

from PIL import Image

|

||||

from io import BytesIO

|

||||

|

||||

from diffusers import StableDiffusionImg2ImgPipeline

|

||||

|

||||

# load the pipeline

|

||||

device = "cuda"

|

||||

pipe = StableDiffusionImg2ImgPipeline.from_pretrained("runwayml/stable-diffusion-v1-5", torch_dtype=torch.float16).to(

|

||||

device

|

||||

)

|

||||

|

||||

# let's download an initial image

|

||||

url = "https://raw.githubusercontent.com/CompVis/stable-diffusion/main/assets/stable-samples/img2img/sketch-mountains-input.jpg"

|

||||

|

||||

response = requests.get(url)

|

||||

init_image = Image.open(BytesIO(response.content)).convert("RGB")

|

||||

init_image = init_image.resize((768, 512))

|

||||

|

||||

prompt = "A fantasy landscape, trending on artstation"

|

||||

|

||||

images = pipe(prompt=prompt, image=init_image, strength=0.75, guidance_scale=7.5).images

|

||||

|

||||

images[0].save("fantasy_landscape.png")

|

||||

```

|

||||

You can also run this example on Colab: [Open in Colab](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/image_2_image_using_diffusers.ipynb)

|

||||

|

||||

### Tweak prompts reusing seeds and latents

|

||||

|

||||

You can generate your own latents to reproduce results, or tweak your prompt on a specific result you liked. [This notebook](https://github.com/pcuenca/diffusers-examples/blob/main/notebooks/stable-diffusion-seeds.ipynb) shows how to do it step by step. You can also run it in Google Colab: [Open in Colab](https://colab.research.google.com/github/pcuenca/diffusers-examples/blob/main/notebooks/stable-diffusion-seeds.ipynb)

|

||||

|

||||

|

||||

### In-painting using Stable Diffusion

|

||||

|

||||

The `StableDiffusionInpaintPipeline` lets you edit specific parts of an image by providing a mask and text prompt.

|

||||

|

||||

```python

|

||||

import PIL

|

||||

import requests

|

||||

import torch

|

||||

from io import BytesIO

|

||||

|

||||

from diffusers import StableDiffusionInpaintPipeline

|

||||

|

||||

|

||||

def download_image(url):

|

||||

response = requests.get(url)

|

||||

return PIL.Image.open(BytesIO(response.content)).convert("RGB")

|

||||

|

||||

|

||||

img_url = "https://raw.githubusercontent.com/CompVis/latent-diffusion/main/data/inpainting_examples/overture-creations-5sI6fQgYIuo.png"

|

||||

mask_url = "https://raw.githubusercontent.com/CompVis/latent-diffusion/main/data/inpainting_examples/overture-creations-5sI6fQgYIuo_mask.png"

|

||||

|

||||

init_image = download_image(img_url).resize((512, 512))

|

||||

mask_image = download_image(mask_url).resize((512, 512))

|

||||

|

||||

pipe = StableDiffusionInpaintPipeline.from_pretrained(

|

||||

"runwayml/stable-diffusion-inpainting",

|

||||

torch_dtype=torch.float16,

|

||||

)

|

||||

pipe = pipe.to("cuda")

|

||||

|

||||

prompt = "Face of a yellow cat, high resolution, sitting on a park bench"

|

||||

image = pipe(prompt=prompt, image=init_image, mask_image=mask_image).images[0]

|

||||

```

|

||||

|

||||

You can also run this example on Colab: [Open in Colab](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/in_painting_with_stable_diffusion_using_diffusers.ipynb)

|

||||

|

||||

@@ -52,14 +52,6 @@ image = pipe(prompt).images[0]

|

||||

image.save("dolomites.png")

|

||||

```

|

||||

|

||||

<Tip>

|

||||

|

||||

When calling this pipeline, you can set `view_batch_size` to a value greater than 1.

|

||||

On some high-performance GPUs, a higher `view_batch_size` can speed up the generation

|

||||

and increase the VRAM usage.

|

||||

|

||||

</Tip>
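
The effect of `view_batch_size` can be pictured as grouping the panorama's views into chunks that are processed together. The sketch below shows only that grouping, under the assumption that views are handled in consecutive chunks; `batch_views` is a hypothetical helper and the integer "views" stand in for the real crop coordinates.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def batch_views(views: Sequence[T], view_batch_size: int) -> List[List[T]]:
    """Split a sequence of views into consecutive batches."""
    if view_batch_size < 1:
        raise ValueError("view_batch_size must be at least 1")
    return [
        list(views[i : i + view_batch_size])
        for i in range(0, len(views), view_batch_size)
    ]


views = list(range(5))
one_at_a_time = batch_views(views, 1)  # five batches, lowest memory use
in_pairs = batch_views(views, 2)       # three batches, the last one partial
```

Fewer, larger batches mean fewer forward passes but more memory per pass, which is the trade-off the tip describes.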

|

||||

|

||||

## StableDiffusionPanoramaPipeline

|

||||

[[autodoc]] StableDiffusionPanoramaPipeline

|

||||

- __call__

|

||||

@@ -1,204 +0,0 @@

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

http://www.apache.org/licenses/LICENSE-2.0

|

||||

|

||||

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on

|

||||

an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the

|

||||

specific language governing permissions and limitations under the License.

|

||||

-->

|

||||

|

||||

# UniDiffuser

|

||||

|

||||

The UniDiffuser model was proposed in [One Transformer Fits All Distributions in Multi-Modal Diffusion at Scale](https://arxiv.org/abs/2303.06555) by Fan Bao, Shen Nie, Kaiwen Xue, Chongxuan Li, Shi Pu, Yaole Wang, Gang Yue, Yue Cao, Hang Su, Jun Zhu.

|

||||

|

||||

The abstract of the [paper](https://arxiv.org/abs/2303.06555) is the following:

|

||||

|

||||

*This paper proposes a unified diffusion framework (dubbed UniDiffuser) to fit all distributions relevant to a set of multi-modal data in one model. Our key insight is -- learning diffusion models for marginal, conditional, and joint distributions can be unified as predicting the noise in the perturbed data, where the perturbation levels (i.e. timesteps) can be different for different modalities. Inspired by the unified view, UniDiffuser learns all distributions simultaneously with a minimal modification to the original diffusion model -- perturbs data in all modalities instead of a single modality, inputs individual timesteps in different modalities, and predicts the noise of all modalities instead of a single modality. UniDiffuser is parameterized by a transformer for diffusion models to handle input types of different modalities. Implemented on large-scale paired image-text data, UniDiffuser is able to perform image, text, text-to-image, image-to-text, and image-text pair generation by setting proper timesteps without additional overhead. In particular, UniDiffuser is able to produce perceptually realistic samples in all tasks and its quantitative results (e.g., the FID and CLIP score) are not only superior to existing general-purpose models but also comparable to the bespoken models (e.g., Stable Diffusion and DALL-E 2) in representative tasks (e.g., text-to-image generation).*

|

||||

|

||||

Resources:

|

||||

|

||||

* [Paper](https://arxiv.org/abs/2303.06555).

|

||||

* [Original Code](https://github.com/thu-ml/unidiffuser).

|

||||

|

||||

Available Checkpoints are:

|

||||

- *UniDiffuser-v0 (512x512 resolution)* [thu-ml/unidiffuser-v0](https://huggingface.co/thu-ml/unidiffuser-v0)

|

||||

- *UniDiffuser-v1 (512x512 resolution)* [thu-ml/unidiffuser-v1](https://huggingface.co/thu-ml/unidiffuser-v1)

|

||||

|

||||

This pipeline was contributed by our community member [dg845](https://github.com/dg845).

|

||||

|

||||

## Available Pipelines:

|

||||

|

||||

| Pipeline | Tasks | Demo | Colab |

|

||||

|:---:|:---:|:---:|:---:|

|

||||

| [UniDiffuserPipeline](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/pipeline_unidiffuser.py) | *Joint Image-Text Gen*, *Text-to-Image*, *Image-to-Text*,<br> *Image Gen*, *Text Gen*, *Image Variation*, *Text Variation* | [🤗 Spaces](https://huggingface.co/spaces/thu-ml/unidiffuser) | [](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/unidiffuser.ipynb) |

|

||||

|

||||

## Usage Examples

|

||||

|

||||

Because the UniDiffuser model is trained to model the joint distribution of (image, text) pairs, it is capable of performing a diverse range of generation tasks.

|

||||

|

||||

### Unconditional Image and Text Generation

|

||||

|

||||

Unconditional generation (where we start from only latents sampled from a standard Gaussian prior) from a [`UniDiffuserPipeline`] will produce an (image, text) pair:

|

||||

|

||||

```python

|

||||

import torch

|

||||

|

||||

from diffusers import UniDiffuserPipeline

|

||||

|

||||

device = "cuda"

|

||||

model_id_or_path = "thu-ml/unidiffuser-v1"

|

||||

pipe = UniDiffuserPipeline.from_pretrained(model_id_or_path, torch_dtype=torch.float16)

|

||||

pipe.to(device)

|

||||

|

||||

# Unconditional image and text generation. The generation task is automatically inferred.

|

||||

sample = pipe(num_inference_steps=20, guidance_scale=8.0)

|

||||

image = sample.images[0]

|

||||

text = sample.text[0]

|

||||

image.save("unidiffuser_joint_sample_image.png")

|

||||

print(text)

|

||||

```

|

||||

|

||||

This is also called "joint" generation in the UniDiffuser paper, since we are sampling from the joint image-text distribution.

|

||||

|

||||

Note that the generation task is inferred from the inputs used when calling the pipeline.

|

||||

It is also possible to specify the unconditional generation task ("mode") manually with [`UniDiffuserPipeline.set_joint_mode`]:

|

||||

|

||||

```python

|

||||

# Equivalent to the above.

|

||||

pipe.set_joint_mode()

|

||||

sample = pipe(num_inference_steps=20, guidance_scale=8.0)

|

||||

```

|

||||

|

||||

When the mode is set manually, subsequent calls to the pipeline will use the set mode without attempting to infer the mode.

|

||||

You can reset the mode with [`UniDiffuserPipeline.reset_mode`], after which the pipeline will once again infer the mode.
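
The interplay between inferred and manually set modes can be sketched in a few lines. `ModeSketch` below is a hypothetical, standard-library-only class, not the `UniDiffuserPipeline` implementation; the mode labels and the rule that a prompt implies text-to-image while an image implies image-to-text are assumptions made for illustration.

```python
from typing import Optional


class ModeSketch:
    """Hypothetical model of 'infer the mode unless one was set manually'."""

    def __init__(self) -> None:
        self._manual_mode: Optional[str] = None

    def set_joint_mode(self) -> None:
        # A manually set mode sticks for all later calls.
        self._manual_mode = "joint"

    def reset_mode(self) -> None:
        # Go back to inferring the mode from the inputs.
        self._manual_mode = None

    def resolve(self, prompt: Optional[str] = None, image: Optional[object] = None) -> str:
        if self._manual_mode is not None:
            return self._manual_mode
        if prompt is not None and image is not None:
            raise ValueError("this sketch expects at most one conditioning input")
        if prompt is not None:
            return "text2img"  # assumed label for text-conditioned generation
        if image is not None:
            return "img2text"  # assumed label for image-conditioned generation
        return "joint"         # no inputs: unconditional image-text pair


modes = ModeSketch()
inferred = modes.resolve()                      # no inputs
modes.set_joint_mode()
forced = modes.resolve(prompt="an astronaut")   # manual mode wins
modes.reset_mode()
after_reset = modes.resolve(prompt="an astronaut")
```

After `reset_mode`, the same prompt resolves differently because the mode is inferred again instead of being forced.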

|

||||

|

||||