mirror of

https://github.com/huggingface/diffusers.git

synced 2026-02-26 21:00:41 +08:00

Compare commits

106 Commits

pin_depend

...

v0.15.1-pa

| Author | SHA1 | Date | |
|---|---|---|---|
| | 92bf23aee5 | | |
| | 84a89cc90c | | |
| | f81017300b | | |
| | f0ab5e9da8 | | |
| | d12119e74c | | |
| | e7534542a2 | | |
| | b9b891621e | | |
| | a43934371a | | |
| | caa5884e8a | | |
| | fa736e321d | | |
| | a4b233e5b5 | | |
| | 524535b5f2 | | |
| | 7b2407f4d7 | | |
| | 639f6455b4 | | |
| | 9d7c08f95e | | |
| | dc277501c7 | | |
| | 0df47efee2 | | |
| | 5a7d35e29c | | |
| | 0c72006e3a | | |
| | a89a14fa7a | | |
| | e607a582cf | | |
| | ea39cd7e64 | | |
| | 98c5e5da31 | | |
| | 2d52e81cb9 | | |
| | 52c4d32d41 | | |
| | c6180a311c | | |
| | e3095c5f47 | | |
| | 526827c3d1 | | |
| | cb63febf2e | | |
| | 8c6b47cfde | | |
| | 67ec9cf513 | | |
| | 80bc0c0ced | | |
| | 091a058236 | | |
| | 881a6b58c3 | | |
| | cb9d77af23 | | |
| | 8b451eb63b | | |
| | 8369196703 | | |
| | 4f48476dd6 | | |
| | fbc9a736dd | | |
| | 67c3518f68 | | |
| | ba49272db8 | | |
| | 074d281ae0 | | |
| | 953c9d14eb | | |
| | 85f1c19282 | | |
| | b5d0a9131d | | |
| | 983a7fbfd8 | | |
| | c413353e8e | | |
| | 8db5e5b37d | | |
| | 707341aebe | | |
| | 26b4319ac5 | | |
| | 18ebd57bd8 | | |
| | b6cc050245 | | |
| | 0cbefefac3 | | |
| | 1875c35aeb | | |
| | 1dc856e508 | | |
| | 2cbdc586de | | |
| | dcfa6e1d20 | | |
| | 1c96f82ed9 | | |
| | ce144d6dd0 | | |
| | 8c5c30f3b1 | | |
| | 2de36fae7b | | |
| | e40526431a | | |
| | 24947317a6 | | |
| | 8826bae655 | | |
| | 6e8e1ed77a | | |
| | 37b359b2bd | | |
| | a9477bbdac | | |
| | ee20d1f8b9 | | |
| | 0d0fa2a3e1 | | |
| | 1a6def3ddb | | |
| | 0c63c3839a | | |
| | a87e88b783 | | |
| | a0263b2e5b | | |
| | 62c01d267a | | |
| | f3e72e9e57 | | |
| | 4fd7e97f33 | | |
| | 4a1eae07c7 | | |
| | e329edff7e | | |
| | 3e2d1af867 | | |
| | 715c25d344 | | |
| | 4274a3a915 | | |
| | 7139f0e874 | | |
| | 8c530fc2f6 | | |
| | 723933f5f1 | | |
| | f23d6eb8f2 | | |
| | cd634a8fbb | | |
| | 7447f75b9f | | |
| | a5bdb678c0 | | |
| | c43356267b | | |
| | 89b23d9869 | | |
| | 419660c99b | | |
| | d36103a089 | | |
| | b3c437e009 | | |
| | 7b6caca9eb | | |
| | f3fbf9bfc0 | | |
| | e1144ac20c | | |
| | 1055175a18 | | |
| | 0df4ad541f | | |
| | 51d970d60d | | |
| | a937e1b594 | | |
| | 1d033a95f6 | | |
| | 49609768b4 | | |
| | 9062b2847d | | |
| | b3d5cc4a36 | | |
| | b2021273eb | | |
| | e47459c80f | | |

2

.github/workflows/pr_tests.yml

vendored


@@ -40,7 +40,7 @@ jobs:

|

||||

framework: pytorch_examples

|

||||

runner: docker-cpu

|

||||

image: diffusers/diffusers-pytorch-cpu

|

||||

report: torch_cpu

|

||||

report: torch_example_cpu

|

||||

|

||||

name: ${{ matrix.config.name }}

|

||||

|

||||

|

||||

2

.github/workflows/push_tests_fast.yml

vendored


@@ -38,7 +38,7 @@ jobs:

|

||||

framework: pytorch_examples

|

||||

runner: docker-cpu

|

||||

image: diffusers/diffusers-pytorch-cpu

|

||||

report: torch_cpu

|

||||

report: torch_example_cpu

|

||||

|

||||

name: ${{ matrix.config.name }}

|

||||

|

||||

|

||||

@@ -394,8 +394,15 @@ passes. You should run the tests impacted by your changes like this:

|

||||

```bash

|

||||

$ pytest tests/<TEST_TO_RUN>.py

|

||||

```

|

||||

|

||||

Before you run the tests, please make sure you install the dependencies required for testing. You can do so

|

||||

with this command:

|

||||

|

||||

You can also run the full suite with the following command, but it takes

|

||||

```bash

|

||||

$ pip install -e ".[test]"

|

||||

```

|

||||

|

||||

You can run the full test suite with the following command, but it takes

|

||||

a beefy machine to produce a result in a decent amount of time now that

|

||||

Diffusers has grown a lot. Here is the command for it:

|

||||

|

||||

|

||||

@@ -4,7 +4,7 @@

|

||||

- local: quicktour

|

||||

title: Quicktour

|

||||

- local: stable_diffusion

|

||||

title: Stable Diffusion

|

||||

title: Effective and efficient diffusion

|

||||

- local: installation

|

||||

title: Installation

|

||||

title: Get started

|

||||

@@ -52,6 +52,8 @@

|

||||

title: How to contribute a Pipeline

|

||||

- local: using-diffusers/using_safetensors

|

||||

title: Using safetensors

|

||||

- local: using-diffusers/stable_diffusion_jax_how_to

|

||||

title: Stable Diffusion in JAX/Flax

|

||||

- local: using-diffusers/weighted_prompts

|

||||

title: Weighting Prompts

|

||||

title: Pipelines for Inference

|

||||

@@ -95,6 +97,8 @@

|

||||

title: ONNX

|

||||

- local: optimization/open_vino

|

||||

title: OpenVINO

|

||||

- local: optimization/coreml

|

||||

title: Core ML

|

||||

- local: optimization/mps

|

||||

title: MPS

|

||||

- local: optimization/habana

|

||||

@@ -202,6 +206,8 @@

|

||||

title: Stochastic Karras VE

|

||||

- local: api/pipelines/text_to_video

|

||||

title: Text-to-Video

|

||||

- local: api/pipelines/text_to_video_zero

|

||||

title: Text-to-Video Zero

|

||||

- local: api/pipelines/unclip

|

||||

title: UnCLIP

|

||||

- local: api/pipelines/latent_diffusion_uncond

|

||||

|

||||

@@ -28,3 +28,11 @@ API to load such adapter neural networks via the [`loaders.py` module](https://g

|

||||

### UNet2DConditionLoadersMixin

|

||||

|

||||

[[autodoc]] loaders.UNet2DConditionLoadersMixin

|

||||

|

||||

### TextualInversionLoaderMixin

|

||||

|

||||

[[autodoc]] loaders.TextualInversionLoaderMixin

|

||||

|

||||

### LoraLoaderMixin

|

||||

|

||||

[[autodoc]] loaders.LoraLoaderMixin

|

||||

|

||||

@@ -28,11 +28,11 @@ The abstract of the paper is the following:

|

||||

|

||||

## Tips

|

||||

|

||||

- AltDiffusion is conceptually exactly the same as [Stable Diffusion](./api/pipelines/stable_diffusion/overview).

|

||||

- AltDiffusion is conceptually exactly the same as [Stable Diffusion](./stable_diffusion/overview).

|

||||

|

||||

- *Run AltDiffusion*

|

||||

|

||||

AltDiffusion can be tested very easily with the [`AltDiffusionPipeline`], [`AltDiffusionImg2ImgPipeline`] and the `"BAAI/AltDiffusion-m9"` checkpoint exactly in the same way it is shown in the [Conditional Image Generation Guide](./using-diffusers/conditional_image_generation) and the [Image-to-Image Generation Guide](./using-diffusers/img2img).

|

||||

AltDiffusion can be tested very easily with the [`AltDiffusionPipeline`], [`AltDiffusionImg2ImgPipeline`] and the `"BAAI/AltDiffusion-m9"` checkpoint exactly in the same way it is shown in the [Conditional Image Generation Guide](../../using-diffusers/conditional_image_generation) and the [Image-to-Image Generation Guide](../../using-diffusers/img2img).

|

||||

|

||||

- *How to load and use different schedulers.*

|

||||

|

||||

|

||||
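The link changes in this hunk follow from how relative links resolve against the directory of the page that contains them. A minimal sketch of that resolution, assuming the page sits under `api/pipelines/` in the docs tree (as `text_to_video_zero.mdx` does); the page name and the `resolve_doc_link` helper are hypothetical and not part of any docs tooling:

```python
import posixpath


def resolve_doc_link(page_path: str, link: str) -> str:
    """Resolve a relative link against the directory of the page containing it.

    Hypothetical helper for illustration; paths are relative to the docs root.
    """
    page_dir = posixpath.dirname(page_path)
    return posixpath.normpath(posixpath.join(page_dir, link))


# Assumed page location, relative to the docs root.
page = "api/pipelines/alt_diffusion"

# Old link: repeats the "api/pipelines" prefix, so the prefix is doubled.
old_target = resolve_doc_link(page, "./api/pipelines/stable_diffusion/overview")
# New link: resolves next to the current page.
new_target = resolve_doc_link(page, "./stable_diffusion/overview")
# Guides outside "api/pipelines" need two "../" steps to reach the docs root.
guide_target = resolve_doc_link(page, "../../using-diffusers/img2img")

print(old_target)
print(new_target)
print(guide_target)
```

Under these assumptions the old link lands on a doubled `api/pipelines/api/pipelines/...` path, which is why the hunk shortens it.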

@@ -83,6 +83,7 @@ available a colab notebook to directly try them out.

|

||||

| [versatile_diffusion](./versatile_diffusion) | [Versatile Diffusion: Text, Images and Variations All in One Diffusion Model](https://arxiv.org/abs/2211.08332) | Image Variations Generation |

|

||||

| [versatile_diffusion](./versatile_diffusion) | [Versatile Diffusion: Text, Images and Variations All in One Diffusion Model](https://arxiv.org/abs/2211.08332) | Dual Image and Text Guided Generation |

|

||||

| [vq_diffusion](./vq_diffusion) | [Vector Quantized Diffusion Model for Text-to-Image Synthesis](https://arxiv.org/abs/2111.14822) | Text-to-Image Generation |

|

||||

| [text_to_video_zero](./text_to_video_zero) | [Text2Video-Zero: Text-to-Image Diffusion Models are Zero-Shot Video Generators](https://arxiv.org/abs/2303.13439) | Text-to-Video Generation |

|

||||

|

||||

|

||||

**Note**: Pipelines are simple examples of how to play around with the diffusion systems as described in the corresponding papers.

|

||||

|

||||

@@ -24,11 +24,11 @@ The abstract of the paper is the following:

|

||||

|

||||

| Pipeline | Tasks | Colab | Demo

|

||||

|---|---|:---:|:---:|

|

||||

| [pipeline_semantic_stable_diffusion.py](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/semantic_stable_diffusion/pipeline_semantic_stable_diffusion) | *Text-to-Image Generation* | [](https://colab.research.google.com/github/ml-research/semantic-image-editing/blob/main/examples/SemanticGuidance.ipynb) | [Coming Soon](https://huggingface.co/AIML-TUDA)

|

||||

| [pipeline_semantic_stable_diffusion.py](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/semantic_stable_diffusion/pipeline_semantic_stable_diffusion.py) | *Text-to-Image Generation* | [](https://colab.research.google.com/github/ml-research/semantic-image-editing/blob/main/examples/SemanticGuidance.ipynb) | [Coming Soon](https://huggingface.co/AIML-TUDA)

|

||||

|

||||

## Tips

|

||||

|

||||

- The Semantic Guidance pipeline can be used with any [Stable Diffusion](./api/pipelines/stable_diffusion/text2img) checkpoint.

|

||||

- The Semantic Guidance pipeline can be used with any [Stable Diffusion](./stable_diffusion/text2img) checkpoint.

|

||||

|

||||

### Run Semantic Guidance

|

||||

|

||||

@@ -67,7 +67,7 @@ out = pipe(

|

||||

)

|

||||

```

|

||||

|

||||

For more examples check the colab notebook.

|

||||

For more examples check the Colab notebook.

|

||||

|

||||

## SemanticStableDiffusionPipelineOutput

|

||||

[[autodoc]] pipelines.semantic_stable_diffusion.SemanticStableDiffusionPipelineOutput

|

||||

|

||||

@@ -30,7 +30,7 @@ As depicted above the model takes as input a MIDI file and tokenizes it into a s

|

||||

|

||||

| Pipeline | Tasks | Colab

|

||||

|---|---|:---:|

|

||||

| [pipeline_spectrogram_diffusion.py](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/spectrogram_diffusion/pipeline_spectrogram_diffusion) | *Unconditional Audio Generation* | - |

|

||||

| [pipeline_spectrogram_diffusion.py](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/spectrogram_diffusion/pipeline_spectrogram_diffusion.py) | *Unconditional Audio Generation* | - |

|

||||

|

||||

|

||||

## Example usage

|

||||

|

||||

@@ -131,7 +131,7 @@ This should take only around 3-4 seconds on GPU (depending on hardware). The out

|

||||

|

||||

|

||||

|

||||

**Note**: To see how to run all other ControlNet checkpoints, please have a look at [ControlNet with Stable Diffusion 1.5](#controlnet-with-stable-diffusion-1.5)

|

||||

**Note**: To see how to run all other ControlNet checkpoints, please have a look at [ControlNet with Stable Diffusion 1.5](#controlnet-with-stable-diffusion-1.5).

|

||||

|

||||

<!-- TODO: add space -->

|

||||

|

||||

|

||||

@@ -14,7 +14,7 @@ specific language governing permissions and limitations under the License.

|

||||

|

||||

## StableDiffusionImageVariationPipeline

|

||||

|

||||

[`StableDiffusionImageVariationPipeline`] lets you generate variations from an input image using Stable Diffusion. It uses a fine-tuned version of Stable Diffusion model, trained by [Justin Pinkney](https://www.justinpinkney.com/) (@Buntworthy) at [Lambda](https://lambdalabs.com/)

|

||||

[`StableDiffusionImageVariationPipeline`] lets you generate variations from an input image using Stable Diffusion. It uses a fine-tuned version of Stable Diffusion model, trained by [Justin Pinkney](https://www.justinpinkney.com/) (@Buntworthy) at [Lambda](https://lambdalabs.com/).

|

||||

|

||||

The original codebase can be found here:

|

||||

[Stable Diffusion Image Variations](https://github.com/LambdaLabsML/lambda-diffusers#stable-diffusion-image-variations)

|

||||

@@ -28,4 +28,4 @@ Available Checkpoints are:

|

||||

- enable_attention_slicing

|

||||

- disable_attention_slicing

|

||||

- enable_xformers_memory_efficient_attention

|

||||

- disable_xformers_memory_efficient_attention

|

||||

- disable_xformers_memory_efficient_attention

|

||||

|

||||

@@ -14,25 +14,26 @@ specific language governing permissions and limitations under the License.

|

||||

|

||||

## Overview

|

||||

|

||||

[Self-Attention Guidance](https://arxiv.org/abs/2210.00939) by Susung Hong et al.

|

||||

[Improving Sample Quality of Diffusion Models Using Self-Attention Guidance](https://arxiv.org/abs/2210.00939) by Susung Hong et al.

|

||||

|

||||

The abstract of the paper is the following:

|

||||

|

||||

*Denoising diffusion models (DDMs) have been drawing much attention for their appreciable sample quality and diversity. Despite their remarkable performance, DDMs remain black boxes on which further study is necessary to take a profound step. Motivated by this, we delve into the design of conventional U-shaped diffusion models. More specifically, we investigate the self-attention modules within these models through carefully designed experiments and explore their characteristics. In addition, inspired by the studies that substantiate the effectiveness of the guidance schemes, we present plug-and-play diffusion guidance, namely Self-Attention Guidance (SAG), that can drastically boost the performance of existing diffusion models. Our method, SAG, extracts the intermediate attention map from a diffusion model at every iteration and selects tokens above a certain attention score for masking and blurring to obtain a partially blurred input. Subsequently, we measure the dissimilarity between the predicted noises obtained from feeding the blurred and original input to the diffusion model and leverage it as guidance. With this guidance, we observe apparent improvements in a wide range of diffusion models, e.g., ADM, IDDPM, and Stable Diffusion, and show that the results further improve by combining our method with the conventional guidance scheme. We provide extensive ablation studies to verify our choices.*

|

||||

*Denoising diffusion models (DDMs) have attracted attention for their exceptional generation quality and diversity. This success is largely attributed to the use of class- or text-conditional diffusion guidance methods, such as classifier and classifier-free guidance. In this paper, we present a more comprehensive perspective that goes beyond the traditional guidance methods. From this generalized perspective, we introduce novel condition- and training-free strategies to enhance the quality of generated images. As a simple solution, blur guidance improves the suitability of intermediate samples for their fine-scale information and structures, enabling diffusion models to generate higher quality samples with a moderate guidance scale. Improving upon this, Self-Attention Guidance (SAG) uses the intermediate self-attention maps of diffusion models to enhance their stability and efficacy. Specifically, SAG adversarially blurs only the regions that diffusion models attend to at each iteration and guides them accordingly. Our experimental results show that our SAG improves the performance of various diffusion models, including ADM, IDDPM, Stable Diffusion, and DiT. Moreover, combining SAG with conventional guidance methods leads to further improvement.*

|

||||

|

||||

Resources:

|

||||

|

||||

* [Project Page](https://ku-cvlab.github.io/Self-Attention-Guidance).

|

||||

* [Paper](https://arxiv.org/abs/2210.00939).

|

||||

* [Original Code](https://github.com/KU-CVLAB/Self-Attention-Guidance).

|

||||

* [Demo](https://colab.research.google.com/github/SusungHong/Self-Attention-Guidance/blob/main/SAG_Stable.ipynb).

|

||||

* [Hugging Face Demo](https://huggingface.co/spaces/susunghong/Self-Attention-Guidance).

|

||||

* [Colab Demo](https://colab.research.google.com/github/SusungHong/Self-Attention-Guidance/blob/main/SAG_Stable.ipynb).

|

||||

|

||||

|

||||

## Available Pipelines:

|

||||

|

||||

| Pipeline | Tasks | Demo

|

||||

|---|---|:---:|

|

||||

| [StableDiffusionSAGPipeline](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/stable_diffusion/pipeline_stable_diffusion_sag.py) | *Text-to-Image Generation* | [Colab](https://colab.research.google.com/github/SusungHong/Self-Attention-Guidance/blob/main/SAG_Stable.ipynb) |

|

||||

| [StableDiffusionSAGPipeline](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/stable_diffusion/pipeline_stable_diffusion_sag.py) | *Text-to-Image Generation* | [🤗 Space](https://huggingface.co/spaces/susunghong/Self-Attention-Guidance) |

|

||||

|

||||

## Usage example

|

||||

|

||||

|

||||

@@ -28,11 +28,11 @@ The abstract of the paper is the following:

|

||||

|

||||

## Tips

|

||||

|

||||

- Safe Stable Diffusion may also be used with weights of [Stable Diffusion](./api/pipelines/stable_diffusion/text2img).

|

||||

- Safe Stable Diffusion may also be used with weights of [Stable Diffusion](./stable_diffusion/text2img).

|

||||

|

||||

### Run Safe Stable Diffusion

|

||||

|

||||

Safe Stable Diffusion can be tested very easily with the [`StableDiffusionPipelineSafe`], and the `"AIML-TUDA/stable-diffusion-safe"` checkpoint exactly in the same way it is shown in the [Conditional Image Generation Guide](./using-diffusers/conditional_image_generation).

|

||||

Safe Stable Diffusion can be tested very easily with the [`StableDiffusionPipelineSafe`], and the `"AIML-TUDA/stable-diffusion-safe"` checkpoint exactly in the same way it is shown in the [Conditional Image Generation Guide](../../using-diffusers/conditional_image_generation).

|

||||

|

||||

### Interacting with the Safety Concept

|

||||

|

||||

|

||||

@@ -32,12 +32,50 @@ we do not add any additional noise to the image embeddings i.e. `noise_level = 0

|

||||

* [stabilityai/stable-diffusion-2-1-unclip](https://hf.co/stabilityai/stable-diffusion-2-1-unclip)

|

||||

* [stabilityai/stable-diffusion-2-1-unclip-small](https://hf.co/stabilityai/stable-diffusion-2-1-unclip-small)

|

||||

* Text-to-image

|

||||

* Coming soon!

|

||||

* [stabilityai/stable-diffusion-2-1-unclip-small](https://hf.co/stabilityai/stable-diffusion-2-1-unclip-small)

|

||||

|

||||

### Text-to-Image Generation

|

||||

Stable unCLIP can be leveraged for text-to-image generation by pipelining it with the prior model of KakaoBrain's open source DALL-E 2 replication [Karlo](https://huggingface.co/kakaobrain/karlo-v1-alpha)

|

||||

|

||||

Coming soon!

|

||||

```python
import torch
from diffusers import UnCLIPScheduler, DDPMScheduler, StableUnCLIPPipeline
from diffusers.models import PriorTransformer
from transformers import CLIPTokenizer, CLIPTextModelWithProjection

prior_model_id = "kakaobrain/karlo-v1-alpha"
data_type = torch.float16
prior = PriorTransformer.from_pretrained(prior_model_id, subfolder="prior", torch_dtype=data_type)

prior_text_model_id = "openai/clip-vit-large-patch14"
prior_tokenizer = CLIPTokenizer.from_pretrained(prior_text_model_id)
prior_text_model = CLIPTextModelWithProjection.from_pretrained(prior_text_model_id, torch_dtype=data_type)
prior_scheduler = UnCLIPScheduler.from_pretrained(prior_model_id, subfolder="prior_scheduler")
prior_scheduler = DDPMScheduler.from_config(prior_scheduler.config)

stable_unclip_model_id = "stabilityai/stable-diffusion-2-1-unclip-small"

pipe = StableUnCLIPPipeline.from_pretrained(
    stable_unclip_model_id,
    torch_dtype=data_type,
    variant="fp16",
    prior_tokenizer=prior_tokenizer,
    prior_text_encoder=prior_text_model,
    prior=prior,
    prior_scheduler=prior_scheduler,
)

pipe = pipe.to("cuda")
wave_prompt = "dramatic wave, the Oceans roar, Strong wave spiral across the oceans as the waves unfurl into roaring crests; perfect wave form; perfect wave shape; dramatic wave shape; wave shape unbelievable; wave; wave shape spectacular"

images = pipe(prompt=wave_prompt).images
images[0].save("waves.png")
```

|

||||

<Tip warning={true}>

|

||||

|

||||

For text-to-image we use `stabilityai/stable-diffusion-2-1-unclip-small` as it was trained on CLIP ViT-L/14 embedding, the same as the Karlo model prior. [stabilityai/stable-diffusion-2-1-unclip](https://hf.co/stabilityai/stable-diffusion-2-1-unclip) was trained on OpenCLIP ViT-H, so we don't recommend its use.

|

||||

|

||||

</Tip>

|

||||

|

||||

### Text guided Image-to-Image Variation

|

||||

|

||||

|

||||

240

docs/source/en/api/pipelines/text_to_video_zero.mdx

Normal file


@@ -0,0 +1,240 @@

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

http://www.apache.org/licenses/LICENSE-2.0

|

||||

|

||||

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on

|

||||

an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the

|

||||

specific language governing permissions and limitations under the License.

|

||||

-->

|

||||

|

||||

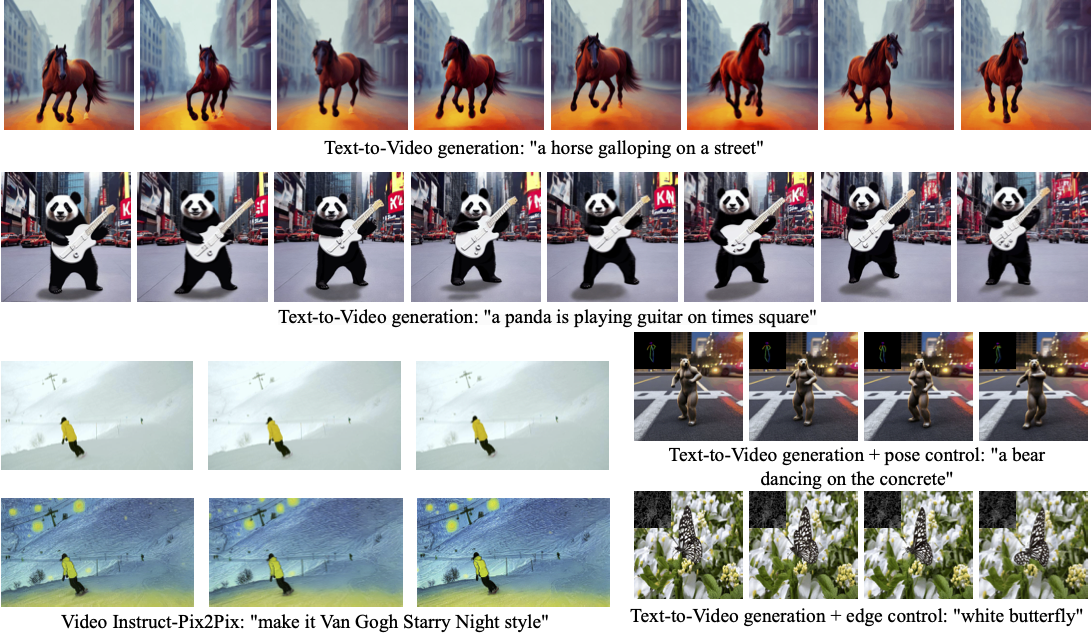

# Zero-Shot Text-to-Video Generation

|

||||

|

||||

## Overview

|

||||

|

||||

|

||||

[Text2Video-Zero: Text-to-Image Diffusion Models are Zero-Shot Video Generators](https://arxiv.org/abs/2303.13439) by

|

||||

Levon Khachatryan,

|

||||

Andranik Movsisyan,

|

||||

Vahram Tadevosyan,

|

||||

Roberto Henschel,

|

||||

[Zhangyang Wang](https://www.ece.utexas.edu/people/faculty/atlas-wang), Shant Navasardyan, [Humphrey Shi](https://www.humphreyshi.com).

|

||||

|

||||

Our method Text2Video-Zero enables zero-shot video generation using either

|

||||

1. A textual prompt, or

|

||||

2. A prompt combined with guidance from poses or edges, or

|

||||

3. Video Instruct-Pix2Pix, i.e., instruction-guided video editing.

|

||||

|

||||

Results are temporally consistent and follow closely the guidance and textual prompts.

|

||||

|

||||

|

||||

|

||||

The abstract of the paper is the following:

|

||||

|

||||

*Recent text-to-video generation approaches rely on computationally heavy training and require large-scale video datasets. In this paper, we introduce a new task of zero-shot text-to-video generation and propose a low-cost approach (without any training or optimization) by leveraging the power of existing text-to-image synthesis methods (e.g., Stable Diffusion), making them suitable for the video domain.

|

||||

Our key modifications include (i) enriching the latent codes of the generated frames with motion dynamics to keep the global scene and the background time consistent; and (ii) reprogramming frame-level self-attention using a new cross-frame attention of each frame on the first frame, to preserve the context, appearance, and identity of the foreground object.

|

||||

Experiments show that this leads to low overhead, yet high-quality and remarkably consistent video generation. Moreover, our approach is not limited to text-to-video synthesis but is also applicable to other tasks such as conditional and content-specialized video generation, and Video Instruct-Pix2Pix, i.e., instruction-guided video editing.

|

||||

As experiments show, our method performs comparably or sometimes better than recent approaches, despite not being trained on additional video data.*

|

||||

|

||||

|

||||

|

||||

Resources:

|

||||

|

||||

* [Project Page](https://text2video-zero.github.io/)

|

||||

* [Paper](https://arxiv.org/abs/2303.13439)

|

||||

* [Original Code](https://github.com/Picsart-AI-Research/Text2Video-Zero)

|

||||

|

||||

|

||||

## Available Pipelines:

|

||||

|

||||

| Pipeline | Tasks | Demo |
|---|---|:---:|
| [TextToVideoZeroPipeline](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/text_to_video_synthesis/pipeline_text_to_video_zero.py) | *Zero-shot Text-to-Video Generation* | [🤗 Space](https://huggingface.co/spaces/PAIR/Text2Video-Zero) |

|

||||

|

||||

|

||||

## Usage example

|

||||

|

||||

### Text-To-Video

|

||||

|

||||

To generate a video from a prompt, run the following Python code:

```python
import torch
import imageio
from diffusers import TextToVideoZeroPipeline

model_id = "runwayml/stable-diffusion-v1-5"
pipe = TextToVideoZeroPipeline.from_pretrained(model_id, torch_dtype=torch.float16).to("cuda")

prompt = "A panda is playing guitar on times square"
result = pipe(prompt=prompt).images
result = [(r * 255).astype("uint8") for r in result]
imageio.mimsave("video.mp4", result, fps=4)
```

|

||||

You can change these parameters in the pipeline call:

* Motion field strength (see the [paper](https://arxiv.org/abs/2303.13439), Sect. 3.3.1):
    * `motion_field_strength_x` and `motion_field_strength_y`. Default: `motion_field_strength_x=12`, `motion_field_strength_y=12`
* `T` and `T'` (see the [paper](https://arxiv.org/abs/2303.13439), Sect. 3.3.1):
    * `t0` and `t1` in the range `{0, ..., num_inference_steps}`. Default: `t0=45`, `t1=48`
* Video length:
    * `video_length`, the number of frames to be generated. Default: `video_length=8`
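The parameters above can be collected into keyword arguments before calling the pipeline. The following is a minimal sketch, assuming a hypothetical helper `build_t2v_zero_kwargs` (not part of diffusers) and an assumed `num_inference_steps=50`, which the text above does not state. Only the range check on `t0` and `t1` comes from the list above.

```python
def build_t2v_zero_kwargs(
    motion_field_strength_x=12,
    motion_field_strength_y=12,
    t0=45,
    t1=48,
    video_length=8,
    num_inference_steps=50,  # assumption: not stated in the text above
):
    """Collect the tunable parameters listed above into one kwargs dict.

    Hypothetical helper for illustration; it is not part of diffusers.
    """
    # The list above says t0 and t1 must lie in {0, ..., num_inference_steps}.
    for name, value in (("t0", t0), ("t1", t1)):
        if not 0 <= value <= num_inference_steps:
            raise ValueError(f"{name}={value} is outside 0..{num_inference_steps}")
    if video_length < 1:
        raise ValueError("video_length must be at least 1 frame")
    return {
        "motion_field_strength_x": motion_field_strength_x,
        "motion_field_strength_y": motion_field_strength_y,
        "t0": t0,
        "t1": t1,
        "video_length": video_length,
        "num_inference_steps": num_inference_steps,
    }


kwargs = build_t2v_zero_kwargs(video_length=16)
# With a loaded pipeline, the call would then look like:
# result = pipe(prompt=prompt, **kwargs).images
```

Validating the range up front gives a clearer error than a failure deep inside the denoising loop.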

|

||||

|

||||

|

||||

### Text-To-Video with Pose Control

|

||||

To generate a video from a prompt with additional pose control:

|

||||

|

||||

1. Download a demo video

|

||||

|

||||

```python
from huggingface_hub import hf_hub_download

filename = "__assets__/poses_skeleton_gifs/dance1_corr.mp4"
repo_id = "PAIR/Text2Video-Zero"
video_path = hf_hub_download(repo_type="space", repo_id=repo_id, filename=filename)
```

|

||||

|

||||

|

||||

2. Read video containing extracted pose images

|

||||

```python
from PIL import Image
import imageio

reader = imageio.get_reader(video_path, "ffmpeg")
frame_count = 8
pose_images = [Image.fromarray(reader.get_data(i)) for i in range(frame_count)]
```

|

||||

To extract pose from actual video, read [ControlNet documentation](./stable_diffusion/controlnet).

|

||||

|

||||

3. Run `StableDiffusionControlNetPipeline` with our custom attention processor

|

||||

|

||||

```python
import torch
from diffusers import StableDiffusionControlNetPipeline, ControlNetModel
from diffusers.pipelines.text_to_video_synthesis.pipeline_text_to_video_zero import CrossFrameAttnProcessor

model_id = "runwayml/stable-diffusion-v1-5"
controlnet = ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-openpose", torch_dtype=torch.float16)
pipe = StableDiffusionControlNetPipeline.from_pretrained(
    model_id, controlnet=controlnet, torch_dtype=torch.float16
).to("cuda")

# Set the attention processor
pipe.unet.set_attn_processor(CrossFrameAttnProcessor(batch_size=2))
pipe.controlnet.set_attn_processor(CrossFrameAttnProcessor(batch_size=2))

# fix latents for all frames
latents = torch.randn((1, 4, 64, 64), device="cuda", dtype=torch.float16).repeat(len(pose_images), 1, 1, 1)

prompt = "Darth Vader dancing in a desert"
result = pipe(prompt=[prompt] * len(pose_images), image=pose_images, latents=latents).images
imageio.mimsave("video.mp4", result, fps=4)
```
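The `(1, 4, 64, 64)` latent in the block above, repeated once per frame, is consistent with a 512x512 Stable Diffusion v1 image: 4 latent channels and an 8x spatial downsampling by the VAE. A small sketch of that arithmetic, using a hypothetical `latent_batch_shape` helper (not a diffusers function) and assuming 512x512 output:

```python
def latent_batch_shape(num_frames, height=512, width=512, channels=4, vae_scale_factor=8):
    """Return the latent batch shape for `num_frames` frames.

    Hypothetical helper; the defaults assume Stable Diffusion v1 at 512x512.
    """
    if height % vae_scale_factor or width % vae_scale_factor:
        raise ValueError("height and width must be divisible by the VAE scale factor")
    return (num_frames, channels, height // vae_scale_factor, width // vae_scale_factor)


# Eight pose frames, as read in step 2 above.
shape = latent_batch_shape(num_frames=8)
print(shape)
```

Drawing one latent and repeating it, rather than sampling a new one per frame, gives every frame the same starting noise.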

|

||||

|

||||

|

||||

### Text-To-Video with Edge Control

|

||||

|

||||

To generate a video from a prompt with additional edge control, follow the steps described above for pose-guided generation, using the [Canny edge ControlNet model](https://huggingface.co/lllyasviel/sd-controlnet-canny).

|

||||

|

||||

|

||||

### Video Instruct-Pix2Pix

|

||||

|

||||

To perform text-guided video editing (with [InstructPix2Pix](./stable_diffusion/pix2pix)):

|

||||

|

||||

1. Download a demo video

|

||||

|

||||

```python
from huggingface_hub import hf_hub_download

filename = "__assets__/pix2pix video/camel.mp4"
repo_id = "PAIR/Text2Video-Zero"
video_path = hf_hub_download(repo_type="space", repo_id=repo_id, filename=filename)
```

|

||||

|

||||

2. Read video from path

|

||||

```python
from PIL import Image
import imageio

reader = imageio.get_reader(video_path, "ffmpeg")
frame_count = 8
video = [Image.fromarray(reader.get_data(i)) for i in range(frame_count)]
```

|

||||

|

||||

3. Run `StableDiffusionInstructPix2PixPipeline` with our custom attention processor

|

||||

```python
import torch
from diffusers import StableDiffusionInstructPix2PixPipeline
from diffusers.pipelines.text_to_video_synthesis.pipeline_text_to_video_zero import CrossFrameAttnProcessor

model_id = "timbrooks/instruct-pix2pix"
pipe = StableDiffusionInstructPix2PixPipeline.from_pretrained(model_id, torch_dtype=torch.float16).to("cuda")
pipe.unet.set_attn_processor(CrossFrameAttnProcessor(batch_size=3))

prompt = "make it Van Gogh Starry Night style"
result = pipe(prompt=[prompt] * len(video), image=video).images
imageio.mimsave("edited_video.mp4", result, fps=4)
```
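Cross-frame attention, as described in the abstract above, makes every frame attend to the first frame. The sketch below illustrates only the index bookkeeping, under the assumption that the UNet batch is laid out as `batch_size` consecutive chunks of `video_length` frames each. `first_frame_key_indices` is a hypothetical name, and this is not the library's implementation. The `batch_size` values used in the examples (2 with ControlNet, 3 with InstructPix2Pix) plausibly correspond to the number of guidance branches the UNet processes per frame; that reading is also an assumption.

```python
def first_frame_key_indices(total_items, batch_size):
    """Map each batch item to the index of the first frame in its chunk.

    Hypothetical helper: assumes `total_items` is `batch_size` chunks of
    equal length, each chunk holding the frames of one guidance branch.
    """
    if batch_size < 1 or total_items % batch_size != 0:
        raise ValueError("total_items must be a positive multiple of batch_size")
    video_length = total_items // batch_size
    # Every frame takes its keys/values from frame 0 of the same chunk.
    return [(i // video_length) * video_length for i in range(total_items)]


# Two branches of three frames each.
two_branch = first_frame_key_indices(total_items=6, batch_size=2)
# Three branches of three frames each.
three_branch = first_frame_key_indices(total_items=9, batch_size=3)
print(two_branch)
print(three_branch)
```

Passing a `batch_size` that does not match the real number of chunks would make frames attend to the wrong anchor, which is why the sketch rejects uneven splits.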

|

||||

|

||||

|

||||

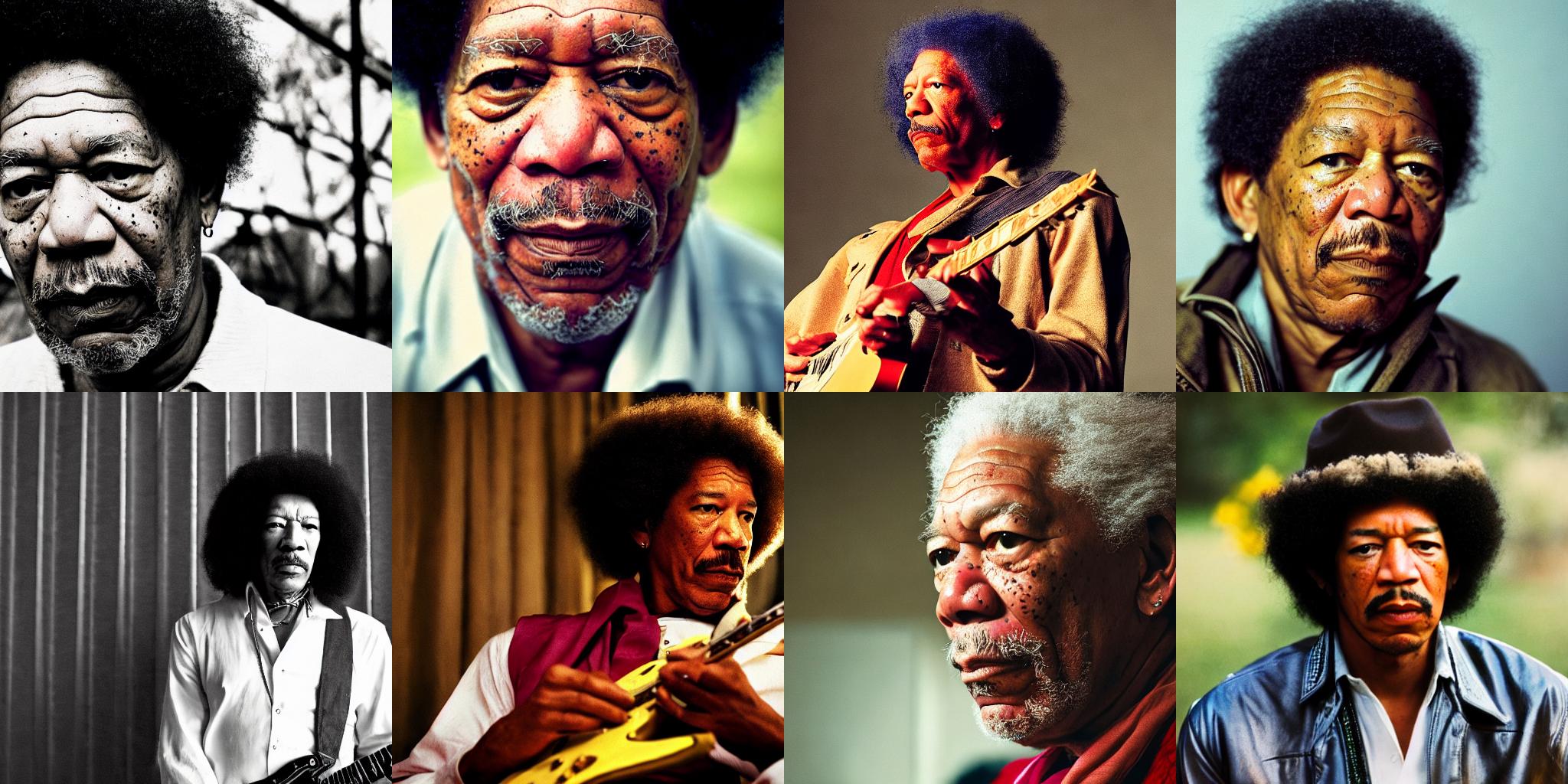

### DreamBooth specialization

|

||||

|

||||

The **Text-To-Video**, **Text-To-Video with Pose Control** and **Text-To-Video with Edge Control** methods
can run with custom [DreamBooth](../../training/dreambooth) models, as shown below for the
[Canny edge ControlNet model](https://huggingface.co/lllyasviel/sd-controlnet-canny) and the
[Avatar style DreamBooth](https://huggingface.co/PAIR/text2video-zero-controlnet-canny-avatar) model:

|

||||

|

||||

1. Download a demo video

|

||||

|

||||

```python
from huggingface_hub import hf_hub_download

filename = "__assets__/canny_videos_mp4/girl_turning.mp4"
repo_id = "PAIR/Text2Video-Zero"
video_path = hf_hub_download(repo_type="space", repo_id=repo_id, filename=filename)
```

|

||||

|

||||

2. Read video from path

|

||||

```python
from PIL import Image
import imageio

reader = imageio.get_reader(video_path, "ffmpeg")
frame_count = 8
video = [Image.fromarray(reader.get_data(i)) for i in range(frame_count)]
```

|

||||

|

||||

3. Run `StableDiffusionControlNetPipeline` with custom trained DreamBooth model

|

||||

```python

|

||||

import torch

|

||||

from diffusers import StableDiffusionControlNetPipeline, ControlNetModel

|

||||

from diffusers.pipelines.text_to_video_synthesis.pipeline_text_to_video_zero import CrossFrameAttnProcessor

|

||||

|

||||

# set model id to custom model

|

||||

model_id = "PAIR/text2video-zero-controlnet-canny-avatar"

|

||||

controlnet = ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-canny", torch_dtype=torch.float16)

|

||||

pipe = StableDiffusionControlNetPipeline.from_pretrained(

|

||||

model_id, controlnet=controlnet, torch_dtype=torch.float16

|

||||

).to("cuda")

|

||||

|

||||

# Set the attention processor

|

||||

pipe.unet.set_attn_processor(CrossFrameAttnProcessor(batch_size=2))

|

||||

pipe.controlnet.set_attn_processor(CrossFrameAttnProcessor(batch_size=2))

|

||||

|

||||

# fix latents for all frames

|

||||

latents = torch.randn((1, 4, 64, 64), device="cuda", dtype=torch.float16).repeat(len(video), 1, 1, 1)

prompt = "oil painting of a beautiful girl avatar style"
# `video` holds the frames read in step 2
result = pipe(prompt=[prompt] * len(video), image=video, latents=latents).images

|

||||

imageio.mimsave("video.mp4", result, fps=4)

|

||||

```

|

||||

|

||||

You can find available DreamBooth-trained models with [this link](https://huggingface.co/models?search=dreambooth).

|

||||

|

||||

|

||||

|

||||

## TextToVideoZeroPipeline

|

||||

[[autodoc]] TextToVideoZeroPipeline

|

||||

- all

|

||||

- __call__

|

||||

@@ -20,7 +20,7 @@ The abstract of the paper is the following:

|

||||

|

||||

## Tips

|

||||

|

||||

- VersatileDiffusion is conceptually very similar as [Stable Diffusion](./api/pipelines/stable_diffusion/overview), but instead of providing just a image data stream conditioned on text, VersatileDiffusion provides both a image and text data stream and can be conditioned on both text and image.

|

||||

- VersatileDiffusion is conceptually very similar as [Stable Diffusion](./stable_diffusion/overview), but instead of providing just a image data stream conditioned on text, VersatileDiffusion provides both a image and text data stream and can be conditioned on both text and image.

|

||||

|

||||

### *Run VersatileDiffusion*

|

||||

|

||||

|

||||

@@ -10,7 +10,7 @@ an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express o

|

||||

specific language governing permissions and limitations under the License.

|

||||

-->

|

||||

|

||||

# Denoising diffusion implicit models (DDIM)

|

||||

# Denoising Diffusion Implicit Models (DDIM)

|

||||

|

||||

## Overview

|

||||

|

||||

@@ -24,4 +24,4 @@ The original codebase of this paper can be found here: [ermongroup/ddim](https:/

|

||||

For questions, feel free to contact the author on [tsong.me](https://tsong.me/).

|

||||

|

||||

## DDIMScheduler

|

||||

[[autodoc]] DDIMScheduler

|

||||

[[autodoc]] DDIMScheduler

|

||||

|

||||

@@ -10,7 +10,7 @@ an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express o

|

||||

specific language governing permissions and limitations under the License.

|

||||

-->

|

||||

|

||||

# Denoising diffusion probabilistic models (DDPM)

|

||||

# Denoising Diffusion Probabilistic Models (DDPM)

|

||||

|

||||

## Overview

|

||||

|

||||

@@ -24,4 +24,4 @@ We present high quality image synthesis results using diffusion probabilistic mo

|

||||

The original paper can be found [here](https://arxiv.org/abs/2006.11239).

|

||||

|

||||

## DDPMScheduler

|

||||

[[autodoc]] DDPMScheduler

|

||||

[[autodoc]] DDPMScheduler

|

||||

|

||||

@@ -14,8 +14,8 @@ specific language governing permissions and limitations under the License.

|

||||

|

||||

## Overview

|

||||

|

||||

Ancestral sampling with Euler method steps. Based on the original (k-diffusion)[https://github.com/crowsonkb/k-diffusion/blob/481677d114f6ea445aa009cf5bd7a9cdee909e47/k_diffusion/sampling.py#L72] implementation by Katherine Crowson.

|

||||

Ancestral sampling with Euler method steps. Based on the original [k-diffusion](https://github.com/crowsonkb/k-diffusion/blob/481677d114f6ea445aa009cf5bd7a9cdee909e47/k_diffusion/sampling.py#L72) implementation by Katherine Crowson.

|

||||

A fast scheduler that often generates good outputs in 20-30 steps.

|

||||

|

||||

## EulerAncestralDiscreteScheduler

|

||||

[[autodoc]] EulerAncestralDiscreteScheduler

|

||||

[[autodoc]] EulerAncestralDiscreteScheduler

|

||||

|

||||

@@ -10,11 +10,11 @@ an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express o

|

||||

specific language governing permissions and limitations under the License.

|

||||

-->

|

||||

|

||||

# variance exploding stochastic differential equation (VE-SDE) scheduler

|

||||

# Variance Exploding Stochastic Differential Equation (VE-SDE) scheduler

|

||||

|

||||

## Overview

|

||||

|

||||

Original paper can be found [here](https://arxiv.org/abs/2011.13456).

|

||||

|

||||

## ScoreSdeVeScheduler

|

||||

[[autodoc]] ScoreSdeVeScheduler

|

||||

[[autodoc]] ScoreSdeVeScheduler

|

||||

|

||||

@@ -10,7 +10,7 @@ an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express o

|

||||

specific language governing permissions and limitations under the License.

|

||||

-->

|

||||

|

||||

# Variance preserving stochastic differential equation (VP-SDE) scheduler

|

||||

# Variance Preserving Stochastic Differential Equation (VP-SDE) scheduler

|

||||

|

||||

## Overview

|

||||

|

||||

@@ -23,4 +23,4 @@ Score SDE-VP is under construction.

|

||||

</Tip>

|

||||

|

||||

## ScoreSdeVpScheduler

|

||||

[[autodoc]] schedulers.scheduling_sde_vp.ScoreSdeVpScheduler

|

||||

[[autodoc]] schedulers.scheduling_sde_vp.ScoreSdeVpScheduler

|

||||

|

||||

@@ -16,7 +16,7 @@ specific language governing permissions and limitations under the License.

|

||||

|

||||

UniPC is a training-free framework designed for the fast sampling of diffusion models, which consists of a corrector (UniC) and a predictor (UniP) that share a unified analytical form and support arbitrary orders.

|

||||

|

||||

For more details about the method, please refer to the [[paper]](https://arxiv.org/abs/2302.04867) and the [[code]](https://github.com/wl-zhao/UniPC).

|

||||

For more details about the method, please refer to the [paper](https://arxiv.org/abs/2302.04867) and the [code](https://github.com/wl-zhao/UniPC).

|

||||

|

||||

Fast Sampling of Diffusion Models with Exponential Integrator.

|

||||

|

||||

|

||||

@@ -170,7 +170,7 @@ please have a look at the next sections.

|

||||

|

||||

For all of the following contributions, you will need to open a PR. The [Opening a pull request](#how-to-open-a-pr) section explains in detail how to do so.

|

||||

|

||||

### 4. Fixing a "Good first issue"

|

||||

### 4. Fixing a `Good first issue`

|

||||

|

||||

*Good first issues* are marked by the [Good first issue](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22good+first+issue%22) label. Usually, the issue already

|

||||

explains how a potential solution should look so that it is easier to fix.

|

||||

@@ -275,7 +275,7 @@ Once an example script works, please make sure to add a comprehensive `README.md

|

||||

|

||||

If you are contributing to the official training examples, please also make sure to add a test to [examples/test_examples.py](https://github.com/huggingface/diffusers/blob/main/examples/test_examples.py). This is not necessary for non-official training examples.

|

||||

|

||||

### 8. Fixing a "Good second issue"

|

||||

### 8. Fixing a `Good second issue`

|

||||

|

||||

*Good second issues* are marked by the [Good second issue](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22Good+second+issue%22) label. Good second issues are

|

||||

usually more complicated to solve than [Good first issues](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22good+first+issue%22).

|

||||

|

||||

@@ -73,7 +73,7 @@ The library has three main components:

|

||||

| [stable_diffusion_pix2pix](./api/pipelines/stable_diffusion/pix2pix) | [InstructPix2Pix: Learning to Follow Image Editing Instructions](https://arxiv.org/abs/2211.09800) | Text-Guided Image Editing|

|

||||

| [stable_diffusion_pix2pix_zero](./api/pipelines/stable_diffusion/pix2pix_zero) | [Zero-shot Image-to-Image Translation](https://pix2pixzero.github.io/) | Text-Guided Image Editing |

|

||||

| [stable_diffusion_attend_and_excite](./api/pipelines/stable_diffusion/attend_and_excite) | [Attend-and-Excite: Attention-Based Semantic Guidance for Text-to-Image Diffusion Models](https://arxiv.org/abs/2301.13826) | Text-to-Image Generation |

|

||||

| [stable_diffusion_self_attention_guidance](./api/pipelines/stable_diffusion/self_attention_guidance) | [Improving Sample Quality of Diffusion Models Using Self-Attention Guidance](https://arxiv.org/abs/2210.00939) | Text-to-Image Generation |

|

||||

| [stable_diffusion_self_attention_guidance](./api/pipelines/stable_diffusion/self_attention_guidance) | [Improving Sample Quality of Diffusion Models Using Self-Attention Guidance](https://arxiv.org/abs/2210.00939) | Text-to-Image Generation Unconditional Image Generation |

|

||||

| [stable_diffusion_image_variation](./stable_diffusion/image_variation) | [Stable Diffusion Image Variations](https://github.com/LambdaLabsML/lambda-diffusers#stable-diffusion-image-variations) | Image-to-Image Generation |

|

||||

| [stable_diffusion_latent_upscale](./stable_diffusion/latent_upscale) | [Stable Diffusion Latent Upscaler](https://twitter.com/StabilityAI/status/1590531958815064065) | Text-Guided Super Resolution Image-to-Image |

|

||||

| [stable_diffusion_model_editing](./api/pipelines/stable_diffusion/model_editing) | [Editing Implicit Assumptions in Text-to-Image Diffusion Models](https://time-diffusion.github.io/) | Text-to-Image Model Editing |

|

||||

@@ -90,4 +90,4 @@ The library has three main components:

|

||||

| [versatile_diffusion](./api/pipelines/versatile_diffusion) | [Versatile Diffusion: Text, Images and Variations All in One Diffusion Model](https://arxiv.org/abs/2211.08332) | Text-to-Image Generation |

|

||||

| [versatile_diffusion](./api/pipelines/versatile_diffusion) | [Versatile Diffusion: Text, Images and Variations All in One Diffusion Model](https://arxiv.org/abs/2211.08332) | Image Variations Generation |

|

||||

| [versatile_diffusion](./api/pipelines/versatile_diffusion) | [Versatile Diffusion: Text, Images and Variations All in One Diffusion Model](https://arxiv.org/abs/2211.08332) | Dual Image and Text Guided Generation |

|

||||

| [vq_diffusion](./api/pipelines/vq_diffusion) | [Vector Quantized Diffusion Model for Text-to-Image Synthesis](https://arxiv.org/abs/2111.14822) | Text-to-Image Generation |

|

||||

| [vq_diffusion](./api/pipelines/vq_diffusion) | [Vector Quantized Diffusion Model for Text-to-Image Synthesis](https://arxiv.org/abs/2111.14822) | Text-to-Image Generation |

|

||||

|

||||

167

docs/source/en/optimization/coreml.mdx

Normal file


@@ -0,0 +1,167 @@

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

http://www.apache.org/licenses/LICENSE-2.0

|

||||

|

||||

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on

|

||||

an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the

|

||||

specific language governing permissions and limitations under the License.

|

||||

-->

|

||||

|

||||

# How to run Stable Diffusion with Core ML

|

||||

|

||||

[Core ML](https://developer.apple.com/documentation/coreml) is the model format and machine learning library supported by Apple frameworks. If you are interested in running Stable Diffusion models inside your macOS or iOS/iPadOS apps, this guide will show you how to convert existing PyTorch checkpoints into the Core ML format and use them for inference with Python or Swift.

|

||||

|

||||

Core ML models can leverage all the compute engines available in Apple devices: the CPU, the GPU, and the Apple Neural Engine (or ANE, a tensor-optimized accelerator available in Apple Silicon Macs and modern iPhones/iPads). Depending on the model and the device it's running on, Core ML can mix and match compute engines too, so some portions of the model may run on the CPU while others run on GPU, for example.

|

||||

|

||||

<Tip>

|

||||

|

||||

You can also run the `diffusers` Python codebase on Apple Silicon Macs using the `mps` accelerator built into PyTorch. This approach is explained in depth in [the mps guide](mps), but it is not compatible with native apps.

|

||||

|

||||

</Tip>

|

||||

|

||||

## Stable Diffusion Core ML Checkpoints

|

||||

|

||||

Stable Diffusion weights (or checkpoints) are stored in the PyTorch format, so you need to convert them to the Core ML format before you can use them inside native apps.

|

||||

|

||||

Thankfully, Apple engineers developed [a conversion tool](https://github.com/apple/ml-stable-diffusion#-converting-models-to-core-ml) based on `diffusers` to convert the PyTorch checkpoints to Core ML.

|

||||

|

||||

Before you convert a model, though, take a moment to explore the Hugging Face Hub – chances are the model you're interested in is already available in Core ML format:

|

||||

|

||||

- the [Apple](https://huggingface.co/apple) organization includes Stable Diffusion versions 1.4, 1.5, 2.0 base, and 2.1 base

|

||||

- the [coreml](https://huggingface.co/coreml) organization includes custom DreamBoothed and finetuned models

|

||||

- use this [filter](https://huggingface.co/models?pipeline_tag=text-to-image&library=coreml&p=2&sort=likes) to return all available Core ML checkpoints

|

||||

|

||||

If you can't find the model you're interested in, we recommend you follow the instructions for [Converting Models to Core ML](https://github.com/apple/ml-stable-diffusion#-converting-models-to-core-ml) by Apple.

|

||||

|

||||

## Selecting the Core ML Variant to Use

|

||||

|

||||

Stable Diffusion models can be converted to different Core ML variants intended for different purposes:

|

||||

|

||||

- The type of attention blocks used. The attention operation is used to "pay attention" to the relationship between different areas in the image representations and to understand how the image and text representations are related. Attention is compute- and memory-intensive, so different implementations exist that consider the hardware characteristics of different devices. For Core ML Stable Diffusion models, there are two attention variants:

|

||||

* `split_einsum` ([introduced by Apple](https://machinelearning.apple.com/research/neural-engine-transformers)) is optimized for the ANE, which is available in modern iPhones, iPads and M-series computers.

|

||||

* The "original" attention (the base implementation used in `diffusers`) is only compatible with CPU/GPU and not ANE. It can be *faster* to run your model on CPU + GPU using `original` attention than on the ANE. See [this performance benchmark](https://huggingface.co/blog/fast-mac-diffusers#performance-benchmarks) as well as some [additional measures provided by the community](https://github.com/huggingface/swift-coreml-diffusers/issues/31) for details.

|

||||

|

||||

- The supported inference framework.

|

||||

* `packages` are suitable for Python inference. This can be used to test converted Core ML models before attempting to integrate them inside native apps, or if you want to explore Core ML performance but don't need to support native apps. For example, an application with a web UI could use a Python Core ML backend without any issue.

|

||||

* `compiled` models are required for Swift code. The `compiled` models in the Hub split the large UNet model weights into several files for compatibility with iOS and iPadOS devices. This corresponds to the [`--chunk-unet` conversion option](https://github.com/apple/ml-stable-diffusion#-converting-models-to-core-ml). If you want to support native apps, then you need to select the `compiled` variant.

|

||||

|

||||

The official Core ML Stable Diffusion [models](https://huggingface.co/apple/coreml-stable-diffusion-v1-4/tree/main) include these variants, but the community ones may vary:

|

||||

|

||||

```

|

||||

coreml-stable-diffusion-v1-4

|

||||

├── README.md

|

||||

├── original

|

||||

│ ├── compiled

|

||||

│ └── packages

|

||||

└── split_einsum

|

||||

├── compiled

|

||||

└── packages

|

||||

```
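As a minimal sketch of how the two choices above combine, the helper below builds the repository sub-folder for a given attention type and target framework. The name `variant_folder` and the `"python"`/`"swift"` keys are hypothetical and not part of `diffusers` or Apple's tooling; only the folder names come from the layout shown above.

```python
# Hypothetical helper: maps the two choices (attention type, inference
# framework) onto the folder layout of the official Core ML repositories.
ATTENTION_TYPES = ("original", "split_einsum")
FRAMEWORK_FOLDERS = {"python": "packages", "swift": "compiled"}


def variant_folder(attention: str, framework: str) -> str:
    """Return the repo sub-folder, for example "original/packages"."""
    if attention not in ATTENTION_TYPES:
        raise ValueError(f"attention must be one of {ATTENTION_TYPES}, got {attention!r}")
    if framework not in FRAMEWORK_FOLDERS:
        raise ValueError(f"framework must be one of {tuple(FRAMEWORK_FOLDERS)}, got {framework!r}")
    return f"{attention}/{FRAMEWORK_FOLDERS[framework]}"


print(variant_folder("split_einsum", "swift"))
```

The returned string has the same shape as the `variant` value used when downloading a snapshot from the Hub.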

|

||||

|

||||

You can download and use the variant you need as shown below.

|

||||

|

||||

## Core ML Inference in Python

|

||||

|

||||

Install the following libraries to run Core ML inference in Python:

|

||||

|

||||

```bash

|

||||

pip install huggingface_hub

|

||||

pip install git+https://github.com/apple/ml-stable-diffusion

|

||||

```

|

||||

|

||||

### Download the Model Checkpoints

|

||||

|

||||

To run inference in Python, use one of the versions stored in the `packages` folders because the `compiled` ones are only compatible with Swift. You may choose whether you want to use `original` or `split_einsum` attention.

|

||||

|

||||

This is how you'd download the `original` attention variant from the Hub to a directory called `models`:

|

||||

|

||||

```Python

|

||||

from huggingface_hub import snapshot_download

|

||||

from pathlib import Path

|

||||

|

||||

repo_id = "apple/coreml-stable-diffusion-v1-4"

|

||||

variant = "original/packages"

|

||||

|

||||

model_path = Path("./models") / (repo_id.split("/")[-1] + "_" + variant.replace("/", "_"))

|

||||

snapshot_download(repo_id, allow_patterns=f"{variant}/*", local_dir=model_path, local_dir_use_symlinks=False)

|

||||

print(f"Model downloaded at {model_path}")

|

||||

```

|

||||

|

||||

|

||||

### Inference[[python-inference]]

|

||||

|

||||

Once you have downloaded a snapshot of the model, you can test it using Apple's Python script.

|

||||

|

||||

```shell

|

||||

python -m python_coreml_stable_diffusion.pipeline --prompt "a photo of an astronaut riding a horse on mars" -i models/coreml-stable-diffusion-v1-4_original_packages -o </path/to/output/image> --compute-unit CPU_AND_GPU --seed 93

|

||||

```

|

||||

|

||||

The `-i` argument should point to the checkpoint you downloaded in the step above, and `--compute-unit` indicates the hardware you want to allow for inference. It must be one of the following options: `ALL`, `CPU_AND_GPU`, `CPU_ONLY`, `CPU_AND_NE`. You may also provide an optional output path (`-o`) and a seed (`--seed`) for reproducibility.
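If you prefer to launch the script from Python, the following sketch assembles the same command as an argument list and validates the compute unit first. `build_inference_command` is a hypothetical helper written for this guide, not part of Apple's package; it only uses the flags that appear in the command above.

```python
import shlex

# Allowed values, as listed in this guide.
COMPUTE_UNITS = ("ALL", "CPU_AND_GPU", "CPU_ONLY", "CPU_AND_NE")


def build_inference_command(prompt, model_dir, output_dir, compute_unit="CPU_AND_GPU", seed=None):
    """Hypothetical helper: return the argument list for Apple's pipeline script."""
    if compute_unit not in COMPUTE_UNITS:
        raise ValueError(f"compute_unit must be one of {COMPUTE_UNITS}, got {compute_unit!r}")
    args = [
        "python", "-m", "python_coreml_stable_diffusion.pipeline",
        "--prompt", prompt,
        "-i", str(model_dir),
        "-o", str(output_dir),
        "--compute-unit", compute_unit,
    ]
    if seed is not None:
        args += ["--seed", str(seed)]
    return args


cmd = build_inference_command(
    "a photo of an astronaut riding a horse on mars",
    "models/coreml-stable-diffusion-v1-4_original_packages",
    "output",
    seed=93,
)
# Print a shell-safe version of the command for inspection.
print(shlex.join(cmd))
```

The resulting list can be passed to `subprocess.run(cmd, check=True)` once Apple's package is installed.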

|

||||

|

||||

The inference script assumes you're using the original version of the Stable Diffusion model, `CompVis/stable-diffusion-v1-4`. If you use another model, you *have* to specify its Hub id in the inference command line, using the `--model-version` option. This works for models already supported and custom models you trained or fine-tuned yourself.

|

||||

|

||||

For example, if you want to use [`runwayml/stable-diffusion-v1-5`](https://huggingface.co/runwayml/stable-diffusion-v1-5):

|

||||

|

||||

```shell

|

||||

python -m python_coreml_stable_diffusion.pipeline --prompt "a photo of an astronaut riding a horse on mars" --compute-unit ALL -o output --seed 93 -i models/coreml-stable-diffusion-v1-5_original_packages --model-version runwayml/stable-diffusion-v1-5

|

||||

```

|

||||

|

||||

|

||||

## Core ML inference in Swift

|

||||

|

||||

Running inference in Swift is slightly faster than in Python because the models are already compiled in the `mlmodelc` format. This is noticeable on app startup when the model is loaded but shouldn’t be noticeable if you run several generations afterward.

|

||||

|

||||

### Download

|

||||

|

||||

To run inference in Swift on your Mac, you need one of the `compiled` checkpoint versions. We recommend you download them locally using Python code similar to the previous example, but with one of the `compiled` variants:

|

||||

|

||||

```Python

|

||||

from huggingface_hub import snapshot_download

|

||||

from pathlib import Path

|

||||

|

||||

repo_id = "apple/coreml-stable-diffusion-v1-4"

|

||||

variant = "original/compiled"

|

||||

|

||||

model_path = Path("./models") / (repo_id.split("/")[-1] + "_" + variant.replace("/", "_"))

|

||||

snapshot_download(repo_id, allow_patterns=f"{variant}/*", local_dir=model_path, local_dir_use_symlinks=False)

|

||||

print(f"Model downloaded at {model_path}")

|

||||

```

|

||||

|

||||

### Inference[[swift-inference]]

|

||||

|

||||

To run inference, please clone Apple's repo:

|

||||

|

||||

```bash

|

||||

git clone https://github.com/apple/ml-stable-diffusion

|

||||

cd ml-stable-diffusion

|

||||

```

|

||||

|

||||

And then use Apple's command line tool, [Swift Package Manager](https://www.swift.org/package-manager/#):

|

||||

|

||||

```bash

|

||||

swift run StableDiffusionSample --resource-path models/coreml-stable-diffusion-v1-4_original_compiled --compute-units all "a photo of an astronaut riding a horse on mars"

|

||||

```

|

||||

|

||||

You have to specify in `--resource-path` one of the checkpoints downloaded in the previous step, so please make sure it contains compiled Core ML bundles with the extension `.mlmodelc`. The `--compute-units` has to be one of these values: `all`, `cpuOnly`, `cpuAndGPU`, `cpuAndNeuralEngine`.
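The Swift tool spells the compute units differently from the Python script. The sketch below pairs the two sets of values by name; the pairing is an assumption based on the names listed in this guide, and `to_swift_compute_units` is a hypothetical helper.

```python
# Assumed correspondence between the Python CLI values (`--compute-unit`)
# and the Swift CLI values (`--compute-units`), paired by name.
PYTHON_TO_SWIFT_COMPUTE_UNITS = {
    "ALL": "all",
    "CPU_ONLY": "cpuOnly",
    "CPU_AND_GPU": "cpuAndGPU",
    "CPU_AND_NE": "cpuAndNeuralEngine",
}


def to_swift_compute_units(python_value: str) -> str:
    """Translate a Python-style compute unit name to its Swift-style spelling."""
    try:
        return PYTHON_TO_SWIFT_COMPUTE_UNITS[python_value]
    except KeyError:
        allowed = tuple(PYTHON_TO_SWIFT_COMPUTE_UNITS)
        raise ValueError(f"expected one of {allowed}, got {python_value!r}") from None


print(to_swift_compute_units("CPU_AND_NE"))
```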

|

||||

|

||||

For more details, please refer to the [instructions in Apple's repo](https://github.com/apple/ml-stable-diffusion).

|

||||

|

||||

|

||||

## Supported Diffusers Features

|

||||

|

||||

The Core ML models and inference code don't support many of the features, options, and flexibility of 🧨 Diffusers. These are some of the limitations to keep in mind:

|

||||

|

||||

- Core ML models are only suitable for inference. They can't be used for training or fine-tuning.

|

||||

- Only two schedulers have been ported to Swift, the default one used by Stable Diffusion and `DPMSolverMultistepScheduler`, which we ported to Swift from our `diffusers` implementation. We recommend you use `DPMSolverMultistepScheduler`, since it produces the same quality in about half the steps.

|

||||

- Negative prompts, classifier-free guidance scale, and image-to-image tasks are available in the inference code. Advanced features such as depth guidance, ControlNet, and latent upscalers are not available yet.

|

||||

|

||||

Apple's [conversion and inference repo](https://github.com/apple/ml-stable-diffusion) and our own [swift-coreml-diffusers](https://github.com/huggingface/swift-coreml-diffusers) repos are intended as technology demonstrators to enable other developers to build upon.

|

||||

|

||||

If you feel strongly about any missing features, please feel free to open a feature request or, better yet, a contribution PR :)

|

||||

|

||||

## Native Diffusers Swift app

|

||||

|

||||

One easy way to run Stable Diffusion on your own Apple hardware is to use [our open-source Swift repo](https://github.com/huggingface/swift-coreml-diffusers), based on `diffusers` and Apple's conversion and inference repo. You can study the code, compile it with [Xcode](https://developer.apple.com/xcode/) and adapt it for your own needs. For your convenience, there's also a [standalone Mac app in the App Store](https://apps.apple.com/app/diffusers/id1666309574), so you can play with it without having to deal with the code or IDE. If you are a developer and have determined that Core ML is the best solution to build your Stable Diffusion app, then you can use the rest of this guide to get started with your project. We can't wait to see what you'll build :)

|

||||

@@ -1,333 +1,271 @@

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

http://www.apache.org/licenses/LICENSE-2.0

|

||||

|

||||

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on

|

||||

an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the

|

||||

specific language governing permissions and limitations under the License.

|

||||

-->

|

||||

|

||||

# The Stable Diffusion Guide 🎨

|

||||

<a target="_blank" href="https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_101_guide.ipynb">

|

||||

<img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/>

|

||||

</a>

|

||||

|

||||

## Intro

|

||||

|

||||

Stable Diffusion is a [Latent Diffusion model](https://github.com/CompVis/latent-diffusion) developed by researchers from the Machine Vision and Learning group at LMU Munich, *a.k.a* CompVis.

|

||||

Model checkpoints were publicly released at the end of August 2022 by a collaboration of Stability AI, CompVis, and Runway with support from EleutherAI and LAION. For more information, you can check out [the official blog post](https://stability.ai/blog/stable-diffusion-public-release).

|

||||

|

||||

Since its public release the community has done an incredible job at working together to make the stable diffusion checkpoints **faster**, **more memory efficient**, and **more performant**.

|

||||

|

||||

🧨 Diffusers offers a simple API to run stable diffusion with all memory, computing, and quality improvements.

|

||||

|

||||

This notebook walks you through the improvements one-by-one so you can best leverage [`StableDiffusionPipeline`] for **inference**.

|

||||

|

||||

## Prompt Engineering 🎨

|

||||

|

||||

When running *Stable Diffusion* in inference, we usually want to generate a certain type, or style of image and then improve upon it. Improving upon a previously generated image means running inference over and over again with a different prompt and potentially a different seed until we are happy with our generation.

|

||||

|

||||

So to begin with, it is most important to speed up stable diffusion as much as possible to generate as many pictures as possible in a given amount of time.

|

||||

|

||||

This can be done by both improving the **computational efficiency** (speed) and the **memory efficiency** (GPU RAM).

|

||||

|

||||

Let's start by looking into computational efficiency first.

|

||||

|

||||

Throughout the notebook, we will focus on [runwayml/stable-diffusion-v1-5](https://huggingface.co/runwayml/stable-diffusion-v1-5):

|

||||

|

||||

``` python

|

||||

model_id = "runwayml/stable-diffusion-v1-5"

|

||||

```

|

||||

|

||||

Let's load the pipeline.

|

||||

|

||||

## Speed Optimization

|

||||

|

||||

``` python

|

||||

from diffusers import DiffusionPipeline

|

||||

|

||||

pipe = DiffusionPipeline.from_pretrained(model_id)

|

||||

```

|

||||

|

||||

We aim at generating a beautiful photograph of an *old warrior chief* and will later try to find the best prompt to generate such a photograph. For now, let's keep the prompt simple:

|

||||

|

||||

``` python

|

||||

prompt = "portrait photo of a old warrior chief"

|

||||

```

|

||||

|

||||

To begin with, we should make sure we run inference on GPU, so let's move the pipeline to GPU, just like you would with any PyTorch module.

|

||||

|

||||

``` python

|

||||

pipe = pipe.to("cuda")

|

||||

```

|

||||

|

||||

To generate an image, you should use the [`~StableDiffusionPipeline.__call__`] method.

|

||||

|

||||

To make sure we can reproduce more or less the same image in every call, let's make use of the generator. See the documentation on reproducibility [here](./conceptual/reproducibility) for more information.

|

||||

|

||||

``` python

|

||||

import torch

generator = torch.Generator("cuda").manual_seed(0)

|

||||

```

|

||||

|

||||

Now, let's take it for a spin.

|

||||

|

||||

``` python

|

||||

image = pipe(prompt, generator=generator).images[0]

|

||||

image

|

||||

```

|

||||

|

||||

|

||||

|

||||

Cool, this now took roughly 30 seconds on a T4 GPU (you might see faster inference if your allocated GPU is better than a T4).

|

||||

|

||||

The default run we did above used full float32 precision and ran the default number of inference steps (50). The easiest speed-ups come from switching to float16 (or half) precision and simply running fewer inference steps. Let's load the model now in float16 instead.

|

||||

|

||||

``` python

|

||||

import torch

|

||||

|

||||

pipe = DiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16)

|

||||

pipe = pipe.to("cuda")

|

||||

```

|

||||

|

||||

And we can again call the pipeline to generate an image.

|

||||

|

||||

``` python

|

||||

generator = torch.Generator("cuda").manual_seed(0)

|

||||

|

||||

image = pipe(prompt, generator=generator).images[0]

|

||||

image

|

||||

```

|

||||

|

||||

|

||||

Cool, this is almost three times as fast for arguably the same image quality.

|

||||

|

||||

We strongly suggest always running your pipelines in float16 as so far we have very rarely seen degradations in quality because of it.
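Half precision also halves the storage needed for the weights, since each float16 value takes 2 bytes instead of 4. The back-of-the-envelope sketch below illustrates this; the parameter count is a hypothetical round number, not a measurement of any particular checkpoint, and `weights_size_gib` is a helper written for this illustration.

```python
# Bytes needed to store one parameter in each precision.
BYTES_PER_PARAM = {"float32": 4, "float16": 2}


def weights_size_gib(num_params: int, dtype: str) -> float:
    """Approximate size of the raw weights in GiB (ignores activations and overhead)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1024**3


num_params = 1_000_000_000  # hypothetical parameter count, for illustration only
for dtype in ("float32", "float16"):
    print(f"{dtype}: {weights_size_gib(num_params, dtype):.2f} GiB")
```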

|

||||

|

||||

Next, let's see if we need to use 50 inference steps or whether we could use significantly fewer. The number of inference steps is associated with the denoising scheduler we use. Choosing a more efficient scheduler could help us decrease the number of steps.

|

||||

|

||||

Let's have a look at all the schedulers the stable diffusion pipeline is compatible with.

|

||||

|

||||

``` python

|

||||

pipe.scheduler.compatibles

|

||||

```

|

||||

|

||||

```

|

||||

[diffusers.schedulers.scheduling_dpmsolver_singlestep.DPMSolverSinglestepScheduler,

|

||||

diffusers.schedulers.scheduling_lms_discrete.LMSDiscreteScheduler,

|

||||

diffusers.schedulers.scheduling_heun_discrete.HeunDiscreteScheduler,

|

||||

diffusers.schedulers.scheduling_pndm.PNDMScheduler,

|

||||

diffusers.schedulers.scheduling_euler_discrete.EulerDiscreteScheduler,

|

||||

diffusers.schedulers.scheduling_euler_ancestral_discrete.EulerAncestralDiscreteScheduler,

|

||||

diffusers.schedulers.scheduling_dpmsolver_multistep.DPMSolverMultistepScheduler,

|

||||

diffusers.schedulers.scheduling_ddpm.DDPMScheduler,

|

||||

diffusers.schedulers.scheduling_ddim.DDIMScheduler]

|

||||

```

|

||||

|

||||

Cool, that's a lot of schedulers.

|

||||

|

||||

🧨 Diffusers is constantly adding a bunch of novel schedulers/samplers that can be used with Stable Diffusion. For more information, we recommend taking a look at the official documentation [here](https://huggingface.co/docs/diffusers/main/en/api/schedulers/overview).

|

||||

|

||||

Alright, right now Stable Diffusion is using the `PNDMScheduler` which usually requires around 50 inference steps. However, other schedulers such as `DPMSolverMultistepScheduler` or `DPMSolverSinglestepScheduler` seem to get away with just 20 to 25 inference steps. Let's try them out.
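To get a rough feel for what fewer steps could buy, here is a small estimate that assumes generation time grows linearly with the number of denoising steps. This is a simplification that ignores fixed costs such as text encoding and image decoding, and `estimated_seconds` is a hypothetical helper rather than a benchmark.

```python
def estimated_seconds(measured_seconds: float, measured_steps: int, new_steps: int) -> float:
    """Scale a measured generation time linearly to a different step count."""
    return measured_seconds * new_steps / measured_steps


# Placeholder baseline: roughly 30 seconds for 50 steps, as in the first run above.
for steps in (50, 25, 20):
    print(f"{steps} steps: ~{estimated_seconds(30.0, 50, steps):.0f} s")
```

Actual timings depend on the scheduler, since some schedulers call the model more than once per step.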

|

||||

|

||||

You can set a new scheduler by making use of the [from_config](https://huggingface.co/docs/diffusers/main/en/api/configuration#diffusers.ConfigMixin.from_config) function.

|

||||

|

||||

``` python

|

||||

from diffusers import DPMSolverMultistepScheduler

|

||||

|

||||