mirror of

https://github.com/huggingface/diffusers.git

synced 2026-04-05 07:11:47 +08:00

Compare commits

2 Commits

max-parall

...

ckpt-tests

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

09eced25f2 | ||

|

|

0d876c83e3 |

6

.github/workflows/nightly_tests.yml

vendored

6

.github/workflows/nightly_tests.yml

vendored

@@ -112,7 +112,7 @@ jobs:

|

||||

|

||||

run_nightly_tests_for_other_torch_modules:

|

||||

name: Torch Non-Pipelines CUDA Nightly Tests

|

||||

runs-on: [single-gpu, nvidia-gpu, t4, ci]

|

||||

runs-on: docker-gpu

|

||||

container:

|

||||

image: diffusers/diffusers-pytorch-cuda

|

||||

options: --shm-size "16gb" --ipc host -v /mnt/hf_cache:/mnt/cache/ --gpus 0

|

||||

@@ -185,7 +185,7 @@ jobs:

|

||||

|

||||

run_lora_nightly_tests:

|

||||

name: Nightly LoRA Tests with PEFT and TORCH

|

||||

runs-on: [single-gpu, nvidia-gpu, t4, ci]

|

||||

runs-on: docker-gpu

|

||||

container:

|

||||

image: diffusers/diffusers-pytorch-cuda

|

||||

options: --shm-size "16gb" --ipc host -v /mnt/hf_cache:/mnt/cache/ --gpus 0

|

||||

@@ -298,7 +298,7 @@ jobs:

|

||||

|

||||

run_nightly_onnx_tests:

|

||||

name: Nightly ONNXRuntime CUDA tests on Ubuntu

|

||||

runs-on: [single-gpu, nvidia-gpu, t4, ci]

|

||||

runs-on: docker-gpu

|

||||

container:

|

||||

image: diffusers/diffusers-onnxruntime-cuda

|

||||

options: --gpus 0 --shm-size "16gb" --ipc host -v /mnt/hf_cache:/mnt/cache/

|

||||

|

||||

6

.github/workflows/pr_test_fetcher.yml

vendored

6

.github/workflows/pr_test_fetcher.yml

vendored

@@ -15,7 +15,7 @@ concurrency:

|

||||

jobs:

|

||||

setup_pr_tests:

|

||||

name: Setup PR Tests

|

||||

runs-on: [ self-hosted, intel-cpu, 8-cpu, ci ]

|

||||

runs-on: docker-cpu

|

||||

container:

|

||||

image: diffusers/diffusers-pytorch-cpu

|

||||

options: --shm-size "16gb" --ipc host -v /mnt/hf_cache:/mnt/cache/

|

||||

@@ -73,7 +73,7 @@ jobs:

|

||||

max-parallel: 2

|

||||

matrix:

|

||||

modules: ${{ fromJson(needs.setup_pr_tests.outputs.matrix) }}

|

||||

runs-on: [ self-hosted, intel-cpu, 8-cpu, ci ]

|

||||

runs-on: docker-cpu

|

||||

container:

|

||||

image: diffusers/diffusers-pytorch-cpu

|

||||

options: --shm-size "16gb" --ipc host -v /mnt/hf_cache:/mnt/cache/

|

||||

@@ -123,7 +123,7 @@ jobs:

|

||||

config:

|

||||

- name: Hub tests for models, schedulers, and pipelines

|

||||

framework: hub_tests_pytorch

|

||||

runner: [ self-hosted, intel-cpu, 8-cpu, ci ]

|

||||

runner: docker-cpu

|

||||

image: diffusers/diffusers-pytorch-cpu

|

||||

report: torch_hub

|

||||

|

||||

|

||||

44

.github/workflows/push_tests.yml

vendored

44

.github/workflows/push_tests.yml

vendored

@@ -21,9 +21,7 @@ env:

|

||||

jobs:

|

||||

setup_torch_cuda_pipeline_matrix:

|

||||

name: Setup Torch Pipelines CUDA Slow Tests Matrix

|

||||

runs-on: [ self-hosted, intel-cpu, 8-cpu, ci ]

|

||||

container:

|

||||

image: diffusers/diffusers-pytorch-cpu

|

||||

runs-on: diffusers/diffusers-pytorch-cpu

|

||||

outputs:

|

||||

pipeline_test_matrix: ${{ steps.fetch_pipeline_matrix.outputs.pipeline_test_matrix }}

|

||||

steps:

|

||||

@@ -31,13 +29,14 @@ jobs:

|

||||

uses: actions/checkout@v3

|

||||

with:

|

||||

fetch-depth: 2

|

||||

- name: Set up Python

|

||||

uses: actions/setup-python@v4

|

||||

with:

|

||||

python-version: "3.8"

|

||||

- name: Install dependencies

|

||||

run: |

|

||||

python -m venv /opt/venv && export PATH="/opt/venv/bin:$PATH"

|

||||

python -m uv pip install -e [quality,test]

|

||||

- name: Environment

|

||||

run: |

|

||||

python utils/print_env.py

|

||||

pip install -e .

|

||||

pip install huggingface_hub

|

||||

- name: Fetch Pipeline Matrix

|

||||

id: fetch_pipeline_matrix

|

||||

run: |

|

||||

@@ -56,13 +55,12 @@ jobs:

|

||||

needs: setup_torch_cuda_pipeline_matrix

|

||||

strategy:

|

||||

fail-fast: false

|

||||

max-parallel: 8

|

||||

matrix:

|

||||

module: ${{ fromJson(needs.setup_torch_cuda_pipeline_matrix.outputs.pipeline_test_matrix) }}

|

||||

runs-on: [single-gpu, nvidia-gpu, t4, ci]

|

||||

container:

|

||||

image: diffusers/diffusers-pytorch-cuda

|

||||

options: --shm-size "16gb" --ipc host -v /mnt/cache/.cache/huggingface/diffusers:/mnt/cache/ --gpus 0 --privileged

|

||||

options: --shm-size "16gb" --ipc host -v /mnt/hf_cache:/mnt/cache/ --gpus 0 --privileged

|

||||

steps:

|

||||

- name: Checkout diffusers

|

||||

uses: actions/checkout@v3

|

||||

@@ -116,10 +114,10 @@ jobs:

|

||||

|

||||

torch_cuda_tests:

|

||||

name: Torch CUDA Tests

|

||||

runs-on: [single-gpu, nvidia-gpu, t4, ci]

|

||||

runs-on: docker-gpu

|

||||

container:

|

||||

image: diffusers/diffusers-pytorch-cuda

|

||||

options: --shm-size "16gb" --ipc host -v /mnt/cache/.cache/huggingface/diffusers:/mnt/cache/ --gpus 0

|

||||

options: --shm-size "16gb" --ipc host -v /mnt/hf_cache:/mnt/cache/ --gpus 0

|

||||

defaults:

|

||||

run:

|

||||

shell: bash

|

||||

@@ -168,10 +166,10 @@ jobs:

|

||||

|

||||

peft_cuda_tests:

|

||||

name: PEFT CUDA Tests

|

||||

runs-on: [single-gpu, nvidia-gpu, t4, ci]

|

||||

runs-on: docker-gpu

|

||||

container:

|

||||

image: diffusers/diffusers-pytorch-cuda

|

||||

options: --shm-size "16gb" --ipc host -v /mnt/cache/.cache/huggingface/diffusers:/mnt/cache/ --gpus 0

|

||||

options: --shm-size "16gb" --ipc host -v /mnt/hf_cache:/mnt/cache/ --gpus 0

|

||||

defaults:

|

||||

run:

|

||||

shell: bash

|

||||

@@ -221,7 +219,7 @@ jobs:

|

||||

runs-on: docker-tpu

|

||||

container:

|

||||

image: diffusers/diffusers-flax-tpu

|

||||

options: --shm-size "16gb" --ipc host -v /mnt/cache/.cache/huggingface:/mnt/cache/ --privileged

|

||||

options: --shm-size "16gb" --ipc host -v /mnt/hf_cache:/mnt/cache/ --privileged

|

||||

defaults:

|

||||

run:

|

||||

shell: bash

|

||||

@@ -265,10 +263,10 @@ jobs:

|

||||

|

||||

onnx_cuda_tests:

|

||||

name: ONNX CUDA Tests

|

||||

runs-on: [single-gpu, nvidia-gpu, t4, ci]

|

||||

runs-on: docker-gpu

|

||||

container:

|

||||

image: diffusers/diffusers-onnxruntime-cuda

|

||||

options: --shm-size "16gb" --ipc host -v /mnt/cache/.cache/huggingface:/mnt/cache/ --gpus 0

|

||||

options: --shm-size "16gb" --ipc host -v /mnt/hf_cache:/mnt/cache/ --gpus 0

|

||||

defaults:

|

||||

run:

|

||||

shell: bash

|

||||

@@ -313,11 +311,11 @@ jobs:

|

||||

run_torch_compile_tests:

|

||||

name: PyTorch Compile CUDA tests

|

||||

|

||||

runs-on: [single-gpu, nvidia-gpu, t4, ci]

|

||||

runs-on: docker-gpu

|

||||

|

||||

container:

|

||||

image: diffusers/diffusers-pytorch-compile-cuda

|

||||

options: --gpus 0 --shm-size "16gb" --ipc host -v /mnt/cache/.cache/huggingface:/mnt/cache/

|

||||

options: --gpus 0 --shm-size "16gb" --ipc host -v /mnt/hf_cache:/mnt/cache/

|

||||

|

||||

steps:

|

||||

- name: Checkout diffusers

|

||||

@@ -354,11 +352,11 @@ jobs:

|

||||

run_xformers_tests:

|

||||

name: PyTorch xformers CUDA tests

|

||||

|

||||

runs-on: [single-gpu, nvidia-gpu, t4, ci]

|

||||

runs-on: docker-gpu

|

||||

|

||||

container:

|

||||

image: diffusers/diffusers-pytorch-xformers-cuda

|

||||

options: --gpus 0 --shm-size "16gb" --ipc host -v /mnt/cache/.cache/huggingface:/mnt/cache/

|

||||

options: --gpus 0 --shm-size "16gb" --ipc host -v /mnt/hf_cache:/mnt/cache/

|

||||

|

||||

steps:

|

||||

- name: Checkout diffusers

|

||||

@@ -395,11 +393,11 @@ jobs:

|

||||

run_examples_tests:

|

||||

name: Examples PyTorch CUDA tests on Ubuntu

|

||||

|

||||

runs-on: [single-gpu, nvidia-gpu, t4, ci]

|

||||

runs-on: docker-gpu

|

||||

|

||||

container:

|

||||

image: diffusers/diffusers-pytorch-cuda

|

||||

options: --gpus 0 --shm-size "16gb" --ipc host -v /mnt/cache/.cache/huggingface:/mnt/cache/

|

||||

options: --gpus 0 --shm-size "16gb" --ipc host -v /mnt/hf_cache:/mnt/cache/

|

||||

|

||||

steps:

|

||||

- name: Checkout diffusers

|

||||

|

||||

@@ -81,14 +81,16 @@

|

||||

title: ControlNet

|

||||

- local: using-diffusers/t2i_adapter

|

||||

title: T2I-Adapter

|

||||

- local: using-diffusers/inference_with_lcm

|

||||

title: Latent Consistency Model

|

||||

- local: using-diffusers/textual_inversion_inference

|

||||

title: Textual inversion

|

||||

- local: using-diffusers/shap-e

|

||||

title: Shap-E

|

||||

- local: using-diffusers/diffedit

|

||||

title: DiffEdit

|

||||

- local: using-diffusers/inference_with_lcm_lora

|

||||

title: Latent Consistency Model-LoRA

|

||||

- local: using-diffusers/inference_with_lcm

|

||||

title: Latent Consistency Model

|

||||

- local: using-diffusers/inference_with_tcd_lora

|

||||

title: Trajectory Consistency Distillation-LoRA

|

||||

- local: using-diffusers/svd

|

||||

@@ -139,6 +141,8 @@

|

||||

- sections:

|

||||

- local: optimization/fp16

|

||||

title: Speed up inference

|

||||

- local: using-diffusers/distilled_sd

|

||||

title: Distilled Stable Diffusion inference

|

||||

- local: optimization/memory

|

||||

title: Reduce memory usage

|

||||

- local: optimization/torch2.0

|

||||

|

||||

@@ -55,6 +55,3 @@ An attention processor is a class for applying different types of attention mech

|

||||

|

||||

## XFormersAttnProcessor

|

||||

[[autodoc]] models.attention_processor.XFormersAttnProcessor

|

||||

|

||||

## AttnProcessorNPU

|

||||

[[autodoc]] models.attention_processor.AttnProcessorNPU

|

||||

|

||||

@@ -12,23 +12,27 @@ specific language governing permissions and limitations under the License.

|

||||

|

||||

# Speed up inference

|

||||

|

||||

There are several ways to optimize Diffusers for inference speed, such as reducing the computational burden by lowering the data precision or using a lightweight distilled model. There are also memory-efficient attention implementations, [xFormers](xformers) and [scaled dot product attetntion](https://pytorch.org/docs/stable/generated/torch.nn.functional.scaled_dot_product_attention.html) in PyTorch 2.0, that reduce memory usage which also indirectly speeds up inference. Different speed optimizations can be stacked together to get the fastest inference times.

|

||||

There are several ways to optimize 🤗 Diffusers for inference speed. As a general rule of thumb, we recommend using either [xFormers](xformers) or `torch.nn.functional.scaled_dot_product_attention` in PyTorch 2.0 for their memory-efficient attention.

|

||||

|

||||

> [!TIP]

|

||||

> Optimizing for inference speed or reduced memory usage can lead to improved performance in the other category, so you should try to optimize for both whenever you can. This guide focuses on inference speed, but you can learn more about lowering memory usage in the [Reduce memory usage](memory) guide.

|

||||

<Tip>

|

||||

|

||||

The inference times below are obtained from generating a single 512x512 image from the prompt "a photo of an astronaut riding a horse on mars" with 50 DDIM steps on a NVIDIA A100.

|

||||

In many cases, optimizing for speed or memory leads to improved performance in the other, so you should try to optimize for both whenever you can. This guide focuses on inference speed, but you can learn more about preserving memory in the [Reduce memory usage](memory) guide.

|

||||

|

||||

| setup | latency | speed-up |

|

||||

|----------|---------|----------|

|

||||

| baseline | 5.27s | x1 |

|

||||

| tf32 | 4.14s | x1.27 |

|

||||

| fp16 | 3.51s | x1.50 |

|

||||

| combined | 3.41s | x1.54 |

|

||||

</Tip>

|

||||

|

||||

## TensorFloat-32

|

||||

The results below are obtained from generating a single 512x512 image from the prompt `a photo of an astronaut riding a horse on mars` with 50 DDIM steps on a Nvidia Titan RTX, demonstrating the speed-up you can expect.

|

||||

|

||||

On Ampere and later CUDA devices, matrix multiplications and convolutions can use the [TensorFloat-32 (tf32)](https://blogs.nvidia.com/blog/2020/05/14/tensorfloat-32-precision-format/) mode for faster, but slightly less accurate computations. By default, PyTorch enables tf32 mode for convolutions but not matrix multiplications. Unless your network requires full float32 precision, we recommend enabling tf32 for matrix multiplications. It can significantly speed up computations with typically negligible loss in numerical accuracy.

|

||||

| | latency | speed-up |

|

||||

| ---------------- | ------- | ------- |

|

||||

| original | 9.50s | x1 |

|

||||

| fp16 | 3.61s | x2.63 |

|

||||

| channels last | 3.30s | x2.88 |

|

||||

| traced UNet | 3.21s | x2.96 |

|

||||

| memory efficient attention | 2.63s | x3.61 |

|

||||

|

||||

## Use TensorFloat-32

|

||||

|

||||

On Ampere and later CUDA devices, matrix multiplications and convolutions can use the [TensorFloat-32 (TF32)](https://blogs.nvidia.com/blog/2020/05/14/tensorfloat-32-precision-format/) mode for faster, but slightly less accurate computations. By default, PyTorch enables TF32 mode for convolutions but not matrix multiplications. Unless your network requires full float32 precision, we recommend enabling TF32 for matrix multiplications. It can significantly speeds up computations with typically negligible loss in numerical accuracy.

|

||||

|

||||

```python

|

||||

import torch

|

||||

@@ -36,11 +40,11 @@ import torch

|

||||

torch.backends.cuda.matmul.allow_tf32 = True

|

||||

```

|

||||

|

||||

Learn more about tf32 in the [Mixed precision training](https://huggingface.co/docs/transformers/en/perf_train_gpu_one#tf32) guide.

|

||||

You can learn more about TF32 in the [Mixed precision training](https://huggingface.co/docs/transformers/en/perf_train_gpu_one#tf32) guide.

|

||||

|

||||

## Half-precision weights

|

||||

|

||||

To save GPU memory and get more speed, set `torch_dtype=torch.float16` to load and run the model weights directly with half-precision weights.

|

||||

To save GPU memory and get more speed, try loading and running the model weights directly in half-precision or float16:

|

||||

|

||||

```Python

|

||||

import torch

|

||||

@@ -52,76 +56,19 @@ pipe = DiffusionPipeline.from_pretrained(

|

||||

use_safetensors=True,

|

||||

)

|

||||

pipe = pipe.to("cuda")

|

||||

|

||||

prompt = "a photo of an astronaut riding a horse on mars"

|

||||

image = pipe(prompt).images[0]

|

||||

```

|

||||

|

||||

> [!WARNING]

|

||||

> Don't use [torch.autocast](https://pytorch.org/docs/stable/amp.html#torch.autocast) in any of the pipelines as it can lead to black images and is always slower than pure float16 precision.

|

||||

<Tip warning={true}>

|

||||

|

||||

Don't use [`torch.autocast`](https://pytorch.org/docs/stable/amp.html#torch.autocast) in any of the pipelines as it can lead to black images and is always slower than pure float16 precision.

|

||||

|

||||

</Tip>

|

||||

|

||||

## Distilled model

|

||||

|

||||

You could also use a distilled Stable Diffusion model and autoencoder to speed up inference. During distillation, many of the UNet's residual and attention blocks are shed to reduce the model size by 51% and improve latency on CPU/GPU by 43%. The distilled model is faster and uses less memory while generating images of comparable quality to the full Stable Diffusion model.

|

||||

You could also use a distilled Stable Diffusion model and autoencoder to speed up inference. During distillation, many of the UNet's residual and attention blocks are shed to reduce the model size. The distilled model is faster and uses less memory while generating images of comparable quality to the full Stable Diffusion model.

|

||||

|

||||

> [!TIP]

|

||||

> Read the [Open-sourcing Knowledge Distillation Code and Weights of SD-Small and SD-Tiny](https://huggingface.co/blog/sd_distillation) blog post to learn more about how knowledge distillation training works to produce a faster, smaller, and cheaper generative model.

|

||||

|

||||

The inference times below are obtained from generating 4 images from the prompt "a photo of an astronaut riding a horse on mars" with 25 PNDM steps on a NVIDIA A100. Each generation is repeated 3 times with the distilled Stable Diffusion v1.4 model by [Nota AI](https://hf.co/nota-ai).

|

||||

|

||||

| setup | latency | speed-up |

|

||||

|------------------------------|---------|----------|

|

||||

| baseline | 6.37s | x1 |

|

||||

| distilled | 4.18s | x1.52 |

|

||||

| distilled + tiny autoencoder | 3.83s | x1.66 |

|

||||

|

||||

Let's load the distilled Stable Diffusion model and compare it against the original Stable Diffusion model.

|

||||

|

||||

```py

|

||||

from diffusers import StableDiffusionPipeline

|

||||

import torch

|

||||

|

||||

distilled = StableDiffusionPipeline.from_pretrained(

|

||||

"nota-ai/bk-sdm-small", torch_dtype=torch.float16, use_safetensors=True,

|

||||

).to("cuda")

|

||||

prompt = "a golden vase with different flowers"

|

||||

generator = torch.manual_seed(2023)

|

||||

image = distilled("a golden vase with different flowers", num_inference_steps=25, generator=generator).images[0]

|

||||

image

|

||||

```

|

||||

|

||||

<div class="flex gap-4">

|

||||

<div>

|

||||

<img class="rounded-xl" src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/original_sd.png"/>

|

||||

<figcaption class="mt-2 text-center text-sm text-gray-500">original Stable Diffusion</figcaption>

|

||||

</div>

|

||||

<div>

|

||||

<img class="rounded-xl" src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/distilled_sd.png"/>

|

||||

<figcaption class="mt-2 text-center text-sm text-gray-500">distilled Stable Diffusion</figcaption>

|

||||

</div>

|

||||

</div>

|

||||

|

||||

### Tiny AutoEncoder

|

||||

|

||||

To speed inference up even more, replace the autoencoder with a [distilled version](https://huggingface.co/sayakpaul/taesdxl-diffusers) of it.

|

||||

|

||||

```py

|

||||

import torch

|

||||

from diffusers import AutoencoderTiny, StableDiffusionPipeline

|

||||

|

||||

distilled = StableDiffusionPipeline.from_pretrained(

|

||||

"nota-ai/bk-sdm-small", torch_dtype=torch.float16, use_safetensors=True,

|

||||

).to("cuda")

|

||||

distilled.vae = AutoencoderTiny.from_pretrained(

|

||||

"sayakpaul/taesd-diffusers", torch_dtype=torch.float16, use_safetensors=True,

|

||||

).to("cuda")

|

||||

|

||||

prompt = "a golden vase with different flowers"

|

||||

generator = torch.manual_seed(2023)

|

||||

image = distilled("a golden vase with different flowers", num_inference_steps=25, generator=generator).images[0]

|

||||

image

|

||||

```

|

||||

|

||||

<div class="flex justify-center">

|

||||

<div>

|

||||

<img class="rounded-xl" src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/distilled_sd_vae.png" />

|

||||

<figcaption class="mt-2 text-center text-sm text-gray-500">distilled Stable Diffusion + Tiny AutoEncoder</figcaption>

|

||||

</div>

|

||||

</div>

|

||||

Learn more about in the [Distilled Stable Diffusion inference](../using-diffusers/distilled_sd) guide!

|

||||

|

||||

133

docs/source/en/using-diffusers/distilled_sd.md

Normal file

133

docs/source/en/using-diffusers/distilled_sd.md

Normal file

@@ -0,0 +1,133 @@

|

||||

<!--Copyright 2024 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

http://www.apache.org/licenses/LICENSE-2.0

|

||||

|

||||

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on

|

||||

an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the

|

||||

specific language governing permissions and limitations under the License.

|

||||

-->

|

||||

|

||||

# Distilled Stable Diffusion inference

|

||||

|

||||

[[open-in-colab]]

|

||||

|

||||

Stable Diffusion inference can be a computationally intensive process because it must iteratively denoise the latents to generate an image. To reduce the computational burden, you can use a *distilled* version of the Stable Diffusion model from [Nota AI](https://huggingface.co/nota-ai). The distilled version of their Stable Diffusion model eliminates some of the residual and attention blocks from the UNet, reducing the model size by 51% and improving latency on CPU/GPU by 43%.

|

||||

|

||||

<Tip>

|

||||

|

||||

Read this [blog post](https://huggingface.co/blog/sd_distillation) to learn more about how knowledge distillation training works to produce a faster, smaller, and cheaper generative model.

|

||||

|

||||

</Tip>

|

||||

|

||||

Let's load the distilled Stable Diffusion model and compare it against the original Stable Diffusion model:

|

||||

|

||||

```py

|

||||

from diffusers import StableDiffusionPipeline

|

||||

import torch

|

||||

|

||||

distilled = StableDiffusionPipeline.from_pretrained(

|

||||

"nota-ai/bk-sdm-small", torch_dtype=torch.float16, use_safetensors=True,

|

||||

).to("cuda")

|

||||

|

||||

original = StableDiffusionPipeline.from_pretrained(

|

||||

"CompVis/stable-diffusion-v1-4", torch_dtype=torch.float16, use_safetensors=True,

|

||||

).to("cuda")

|

||||

```

|

||||

|

||||

Given a prompt, get the inference time for the original model:

|

||||

|

||||

```py

|

||||

import time

|

||||

|

||||

seed = 2023

|

||||

generator = torch.manual_seed(seed)

|

||||

|

||||

NUM_ITERS_TO_RUN = 3

|

||||

NUM_INFERENCE_STEPS = 25

|

||||

NUM_IMAGES_PER_PROMPT = 4

|

||||

|

||||

prompt = "a golden vase with different flowers"

|

||||

|

||||

start = time.time_ns()

|

||||

for _ in range(NUM_ITERS_TO_RUN):

|

||||

images = original(

|

||||

prompt,

|

||||

num_inference_steps=NUM_INFERENCE_STEPS,

|

||||

generator=generator,

|

||||

num_images_per_prompt=NUM_IMAGES_PER_PROMPT

|

||||

).images

|

||||

end = time.time_ns()

|

||||

original_sd = f"{(end - start) / 1e6:.1f}"

|

||||

|

||||

print(f"Execution time -- {original_sd} ms\n")

|

||||

"Execution time -- 45781.5 ms"

|

||||

```

|

||||

|

||||

Time the distilled model inference:

|

||||

|

||||

```py

|

||||

start = time.time_ns()

|

||||

for _ in range(NUM_ITERS_TO_RUN):

|

||||

images = distilled(

|

||||

prompt,

|

||||

num_inference_steps=NUM_INFERENCE_STEPS,

|

||||

generator=generator,

|

||||

num_images_per_prompt=NUM_IMAGES_PER_PROMPT

|

||||

).images

|

||||

end = time.time_ns()

|

||||

|

||||

distilled_sd = f"{(end - start) / 1e6:.1f}"

|

||||

print(f"Execution time -- {distilled_sd} ms\n")

|

||||

"Execution time -- 29884.2 ms"

|

||||

```

|

||||

|

||||

<div class="flex gap-4">

|

||||

<div>

|

||||

<img class="rounded-xl" src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/original_sd.png"/>

|

||||

<figcaption class="mt-2 text-center text-sm text-gray-500">original Stable Diffusion (45781.5 ms)</figcaption>

|

||||

</div>

|

||||

<div>

|

||||

<img class="rounded-xl" src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/distilled_sd.png"/>

|

||||

<figcaption class="mt-2 text-center text-sm text-gray-500">distilled Stable Diffusion (29884.2 ms)</figcaption>

|

||||

</div>

|

||||

</div>

|

||||

|

||||

## Tiny AutoEncoder

|

||||

|

||||

To speed inference up even more, use a tiny distilled version of the [Stable Diffusion VAE](https://huggingface.co/sayakpaul/taesdxl-diffusers) to denoise the latents into images. Replace the VAE in the distilled Stable Diffusion model with the tiny VAE:

|

||||

|

||||

```py

|

||||

from diffusers import AutoencoderTiny

|

||||

|

||||

distilled.vae = AutoencoderTiny.from_pretrained(

|

||||

"sayakpaul/taesd-diffusers", torch_dtype=torch.float16, use_safetensors=True,

|

||||

).to("cuda")

|

||||

```

|

||||

|

||||

Time the distilled model and distilled VAE inference:

|

||||

|

||||

```py

|

||||

start = time.time_ns()

|

||||

for _ in range(NUM_ITERS_TO_RUN):

|

||||

images = distilled(

|

||||

prompt,

|

||||

num_inference_steps=NUM_INFERENCE_STEPS,

|

||||

generator=generator,

|

||||

num_images_per_prompt=NUM_IMAGES_PER_PROMPT

|

||||

).images

|

||||

end = time.time_ns()

|

||||

|

||||

distilled_tiny_sd = f"{(end - start) / 1e6:.1f}"

|

||||

print(f"Execution time -- {distilled_tiny_sd} ms\n")

|

||||

"Execution time -- 27165.7 ms"

|

||||

```

|

||||

|

||||

<div class="flex justify-center">

|

||||

<div>

|

||||

<img class="rounded-xl" src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/distilled_sd_vae.png" />

|

||||

<figcaption class="mt-2 text-center text-sm text-gray-500">distilled Stable Diffusion + Tiny AutoEncoder (27165.7 ms)</figcaption>

|

||||

</div>

|

||||

</div>

|

||||

@@ -10,30 +10,29 @@ an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express o

|

||||

specific language governing permissions and limitations under the License.

|

||||

-->

|

||||

|

||||

# Latent Consistency Model

|

||||

|

||||

[[open-in-colab]]

|

||||

|

||||

[Latent Consistency Models (LCMs)](https://hf.co/papers/2310.04378) enable fast high-quality image generation by directly predicting the reverse diffusion process in the latent rather than pixel space. In other words, LCMs try to predict the noiseless image from the noisy image in contrast to typical diffusion models that iteratively remove noise from the noisy image. By avoiding the iterative sampling process, LCMs are able to generate high-quality images in 2-4 steps instead of 20-30 steps.

|

||||

# Latent Consistency Model

|

||||

|

||||

LCMs are distilled from pretrained models which requires ~32 hours of A100 compute. To speed this up, [LCM-LoRAs](https://hf.co/papers/2311.05556) train a [LoRA adapter](https://huggingface.co/docs/peft/conceptual_guides/adapter#low-rank-adaptation-lora) which have much fewer parameters to train compared to the full model. The LCM-LoRA can be plugged into a diffusion model once it has been trained.

|

||||

Latent Consistency Models (LCM) enable quality image generation in typically 2-4 steps making it possible to use diffusion models in almost real-time settings.

|

||||

|

||||

This guide will show you how to use LCMs and LCM-LoRAs for fast inference on tasks and how to use them with other adapters like ControlNet or T2I-Adapter.

|

||||

From the [official website](https://latent-consistency-models.github.io/):

|

||||

|

||||

> [!TIP]

|

||||

> LCMs and LCM-LoRAs are available for Stable Diffusion v1.5, Stable Diffusion XL, and the SSD-1B model. You can find their checkpoints on the [Latent Consistency](https://hf.co/collections/latent-consistency/latent-consistency-models-weights-654ce61a95edd6dffccef6a8) Collections.

|

||||

> LCMs can be distilled from any pre-trained Stable Diffusion (SD) in only 4,000 training steps (~32 A100 GPU Hours) for generating high quality 768 x 768 resolution images in 2~4 steps or even one step, significantly accelerating text-to-image generation. We employ LCM to distill the Dreamshaper-V7 version of SD in just 4,000 training iterations.

|

||||

|

||||

For a more technical overview of LCMs, refer to [the paper](https://huggingface.co/papers/2310.04378).

|

||||

|

||||

LCM distilled models are available for [stable-diffusion-v1-5](https://huggingface.co/runwayml/stable-diffusion-v1-5), [stable-diffusion-xl-base-1.0](https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0), and the [SSD-1B](https://huggingface.co/segmind/SSD-1B) model. All the checkpoints can be found in this [collection](https://huggingface.co/collections/latent-consistency/latent-consistency-models-weights-654ce61a95edd6dffccef6a8).

|

||||

|

||||

This guide shows how to perform inference with LCMs for

|

||||

- text-to-image

|

||||

- image-to-image

|

||||

- combined with style LoRAs

|

||||

- ControlNet/T2I-Adapter

|

||||

|

||||

## Text-to-image

|

||||

|

||||

<hfoptions id="lcm-text2img">

|

||||

<hfoption id="LCM">

|

||||

|

||||

To use LCMs, you need to load the LCM checkpoint for your supported model into [`UNet2DConditionModel`] and replace the scheduler with the [`LCMScheduler`]. Then you can use the pipeline as usual, and pass a text prompt to generate an image in just 4 steps.

|

||||

|

||||

A couple of notes to keep in mind when using LCMs are:

|

||||

|

||||

* Typically, batch size is doubled inside the pipeline for classifier-free guidance. But LCM applies guidance with guidance embeddings and doesn't need to double the batch size, which leads to faster inference. The downside is that negative prompts don't work with LCM because they don't have any effect on the denoising process.

|

||||

* The ideal range for `guidance_scale` is [3., 13.] because that is what the UNet was trained with. However, disabling `guidance_scale` with a value of 1.0 is also effective in most cases.

|

||||

You'll use the [`StableDiffusionXLPipeline`] pipeline with the [`LCMScheduler`] and then load the LCM-LoRA. Together with the LCM-LoRA and the scheduler, the pipeline enables a fast inference workflow, overcoming the slow iterative nature of diffusion models.

|

||||

|

||||

```python

|

||||

from diffusers import StableDiffusionXLPipeline, UNet2DConditionModel, LCMScheduler

|

||||

@@ -50,69 +49,31 @@ pipe = StableDiffusionXLPipeline.from_pretrained(

|

||||

pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

|

||||

|

||||

prompt = "Self-portrait oil painting, a beautiful cyborg with golden hair, 8k"

|

||||

|

||||

generator = torch.manual_seed(0)

|

||||

image = pipe(

|

||||

prompt=prompt, num_inference_steps=4, generator=generator, guidance_scale=8.0

|

||||

).images[0]

|

||||

image

|

||||

```

|

||||

|

||||

<div class="flex justify-center">

|

||||

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/lcm/lcm_full_sdxl_t2i.png"/>

|

||||

</div>

|

||||

|

||||

|

||||

</hfoption>

|

||||

<hfoption id="LCM-LoRA">

|

||||

Notice that we use only 4 steps for generation which is way less than what's typically used for standard SDXL.

|

||||

|

||||

To use LCM-LoRAs, you need to replace the scheduler with the [`LCMScheduler`] and load the LCM-LoRA weights with the [`~loaders.LoraLoaderMixin.load_lora_weights`] method. Then you can use the pipeline as usual, and pass a text prompt to generate an image in just 4 steps.

|

||||

Some details to keep in mind:

|

||||

|

||||

A couple of notes to keep in mind when using LCM-LoRAs are:

|

||||

* To perform classifier-free guidance, batch size is usually doubled inside the pipeline. LCM, however, applies guidance using guidance embeddings, so the batch size does not have to be doubled in this case. This leads to a faster inference time, with the drawback that negative prompts don't have any effect on the denoising process.

|

||||

* The UNet was trained using the [3., 13.] guidance scale range. So, that is the ideal range for `guidance_scale`. However, disabling `guidance_scale` using a value of 1.0 is also effective in most cases.

|

||||

|

||||

* Typically, batch size is doubled inside the pipeline for classifier-free guidance. But LCM applies guidance with guidance embeddings and doesn't need to double the batch size, which leads to faster inference. The downside is that negative prompts don't work with LCM because they don't have any effect on the denoising process.

|

||||

* You could use guidance with LCM-LoRAs, but it is very sensitive to high `guidance_scale` values and can lead to artifacts in the generated image. The best values we've found are between [1.0, 2.0].

|

||||

* Replace [stabilityai/stable-diffusion-xl-base-1.0](https://hf.co/stabilityai/stable-diffusion-xl-base-1.0) with any finetuned model. For example, try using the [animagine-xl](https://huggingface.co/Linaqruf/animagine-xl) checkpoint to generate anime images with SDXL.

|

||||

|

||||

```py

|

||||

import torch

|

||||

from diffusers import DiffusionPipeline, LCMScheduler

|

||||

|

||||

pipe = DiffusionPipeline.from_pretrained(

|

||||

"stabilityai/stable-diffusion-xl-base-1.0",

|

||||

variant="fp16",

|

||||

torch_dtype=torch.float16

|

||||

).to("cuda")

|

||||

pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

|

||||

pipe.load_lora_weights("latent-consistency/lcm-lora-sdxl")

|

||||

|

||||

prompt = "Self-portrait oil painting, a beautiful cyborg with golden hair, 8k"

|

||||

generator = torch.manual_seed(42)

|

||||

image = pipe(

|

||||

prompt=prompt, num_inference_steps=4, generator=generator, guidance_scale=1.0

|

||||

).images[0]

|

||||

image

|

||||

```

|

||||

|

||||

<div class="flex justify-center">

|

||||

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/lcm/lcm_sdxl_t2i.png"/>

|

||||

</div>

|

||||

|

||||

</hfoption>

|

||||

</hfoptions>

|

||||

|

||||

## Image-to-image

|

||||

|

||||

<hfoptions id="lcm-img2img">

|

||||

<hfoption id="LCM">

|

||||

|

||||

To use LCMs for image-to-image, you need to load the LCM checkpoint for your supported model into [`UNet2DConditionModel`] and replace the scheduler with the [`LCMScheduler`]. Then you can use the pipeline as usual, and pass a text prompt and initial image to generate an image in just 4 steps.

|

||||

|

||||

> [!TIP]

|

||||

> Experiment with different values for `num_inference_steps`, `strength`, and `guidance_scale` to get the best results.

|

||||

LCMs can be applied to image-to-image tasks too. For this example, we'll use the [LCM_Dreamshaper_v7](https://huggingface.co/SimianLuo/LCM_Dreamshaper_v7) model, but the same steps can be applied to other LCM models as well.

|

||||

|

||||

```python

|

||||

import torch

|

||||

from diffusers import AutoPipelineForImage2Image, UNet2DConditionModel, LCMScheduler

|

||||

from diffusers.utils import load_image

|

||||

from diffusers.utils import make_image_grid, load_image

|

||||

|

||||

unet = UNet2DConditionModel.from_pretrained(

|

||||

"SimianLuo/LCM_Dreamshaper_v7",

|

||||

@@ -128,8 +89,12 @@ pipe = AutoPipelineForImage2Image.from_pretrained(

|

||||

).to("cuda")

|

||||

pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

|

||||

|

||||

init_image = load_image("https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/img2img-init.png")

|

||||

# prepare image

|

||||

url = "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/img2img-init.png"

|

||||

init_image = load_image(url)

|

||||

prompt = "Astronauts in a jungle, cold color palette, muted colors, detailed, 8k"

|

||||

|

||||

# pass prompt and image to pipeline

|

||||

generator = torch.manual_seed(0)

|

||||

image = pipe(

|

||||

prompt,

|

||||

@@ -139,130 +104,22 @@ image = pipe(

|

||||

strength=0.5,

|

||||

generator=generator

|

||||

).images[0]

|

||||

image

|

||||

make_image_grid([init_image, image], rows=1, cols=2)

|

||||

```

|

||||

|

||||

<div class="flex gap-4">

|

||||

<div>

|

||||

<img class="rounded-xl" src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/img2img-init.png"/>

|

||||

<figcaption class="mt-2 text-center text-sm text-gray-500">initial image</figcaption>

|

||||

</div>

|

||||

<div>

|

||||

<img class="rounded-xl" src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/lcm-img2img.png"/>

|

||||

<figcaption class="mt-2 text-center text-sm text-gray-500">generated image</figcaption>

|

||||

</div>

|

||||

</div>

|

||||

|

||||

|

||||

</hfoption>

|

||||

<hfoption id="LCM-LoRA">

|

||||

|

||||

To use LCM-LoRAs for image-to-image, you need to replace the scheduler with the [`LCMScheduler`] and load the LCM-LoRA weights with the [`~loaders.LoraLoaderMixin.load_lora_weights`] method. Then you can use the pipeline as usual, and pass a text prompt and initial image to generate an image in just 4 steps.

|

||||

<Tip>

|

||||

|

||||

> [!TIP]

|

||||

> Experiment with different values for `num_inference_steps`, `strength`, and `guidance_scale` to get the best results.

|

||||

You can get different results based on your prompt and the image you provide. To get the best results, we recommend trying different values for `num_inference_steps`, `strength`, and `guidance_scale` parameters and choose the best one.

|

||||

|

||||

```py

|

||||

import torch

|

||||

from diffusers import AutoPipelineForImage2Image, LCMScheduler

|

||||

from diffusers.utils import make_image_grid, load_image

|

||||

</Tip>

|

||||

|

||||

pipe = AutoPipelineForImage2Image.from_pretrained(

|

||||

"Lykon/dreamshaper-7",

|

||||

torch_dtype=torch.float16,

|

||||

variant="fp16",

|

||||

).to("cuda")

|

||||

|

||||

pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

|

||||

## Combine with style LoRAs

|

||||

|

||||

pipe.load_lora_weights("latent-consistency/lcm-lora-sdv1-5")

|

||||

|

||||

init_image = load_image("https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/img2img-init.png")

|

||||

prompt = "Astronauts in a jungle, cold color palette, muted colors, detailed, 8k"

|

||||

|

||||

generator = torch.manual_seed(0)

|

||||

image = pipe(

|

||||

prompt,

|

||||

image=init_image,

|

||||

num_inference_steps=4,

|

||||

guidance_scale=1,

|

||||

strength=0.6,

|

||||

generator=generator

|

||||

).images[0]

|

||||

image

|

||||

```

|

||||

|

||||

<div class="flex gap-4">

|

||||

<div>

|

||||

<img class="rounded-xl" src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/img2img-init.png"/>

|

||||

<figcaption class="mt-2 text-center text-sm text-gray-500">initial image</figcaption>

|

||||

</div>

|

||||

<div>

|

||||

<img class="rounded-xl" src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/lcm-lora-img2img.png"/>

|

||||

<figcaption class="mt-2 text-center text-sm text-gray-500">generated image</figcaption>

|

||||

</div>

|

||||

</div>

|

||||

|

||||

</hfoption>

|

||||

</hfoptions>

|

||||

|

||||

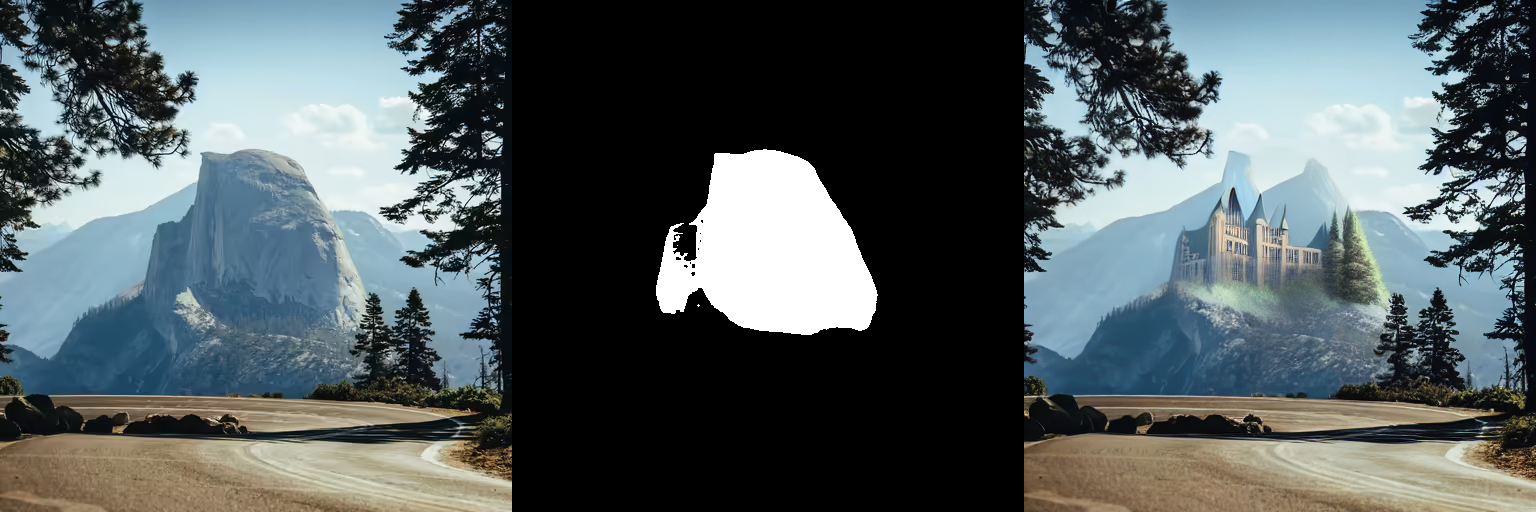

## Inpainting

|

||||

|

||||

To use LCM-LoRAs for inpainting, you need to replace the scheduler with the [`LCMScheduler`] and load the LCM-LoRA weights with the [`~loaders.LoraLoaderMixin.load_lora_weights`] method. Then you can use the pipeline as usual, and pass a text prompt, initial image, and mask image to generate an image in just 4 steps.

|

||||

|

||||

```py

|

||||

import torch

|

||||

from diffusers import AutoPipelineForInpainting, LCMScheduler

|

||||

from diffusers.utils import load_image, make_image_grid

|

||||

|

||||

pipe = AutoPipelineForInpainting.from_pretrained(

|

||||

"runwayml/stable-diffusion-inpainting",

|

||||

torch_dtype=torch.float16,

|

||||

variant="fp16",

|

||||

).to("cuda")

|

||||

|

||||

pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

|

||||

|

||||

pipe.load_lora_weights("latent-consistency/lcm-lora-sdv1-5")

|

||||

|

||||

init_image = load_image("https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/inpaint.png")

|

||||

mask_image = load_image("https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/inpaint_mask.png")

|

||||

|

||||

prompt = "concept art digital painting of an elven castle, inspired by lord of the rings, highly detailed, 8k"

|

||||

generator = torch.manual_seed(0)

|

||||

image = pipe(

|

||||

prompt=prompt,

|

||||

image=init_image,

|

||||

mask_image=mask_image,

|

||||

generator=generator,

|

||||

num_inference_steps=4,

|

||||

guidance_scale=4,

|

||||

).images[0]

|

||||

image

|

||||

```

|

||||

|

||||

<div class="flex gap-4">

|

||||

<div>

|

||||

<img class="rounded-xl" src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/inpaint.png"/>

|

||||

<figcaption class="mt-2 text-center text-sm text-gray-500">initial image</figcaption>

|

||||

</div>

|

||||

<div>

|

||||

<img class="rounded-xl" src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/lcm-lora-inpaint.png"/>

|

||||

<figcaption class="mt-2 text-center text-sm text-gray-500">generated image</figcaption>

|

||||

</div>

|

||||

</div>

|

||||

|

||||

## Adapters

|

||||

|

||||

LCMs are compatible with adapters like LoRA, ControlNet, T2I-Adapter, and AnimateDiff. You can bring the speed of LCMs to these adapters to generate images in a certain style or condition the model on another input like a canny image.

|

||||

|

||||

### LoRA

|

||||

|

||||

[LoRA](../using-diffusers/loading_adapters#lora) adapters can be rapidly finetuned to learn a new style from just a few images and plugged into a pretrained model to generate images in that style.

|

||||

|

||||

<hfoptions id="lcm-lora">

|

||||

<hfoption id="LCM">

|

||||

|

||||

Load the LCM checkpoint for your supported model into [`UNet2DConditionModel`] and replace the scheduler with the [`LCMScheduler`]. Then you can use the [`~loaders.LoraLoaderMixin.load_lora_weights`] method to load the LoRA weights into the LCM and generate a styled image in a few steps.

|

||||

LCMs can be used with other styled LoRAs to generate styled-images in very few steps (4-8). In the following example, we'll use the [papercut LoRA](TheLastBen/Papercut_SDXL).

|

||||

|

||||

```python

|

||||

from diffusers import StableDiffusionXLPipeline, UNet2DConditionModel, LCMScheduler

|

||||

@@ -277,9 +134,11 @@ pipe = StableDiffusionXLPipeline.from_pretrained(

|

||||

"stabilityai/stable-diffusion-xl-base-1.0", unet=unet, torch_dtype=torch.float16, variant="fp16",

|

||||

).to("cuda")

|

||||

pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

|

||||

|

||||

pipe.load_lora_weights("TheLastBen/Papercut_SDXL", weight_name="papercut.safetensors", adapter_name="papercut")

|

||||

|

||||

prompt = "papercut, a cute fox"

|

||||

|

||||

generator = torch.manual_seed(0)

|

||||

image = pipe(

|

||||

prompt=prompt, num_inference_steps=4, generator=generator, guidance_scale=8.0

|

||||

@@ -287,58 +146,15 @@ image = pipe(

|

||||

image

|

||||

```

|

||||

|

||||

<div class="flex justify-center">

|

||||

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/lcm/lcm_full_sdx_lora_mix.png"/>

|

||||

</div>

|

||||

|

||||

|

||||

</hfoption>

|

||||

<hfoption id="LCM-LoRA">

|

||||

|

||||

Replace the scheduler with the [`LCMScheduler`]. Then you can use the [`~loaders.LoraLoaderMixin.load_lora_weights`] method to load the LCM-LoRA weights and the style LoRA you want to use. Combine both LoRA adapters with the [`~loaders.UNet2DConditionLoadersMixin.set_adapters`] method and generate a styled image in a few steps.

|

||||

## ControlNet/T2I-Adapter

|

||||

|

||||

```py

|

||||

import torch

|

||||

from diffusers import DiffusionPipeline, LCMScheduler

|

||||

|

||||

pipe = DiffusionPipeline.from_pretrained(

|

||||

"stabilityai/stable-diffusion-xl-base-1.0",

|

||||

variant="fp16",

|

||||

torch_dtype=torch.float16

|

||||

).to("cuda")

|

||||

|

||||

pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

|

||||

|

||||

pipe.load_lora_weights("latent-consistency/lcm-lora-sdxl", adapter_name="lcm")

|

||||

pipe.load_lora_weights("TheLastBen/Papercut_SDXL", weight_name="papercut.safetensors", adapter_name="papercut")

|

||||

|

||||

pipe.set_adapters(["lcm", "papercut"], adapter_weights=[1.0, 0.8])

|

||||

|

||||

prompt = "papercut, a cute fox"

|

||||

generator = torch.manual_seed(0)

|

||||

image = pipe(prompt, num_inference_steps=4, guidance_scale=1, generator=generator).images[0]

|

||||

image

|

||||

```

|

||||

|

||||

<div class="flex justify-center">

|

||||

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/lcm/lcm_sdx_lora_mix.png"/>

|

||||

</div>

|

||||

|

||||

</hfoption>

|

||||

</hfoptions>

|

||||

Let's look at how we can perform inference with ControlNet/T2I-Adapter and a LCM.

|

||||

|

||||

### ControlNet

|

||||

|

||||

[ControlNet](./controlnet) are adapters that can be trained on a variety of inputs like canny edge, pose estimation, or depth. The ControlNet can be inserted into the pipeline to provide additional conditioning and control to the model for more accurate generation.

|

||||

|

||||

You can find additional ControlNet models trained on other inputs in [lllyasviel's](https://hf.co/lllyasviel) repository.

|

||||

|

||||

<hfoptions id="lcm-controlnet">

|

||||

<hfoption id="LCM">

|

||||

|

||||

Load a ControlNet model trained on canny images and pass it to the [`ControlNetModel`]. Then you can load a LCM model into [`StableDiffusionControlNetPipeline`] and replace the scheduler with the [`LCMScheduler`]. Now pass the canny image to the pipeline and generate an image.

|

||||

|

||||

> [!TIP]

|

||||

> Experiment with different values for `num_inference_steps`, `controlnet_conditioning_scale`, `cross_attention_kwargs`, and `guidance_scale` to get the best results.

|

||||

For this example, we'll use the [LCM_Dreamshaper_v7](https://huggingface.co/SimianLuo/LCM_Dreamshaper_v7) model with canny ControlNet, but the same steps can be applied to other LCM models as well.

|

||||

|

||||

```python

|

||||

import torch

|

||||

@@ -370,6 +186,8 @@ pipe = StableDiffusionControlNetPipeline.from_pretrained(

|

||||

torch_dtype=torch.float16,

|

||||

safety_checker=None,

|

||||

).to("cuda")

|

||||

|

||||

# set scheduler

|

||||

pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

|

||||

|

||||

generator = torch.manual_seed(0)

|

||||

@@ -382,84 +200,16 @@ image = pipe(

|

||||

make_image_grid([canny_image, image], rows=1, cols=2)

|

||||

```

|

||||

|

||||

<div class="flex justify-center">

|

||||

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/lcm/lcm_full_sdv1-5_controlnet.png"/>

|

||||

</div>

|

||||

|

||||

|

||||

</hfoption>

|

||||

<hfoption id="LCM-LoRA">

|

||||

|

||||

Load a ControlNet model trained on canny images and pass it to the [`ControlNetModel`]. Then you can load a Stable Diffusion v1.5 model into [`StableDiffusionControlNetPipeline`] and replace the scheduler with the [`LCMScheduler`]. Use the [`~loaders.LoraLoaderMixin.load_lora_weights`] method to load the LCM-LoRA weights, and pass the canny image to the pipeline and generate an image.

|

||||

|

||||

> [!TIP]

|

||||

> Experiment with different values for `num_inference_steps`, `controlnet_conditioning_scale`, `cross_attention_kwargs`, and `guidance_scale` to get the best results.

|

||||

|

||||

```py

|

||||

import torch

|

||||

import cv2

|

||||

import numpy as np

|

||||

from PIL import Image

|

||||

|

||||

from diffusers import StableDiffusionControlNetPipeline, ControlNetModel, LCMScheduler

|

||||

from diffusers.utils import load_image

|

||||

|

||||

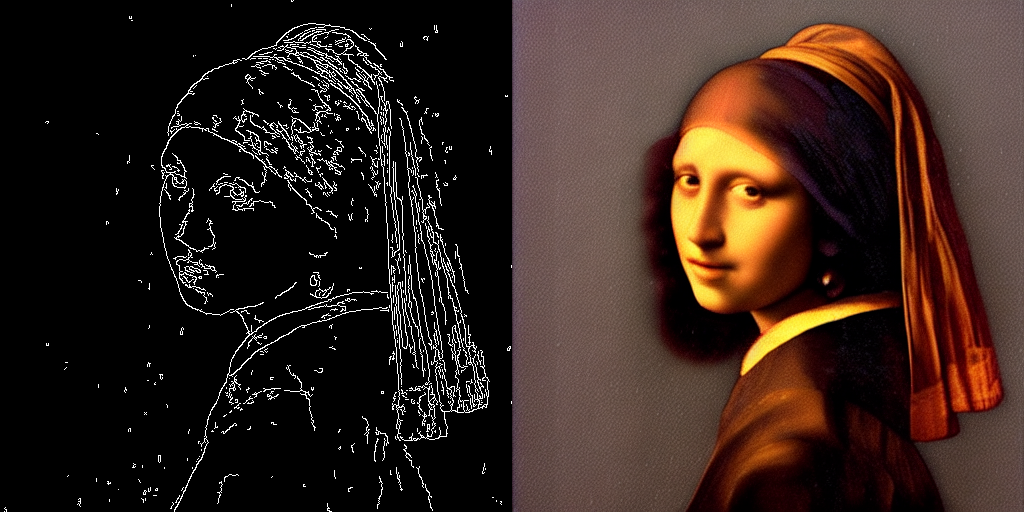

image = load_image(

|

||||

"https://hf.co/datasets/huggingface/documentation-images/resolve/main/diffusers/input_image_vermeer.png"

|

||||

).resize((512, 512))

|

||||

|

||||

image = np.array(image)

|

||||

|

||||

low_threshold = 100

|

||||

high_threshold = 200

|

||||

|

||||

image = cv2.Canny(image, low_threshold, high_threshold)

|

||||

image = image[:, :, None]

|

||||

image = np.concatenate([image, image, image], axis=2)

|

||||

canny_image = Image.fromarray(image)

|

||||

|

||||

controlnet = ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-canny", torch_dtype=torch.float16)

|

||||

pipe = StableDiffusionControlNetPipeline.from_pretrained(

|

||||

"runwayml/stable-diffusion-v1-5",

|

||||

controlnet=controlnet,

|

||||

torch_dtype=torch.float16,

|

||||

safety_checker=None,

|

||||

variant="fp16"

|

||||

).to("cuda")

|

||||

|

||||

pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

|

||||

|

||||

pipe.load_lora_weights("latent-consistency/lcm-lora-sdv1-5")

|

||||

|

||||

generator = torch.manual_seed(0)

|

||||

image = pipe(

|

||||

"the mona lisa",

|

||||

image=canny_image,

|

||||

num_inference_steps=4,

|

||||

guidance_scale=1.5,

|

||||

controlnet_conditioning_scale=0.8,

|

||||

cross_attention_kwargs={"scale": 1},

|

||||

generator=generator,

|

||||

).images[0]

|

||||

image

|

||||

```

|

||||

|

||||

<div class="flex justify-center">

|

||||

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/lcm/lcm_sdv1-5_controlnet.png"/>

|

||||

</div>

|

||||

|

||||

</hfoption>

|

||||

</hfoptions>

|

||||

<Tip>

|

||||

The inference parameters in this example might not work for all examples, so we recommend trying different values for the `num_inference_steps`, `guidance_scale`, `controlnet_conditioning_scale`, and `cross_attention_kwargs` parameters and choosing the best one.

|

||||

</Tip>

|

||||

|

||||

### T2I-Adapter

|

||||

|

||||

[T2I-Adapter](./t2i_adapter) is an even more lightweight adapter than ControlNet, that provides an additional input to condition a pretrained model with. It is faster than ControlNet but the results may be slightly worse.

|

||||

|

||||

You can find additional T2I-Adapter checkpoints trained on other inputs in [TencentArc's](https://hf.co/TencentARC) repository.

|

||||

|

||||

<hfoptions id="lcm-t2i">

|

||||

<hfoption id="LCM">

|

||||

|

||||

Load a T2IAdapter trained on canny images and pass it to the [`StableDiffusionXLAdapterPipeline`]. Then load a LCM checkpoint into [`UNet2DConditionModel`] and replace the scheduler with the [`LCMScheduler`]. Now pass the canny image to the pipeline and generate an image.

|

||||

This example shows how to use the `lcm-sdxl` with the [Canny T2I-Adapter](TencentARC/t2i-adapter-canny-sdxl-1.0).

|

||||

|

||||

```python

|

||||

import torch

|

||||

@@ -470,9 +220,10 @@ from PIL import Image

|

||||

from diffusers import StableDiffusionXLAdapterPipeline, UNet2DConditionModel, T2IAdapter, LCMScheduler

|

||||

from diffusers.utils import load_image, make_image_grid

|

||||

|

||||

# detect the canny map in low resolution to avoid high-frequency details

|

||||

# Prepare image

|

||||

# Detect the canny map in low resolution to avoid high-frequency details

|

||||

image = load_image(

|

||||

"https://hf.co/datasets/huggingface/documentation-images/resolve/main/diffusers/input_image_vermeer.png"

|

||||

"https://huggingface.co/Adapter/t2iadapter/resolve/main/figs_SDXLV1.0/org_canny.jpg"

|

||||

).resize((384, 384))

|

||||

|

||||

image = np.array(image)

|

||||

@@ -485,6 +236,7 @@ image = image[:, :, None]

|

||||

image = np.concatenate([image, image, image], axis=2)

|

||||

canny_image = Image.fromarray(image).resize((1024, 1216))

|

||||

|

||||

# load adapter

|

||||

adapter = T2IAdapter.from_pretrained("TencentARC/t2i-adapter-canny-sdxl-1.0", torch_dtype=torch.float16, varient="fp16").to("cuda")

|

||||

|

||||

unet = UNet2DConditionModel.from_pretrained(

|

||||

@@ -502,7 +254,7 @@ pipe = StableDiffusionXLAdapterPipeline.from_pretrained(

|

||||

|

||||

pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

|

||||

|

||||

prompt = "the mona lisa, 4k picture, high quality"

|

||||

prompt = "Mystical fairy in real, magic, 4k picture, high quality"

|

||||

negative_prompt = "extra digit, fewer digits, cropped, worst quality, low quality, glitch, deformed, mutated, ugly, disfigured"

|

||||

|

||||

generator = torch.manual_seed(0)

|

||||

@@ -516,116 +268,7 @@ image = pipe(

|

||||

adapter_conditioning_factor=1,

|

||||

generator=generator,

|

||||

).images[0]

|

||||

grid = make_image_grid([canny_image, image], rows=1, cols=2)

|

||||

```

|

||||

|

||||

<div class="flex justify-center">

|

||||

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/lcm-t2i.png"/>

|

||||

</div>

|

||||

|

||||

</hfoption>

|

||||

<hfoption id="LCM-LoRA">

|

||||

|

||||

Load a T2IAdapter trained on canny images and pass it to the [`StableDiffusionXLAdapterPipeline`]. Replace the scheduler with the [`LCMScheduler`], and use the [`~loaders.LoraLoaderMixin.load_lora_weights`] method to load the LCM-LoRA weights. Pass the canny image to the pipeline and generate an image.

|

||||

|

||||

```py

|

||||

import torch

|

||||

import cv2

|

||||

import numpy as np

|

||||

from PIL import Image

|

||||

|

||||

from diffusers import StableDiffusionXLAdapterPipeline, UNet2DConditionModel, T2IAdapter, LCMScheduler

|

||||

from diffusers.utils import load_image, make_image_grid

|

||||

|

||||

# detect the canny map in low resolution to avoid high-frequency details

|

||||

image = load_image(

|

||||

"https://hf.co/datasets/huggingface/documentation-images/resolve/main/diffusers/input_image_vermeer.png"

|

||||

).resize((384, 384))

|

||||

|

||||

image = np.array(image)

|

||||

|

||||

low_threshold = 100

|

||||

high_threshold = 200

|

||||

|

||||

image = cv2.Canny(image, low_threshold, high_threshold)

|

||||

image = image[:, :, None]

|

||||

image = np.concatenate([image, image, image], axis=2)

|

||||

canny_image = Image.fromarray(image).resize((1024, 1024))

|

||||

|

||||

adapter = T2IAdapter.from_pretrained("TencentARC/t2i-adapter-canny-sdxl-1.0", torch_dtype=torch.float16, varient="fp16").to("cuda")

|

||||

|

||||

pipe = StableDiffusionXLAdapterPipeline.from_pretrained(

|

||||

"stabilityai/stable-diffusion-xl-base-1.0",

|

||||

adapter=adapter,

|

||||

torch_dtype=torch.float16,

|

||||

variant="fp16",

|

||||

).to("cuda")

|

||||

|

||||

pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

|

||||

|

||||

pipe.load_lora_weights("latent-consistency/lcm-lora-sdxl")

|

||||

|

||||

prompt = "the mona lisa, 4k picture, high quality"

|

||||

negative_prompt = "extra digit, fewer digits, cropped, worst quality, low quality, glitch, deformed, mutated, ugly, disfigured"

|

||||

|

||||

generator = torch.manual_seed(0)

|

||||

image = pipe(

|

||||

prompt=prompt,

|

||||

negative_prompt=negative_prompt,

|

||||

image=canny_image,

|

||||

num_inference_steps=4,

|

||||

guidance_scale=1.5,

|

||||

adapter_conditioning_scale=0.8,

|

||||

adapter_conditioning_factor=1,

|

||||

generator=generator,

|

||||

).images[0]

|

||||

```

|

||||

|

||||

<div class="flex justify-center">

|

||||

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/lcm-lora-t2i.png"/>

|

||||

</div>

|

||||

|

||||

</hfoption>

|

||||

</hfoptions>

|

||||

|

||||

### AnimateDiff

|

||||

|

||||

[AnimateDiff](../api/pipelines/animatediff) is an adapter that adds motion to an image. It can be used with most Stable Diffusion models, effectively turning them into "video generation" models. Generating good results with a video model usually requires generating multiple frames (16-24), which can be very slow with a regular Stable Diffusion model. LCM-LoRA can speed up this process by only taking 4-8 steps for each frame.

|

||||

|

||||

Load a [`AnimateDiffPipeline`] and pass a [`MotionAdapter`] to it. Then replace the scheduler with the [`LCMScheduler`], and combine both LoRA adapters with the [`~loaders.UNet2DConditionLoadersMixin.set_adapters`] method. Now you can pass a prompt to the pipeline and generate an animated image.

|

||||

|

||||

```py

|

||||

import torch

|

||||

from diffusers import MotionAdapter, AnimateDiffPipeline, DDIMScheduler, LCMScheduler

|

||||

from diffusers.utils import export_to_gif

|

||||

|

||||

adapter = MotionAdapter.from_pretrained("guoyww/animatediff-motion-adapter-v1-5")

|

||||

pipe = AnimateDiffPipeline.from_pretrained(

|

||||

"frankjoshua/toonyou_beta6",

|

||||

motion_adapter=adapter,

|

||||

).to("cuda")

|

||||

|

||||

# set scheduler

|

||||

pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

|

||||

|

||||

# load LCM-LoRA

|

||||

pipe.load_lora_weights("latent-consistency/lcm-lora-sdv1-5", adapter_name="lcm")

|

||||

pipe.load_lora_weights("guoyww/animatediff-motion-lora-zoom-in", weight_name="diffusion_pytorch_model.safetensors", adapter_name="motion-lora")

|

||||

|

||||

pipe.set_adapters(["lcm", "motion-lora"], adapter_weights=[0.55, 1.2])

|

||||

|

||||

prompt = "best quality, masterpiece, 1girl, looking at viewer, blurry background, upper body, contemporary, dress"

|

||||

generator = torch.manual_seed(0)

|

||||

frames = pipe(

|

||||

prompt=prompt,

|

||||

num_inference_steps=5,

|

||||

guidance_scale=1.25,

|

||||

cross_attention_kwargs={"scale": 1},

|

||||

num_frames=24,

|

||||

generator=generator

|

||||

).frames[0]

|

||||

export_to_gif(frames, "animation.gif")

|

||||

```

|

||||

|

||||

<div class="flex justify-center">

|

||||

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/lcm-lora-animatediff.gif"/>

|

||||

</div>

|

||||

|

||||

|

||||

422

docs/source/en/using-diffusers/inference_with_lcm_lora.md

Normal file

422

docs/source/en/using-diffusers/inference_with_lcm_lora.md

Normal file

@@ -0,0 +1,422 @@

|

||||

<!--Copyright 2024 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

http://www.apache.org/licenses/LICENSE-2.0

|

||||

|

||||

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on

|

||||

an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the

|

||||

specific language governing permissions and limitations under the License.

|

||||

-->

|

||||

|

||||

[[open-in-colab]]

|

||||

|

||||

# Performing inference with LCM-LoRA

|

||||

|

||||

Latent Consistency Models (LCM) enable quality image generation in typically 2-4 steps making it possible to use diffusion models in almost real-time settings.

|

||||

|

||||

From the [official website](https://latent-consistency-models.github.io/):

|

||||

|

||||

> LCMs can be distilled from any pre-trained Stable Diffusion (SD) in only 4,000 training steps (~32 A100 GPU Hours) for generating high quality 768 x 768 resolution images in 2~4 steps or even one step, significantly accelerating text-to-image generation. We employ LCM to distill the Dreamshaper-V7 version of SD in just 4,000 training iterations.

|

||||

|

||||

For a more technical overview of LCMs, refer to [the paper](https://huggingface.co/papers/2310.04378).

|

||||

|

||||

However, each model needs to be distilled separately for latent consistency distillation. The core idea with LCM-LoRA is to train just a few adapter layers, the adapter being LoRA in this case.

|

||||

This way, we don't have to train the full model and keep the number of trainable parameters manageable. The resulting LoRAs can then be applied to any fine-tuned version of the model without distilling them separately.

|

||||

Additionally, the LoRAs can be applied to image-to-image, ControlNet/T2I-Adapter, inpainting, AnimateDiff etc.

|

||||

The LCM-LoRA can also be combined with other LoRAs to generate styled images in very few steps (4-8).

|

||||

|

||||

LCM-LoRAs are available for [stable-diffusion-v1-5](https://huggingface.co/runwayml/stable-diffusion-v1-5), [stable-diffusion-xl-base-1.0](https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0), and the [SSD-1B](https://huggingface.co/segmind/SSD-1B) model. All the checkpoints can be found in this [collection](https://huggingface.co/collections/latent-consistency/latent-consistency-models-loras-654cdd24e111e16f0865fba6).

|

||||

|

||||

For more details about LCM-LoRA, refer to [the technical report](https://huggingface.co/papers/2311.05556).

|

||||

|

||||

This guide shows how to perform inference with LCM-LoRAs for

|

||||

- text-to-image

|

||||

- image-to-image

|

||||

- combined with styled LoRAs

|

||||

- ControlNet/T2I-Adapter

|

||||

- inpainting

|

||||