mirror of

https://github.com/huggingface/diffusers.git

synced 2025-12-10 22:44:38 +08:00

Compare commits

14 Commits

controlnet

...

v0.19.3

| Author | SHA1 | Date | |
|---|---|---|---|
| | de9c72d58c | | |
| | 7b022df49c | | |
| | 965e52ce61 | | |
| | b1e52794a2 | | |
| | c3e3a1ee10 | | |
| | 9cde56a729 | | |
| | c63d7cdba0 | | |
| | aa4634a7fa | | |
| | 0709650e9d | | |
| | a9829164f4 | | |
| | 49c95178ad | | |
| | c2f755bc62 | | |
| | 2fb877b66c | | |
| | ef9824f9f7 | | |

57

.github/workflows/pr_tests.yml

vendored


@@ -113,60 +113,3 @@ jobs:

      with:
        name: pr_${{ matrix.config.report }}_test_reports
        path: reports

  run_staging_tests:
    strategy:
      fail-fast: false
      matrix:
        config:
          - name: Hub tests for models, schedulers, and pipelines
            framework: hub_tests_pytorch
            runner: docker-cpu
            image: diffusers/diffusers-pytorch-cpu
            report: torch_hub

    name: ${{ matrix.config.name }}

    runs-on: ${{ matrix.config.runner }}

    container:
      image: ${{ matrix.config.image }}
      options: --shm-size "16gb" --ipc host -v /mnt/hf_cache:/mnt/cache/

    defaults:
      run:
        shell: bash

    steps:
    - name: Checkout diffusers
      uses: actions/checkout@v3
      with:
        fetch-depth: 2

    - name: Install dependencies
      run: |
        apt-get update && apt-get install libsndfile1-dev libgl1 -y
        python -m pip install -e .[quality,test]

    - name: Environment
      run: |
        python utils/print_env.py

    - name: Run Hub tests for models, schedulers, and pipelines on a staging env
      if: ${{ matrix.config.framework == 'hub_tests_pytorch' }}
      run: |
        HUGGINGFACE_CO_STAGING=true python -m pytest \
          -m "is_staging_test" \
          --make-reports=tests_${{ matrix.config.report }} \
          tests

    - name: Failure short reports
      if: ${{ failure() }}
      run: cat reports/tests_${{ matrix.config.report }}_failures_short.txt

    - name: Test suite reports artifacts
      if: ${{ always() }}
      uses: actions/upload-artifact@v2
      with:
        name: pr_${{ matrix.config.report }}_test_reports
        path: reports

2

Makefile


@@ -78,7 +78,7 @@ test:

|

||||

# Run tests for examples

|

||||

|

||||

test-examples:

|

||||

python -m pytest -n auto --dist=loadfile -s -v ./examples/

|

||||

python -m pytest -n auto --dist=loadfile -s -v ./examples/pytorch/

|

||||

|

||||

|

||||

# Release stuff

|

||||

|

||||

@@ -90,7 +90,7 @@ The following design principles are followed:

|

||||

- To integrate new model checkpoints whose general architecture can be classified as an architecture that already exists in Diffusers, the existing model architecture shall be adapted to make it work with the new checkpoint. One should only create a new file if the model architecture is fundamentally different.

|

||||

- Models should be designed to be easily extendable to future changes. This can be achieved by limiting public function arguments, configuration arguments, and "foreseeing" future changes, *e.g.* it is usually better to add `string` "...type" arguments that can easily be extended to new future types instead of boolean `is_..._type` arguments. Only the minimum amount of changes shall be made to existing architectures to make a new model checkpoint work.

|

||||

- The model design is a difficult trade-off between keeping code readable and concise and supporting many model checkpoints. For most parts of the modeling code, classes shall be adapted for new model checkpoints, while there are some exceptions where it is preferred to add new classes to make sure the code is kept concise and

|

||||

readable longterm, such as [UNet blocks](https://github.com/huggingface/diffusers/blob/main/src/diffusers/models/unet_2d_blocks.py) and [Attention processors](https://github.com/huggingface/diffusers/blob/main/src/diffusers/models/attention_processor.py).

|

||||

readable longterm, such as [UNet blocks](https://github.com/huggingface/diffusers/blob/main/src/diffusers/models/unet_2d_blocks.py) and [Attention processors](https://github.com/huggingface/diffusers/blob/main/src/diffusers/models/cross_attention.py).
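
The guideline above about preferring string "...type" arguments over boolean `is_..._type` flags can be illustrated with a small sketch. Everything here (`ToyBlockConfig`, `norm_type`, and the option names) is hypothetical and exists only for this example; it is not part of the Diffusers API.

```python
from dataclasses import dataclass

# Hypothetical option names, used only for illustration.
SUPPORTED_NORM_TYPES = ("layer_norm", "group_norm")


@dataclass
class ToyBlockConfig:
    # A string argument can accept new values later without changing the
    # signature; a boolean flag such as `is_group_norm` only has two states.
    norm_type: str = "layer_norm"

    def __post_init__(self) -> None:
        # Reject values that the (hypothetical) block does not know about.
        if self.norm_type not in SUPPORTED_NORM_TYPES:
            raise ValueError(
                f"Unknown norm_type {self.norm_type!r}; expected one of {SUPPORTED_NORM_TYPES}"
            )


config = ToyBlockConfig(norm_type="group_norm")
```

Supporting a third variant in this sketch would only mean adding one entry to `SUPPORTED_NORM_TYPES`, whereas a boolean flag would need a second flag or a signature change.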

|

||||

|

||||

### Schedulers

|

||||

|

||||

|

||||

@@ -1,6 +1,6 @@

|

||||

<p align="center">

|

||||

<br>

|

||||

<img src="https://raw.githubusercontent.com/huggingface/diffusers/main/docs/source/en/imgs/diffusers_library.jpg" width="400"/>

|

||||

<img src="https://github.com/huggingface/diffusers/blob/main/docs/source/en/imgs/diffusers_library.jpg" width="400"/>

|

||||

<br>

|

||||

<p>

|

||||

<p align="center">

|

||||

|

||||

@@ -13,8 +13,6 @@

|

||||

title: Overview

|

||||

- local: using-diffusers/write_own_pipeline

|

||||

title: Understanding models and schedulers

|

||||

- local: tutorials/autopipeline

|

||||

title: AutoPipeline

|

||||

- local: tutorials/basic_training

|

||||

title: Train a diffusion model

|

||||

title: Tutorials

|

||||

@@ -32,22 +30,20 @@

|

||||

title: Load safetensors

|

||||

- local: using-diffusers/other-formats

|

||||

title: Load different Stable Diffusion formats

|

||||

- local: using-diffusers/push_to_hub

|

||||

title: Push files to the Hub

|

||||

title: Loading & Hub

|

||||

- sections:

|

||||

- local: using-diffusers/pipeline_overview

|

||||

title: Overview

|

||||

- local: using-diffusers/unconditional_image_generation

|

||||

title: Unconditional image generation

|

||||

- local: using-diffusers/conditional_image_generation

|

||||

title: Text-to-image

|

||||

title: Text-to-image generation

|

||||

- local: using-diffusers/img2img

|

||||

title: Image-to-image

|

||||

title: Text-guided image-to-image

|

||||

- local: using-diffusers/inpaint

|

||||

title: Inpainting

|

||||

title: Text-guided image-inpainting

|

||||

- local: using-diffusers/depth2img

|

||||

title: Depth-to-image

|

||||

title: Tasks

|

||||

- sections:

|

||||

title: Text-guided depth-to-image

|

||||

- local: using-diffusers/textual_inversion_inference

|

||||

title: Textual inversion

|

||||

- local: training/distributed_inference

|

||||

@@ -56,28 +52,16 @@

|

||||

title: Improve image quality with deterministic generation

|

||||

- local: using-diffusers/control_brightness

|

||||

title: Control image brightness

|

||||

- local: using-diffusers/weighted_prompts

|

||||

title: Prompt weighting

|

||||

title: Techniques

|

||||

- sections:

|

||||

- local: using-diffusers/pipeline_overview

|

||||

title: Overview

|

||||

- local: using-diffusers/sdxl

|

||||

title: Stable Diffusion XL

|

||||

- local: using-diffusers/controlnet

|

||||

title: ControlNet

|

||||

- local: using-diffusers/shap-e

|

||||

title: Shap-E

|

||||

- local: using-diffusers/diffedit

|

||||

title: DiffEdit

|

||||

- local: using-diffusers/distilled_sd

|

||||

title: Distilled Stable Diffusion inference

|

||||

- local: using-diffusers/reproducibility

|

||||

title: Create reproducible pipelines

|

||||

- local: using-diffusers/custom_pipeline_examples

|

||||

title: Community pipelines

|

||||

- local: using-diffusers/contribute_pipeline

|

||||

title: How to contribute a community pipeline

|

||||

- local: using-diffusers/stable_diffusion_jax_how_to

|

||||

title: Stable Diffusion in JAX/Flax

|

||||

- local: using-diffusers/weighted_prompts

|

||||

title: Weighting Prompts

|

||||

title: Pipelines for Inference

|

||||

- sections:

|

||||

- local: training/overview

|

||||

@@ -115,8 +99,6 @@

|

||||

title: Memory and Speed

|

||||

- local: optimization/torch2.0

|

||||

title: Torch2.0 support

|

||||

- local: using-diffusers/stable_diffusion_jax_how_to

|

||||

title: Stable Diffusion in JAX/Flax

|

||||

- local: optimization/xformers

|

||||

title: xFormers

|

||||

- local: optimization/onnx

|

||||

@@ -180,8 +162,6 @@

|

||||

title: AutoencoderKL

|

||||

- local: api/models/asymmetricautoencoderkl

|

||||

title: AsymmetricAutoencoderKL

|

||||

- local: api/models/autoencoder_tiny

|

||||

title: Tiny AutoEncoder

|

||||

- local: api/models/transformer2d

|

||||

title: Transformer2D

|

||||

- local: api/models/transformer_temporal

|

||||

@@ -202,16 +182,12 @@

|

||||

title: Audio Diffusion

|

||||

- local: api/pipelines/audioldm

|

||||

title: AudioLDM

|

||||

- local: api/pipelines/audioldm2

|

||||

title: AudioLDM 2

|

||||

- local: api/pipelines/auto_pipeline

|

||||

title: AutoPipeline

|

||||

- local: api/pipelines/consistency_models

|

||||

title: Consistency Models

|

||||

- local: api/pipelines/controlnet

|

||||

title: ControlNet

|

||||

- local: api/pipelines/controlnet_sdxl

|

||||

title: ControlNet with Stable Diffusion XL

|

||||

- local: api/pipelines/cycle_diffusion

|

||||

title: Cycle Diffusion

|

||||

- local: api/pipelines/dance_diffusion

|

||||

@@ -236,8 +212,6 @@

|

||||

title: Latent Diffusion

|

||||

- local: api/pipelines/panorama

|

||||

title: MultiDiffusion

|

||||

- local: api/pipelines/musicldm

|

||||

title: MusicLDM

|

||||

- local: api/pipelines/paint_by_example

|

||||

title: PaintByExample

|

||||

- local: api/pipelines/paradigms

|

||||

@@ -285,8 +259,6 @@

|

||||

title: LDM3D Text-to-(RGB, Depth)

|

||||

- local: api/pipelines/stable_diffusion/adapter

|

||||

title: Stable Diffusion T2I-adapter

|

||||

- local: api/pipelines/stable_diffusion/gligen

|

||||

title: GLIGEN (Grounded Language-to-Image Generation)

|

||||

title: Stable Diffusion

|

||||

- local: api/pipelines/stable_unclip

|

||||

title: Stable unCLIP

|

||||

@@ -315,49 +287,49 @@

|

||||

- local: api/schedulers/overview

|

||||

title: Overview

|

||||

- local: api/schedulers/cm_stochastic_iterative

|

||||

title: CMStochasticIterativeScheduler

|

||||

- local: api/schedulers/ddim_inverse

|

||||

title: DDIMInverseScheduler

|

||||

title: Consistency Model Multistep Scheduler

|

||||

- local: api/schedulers/ddim

|

||||

title: DDIMScheduler

|

||||

title: DDIM

|

||||

- local: api/schedulers/ddim_inverse

|

||||

title: DDIMInverse

|

||||

- local: api/schedulers/ddpm

|

||||

title: DDPMScheduler

|

||||

title: DDPM

|

||||

- local: api/schedulers/deis

|

||||

title: DEISMultistepScheduler

|

||||

- local: api/schedulers/multistep_dpm_solver_inverse

|

||||

title: DPMSolverMultistepInverse

|

||||

- local: api/schedulers/multistep_dpm_solver

|

||||

title: DPMSolverMultistepScheduler

|

||||

title: DEIS

|

||||

- local: api/schedulers/dpm_discrete

|

||||

title: DPM Discrete Scheduler

|

||||

- local: api/schedulers/dpm_discrete_ancestral

|

||||

title: DPM Discrete Scheduler with ancestral sampling

|

||||

- local: api/schedulers/dpm_sde

|

||||

title: DPMSolverSDEScheduler

|

||||

- local: api/schedulers/singlestep_dpm_solver

|

||||

title: DPMSolverSinglestepScheduler

|

||||

- local: api/schedulers/euler_ancestral

|

||||

title: EulerAncestralDiscreteScheduler

|

||||

title: Euler Ancestral Scheduler

|

||||

- local: api/schedulers/euler

|

||||

title: EulerDiscreteScheduler

|

||||

title: Euler scheduler

|

||||

- local: api/schedulers/heun

|

||||

title: HeunDiscreteScheduler

|

||||

title: Heun Scheduler

|

||||

- local: api/schedulers/multistep_dpm_solver_inverse

|

||||

title: Inverse Multistep DPM-Solver

|

||||

- local: api/schedulers/ipndm

|

||||

title: IPNDMScheduler

|

||||

- local: api/schedulers/stochastic_karras_ve

|

||||

title: KarrasVeScheduler

|

||||

- local: api/schedulers/dpm_discrete_ancestral

|

||||

title: KDPM2AncestralDiscreteScheduler

|

||||

- local: api/schedulers/dpm_discrete

|

||||

title: KDPM2DiscreteScheduler

|

||||

title: IPNDM

|

||||

- local: api/schedulers/lms_discrete

|

||||

title: LMSDiscreteScheduler

|

||||

title: Linear Multistep

|

||||

- local: api/schedulers/multistep_dpm_solver

|

||||

title: Multistep DPM-Solver

|

||||

- local: api/schedulers/pndm

|

||||

title: PNDMScheduler

|

||||

title: PNDM

|

||||

- local: api/schedulers/repaint

|

||||

title: RePaintScheduler

|

||||

- local: api/schedulers/score_sde_ve

|

||||

title: ScoreSdeVeScheduler

|

||||

- local: api/schedulers/score_sde_vp

|

||||

title: ScoreSdeVpScheduler

|

||||

title: RePaint Scheduler

|

||||

- local: api/schedulers/singlestep_dpm_solver

|

||||

title: Singlestep DPM-Solver

|

||||

- local: api/schedulers/stochastic_karras_ve

|

||||

title: Stochastic Karras VE

|

||||

- local: api/schedulers/unipc

|

||||

title: UniPCMultistepScheduler

|

||||

- local: api/schedulers/score_sde_ve

|

||||

title: VE-SDE

|

||||

- local: api/schedulers/score_sde_vp

|

||||

title: VP-SDE

|

||||

- local: api/schedulers/vq_diffusion

|

||||

title: VQDiffusionScheduler

|

||||

title: Schedulers

|

||||

|

||||

@@ -1,45 +0,0 @@

# Tiny AutoEncoder

Tiny AutoEncoder for Stable Diffusion (TAESD) was introduced in [madebyollin/taesd](https://github.com/madebyollin/taesd) by Ollin Boer Bohan. It is a tiny distilled version of Stable Diffusion's VAE that can decode the latents in a [`StableDiffusionPipeline`] or [`StableDiffusionXLPipeline`] almost instantly.

To use with Stable Diffusion v-2.1:

```python
import torch
from diffusers import DiffusionPipeline, AutoencoderTiny

pipe = DiffusionPipeline.from_pretrained(
    "stabilityai/stable-diffusion-2-1-base", torch_dtype=torch.float16
)
pipe.vae = AutoencoderTiny.from_pretrained("madebyollin/taesd", torch_dtype=torch.float16)
pipe = pipe.to("cuda")

prompt = "slice of delicious New York-style berry cheesecake"
image = pipe(prompt, num_inference_steps=25).images[0]
image.save("cheesecake.png")
```

To use with Stable Diffusion XL 1.0:

```python
import torch
from diffusers import DiffusionPipeline, AutoencoderTiny

pipe = DiffusionPipeline.from_pretrained(
    "stabilityai/stable-diffusion-xl-base-1.0", torch_dtype=torch.float16
)
pipe.vae = AutoencoderTiny.from_pretrained("madebyollin/taesdxl", torch_dtype=torch.float16)
pipe = pipe.to("cuda")

prompt = "slice of delicious New York-style berry cheesecake"
image = pipe(prompt, num_inference_steps=25).images[0]
image.save("cheesecake_sdxl.png")
```

## AutoencoderTiny

[[autodoc]] AutoencoderTiny

## AutoencoderTinyOutput

[[autodoc]] models.autoencoder_tiny.AutoencoderTinyOutput

@@ -9,8 +9,4 @@ All models are built from the base [`ModelMixin`] class which is a [`torch.nn.mo

|

||||

|

||||

## FlaxModelMixin

|

||||

|

||||

[[autodoc]] FlaxModelMixin

|

||||

|

||||

## PushToHubMixin

|

||||

|

||||

[[autodoc]] utils.PushToHubMixin

|

||||

[[autodoc]] FlaxModelMixin

|

||||

@@ -46,5 +46,6 @@ Make sure to check out the Schedulers [guide](/using-diffusers/schedulers) to le

|

||||

- all

|

||||

- __call__

|

||||

|

||||

## AudioPipelineOutput

|

||||

[[autodoc]] pipelines.AudioPipelineOutput

|

||||

## StableDiffusionPipelineOutput

|

||||

|

||||

[[autodoc]] pipelines.stable_diffusion.StableDiffusionPipelineOutput

|

||||

@@ -1,93 +0,0 @@

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

http://www.apache.org/licenses/LICENSE-2.0

|

||||

|

||||

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on

|

||||

an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the

|

||||

specific language governing permissions and limitations under the License.

|

||||

-->

|

||||

|

||||

# AudioLDM 2

|

||||

|

||||

AudioLDM 2 was proposed in [AudioLDM 2: Learning Holistic Audio Generation with Self-supervised Pretraining](https://arxiv.org/abs/2308.05734)

|

||||

by Haohe Liu et al. AudioLDM 2 takes a text prompt as input and predicts the corresponding audio. It can generate

|

||||

text-conditional sound effects, human speech and music.

|

||||

|

||||

Inspired by [Stable Diffusion](https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion/overview), AudioLDM 2

|

||||

is a text-to-audio _latent diffusion model (LDM)_ that learns continuous audio representations from text embeddings. Two

|

||||

text encoder models are used to compute the text embeddings from a prompt input: the text-branch of [CLAP](https://huggingface.co/docs/transformers/main/en/model_doc/clap)

|

||||

and the encoder of [Flan-T5](https://huggingface.co/docs/transformers/main/en/model_doc/flan-t5). These text embeddings

|

||||

are then projected to a shared embedding space by an [AudioLDM2ProjectionModel](https://huggingface.co/docs/diffusers/main/api/pipelines/audioldm2#diffusers.AudioLDM2ProjectionModel).

|

||||

A [GPT2](https://huggingface.co/docs/transformers/main/en/model_doc/gpt2) _language model (LM)_ is used to auto-regressively

|

||||

predict eight new embedding vectors, conditional on the projected CLAP and Flan-T5 embeddings. The generated embedding

|

||||

vectors and Flan-T5 text embeddings are used as cross-attention conditioning in the LDM. The [UNet](https://huggingface.co/docs/diffusers/main/en/api/pipelines/audioldm2#diffusers.AudioLDM2UNet2DConditionModel)

|

||||

of AudioLDM 2 is unique in the sense that it takes **two** cross-attention embeddings, as opposed to one cross-attention

|

||||

conditioning, as in most other LDMs.

|

||||

|

||||

The abstract of the paper is the following:

|

||||

|

||||

*Although audio generation shares commonalities across different types of audio, such as speech, music, and sound effects, designing models for each type requires careful consideration of specific objectives and biases that can significantly differ from those of other types. To bring us closer to a unified perspective of audio generation, this paper proposes a framework that utilizes the same learning method for speech, music, and sound effect generation. Our framework introduces a general representation of audio, called language of audio (LOA). Any audio can be translated into LOA based on AudioMAE, a self-supervised pre-trained representation learning model. In the generation process, we translate any modalities into LOA by using a GPT-2 model, and we perform self-supervised audio generation learning with a latent diffusion model conditioned on LOA. The proposed framework naturally brings advantages such as in-context learning abilities and reusable self-supervised pretrained AudioMAE and latent diffusion models. Experiments on the major benchmarks of text-to-audio, text-to-music, and text-to-speech demonstrate new state-of-the-art or competitive performance to previous approaches.*

|

||||

|

||||

This pipeline was contributed by [sanchit-gandhi](https://huggingface.co/sanchit-gandhi). The original codebase can be

|

||||

found at [haoheliu/audioldm2](https://github.com/haoheliu/audioldm2).

|

||||

|

||||

## Tips

|

||||

|

||||

### Choosing a checkpoint

|

||||

|

||||

AudioLDM2 comes in three variants. Two of these checkpoints are applicable to the general task of text-to-audio

|

||||

generation. The third checkpoint is trained exclusively on text-to-music generation.

|

||||

|

||||

All checkpoints share the same model size for the text encoders and VAE. They differ in the size and depth of the UNet.

|

||||

See table below for details on the three checkpoints:

|

||||

|

||||

| Checkpoint | Task | UNet Model Size | Total Model Size | Training Data / h |

|

||||

|-----------------------------------------------------------------|---------------|-----------------|------------------|-------------------|

|

||||

| [audioldm2](https://huggingface.co/cvssp/audioldm2) | Text-to-audio | 350M | 1.1B | 1150k |

|

||||

| [audioldm2-large](https://huggingface.co/cvssp/audioldm2-large) | Text-to-audio | 750M | 1.5B | 1150k |

|

||||

| [audioldm2-music](https://huggingface.co/cvssp/audioldm2-music) | Text-to-music | 350M | 1.1B | 665k |

|

||||

|

||||

### Constructing a prompt

|

||||

|

||||

* Descriptive prompt inputs work best: use adjectives to describe the sound (e.g. "high quality" or "clear") and make the prompt context specific (e.g. "water stream in a forest" instead of "stream").

|

||||

* It's best to use general terms like "cat" or "dog" instead of specific names or abstract objects the model may not be familiar with.

|

||||

* Using a **negative prompt** can significantly improve the quality of the generated waveform, by guiding the generation away from terms that correspond to poor quality audio. Try using a negative prompt of "Low quality."

|

||||

|

||||

### Controlling inference

|

||||

|

||||

* The _quality_ of the predicted audio sample can be controlled by the `num_inference_steps` argument; higher steps give higher quality audio at the expense of slower inference.

|

||||

* The _length_ of the predicted audio sample can be controlled by varying the `audio_length_in_s` argument.

|

||||

|

||||

### Evaluating generated waveforms

|

||||

|

||||

* The quality of the generated waveforms can vary significantly based on the seed. Try generating with different seeds until you find a satisfactory generation.

|

||||

* Multiple waveforms can be generated in one go: set `num_waveforms_per_prompt` to a value greater than 1. Automatic scoring will be performed between the generated waveforms and prompt text, and the audios ranked from best to worst accordingly.
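
The ranking step described above can be sketched as follows. This is a minimal illustration that assumes the score is a cosine similarity between one text embedding and one embedding per generated waveform; the function name `rank_by_similarity` and the toy embeddings are hypothetical, and the scoring model the pipeline actually uses is not shown in this section.

```python
import numpy as np


def rank_by_similarity(text_embedding: np.ndarray, audio_embeddings: np.ndarray):
    """Return waveform indices sorted from best to worst match, with their scores."""
    # Normalize so that the dot product equals the cosine similarity.
    text = text_embedding / np.linalg.norm(text_embedding)
    audio = audio_embeddings / np.linalg.norm(audio_embeddings, axis=1, keepdims=True)
    scores = audio @ text
    # Sort in descending order of similarity.
    order = np.argsort(-scores)
    return order, scores[order]


# Toy data: one 2-D text embedding and three 2-D waveform embeddings.
text_embedding = np.array([1.0, 0.0])
audio_embeddings = np.array([[0.0, 1.0], [1.0, 0.0], [1.0, 1.0]])
order, sorted_scores = rank_by_similarity(text_embedding, audio_embeddings)
```

With real data, the embeddings would come from a joint text-audio model, and `order[0]` would index the waveform that best matches the prompt.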

|

||||

|

||||

The following example demonstrates how to construct good music generation using the aforementioned tips: [example](https://huggingface.co/docs/diffusers/main/en/api/pipelines/audioldm2#diffusers.AudioLDM2Pipeline.__call__.example).

|

||||

|

||||

<Tip>

|

||||

|

||||

Make sure to check out the Schedulers [guide](/using-diffusers/schedulers) to learn how to explore the tradeoff between

|

||||

scheduler speed and quality, and see the [reuse components across pipelines](/using-diffusers/loading#reuse-components-across-pipelines)

|

||||

section to learn how to efficiently load the same components into multiple pipelines.

|

||||

|

||||

</Tip>

|

||||

|

||||

## AudioLDM2Pipeline

|

||||

[[autodoc]] AudioLDM2Pipeline

|

||||

- all

|

||||

- __call__

|

||||

|

||||

## AudioLDM2ProjectionModel

|

||||

[[autodoc]] AudioLDM2ProjectionModel

|

||||

- forward

|

||||

|

||||

## AudioLDM2UNet2DConditionModel

|

||||

[[autodoc]] AudioLDM2UNet2DConditionModel

|

||||

- forward

|

||||

|

||||

## AudioPipelineOutput

|

||||

[[autodoc]] pipelines.AudioPipelineOutput

|

||||

@@ -12,41 +12,35 @@ specific language governing permissions and limitations under the License.

|

||||

|

||||

# AutoPipeline

|

||||

|

||||

`AutoPipeline` is designed to:

|

||||

In many cases, one checkpoint can be used for multiple tasks. For example, you may be able to use the same checkpoint for Text-to-Image, Image-to-Image, and Inpainting. However, you'll need to know the pipeline class names linked to your checkpoint.

|

||||

|

||||

1. make it easy for you to load a checkpoint for a task without knowing the specific pipeline class to use

|

||||

2. use multiple pipelines in your workflow

|

||||

AutoPipeline is designed to make it easy for you to use multiple pipelines in your workflow. We currently provide 3 AutoPipeline classes to perform three different tasks, i.e. [`AutoPipelineForText2Image`], [`AutoPipelineForImage2Image`], and [`AutoPipelineForInpainting`]. You'll need to choose the AutoPipeline class based on the task you want to perform and use it to automatically retrieve the relevant pipeline given the name/path to the pre-trained weights.

|

||||

|

||||

Based on the task, the `AutoPipeline` class automatically retrieves the relevant pipeline given the name or path to the pretrained weights with the `from_pretrained()` method.

|

||||

For example, to perform Image-to-Image with the SD1.5 checkpoint, you can do

|

||||

|

||||

To seamlessly switch between tasks with the same checkpoint without reallocating additional memory, use the `from_pipe()` method to transfer the components from the original pipeline to the new one.

|

||||

```python

|

||||

from diffusers import AutoPipelineForImage2Image

|

||||

|

||||

```py

|

||||

from diffusers import AutoPipelineForText2Image

|

||||

import torch

|

||||

|

||||

pipeline = AutoPipelineForText2Image.from_pretrained(

|

||||

"runwayml/stable-diffusion-v1-5", torch_dtype=torch.float16, use_safetensors=True

|

||||

).to("cuda")

|

||||

prompt = "Astronaut in a jungle, cold color palette, muted colors, detailed, 8k"

|

||||

|

||||

image = pipeline(prompt, num_inference_steps=25).images[0]

|

||||

pipe_i2i = AutoPipelineForImage2Image.from_pretrained("runwayml/stable-diffusion-v1-5")

|

||||

```

|

||||

|

||||

<Tip>

|

||||

It will also help you switch between tasks seamlessly using the same checkpoint without reallocating additional memory. For example, to re-use the Image-to-Image pipeline we just created for inpainting, you can do

|

||||

|

||||

Check out the [AutoPipeline](/tutorials/autopipeline) tutorial to learn how to use this API!

|

||||

```python

|

||||

from diffusers import AutoPipelineForInpainting

|

||||

|

||||

</Tip>

|

||||

pipe_inpaint = AutoPipelineForInpainting.from_pipe(pipe_i2i)

|

||||

```

|

||||

All the components will be transferred to the inpainting pipeline with zero cost.

|

||||

|

||||

`AutoPipeline` supports text-to-image, image-to-image, and inpainting for the following diffusion models:

|

||||

|

||||

- [Stable Diffusion](./stable_diffusion)

|

||||

- [ControlNet](./api/pipelines/controlnet)

|

||||

- [Stable Diffusion XL (SDXL)](./stable_diffusion/stable_diffusion_xl)

|

||||

- [DeepFloyd IF](./if)

|

||||

Currently, AutoPipeline supports the Text-to-Image, Image-to-Image, and Inpainting tasks for the following diffusion models:

|

||||

- [Stable Diffusion](./stable_diffusion)

|

||||

- [Stable Diffusion Controlnet](./api/pipelines/controlnet)

|

||||

- [Stable Diffusion XL](./stable_diffusion/stable_diffusion_xl)

|

||||

- [IF](./if)

|

||||

- [Kandinsky](./kandinsky)

|

||||

- [Kandinsky 2.2](./kandinsky#kandinsky-22)

|

||||

- [Kandinsky 2.2](./kandinsky)

|

||||

|

||||

|

||||

## AutoPipelineForText2Image

|

||||

|

||||

@@ -12,9 +12,9 @@ specific language governing permissions and limitations under the License.

|

||||

|

||||

# ControlNet

|

||||

|

||||

ControlNet was introduced in [Adding Conditional Control to Text-to-Image Diffusion Models](https://huggingface.co/papers/2302.05543) by Lvmin Zhang and Maneesh Agrawala.

|

||||

[Adding Conditional Control to Text-to-Image Diffusion Models](https://huggingface.co/papers/2302.05543) by Lvmin Zhang and Maneesh Agrawala.

|

||||

|

||||

With a ControlNet model, you can provide an additional control image to condition and control Stable Diffusion generation. For example, if you provide a depth map, the ControlNet model generates an image that'll preserve the spatial information from the depth map. It is a more flexible and accurate way to control the image generation process.

|

||||

Using a pretrained model, we can provide control images (for example, a depth map) to control Stable Diffusion text-to-image generation so that it follows the structure of the depth image and fills in the details.

|

||||

|

||||

The abstract from the paper is:

|

||||

|

||||

@@ -22,13 +22,290 @@ The abstract from the paper is:

|

||||

|

||||

This model was contributed by [takuma104](https://huggingface.co/takuma104). ❤️

|

||||

|

||||

The original codebase can be found at [lllyasviel/ControlNet](https://github.com/lllyasviel/ControlNet), and you can find official ControlNet checkpoints on [lllyasviel's](https://huggingface.co/lllyasviel) Hub profile.

|

||||

The original codebase can be found at [lllyasviel/ControlNet](https://github.com/lllyasviel/ControlNet).

|

||||

|

||||

<Tip>

|

||||

## Usage example

|

||||

|

||||

Make sure to check out the Schedulers [guide](/using-diffusers/schedulers) to learn how to explore the tradeoff between scheduler speed and quality, and see the [reuse components across pipelines](/using-diffusers/loading#reuse-components-across-pipelines) section to learn how to efficiently load the same components into multiple pipelines.

|

||||

In the following we give a simple example of how to use a *ControlNet* checkpoint with Diffusers for inference.

|

||||

The inference pipeline is the same for all pipelines:

|

||||

|

||||

</Tip>

|

||||

* 1. Take an image and run it through a pre-conditioning processor.

|

||||

* 2. Run the pre-processed image through the [`StableDiffusionControlNetPipeline`].

|

||||

|

||||

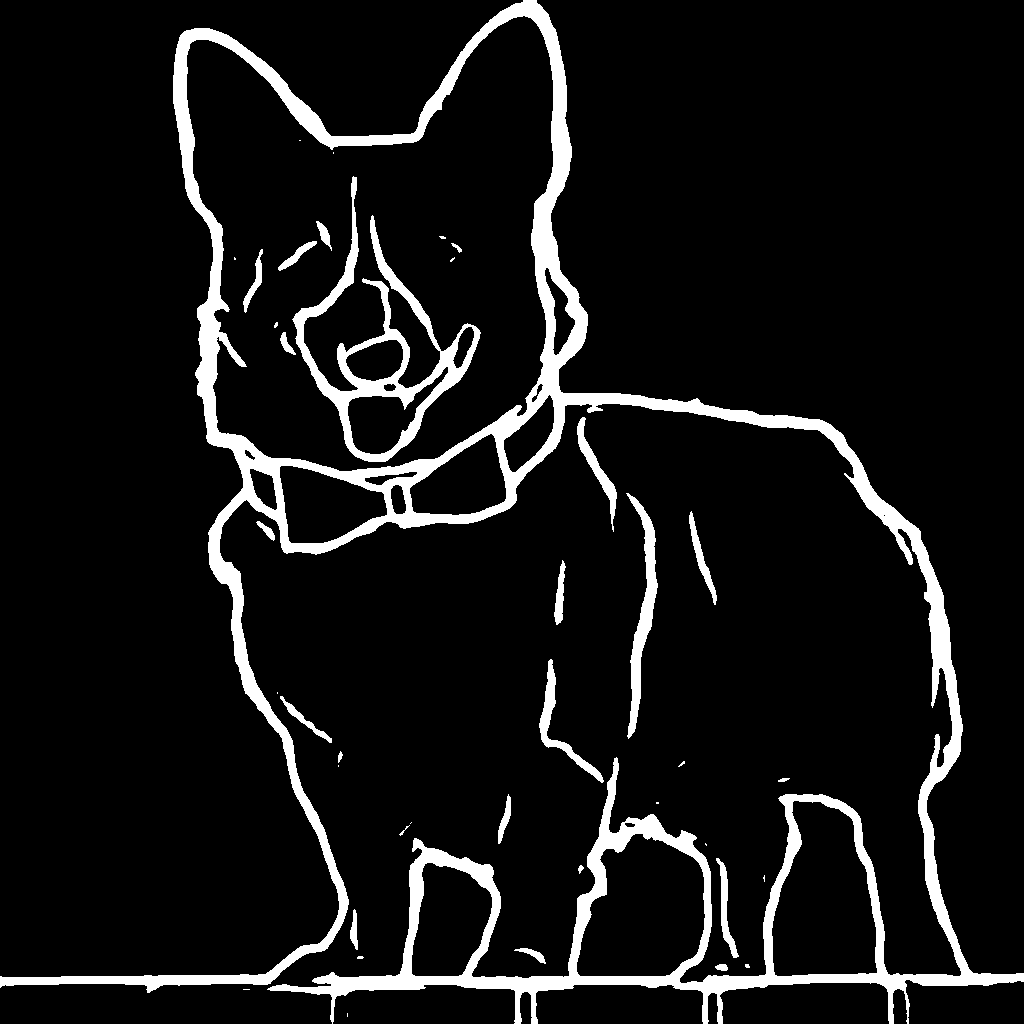

Let's have a look at a simple example using the [Canny Edge ControlNet](https://huggingface.co/lllyasviel/sd-controlnet-canny).

|

||||

|

||||

```python

|

||||

from diffusers import StableDiffusionControlNetPipeline

|

||||

from diffusers.utils import load_image

|

||||

|

||||

# Let's load the popular vermeer image

|

||||

image = load_image(

|

||||

"https://hf.co/datasets/huggingface/documentation-images/resolve/main/diffusers/input_image_vermeer.png"

|

||||

)

|

||||

```

|

||||

|

||||

|

||||

|

||||

Next, we process the image to get the canny image. This is step *1.* - running the pre-conditioning processor. The pre-conditioning processor is different for every ControlNet. Please see the model cards of the [official checkpoints](#controlnet-with-stable-diffusion-1.5) for more information about other models.

|

||||

|

||||

First, we need to install opencv:

|

||||

|

||||

```

|

||||

pip install opencv-contrib-python

|

||||

```

|

||||

|

||||

Next, let's also install all required Hugging Face libraries:

|

||||

|

||||

```

|

||||

pip install diffusers transformers git+https://github.com/huggingface/accelerate.git

|

||||

```

|

||||

|

||||

Then we can retrieve the canny edges of the image.

|

||||

|

||||

```python

|

||||

import cv2

|

||||

from PIL import Image

|

||||

import numpy as np

|

||||

|

||||

image = np.array(image)

|

||||

|

||||

low_threshold = 100

|

||||

high_threshold = 200

|

||||

|

||||

image = cv2.Canny(image, low_threshold, high_threshold)

|

||||

image = image[:, :, None]

|

||||

image = np.concatenate([image, image, image], axis=2)

|

||||

canny_image = Image.fromarray(image)

|

||||

```

|

||||

|

||||

Let's take a look at the processed image.

|

||||

|

||||

|

||||

|

||||

Now, we load the official [Stable Diffusion 1.5 model](https://huggingface.co/runwayml/stable-diffusion-v1-5) as well as the ControlNet for canny edges.

|

||||

|

||||

```py
from diffusers import StableDiffusionControlNetPipeline, ControlNetModel
import torch

controlnet = ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-canny", torch_dtype=torch.float16)
pipe = StableDiffusionControlNetPipeline.from_pretrained(
    "runwayml/stable-diffusion-v1-5", controlnet=controlnet, torch_dtype=torch.float16
)
```

|

||||

|

||||

To speed things up and reduce memory usage, let's enable model offloading and use the fast [`UniPCMultistepScheduler`].

|

||||

|

||||

```py
from diffusers import UniPCMultistepScheduler

pipe.scheduler = UniPCMultistepScheduler.from_config(pipe.scheduler.config)

# this command loads the individual model components on GPU on-demand.
pipe.enable_model_cpu_offload()
```

|

||||

|

||||

Finally, we can run the pipeline:

|

||||

|

||||

```py
generator = torch.manual_seed(0)

out_image = pipe(
    "disco dancer with colorful lights", num_inference_steps=20, generator=generator, image=canny_image
).images[0]
```

|

||||

|

||||

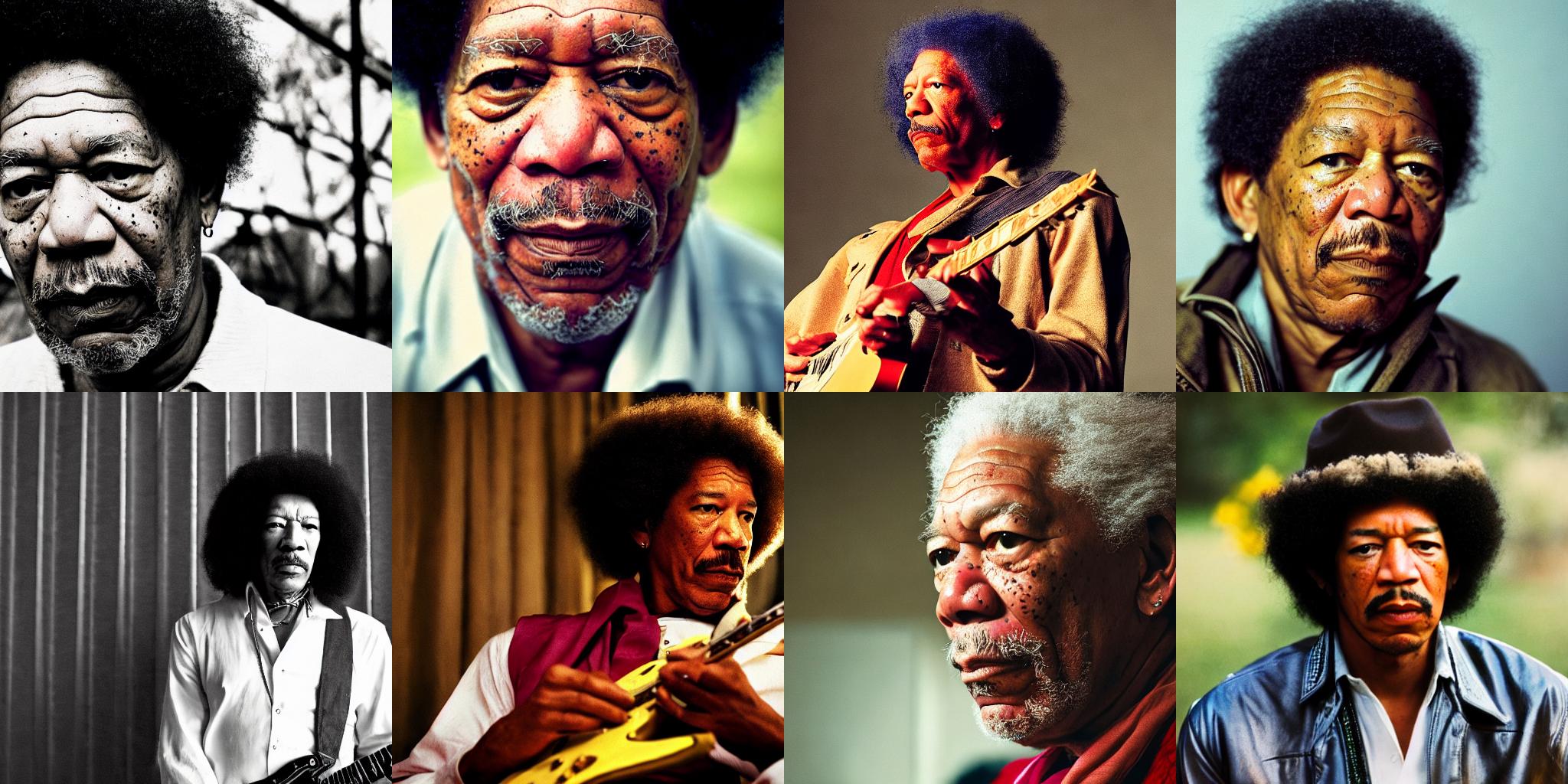

This should take only around 3-4 seconds on GPU (depending on hardware). The output image then looks as follows:

|

||||

|

||||

|

||||

|

||||

|

||||

**Note**: To see how to run all other ControlNet checkpoints, please have a look at [ControlNet with Stable Diffusion 1.5](#controlnet-with-stable-diffusion-1.5).

|

||||

|

||||

<!-- TODO: add space -->

|

||||

|

||||

## Combining multiple conditionings

|

||||

|

||||

Multiple ControlNet conditionings can be combined for a single image generation. Pass a list of ControlNets to the pipeline's constructor and a corresponding list of conditionings to `__call__`.

|

||||

|

||||

When combining conditionings, it is helpful to mask conditionings such that they do not overlap. In the example, we mask the middle of the canny map where the pose conditioning is located.

|

||||

|

||||

It can also be helpful to vary the `controlnet_conditioning_scale` values to emphasize one conditioning over the other.
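
As a rough illustration of how per-ControlNet scales line up with the list of ControlNets, the sketch below broadcasts a single number to every ControlNet and checks that an explicit list has one entry per ControlNet. `normalize_conditioning_scales` is a hypothetical helper written for this example; it is not part of the diffusers API, and the pipeline's own validation may differ.

```python
from typing import List, Sequence, Union


def normalize_conditioning_scales(
    num_controlnets: int, scale: Union[float, Sequence[float]] = 1.0
) -> List[float]:
    """Return one conditioning scale per ControlNet (hypothetical helper)."""
    if isinstance(scale, (int, float)):
        # A single number applies equally to every ControlNet.
        return [float(scale)] * num_controlnets
    scales = [float(s) for s in scale]
    if len(scales) != num_controlnets:
        raise ValueError(f"Expected {num_controlnets} scales, got {len(scales)}.")
    return scales


# Two ControlNets (for example openpose and canny), the second weighted slightly lower.
print(normalize_conditioning_scales(2, [1.0, 0.8]))
# A single value is shared by both ControlNets.
print(normalize_conditioning_scales(2, 0.5))
```

Keeping the scales in the same order as the ControlNets and the conditioning images avoids accidentally weighting the wrong conditioning.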

|

||||

|

||||

### Canny conditioning

|

||||

|

||||

The original image:

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/landscape.png"/>

|

||||

|

||||

Prepare the conditioning:

|

||||

|

||||

```python
from diffusers.utils import load_image
from PIL import Image
import cv2
import numpy as np

canny_image = load_image(
    "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/landscape.png"
)
canny_image = np.array(canny_image)

low_threshold = 100
high_threshold = 200

canny_image = cv2.Canny(canny_image, low_threshold, high_threshold)

# zero out middle columns of image where pose will be overlaid
zero_start = canny_image.shape[1] // 4
zero_end = zero_start + canny_image.shape[1] // 2
canny_image[:, zero_start:zero_end] = 0

canny_image = canny_image[:, :, None]
canny_image = np.concatenate([canny_image, canny_image, canny_image], axis=2)
canny_image = Image.fromarray(canny_image)
```

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/blog/controlnet/landscape_canny_masked.png"/>
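
To make the masking arithmetic concrete, here is a small sketch of the column range that the code above zeroes out. `middle_band` is a hypothetical helper introduced only for illustration, and the 512-pixel width is an assumed example value rather than the actual width of the landscape image.

```python
from typing import Tuple


def middle_band(width: int) -> Tuple[int, int]:
    """Return the (start, end) column range covering the middle half of an image."""
    start = width // 4        # skip the left quarter
    end = start + width // 2  # cover the next half of the width
    return start, end


# Assumed example: a 512-pixel-wide canny map.
start, end = middle_band(512)
print(start, end)  # 128 384, so the slice [128:384] clears columns 128 through 383
```

The left and right quarters of the canny map stay intact, which leaves the center free for the pose conditioning.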

|

||||

|

||||

### Openpose conditioning

|

||||

|

||||

The original image:

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/person.png" width=600/>

|

||||

|

||||

Prepare the conditioning:

|

||||

|

||||

```python
from controlnet_aux import OpenposeDetector
from diffusers.utils import load_image

openpose = OpenposeDetector.from_pretrained("lllyasviel/ControlNet")

openpose_image = load_image(
    "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/person.png"
)
openpose_image = openpose(openpose_image)
```

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/blog/controlnet/person_pose.png" width=600/>

|

||||

|

||||

### Running ControlNet with multiple conditionings

|

||||

|

||||

```python
from diffusers import StableDiffusionControlNetPipeline, ControlNetModel, UniPCMultistepScheduler
import torch

controlnet = [
    ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-openpose", torch_dtype=torch.float16),
    ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-canny", torch_dtype=torch.float16),
]

pipe = StableDiffusionControlNetPipeline.from_pretrained(
    "runwayml/stable-diffusion-v1-5", controlnet=controlnet, torch_dtype=torch.float16
)
pipe.scheduler = UniPCMultistepScheduler.from_config(pipe.scheduler.config)

pipe.enable_xformers_memory_efficient_attention()
pipe.enable_model_cpu_offload()

prompt = "a giant standing in a fantasy landscape, best quality"
negative_prompt = "monochrome, lowres, bad anatomy, worst quality, low quality"

generator = torch.Generator(device="cpu").manual_seed(1)

images = [openpose_image, canny_image]

image = pipe(
    prompt,
    images,
    num_inference_steps=20,
    generator=generator,
    negative_prompt=negative_prompt,
    controlnet_conditioning_scale=[1.0, 0.8],
).images[0]

image.save("./multi_controlnet_output.png")
```

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/blog/controlnet/multi_controlnet_output.png" width=600/>

|

||||

|

||||

### Guess Mode

|

||||

|

||||

Guess Mode is [a ControlNet feature that was implemented](https://github.com/lllyasviel/ControlNet#guess-mode--non-prompt-mode) after the publication of [the paper](https://arxiv.org/abs/2302.05543). The description states:

|

||||

|

||||

>In this mode, the ControlNet encoder will try best to recognize the content of the input control map, like depth map, edge map, scribbles, etc, even if you remove all prompts.

|

||||

|

||||

#### The core implementation:

|

||||

|

||||

It adjusts the scale of the output residuals from ControlNet by a fixed ratio depending on the block depth. The shallowest DownBlock corresponds to `0.1`. As the blocks get deeper, the scale increases exponentially, and the scale for the output of the MidBlock becomes `1.0`.

|

||||

|

||||

Since the core implementation is just this, **it does not have any impact on prompt conditioning**. While it is common to use it without specifying any prompts, it is also possible to provide prompts if desired.
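
The scaling described above can be sketched as a logarithmically spaced ramp from `0.1` to `1.0`. This is a minimal illustration in plain Python, not the library's implementation; `guess_mode_scales` is a hypothetical helper, and the count of 12 down-block residuals is an assumption about a Stable Diffusion 1.5 style UNet.

```python
from typing import List


def guess_mode_scales(num_down_residuals: int) -> List[float]:
    """Log-spaced scales: 0.1 for the shallowest down block, 1.0 for the mid block."""
    n = num_down_residuals
    # Exponents run linearly from -1 to 0, so the scales grow exponentially.
    return [10 ** (-1 + i / n) for i in range(n + 1)]


# Assumption: 12 down-block residuals plus one mid-block residual.
scales = guess_mode_scales(12)
down_scales, mid_scale = scales[:-1], scales[-1]
print([round(s, 3) for s in scales])
```

Each ControlNet residual would be multiplied by its scale before being added to the UNet, so shallow blocks contribute far less than the mid block. The prompt embeddings are not touched at any point.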

|

||||

|

||||

#### Usage:

|

||||

|

||||

Just specify `guess_mode=True` in the pipe() function. A `guidance_scale` between 3.0 and 5.0 is [recommended](https://github.com/lllyasviel/ControlNet#guess-mode--non-prompt-mode).

|

||||

```py
from diffusers import StableDiffusionControlNetPipeline, ControlNetModel
import torch

controlnet = ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-canny")
pipe = StableDiffusionControlNetPipeline.from_pretrained("runwayml/stable-diffusion-v1-5", controlnet=controlnet).to(
    "cuda"
)
image = pipe("", image=canny_image, guess_mode=True, guidance_scale=3.0).images[0]
image.save("guess_mode_generated.png")
```

|

||||

|

||||

#### Output image comparison:

|

||||

Canny Control Example

|

||||

|

||||

|no guess_mode with prompt|guess_mode without prompt|
|---|---|
|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare_guess_mode/output_images/diffusers/output_bird_canny_0.png"><img width="128" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare_guess_mode/output_images/diffusers/output_bird_canny_0.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare_guess_mode/output_images/diffusers/output_bird_canny_0_gm.png"><img width="128" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare_guess_mode/output_images/diffusers/output_bird_canny_0_gm.png"/></a>|

|

||||

|

||||

|

||||

## Available checkpoints

|

||||

|

||||

ControlNet requires a *control image* in addition to the text-to-image *prompt*.

|

||||

Each pretrained model is trained using a different conditioning method that requires different images for conditioning the generated outputs. For example, Canny edge conditioning requires the control image to be the output of a Canny filter, while depth conditioning requires the control image to be a depth map. See the overview and image examples below to know more.

|

||||

|

||||

All checkpoints can be found under the authors' namespace [lllyasviel](https://huggingface.co/lllyasviel).
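
As a quick reference, the pairing between a checkpoint and the kind of control image it expects can be kept in a small lookup table. The sketch below is a convenience written for this page, with descriptions paraphrased from the ControlNet v1.0 table; `CONTROL_IMAGE_KINDS` and `expected_control_image` are hypothetical names, not part of diffusers.

```python
# Checkpoint name -> kind of control image it expects (paraphrased from the v1.0 table).
CONTROL_IMAGE_KINDS = {
    "lllyasviel/sd-controlnet-canny": "monochrome image with white edges on a black background",
    "lllyasviel/sd-controlnet-depth": "grayscale depth map",
    "lllyasviel/sd-controlnet-hed": "monochrome image with white soft edges on a black background",
    "lllyasviel/sd-controlnet-mlsd": "white straight lines on a black background",
    "lllyasviel/sd-controlnet-normal": "normal map",
    "lllyasviel/sd-controlnet-openpose": "OpenPose bone image",
    "lllyasviel/sd-controlnet-scribble": "hand-drawn white outlines on a black background",
    "lllyasviel/sd-controlnet-seg": "ADE20K-style segmentation map",
}


def expected_control_image(checkpoint: str) -> str:
    """Look up the control image kind for a checkpoint (hypothetical helper)."""
    try:
        return CONTROL_IMAGE_KINDS[checkpoint]
    except KeyError:
        raise KeyError(f"No control image description recorded for {checkpoint!r}") from None


print(expected_control_image("lllyasviel/sd-controlnet-canny"))
```

A check like this before calling the pipeline can catch a mismatch, such as passing a depth map to the canny checkpoint, before any inference time is spent.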

|

||||

|

||||

**13.04.2023 Update**: The author has released improved ControlNet v1.1 checkpoints; see [here](#controlnet-v1.1).

|

||||

|

||||

### ControlNet v1.0

|

||||

|

||||

| Model Name | Control Image Overview| Control Image Example | Generated Image Example |
|---|---|---|---|
|[lllyasviel/sd-controlnet-canny](https://huggingface.co/lllyasviel/sd-controlnet-canny)<br/> *Trained with canny edge detection* | A monochrome image with white edges on a black background.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_bird_canny.png"><img width="64" style="margin:0;padding:0;" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_bird_canny.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_bird_canny_1.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_bird_canny_1.png"/></a>|
|[lllyasviel/sd-controlnet-depth](https://huggingface.co/lllyasviel/sd-controlnet-depth)<br/> *Trained with Midas depth estimation* |A grayscale image with black representing deep areas and white representing shallow areas.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_vermeer_depth.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_vermeer_depth.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_vermeer_depth_2.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_vermeer_depth_2.png"/></a>|
|[lllyasviel/sd-controlnet-hed](https://huggingface.co/lllyasviel/sd-controlnet-hed)<br/> *Trained with HED edge detection (soft edge)* |A monochrome image with white soft edges on a black background.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_bird_hed.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_bird_hed.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_bird_hed_1.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_bird_hed_1.png"/></a> |
|[lllyasviel/sd-controlnet-mlsd](https://huggingface.co/lllyasviel/sd-controlnet-mlsd)<br/> *Trained with M-LSD line detection* |A monochrome image composed only of white straight lines on a black background.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_room_mlsd.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_room_mlsd.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_room_mlsd_0.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_room_mlsd_0.png"/></a>|
|[lllyasviel/sd-controlnet-normal](https://huggingface.co/lllyasviel/sd-controlnet-normal)<br/> *Trained with normal map* |A [normal mapped](https://en.wikipedia.org/wiki/Normal_mapping) image.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_human_normal.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_human_normal.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_human_normal_1.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_human_normal_1.png"/></a>|
|[lllyasviel/sd-controlnet-openpose](https://huggingface.co/lllyasviel/sd-controlnet-openpose)<br/> *Trained with OpenPose bone image* |An [OpenPose bone](https://github.com/CMU-Perceptual-Computing-Lab/openpose) image.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_human_openpose.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_human_openpose.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_human_openpose_0.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_human_openpose_0.png"/></a>|
|[lllyasviel/sd-controlnet-scribble](https://huggingface.co/lllyasviel/sd-controlnet-scribble)<br/> *Trained with human scribbles* |A hand-drawn monochrome image with white outlines on a black background.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_vermeer_scribble.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_vermeer_scribble.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_vermeer_scribble_0.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_vermeer_scribble_0.png"/></a> |
|[lllyasviel/sd-controlnet-seg](https://huggingface.co/lllyasviel/sd-controlnet-seg)<br/>*Trained with semantic segmentation* |An [ADE20K](https://groups.csail.mit.edu/vision/datasets/ADE20K/)'s segmentation protocol image.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_room_seg.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_room_seg.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_room_seg_1.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_room_seg_1.png"/></a> |

|

||||

|

||||

### ControlNet v1.1

|

||||

|

||||

| Model Name | Control Image Overview| Condition Image | Control Image Example | Generated Image Example |
|---|---|---|---|---|
|[lllyasviel/control_v11p_sd15_canny](https://huggingface.co/lllyasviel/control_v11p_sd15_canny)<br/> | *Trained with canny edge detection* | A monochrome image with white edges on a black background.|<a href="https://huggingface.co/lllyasviel/control_v11p_sd15_canny/resolve/main/images/control.png"><img width="64" style="margin:0;padding:0;" src="https://huggingface.co/lllyasviel/control_v11p_sd15_canny/resolve/main/images/control.png"/></a>|<a href="https://huggingface.co/lllyasviel/control_v11p_sd15_canny/resolve/main/images/image_out.png"><img width="64" src="https://huggingface.co/lllyasviel/control_v11p_sd15_canny/resolve/main/images/image_out.png"/></a>|
|[lllyasviel/control_v11e_sd15_ip2p](https://huggingface.co/lllyasviel/control_v11e_sd15_ip2p)<br/> | *Trained with pixel to pixel instruction* | No condition.|<a href="https://huggingface.co/lllyasviel/control_v11e_sd15_ip2p/resolve/main/images/control.png"><img width="64" style="margin:0;padding:0;" src="https://huggingface.co/lllyasviel/control_v11e_sd15_ip2p/resolve/main/images/control.png"/></a>|<a href="https://huggingface.co/lllyasviel/control_v11e_sd15_ip2p/resolve/main/images/image_out.png"><img width="64" src="https://huggingface.co/lllyasviel/control_v11e_sd15_ip2p/resolve/main/images/image_out.png"/></a>|
|[lllyasviel/control_v11p_sd15_inpaint](https://huggingface.co/lllyasviel/control_v11p_sd15_inpaint)<br/> | Trained with image inpainting | No condition.|<a href="https://huggingface.co/lllyasviel/control_v11p_sd15_inpaint/resolve/main/images/control.png"><img width="64" style="margin:0;padding:0;" src="https://huggingface.co/lllyasviel/control_v11p_sd15_inpaint/resolve/main/images/control.png"/></a>|<a href="https://huggingface.co/lllyasviel/control_v11p_sd15_inpaint/resolve/main/images/output.png"><img width="64" src="https://huggingface.co/lllyasviel/control_v11p_sd15_inpaint/resolve/main/images/output.png"/></a>|
|[lllyasviel/control_v11p_sd15_mlsd](https://huggingface.co/lllyasviel/control_v11p_sd15_mlsd)<br/> | Trained with multi-level line segment detection | An image with annotated line segments.|<a href="https://huggingface.co/lllyasviel/control_v11p_sd15_mlsd/resolve/main/images/control.png"><img width="64" style="margin:0;padding:0;" src="https://huggingface.co/lllyasviel/control_v11p_sd15_mlsd/resolve/main/images/control.png"/></a>|<a href="https://huggingface.co/lllyasviel/control_v11p_sd15_mlsd/resolve/main/images/image_out.png"><img width="64" src="https://huggingface.co/lllyasviel/control_v11p_sd15_mlsd/resolve/main/images/image_out.png"/></a>|
|[lllyasviel/control_v11f1p_sd15_depth](https://huggingface.co/lllyasviel/control_v11f1p_sd15_depth)<br/> | Trained with depth estimation | An image with depth information, usually represented as a grayscale image.|<a href="https://huggingface.co/lllyasviel/control_v11f1p_sd15_depth/resolve/main/images/control.png"><img width="64" style="margin:0;padding:0;" src="https://huggingface.co/lllyasviel/control_v11f1p_sd15_depth/resolve/main/images/control.png"/></a>|<a href="https://huggingface.co/lllyasviel/control_v11f1p_sd15_depth/resolve/main/images/image_out.png"><img width="64" src="https://huggingface.co/lllyasviel/control_v11f1p_sd15_depth/resolve/main/images/image_out.png"/></a>|
|[lllyasviel/control_v11p_sd15_normalbae](https://huggingface.co/lllyasviel/control_v11p_sd15_normalbae)<br/> | Trained with surface normal estimation | An image with surface normal information, usually represented as a color-coded image.|<a href="https://huggingface.co/lllyasviel/control_v11p_sd15_normalbae/resolve/main/images/control.png"><img width="64" style="margin:0;padding:0;" src="https://huggingface.co/lllyasviel/control_v11p_sd15_normalbae/resolve/main/images/control.png"/></a>|<a href="https://huggingface.co/lllyasviel/control_v11p_sd15_normalbae/resolve/main/images/image_out.png"><img width="64" src="https://huggingface.co/lllyasviel/control_v11p_sd15_normalbae/resolve/main/images/image_out.png"/></a>|
|[lllyasviel/control_v11p_sd15_seg](https://huggingface.co/lllyasviel/control_v11p_sd15_seg)<br/> | Trained with image segmentation | An image with segmented regions, usually represented as a color-coded image.|<a href="https://huggingface.co/lllyasviel/control_v11p_sd15_seg/resolve/main/images/control.png"><img width="64" style="margin:0;padding:0;" src="https://huggingface.co/lllyasviel/control_v11p_sd15_seg/resolve/main/images/control.png"/></a>|<a href="https://huggingface.co/lllyasviel/control_v11p_sd15_seg/resolve/main/images/image_out.png"><img width="64" src="https://huggingface.co/lllyasviel/control_v11p_sd15_seg/resolve/main/images/image_out.png"/></a>|
|[lllyasviel/control_v11p_sd15_lineart](https://huggingface.co/lllyasviel/control_v11p_sd15_lineart)<br/> | Trained with line art generation | An image with line art, usually black lines on a white background.|<a href="https://huggingface.co/lllyasviel/control_v11p_sd15_lineart/resolve/main/images/control.png"><img width="64" style="margin:0;padding:0;" src="https://huggingface.co/lllyasviel/control_v11p_sd15_lineart/resolve/main/images/control.png"/></a>|<a href="https://huggingface.co/lllyasviel/control_v11p_sd15_lineart/resolve/main/images/image_out.png"><img width="64" src="https://huggingface.co/lllyasviel/control_v11p_sd15_lineart/resolve/main/images/image_out.png"/></a>|
|[lllyasviel/control_v11p_sd15s2_lineart_anime](https://huggingface.co/lllyasviel/control_v11p_sd15s2_lineart_anime)<br/> | Trained with anime line art generation | An image with anime-style line art.|<a href="https://huggingface.co/lllyasviel/control_v11p_sd15s2_lineart_anime/resolve/main/images/control.png"><img width="64" style="margin:0;padding:0;" src="https://huggingface.co/lllyasviel/control_v11p_sd15s2_lineart_anime/resolve/main/images/control.png"/></a>|<a href="https://huggingface.co/lllyasviel/control_v11p_sd15s2_lineart_anime/resolve/main/images/image_out.png"><img width="64" src="https://huggingface.co/lllyasviel/control_v11p_sd15s2_lineart_anime/resolve/main/images/image_out.png"/></a>|
|[lllyasviel/control_v11p_sd15_openpose](https://huggingface.co/lllyasviel/control_v11p_sd15_openpose)<br/> | Trained with human pose estimation | An image with human poses, usually represented as a set of keypoints or skeletons.|<a href="https://huggingface.co/lllyasviel/control_v11p_sd15_openpose/resolve/main/images/control.png"><img width="64" style="margin:0;padding:0;" src="https://huggingface.co/lllyasviel/control_v11p_sd15_openpose/resolve/main/images/control.png"/></a>|<a href="https://huggingface.co/lllyasviel/control_v11p_sd15_openpose/resolve/main/images/image_out.png"><img width="64" src="https://huggingface.co/lllyasviel/control_v11p_sd15_openpose/resolve/main/images/image_out.png"/></a>|
|[lllyasviel/control_v11p_sd15_scribble](https://huggingface.co/lllyasviel/control_v11p_sd15_scribble)<br/> | Trained with scribble-based image generation | An image with scribbles, usually random or user-drawn strokes.|<a href="https://huggingface.co/lllyasviel/control_v11p_sd15_scribble/resolve/main/images/control.png"><img width="64" style="margin:0;padding:0;" src="https://huggingface.co/lllyasviel/control_v11p_sd15_scribble/resolve/main/images/control.png"/></a>|<a href="https://huggingface.co/lllyasviel/control_v11p_sd15_scribble/resolve/main/images/image_out.png"><img width="64" src="https://huggingface.co/lllyasviel/control_v11p_sd15_scribble/resolve/main/images/image_out.png"/></a>|
|[lllyasviel/control_v11p_sd15_softedge](https://huggingface.co/lllyasviel/control_v11p_sd15_softedge)<br/> | Trained with soft edge image generation | An image with soft edges, usually to create a more painterly or artistic effect.|<a href="https://huggingface.co/lllyasviel/control_v11p_sd15_softedge/resolve/main/images/control.png"><img width="64" style="margin:0;padding:0;" src="https://huggingface.co/lllyasviel/control_v11p_sd15_softedge/resolve/main/images/control.png"/></a>|<a href="https://huggingface.co/lllyasviel/control_v11p_sd15_softedge/resolve/main/images/image_out.png"><img width="64" src="https://huggingface.co/lllyasviel/control_v11p_sd15_softedge/resolve/main/images/image_out.png"/></a>|
|[lllyasviel/control_v11e_sd15_shuffle](https://huggingface.co/lllyasviel/control_v11e_sd15_shuffle)<br/> | Trained with image shuffling | An image with shuffled patches or regions.|<a href="https://huggingface.co/lllyasviel/control_v11e_sd15_shuffle/resolve/main/images/control.png"><img width="64" style="margin:0;padding:0;" src="https://huggingface.co/lllyasviel/control_v11e_sd15_shuffle/resolve/main/images/control.png"/></a>|<a href="https://huggingface.co/lllyasviel/control_v11e_sd15_shuffle/resolve/main/images/image_out.png"><img width="64" src="https://huggingface.co/lllyasviel/control_v11e_sd15_shuffle/resolve/main/images/image_out.png"/></a>|
|[lllyasviel/control_v11f1e_sd15_tile](https://huggingface.co/lllyasviel/control_v11f1e_sd15_tile)<br/> | Trained with image tiling | A blurry image or part of an image.|<a href="https://huggingface.co/lllyasviel/control_v11f1e_sd15_tile/resolve/main/images/original.png"><img width="64" style="margin:0;padding:0;" src="https://huggingface.co/lllyasviel/control_v11f1e_sd15_tile/resolve/main/images/original.png"/></a>|<a href="https://huggingface.co/lllyasviel/control_v11f1e_sd15_tile/resolve/main/images/output.png"><img width="64" src="https://huggingface.co/lllyasviel/control_v11f1e_sd15_tile/resolve/main/images/output.png"/></a>|

|

||||

|

||||

## StableDiffusionControlNetPipeline

|

||||

[[autodoc]] StableDiffusionControlNetPipeline

|

||||

@@ -66,15 +343,8 @@ Make sure to check out the Schedulers [guide](/using-diffusers/schedulers) to le

|

||||

- disable_xformers_memory_efficient_attention

|

||||

- load_textual_inversion

|

||||

|

||||

## StableDiffusionPipelineOutput

|

||||

|

||||

[[autodoc]] pipelines.stable_diffusion.StableDiffusionPipelineOutput

|

||||

|

||||

## FlaxStableDiffusionControlNetPipeline

|

||||

[[autodoc]] FlaxStableDiffusionControlNetPipeline

|

||||

- all

|

||||

- __call__

|

||||

|

||||

## FlaxStableDiffusionControlNetPipelineOutput

|

||||

|

||||

[[autodoc]] pipelines.stable_diffusion.FlaxStableDiffusionPipelineOutput

|

||||

@@ -1,46 +0,0 @@

|

||||

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

|

||||

http://www.apache.org/licenses/LICENSE-2.0

|

||||

|

||||

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on

|

||||

an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the

|

||||

specific language governing permissions and limitations under the License.

|

||||

-->

|

||||

|

||||

# ControlNet with Stable Diffusion XL

|

||||

|

||||

ControlNet was introduced in [Adding Conditional Control to Text-to-Image Diffusion Models](https://huggingface.co/papers/2302.05543) by Lvmin Zhang and Maneesh Agrawala.

|

||||

|

||||

With a ControlNet model, you can provide an additional control image to condition and control Stable Diffusion generation. For example, if you provide a depth map, the ControlNet model generates an image that'll preserve the spatial information from the depth map. It is a more flexible and accurate way to control the image generation process.

|

||||

|

||||

The abstract from the paper is:

|

||||

|

||||

*We present a neural network structure, ControlNet, to control pretrained large diffusion models to support additional input conditions. The ControlNet learns task-specific conditions in an end-to-end way, and the learning is robust even when the training dataset is small (< 50k). Moreover, training a ControlNet is as fast as fine-tuning a diffusion model, and the model can be trained on a personal devices. Alternatively, if powerful computation clusters are available, the model can scale to large amounts (millions to billions) of data. We report that large diffusion models like Stable Diffusion can be augmented with ControlNets to enable conditional inputs like edge maps, segmentation maps, keypoints, etc. This may enrich the methods to control large diffusion models and further facilitate related applications.*

|

||||

|

||||

You can find additional smaller Stable Diffusion XL (SDXL) ControlNet checkpoints from the 🤗 [Diffusers](https://huggingface.co/diffusers) Hub organization, and browse [community-trained](https://huggingface.co/models?other=stable-diffusion-xl&other=controlnet) checkpoints on the Hub.

|

||||

|

||||

<Tip warning={true}>

|

||||

|

||||

🧪 Many of the SDXL ControlNet checkpoints are experimental, and there is a lot of room for improvement. Feel free to open an [Issue](https://github.com/huggingface/diffusers/issues/new/choose) and leave us feedback on how we can improve!

|

||||

|

||||

</Tip>

|

||||

|

||||

If you don't see a checkpoint you're interested in, you can train your own SDXL ControlNet with our [training script](https://github.com/huggingface/diffusers/blob/main/examples/controlnet/README_sdxl.md).

|

||||

|

||||

<Tip>

|

||||

|

||||

Make sure to check out the Schedulers [guide](/using-diffusers/schedulers) to learn how to explore the tradeoff between scheduler speed and quality, and see the [reuse components across pipelines](/using-diffusers/loading#reuse-components-across-pipelines) section to learn how to efficiently load the same components into multiple pipelines.

|

||||

|

||||

</Tip>

|

||||

|

||||

## StableDiffusionXLControlNetPipeline

|

||||

[[autodoc]] StableDiffusionXLControlNetPipeline

|

||||

- all

|

||||

- __call__

|

||||

|

||||

## StableDiffusionPipelineOutput

|

||||

|

||||

[[autodoc]] pipelines.stable_diffusion.StableDiffusionPipelineOutput

|

||||

@@ -24,32 +24,325 @@ This pipeline was contributed by [clarencechen](https://github.com/clarencechen)

|

||||

|

||||

## Tips

|

||||

|

||||

* The pipeline can generate masks that can be fed into other inpainting pipelines.

|

||||

* In order to generate an image using this pipeline, both an image mask (source and target prompts can be manually specified or generated, and passed to [`~StableDiffusionDiffEditPipeline.generate_mask`])

|

||||

and a set of partially inverted latents (generated using [`~StableDiffusionDiffEditPipeline.invert`]) _must_ be provided as arguments when calling the pipeline to generate the final edited image.

|

||||

* The function [`~StableDiffusionDiffEditPipeline.generate_mask`] exposes two prompt arguments, `source_prompt` and `target_prompt`

|

||||

* The pipeline can generate masks that can be fed into other inpainting pipelines. Check out the code examples below to know more.

|

||||

* In order to generate an image using this pipeline, both an image mask (manually specified or generated using `generate_mask`)

|

||||

and a set of partially inverted latents (generated using `invert`) _must_ be provided as arguments when calling the pipeline to generate the final edited image.

|

||||

Refer to the code examples below for more details.

|

||||

* The function `generate_mask` exposes two prompt arguments, `source_prompt` and `target_prompt`,

|

||||

that let you control the locations of the semantic edits in the final image to be generated. Let's say,

|

||||

you wanted to translate from "cat" to "dog". In this case, the edit direction will be "cat -> dog". To reflect

|

||||

this in the generated mask, you simply have to set the embeddings related to the phrases including "cat" to

|

||||

`source_prompt` and "dog" to `target_prompt`.

|

||||

`source_prompt_embeds` and "dog" to `target_prompt_embeds`. Refer to the code example below for more details.

|

||||

* When generating partially inverted latents using `invert`, assign a caption or text embedding describing the

|

||||

overall image to the `prompt` argument to help guide the inverse latent sampling process. In most cases, the

|

||||

source concept is sufficiently descriptive to yield good results, but feel free to explore alternatives.

|

||||

Please refer to [this code example](#generating-image-captions-for-inversion) for more details.

|

||||

* When calling the pipeline to generate the final edited image, assign the source concept to `negative_prompt`

|

||||

and the target concept to `prompt`. Taking the above example, you simply have to set the embeddings related to

|

||||

the phrases including "cat" to `negative_prompt` and "dog" to `prompt`.

|

||||

the phrases including "cat" to `negative_prompt_embeds` and "dog" to `prompt_embeds`. Refer to the code example

|

||||

below for more details.

|

||||

* If you wanted to reverse the direction in the example above, i.e., "dog -> cat", then it's recommended to:

|

||||

* Swap the `source_prompt` and `target_prompt` in the arguments to `generate_mask`.

|

||||