Mirror of https://github.com/huggingface/diffusers.git (synced 2026-03-09 18:21:48 +08:00)

Compare commits: ci-ssh...typo-in-wo (9 commits)

| Author | SHA1 | Date | |
|---|---|---|---|
| | bf030ad21c | | |
| | e0fae6fd73 | | |
| | ec1aded12e | | |
| | 151a56b80e | | |
| | a3faf3f260 | | |
| | 867a2b0cf9 | | |
| | 98730c5dd7 | | |
| | 7ebd359446 | | |
| | d3881f35b7 | | |

85

.github/workflows/mirror_community_pipeline.yml

vendored

Normal file


@@ -0,0 +1,85 @@

name: Mirror Community Pipeline

on:
  # Push changes on the main branch
  push:
    branches:
      - main
    paths:
      - 'examples/community/**.py'

    # And on tag creation (e.g. `v0.28.1`)
    tags:
      - '*'

  # Manual trigger with ref input
  workflow_dispatch:
    inputs:
      ref:
        description: "Either 'main' or a tag ref"
        required: true
        default: 'main'

jobs:
  mirror_community_pipeline:
    runs-on: ubuntu-latest
    steps:
      # Checkout to correct ref
      #   If workflow dispatch
      #     If ref is 'main', set:
      #       CHECKOUT_REF=refs/heads/main
      #       PATH_IN_REPO=main
      #     Else it must be a tag. Set:
      #       CHECKOUT_REF=refs/tags/{tag}
      #       PATH_IN_REPO={tag}
      #   If not workflow dispatch
      #     If ref is 'refs/heads/main' => set 'main'
      #     Else it must be a tag => set {tag}
      - name: Set checkout_ref and path_in_repo
        run: |
          if [ "${{ github.event_name }}" == "workflow_dispatch" ]; then
            if [ -z "${{ github.event.inputs.ref }}" ]; then
              echo "Error: Missing ref input"
              exit 1
            elif [ "${{ github.event.inputs.ref }}" == "main" ]; then
              echo "CHECKOUT_REF=refs/heads/main" >> $GITHUB_ENV
              echo "PATH_IN_REPO=main" >> $GITHUB_ENV
            else
              echo "CHECKOUT_REF=refs/tags/${{ github.event.inputs.ref }}" >> $GITHUB_ENV
              echo "PATH_IN_REPO=${{ github.event.inputs.ref }}" >> $GITHUB_ENV
            fi
          elif [ "${{ github.ref }}" == "refs/heads/main" ]; then
            echo "CHECKOUT_REF=${{ github.ref }}" >> $GITHUB_ENV
            echo "PATH_IN_REPO=main" >> $GITHUB_ENV
          else
            # e.g. refs/tags/v0.28.1 -> v0.28.1
            # GITHUB_REF is the default environment variable that mirrors github.ref;
            # shell prefix stripping only works on a variable, not on an expanded literal.
            echo "CHECKOUT_REF=${{ github.ref }}" >> $GITHUB_ENV
            echo "PATH_IN_REPO=${GITHUB_REF#refs/tags/}" >> $GITHUB_ENV
          fi
      - uses: actions/checkout@v3
        with:
          ref: ${{ env.CHECKOUT_REF }}

      # Setup + install dependencies
      - name: Set up Python
        uses: actions/setup-python@v4
        with:
          python-version: "3.10"
      - name: Install dependencies
        run: |
          python -m pip install uv
          uv pip install --upgrade huggingface_hub

      # Check secret is set
      - name: whoami
        run: huggingface-cli whoami
        env:
          HF_TOKEN: ${{ secrets.HF_TOKEN_MIRROR_COMMUNITY_PIPELINES }}

      # Push to HF! (under subfolder based on checkout ref)
      # https://huggingface.co/datasets/diffusers/community-pipelines-mirror
      - name: Mirror community pipeline to HF
        run: huggingface-cli upload diffusers/community-pipelines-mirror ./examples/community ${PATH_IN_REPO} --repo-type dataset
        env:
          PATH_IN_REPO: ${{ env.PATH_IN_REPO }}
          HF_TOKEN: ${{ secrets.HF_TOKEN_MIRROR_COMMUNITY_PIPELINES }}
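For readers who find the nested shell conditionals hard to follow, the branching in the `Set checkout_ref and path_in_repo` step can be restated as a small Python function. This is only an illustrative sketch of the intended mapping, not part of the workflow; the function name `resolve_refs` and its parameters are hypothetical.

```python
def resolve_refs(event_name: str, github_ref: str, input_ref: str = "") -> tuple[str, str]:
    """Return (CHECKOUT_REF, PATH_IN_REPO) following the workflow's branching."""
    if event_name == "workflow_dispatch":
        # Manual trigger: the user supplies either 'main' or a tag name.
        if not input_ref:
            raise ValueError("Missing ref input")
        if input_ref == "main":
            return "refs/heads/main", "main"
        return f"refs/tags/{input_ref}", input_ref
    if github_ref == "refs/heads/main":
        # Push to the main branch.
        return github_ref, "main"
    # Tag push, e.g. refs/tags/v0.28.1 -> v0.28.1
    return github_ref, github_ref.removeprefix("refs/tags/")
```

For example, a tag push with `github_ref="refs/tags/v0.28.1"` checks out that tag and uploads into the `v0.28.1` subfolder of the mirror dataset.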

@@ -10,13 +10,17 @@ an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express o

specific language governing permissions and limitations under the License.
-->

# Loading Pipelines and Models via `from_single_file`
# Single files

The `from_single_file` method allows you to load supported pipelines using a single checkpoint file as opposed to Diffusers' multi-folder format. This is useful if you are working with Stable Diffusion web UIs (such as A1111) that rely on a single-file format to distribute all the components of a model.
The [`~loaders.FromSingleFileMixin.from_single_file`] method allows you to load:

The `from_single_file` method also supports loading models in their originally distributed format. This means that supported models that have been finetuned with other services can be loaded directly into Diffusers model objects and pipelines.
* a model stored in a single file, which is useful if you're working with models from the diffusion ecosystem, like Automatic1111, which commonly rely on a single-file layout to store and share models
* a model stored in its originally distributed layout, which is useful if you're working with models finetuned with other services and want to load them directly into Diffusers model objects and pipelines

## Pipelines that currently support `from_single_file` loading
> [!TIP]
> Read the [Model files and layouts](../../using-diffusers/other-formats) guide to learn more about the Diffusers-multifolder layout versus the single-file layout, and how to load models stored in these different layouts.

## Supported pipelines

- [`StableDiffusionPipeline`]
- [`StableDiffusionImg2ImgPipeline`]

@@ -39,218 +43,13 @@ The `from_single_file` method also supports loading models in their originally d

- [`LEditsPPPipelineStableDiffusionXL`]
- [`PIAPipeline`]

## Models that currently support `from_single_file` loading
## Supported models

- [`UNet2DConditionModel`]
- [`StableCascadeUNet`]
- [`AutoencoderKL`]
- [`ControlNetModel`]


## Usage Examples

## Loading a Pipeline using `from_single_file`

```python
from diffusers import StableDiffusionXLPipeline

ckpt_path = "https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0/blob/main/sd_xl_base_1.0_0.9vae.safetensors"
pipe = StableDiffusionXLPipeline.from_single_file(ckpt_path)
```

## Setting components in a Pipeline using `from_single_file`

Set components of a pipeline by passing them directly to the `from_single_file` method. For example, here we are swapping out the pipeline's default scheduler with the `DDIMScheduler`.

```python
from diffusers import StableDiffusionXLPipeline, DDIMScheduler

ckpt_path = "https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0/blob/main/sd_xl_base_1.0_0.9vae.safetensors"

scheduler = DDIMScheduler()
pipe = StableDiffusionXLPipeline.from_single_file(ckpt_path, scheduler=scheduler)
```

Here we are passing in a ControlNet model to the `StableDiffusionControlNetPipeline`.

```python
from diffusers import StableDiffusionControlNetPipeline, ControlNetModel

ckpt_path = "https://huggingface.co/runwayml/stable-diffusion-v1-5/blob/main/v1-5-pruned-emaonly.safetensors"

controlnet = ControlNetModel.from_pretrained("lllyasviel/control_v11p_sd15_canny")
pipe = StableDiffusionControlNetPipeline.from_single_file(ckpt_path, controlnet=controlnet)
```

## Loading a Model using `from_single_file`

```python
from diffusers import StableCascadeUNet

ckpt_path = "https://huggingface.co/stabilityai/stable-cascade/blob/main/stage_b_lite.safetensors"
model = StableCascadeUNet.from_single_file(ckpt_path)
```


## Using a Diffusers model repository to configure single file loading

Under the hood, `from_single_file` will try to automatically determine a model repository to use to configure the components of a pipeline. You can also explicitly set the model repository to configure the pipeline with the `config` argument.

```python
from diffusers import StableDiffusionXLPipeline

ckpt_path = "https://huggingface.co/segmind/SSD-1B/blob/main/SSD-1B.safetensors"
repo_id = "segmind/SSD-1B"

pipe = StableDiffusionXLPipeline.from_single_file(ckpt_path, config=repo_id)
```

In the example above, because `repo_id` (`"segmind/SSD-1B"`) is passed explicitly to the `config` argument, `from_single_file` uses this [configuration file](https://huggingface.co/segmind/SSD-1B/blob/main/unet/config.json) from the `unet` subfolder in `"segmind/SSD-1B"` to configure the `unet` component of the pipeline. Similarly, it uses the `config.json` file from the `vae` subfolder to configure the `vae` model, the `config.json` file from the `text_encoder` subfolder to configure the `text_encoder`, and so on.

<Tip>

Most of the time you do not need to explicitly set a `config` argument. `from_single_file` will automatically map the checkpoint to the appropriate model repository. However, this option can be useful in cases where model components in the checkpoint might have been changed from what was originally distributed, or in cases where a checkpoint file might not have the necessary metadata to correctly determine the configuration to use for the pipeline.

</Tip>
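
To make the per-component lookup concrete, here is a minimal sketch of where those configuration files sit inside a locally downloaded copy of a Diffusers-layout repository. It only builds paths and is not how Diffusers itself resolves configs; the helper name `component_config_paths` and the default component list are assumptions for illustration.

```python
from pathlib import Path


def component_config_paths(repo_root, components=("unet", "vae", "text_encoder")):
    """Map each model component to the config.json inside its subfolder."""
    root = Path(repo_root)
    return {name: root / name / "config.json" for name in components}


# Hypothetical local directory holding a copy of the model repository.
for name, path in component_config_paths("SSD-1B").items():
    print(f"{name}: {path.as_posix()}")
```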


## Override configuration options when using single file loading

Override the default model or pipeline configuration options by providing the relevant arguments directly to the `from_single_file` method. Any argument supported by the model or pipeline class can be configured in this way:

### Setting a pipeline configuration option

```python
from diffusers import StableDiffusionXLInstructPix2PixPipeline

ckpt_path = "https://huggingface.co/stabilityai/cosxl/blob/main/cosxl_edit.safetensors"
pipe = StableDiffusionXLInstructPix2PixPipeline.from_single_file(ckpt_path, config="diffusers/sdxl-instructpix2pix-768", is_cosxl_edit=True)
```

### Setting a model configuration option

```python
from diffusers import UNet2DConditionModel

ckpt_path = "https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0/blob/main/sd_xl_base_1.0_0.9vae.safetensors"
model = UNet2DConditionModel.from_single_file(ckpt_path, upcast_attention=True)
```

<Tip>

To learn more about how to load single file weights, see the [Load different Stable Diffusion formats](../../using-diffusers/other-formats) loading guide.

</Tip>


## Working with local files

As of `diffusers>=0.28.0` the `from_single_file` method will attempt to configure a pipeline or model by first inferring the model type from the keys in the checkpoint file. This inferred model type is then used to determine the appropriate model repository on the Hugging Face Hub to configure the model or pipeline.

For example, any single file checkpoint based on the Stable Diffusion XL base model will use the [`stabilityai/stable-diffusion-xl-base-1.0`](https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0) model repository to configure the pipeline.

If you are working in an environment with restricted internet access, it is recommended that you download the config files and checkpoints for the model to your preferred directory and pass the local paths to the `pretrained_model_link_or_path` and `config` arguments of the `from_single_file` method.

```python
from diffusers import StableDiffusionXLPipeline
from huggingface_hub import hf_hub_download, snapshot_download

my_local_checkpoint_path = hf_hub_download(
    repo_id="segmind/SSD-1B",
    filename="SSD-1B.safetensors"
)

# Download only the config and tokenizer text files, not the weights
my_local_config_path = snapshot_download(
    repo_id="segmind/SSD-1B",
    allow_patterns=["*.json", "**/*.json", "*.txt", "**/*.txt"]
)

pipe = StableDiffusionXLPipeline.from_single_file(my_local_checkpoint_path, config=my_local_config_path, local_files_only=True)
```

By default this will download the checkpoints and config files to the [Hugging Face Hub cache directory](https://huggingface.co/docs/huggingface_hub/en/guides/manage-cache). You can also specify a local directory to download the files to by passing the `local_dir` argument to the `hf_hub_download` and `snapshot_download` functions.

```python
from diffusers import StableDiffusionXLPipeline
from huggingface_hub import hf_hub_download, snapshot_download

my_local_checkpoint_path = hf_hub_download(
    repo_id="segmind/SSD-1B",
    filename="SSD-1B.safetensors",
    local_dir="my_local_checkpoints"
)

# Download only the config and tokenizer text files, not the weights
my_local_config_path = snapshot_download(
    repo_id="segmind/SSD-1B",
    allow_patterns=["*.json", "**/*.json", "*.txt", "**/*.txt"],
    local_dir="my_local_config"
)

pipe = StableDiffusionXLPipeline.from_single_file(my_local_checkpoint_path, config=my_local_config_path, local_files_only=True)
```


## Working with local files on file systems that do not support symlinking

By default the `from_single_file` method relies on the `huggingface_hub` caching mechanism to fetch and store checkpoints and config files for models and pipelines. If you are working with a file system that does not support symlinking, it is recommended that you first download the checkpoint file to a local directory and disable symlinking by passing the `local_dir_use_symlinks=False` argument to the `hf_hub_download` and `snapshot_download` functions.

```python
from huggingface_hub import hf_hub_download, snapshot_download

my_local_checkpoint_path = hf_hub_download(
    repo_id="segmind/SSD-1B",
    filename="SSD-1B.safetensors",
    local_dir="my_local_checkpoints",
    local_dir_use_symlinks=False
)
print("My local checkpoint: ", my_local_checkpoint_path)

# Download only the config and tokenizer text files, not the weights
my_local_config_path = snapshot_download(
    repo_id="segmind/SSD-1B",
    allow_patterns=["*.json", "**/*.json", "*.txt", "**/*.txt"],
    local_dir_use_symlinks=False,
)
print("My local config: ", my_local_config_path)
```

Then pass the local paths to the `pretrained_model_link_or_path` and `config` arguments of the `from_single_file` method.

```python
from diffusers import StableDiffusionXLPipeline

pipe = StableDiffusionXLPipeline.from_single_file(my_local_checkpoint_path, config=my_local_config_path, local_files_only=True)
```

<Tip>

As of `huggingface_hub>=0.23.0` the `local_dir_use_symlinks` argument isn't necessary for the `hf_hub_download` and `snapshot_download` functions.

</Tip>
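
If the same script has to run against both older and newer `huggingface_hub` releases, one option is to add the argument only when the installed version predates 0.23.0. The sketch below is one way to do that under stated assumptions: the helper name `symlink_kwargs` is hypothetical, and the version parsing is deliberately simple (it keeps only the first three numeric components).

```python
import re


def symlink_kwargs(hub_version: str) -> dict:
    """Extra download kwargs, depending on the huggingface_hub version string."""
    # Keep the leading numeric components, e.g. "0.23.0.dev0" -> (0, 23, 0).
    numbers = [int(n) for n in re.findall(r"\d+", hub_version)[:3]]
    # Pad short versions such as "0.23" so tuple comparison behaves as expected.
    version = tuple(numbers + [0] * (3 - len(numbers)))
    if version >= (0, 23, 0):
        return {}
    return {"local_dir_use_symlinks": False}
```

It could then be used as `hf_hub_download(..., **symlink_kwargs(huggingface_hub.__version__))`.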


## Using the original configuration file of a model

If you would like to configure the model components in a pipeline using the original YAML configuration file, you can pass a local path or URL to the original configuration file via the `original_config` argument.

```python
from diffusers import StableDiffusionXLPipeline

ckpt_path = "https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0/blob/main/sd_xl_base_1.0_0.9vae.safetensors"
repo_id = "stabilityai/stable-diffusion-xl-base-1.0"
original_config = "https://raw.githubusercontent.com/Stability-AI/generative-models/main/configs/inference/sd_xl_base.yaml"

pipe = StableDiffusionXLPipeline.from_single_file(ckpt_path, original_config=original_config)
```

<Tip>

When using `original_config` with `local_files_only=True`, Diffusers will attempt to infer the components of the pipeline based on the type signatures of the pipeline class, rather than attempting to fetch the configuration files from a model repository on the Hugging Face Hub. This is to prevent backward-incompatible changes in existing code that might not be able to connect to the internet to fetch the necessary configuration files.

This is not as reliable as providing a path to a local model repository using the `config` argument and might lead to errors when configuring the pipeline. To avoid this, please run the pipeline with `local_files_only=False` once to download the appropriate pipeline configuration files to the local cache.

</Tip>


## FromSingleFileMixin

[[autodoc]] loaders.single_file.FromSingleFileMixin


@@ -16,7 +16,7 @@ aMUSEd was introduced in [aMUSEd: An Open MUSE Reproduction](https://huggingface

Amused is a lightweight text-to-image model based on the [MUSE](https://arxiv.org/abs/2301.00704) architecture. Amused is particularly useful in applications that require a lightweight and fast model, such as generating many images quickly at once.

Amused is a VQ-VAE token-based transformer that can generate an image in fewer forward passes than many diffusion models. In contrast with MUSE, it uses the smaller text encoder CLIP-L/14 instead of T5-XXL. Due to its small parameter count and few-forward-pass generation process, Amused can generate many images quickly. This benefit is seen particularly at larger batch sizes.

The abstract from the paper is:


@@ -165,7 +165,7 @@ from PIL import Image

adapter = MotionAdapter.from_pretrained("guoyww/animatediff-motion-adapter-v1-5-2", torch_dtype=torch.float16)
# load SD 1.5 based finetuned model
model_id = "SG161222/Realistic_Vision_V5.1_noVAE"
pipe = AnimateDiffVideoToVideoPipeline.from_pretrained(model_id, motion_adapter=adapter, torch_dtype=torch.float16).to("cuda")
pipe = AnimateDiffVideoToVideoPipeline.from_pretrained(model_id, motion_adapter=adapter, torch_dtype=torch.float16)
scheduler = DDIMScheduler.from_pretrained(
    model_id,
    subfolder="scheduler",


@@ -28,11 +28,65 @@ HunyuanDiT has the following components:

* It uses a diffusion transformer as the backbone
* It combines two text encoders, a bilingual CLIP and a multilingual T5 encoder

<Tip>

## Memory optimization
Make sure to check out the Schedulers [guide](../../using-diffusers/schedulers.md) to learn how to explore the tradeoff between scheduler speed and quality, and see the [reuse components across pipelines](../../using-diffusers/loading.md#reuse-a-pipeline) section to learn how to efficiently load the same components into multiple pipelines.

</Tip>

## Optimization

You can optimize the pipeline's runtime and memory consumption with `torch.compile` and feed-forward chunking. To learn about other optimization methods, check out the [Speed up inference](../../optimization/fp16) and [Reduce memory usage](../../optimization/memory) guides.

### Inference

Use [`torch.compile`](https://huggingface.co/docs/diffusers/main/en/tutorials/fast_diffusion#torchcompile) to reduce the inference latency.

First, load the pipeline:

```python
from diffusers import HunyuanDiTPipeline
import torch

pipeline = HunyuanDiTPipeline.from_pretrained(
    "Tencent-Hunyuan/HunyuanDiT-Diffusers", torch_dtype=torch.float16
).to("cuda")
```

Then change the memory layout of the pipeline's `transformer` and `vae` components to `torch.channels_last`:

```python
pipeline.transformer.to(memory_format=torch.channels_last)
pipeline.vae.to(memory_format=torch.channels_last)
```

Finally, compile the components and run inference:

```python
pipeline.transformer = torch.compile(pipeline.transformer, mode="max-autotune", fullgraph=True)
pipeline.vae.decode = torch.compile(pipeline.vae.decode, mode="max-autotune", fullgraph=True)

image = pipeline(prompt="一个宇航员在骑马").images[0]
```

The [benchmark](https://gist.github.com/sayakpaul/29d3a14905cfcbf611fe71ebd22e9b23) results on an 80GB A100 machine are:

```bash
With torch.compile(): Average inference time: 12.470 seconds.
Without torch.compile(): Average inference time: 20.570 seconds.
```
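
To put the two timings in relative terms, the short calculation below derives the speedup factor and the latency reduction from the numbers reported above. It uses no data beyond those two figures.

```python
with_compile = 12.470     # seconds, with torch.compile() (from the benchmark above)
without_compile = 20.570  # seconds, without torch.compile()

speedup = without_compile / with_compile
reduction = 1 - with_compile / without_compile

print(f"speedup: {speedup:.2f}x, latency reduction: {reduction:.1%}")
# speedup: 1.65x, latency reduction: 39.4%
```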


### Memory optimization

By loading the T5 text encoder in 8 bits, you can run the pipeline in just under 6 GB of GPU VRAM. Refer to [this script](https://gist.github.com/sayakpaul/3154605f6af05b98a41081aaba5ca43e) for details.

Furthermore, you can use the [`~HunyuanDiT2DModel.enable_forward_chunking`] method to reduce memory usage. Feed-forward chunking runs the feed-forward layers in a transformer block in a loop instead of all at once. This gives you a trade-off between memory consumption and inference runtime.

```diff
+ pipeline.transformer.enable_forward_chunking(chunk_size=1, dim=1)
```
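
The idea behind feed-forward chunking can be illustrated with a toy example in plain Python. This is a conceptual sketch, not the Diffusers implementation: `feed_forward` is a made-up stand-in for a position-wise layer, and nested lists stand in for tensors. Because such a layer treats every token independently, processing the sequence in slices gives the same output while only one slice's intermediate activations need to be held at a time.

```python
def feed_forward(rows):
    """Stand-in for a position-wise feed-forward layer: each row is handled independently."""
    return [[2.0 * x + 1.0 for x in row] for row in rows]


def chunked_feed_forward(ff, rows, chunk_size):
    """Apply `ff` to `chunk_size` rows at a time and concatenate the results."""
    out = []
    for start in range(0, len(rows), chunk_size):
        out.extend(ff(rows[start:start + chunk_size]))
    return out


tokens = [[0.0, 1.0], [2.0, 3.0], [4.0, 5.0]]  # 3 tokens, hidden size 2
chunked = chunked_feed_forward(feed_forward, tokens, chunk_size=1)
full = feed_forward(tokens)
print(chunked == full)
```

Smaller chunks lower peak memory but add loop overhead, which is the memory/runtime trade-off described above.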


## HunyuanDiTPipeline

[[autodoc]] HunyuanDiTPipeline


@@ -11,12 +11,12 @@ specific language governing permissions and limitations under the License.

Kandinsky 3 is created by [Vladimir Arkhipkin](https://github.com/oriBetelgeuse), [Anastasia Maltseva](https://github.com/NastyaMittseva), [Igor Pavlov](https://github.com/boomb0om), [Andrei Filatov](https://github.com/anvilarth), [Arseniy Shakhmatov](https://github.com/cene555), [Andrey Kuznetsov](https://github.com/kuznetsoffandrey), [Denis Dimitrov](https://github.com/denndimitrov), [Zein Shaheen](https://github.com/zeinsh)

The description from its GitHub page:

*Kandinsky 3.0 is an open-source text-to-image diffusion model built upon the Kandinsky2-x model family. In comparison to its predecessors, enhancements have been made to the text understanding and visual quality of the model, achieved by increasing the size of the text encoder and Diffusion U-Net models, respectively.*

Its architecture includes 3 main components:
1. [FLAN-UL2](https://huggingface.co/google/flan-ul2), which is an encoder decoder model based on the T5 architecture.
2. New U-Net architecture featuring BigGAN-deep blocks doubles depth while maintaining the same number of parameters.
3. Sber-MoVQGAN is a decoder proven to have superior results in image restoration.


@@ -25,11 +25,11 @@ You can find additional information about LEDITS++ on the [project page](https:/

</Tip>

<Tip warning={true}>
Due to some backward compatibility issues with the current diffusers implementation of [`~schedulers.DPMSolverMultistepScheduler`], this implementation of LEdits++ can no longer guarantee perfect inversion.
This issue is unlikely to have any noticeable effects on applied use-cases. However, we provide an alternative implementation that guarantees perfect inversion in a dedicated [GitHub repo](https://github.com/ml-research/ledits_pp).
</Tip>

We provide two distinct pipelines based on different pre-trained models.

## LEditsPPPipelineStableDiffusion
[[autodoc]] pipelines.ledits_pp.LEditsPPPipelineStableDiffusion


@@ -14,10 +14,10 @@ specific language governing permissions and limitations under the License.

|

||||

|

||||

|

||||

|

||||

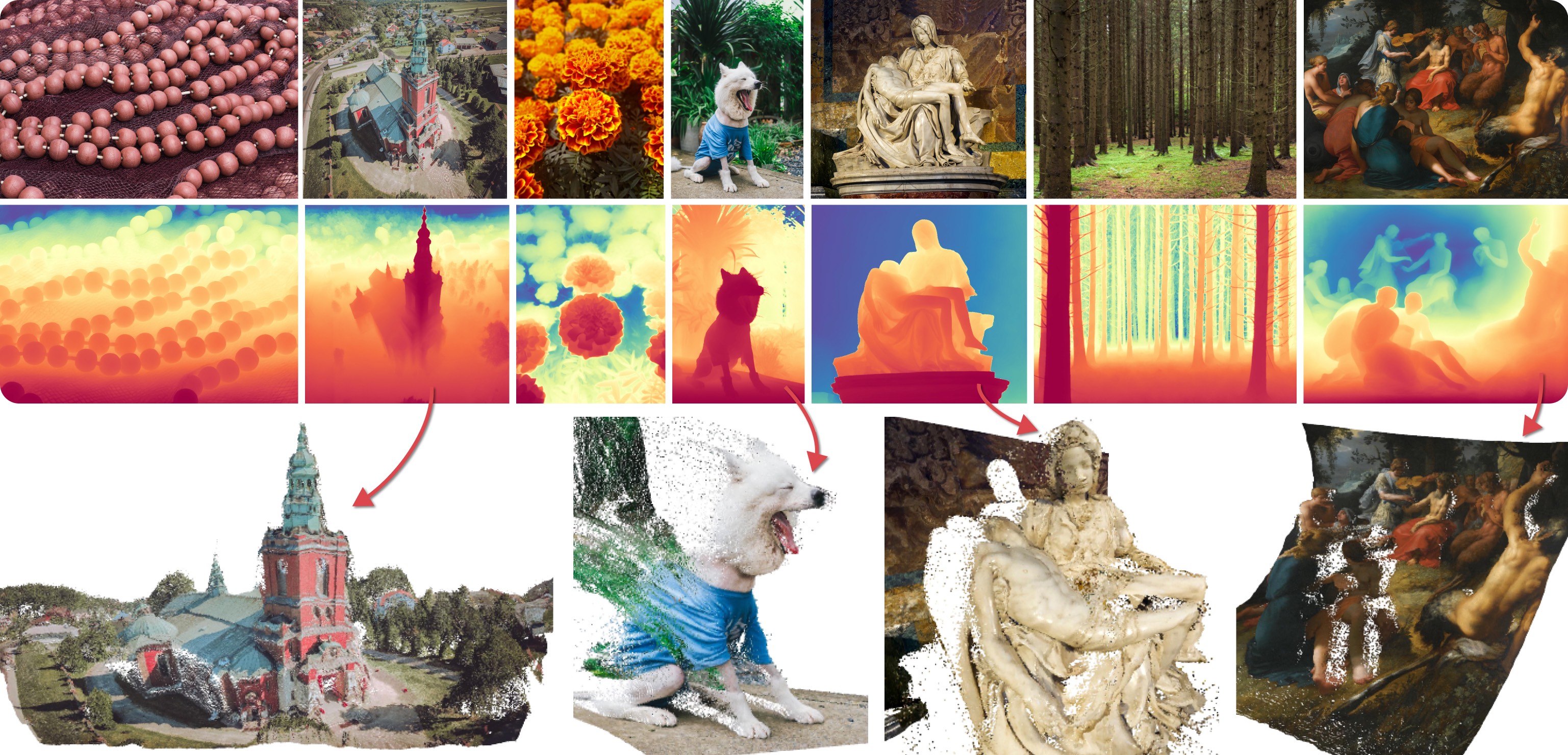

Marigold was proposed in [Repurposing Diffusion-Based Image Generators for Monocular Depth Estimation](https://huggingface.co/papers/2312.02145), a CVPR 2024 Oral paper by [Bingxin Ke](http://www.kebingxin.com/), [Anton Obukhov](https://www.obukhov.ai/), [Shengyu Huang](https://shengyuh.github.io/), [Nando Metzger](https://nandometzger.github.io/), [Rodrigo Caye Daudt](https://rcdaudt.github.io/), and [Konrad Schindler](https://scholar.google.com/citations?user=FZuNgqIAAAAJ&hl=en).

|

||||

The idea is to repurpose the rich generative prior of Text-to-Image Latent Diffusion Models (LDMs) for traditional computer vision tasks.

|

||||

Initially, this idea was explored to fine-tune Stable Diffusion for Monocular Depth Estimation, as shown in the teaser above.

|

||||

Later,

|

||||

Marigold was proposed in [Repurposing Diffusion-Based Image Generators for Monocular Depth Estimation](https://huggingface.co/papers/2312.02145), a CVPR 2024 Oral paper by [Bingxin Ke](http://www.kebingxin.com/), [Anton Obukhov](https://www.obukhov.ai/), [Shengyu Huang](https://shengyuh.github.io/), [Nando Metzger](https://nandometzger.github.io/), [Rodrigo Caye Daudt](https://rcdaudt.github.io/), and [Konrad Schindler](https://scholar.google.com/citations?user=FZuNgqIAAAAJ&hl=en).

|

||||

The idea is to repurpose the rich generative prior of Text-to-Image Latent Diffusion Models (LDMs) for traditional computer vision tasks.

|

||||

Initially, this idea was explored to fine-tune Stable Diffusion for Monocular Depth Estimation, as shown in the teaser above.

|

||||

Later,

|

||||

- [Tianfu Wang](https://tianfwang.github.io/) trained the first Latent Consistency Model (LCM) of Marigold, which unlocked fast single-step inference;

|

||||

- [Kevin Qu](https://www.linkedin.com/in/kevin-qu-b3417621b/?locale=en_US) extended the approach to Surface Normals Estimation;

|

||||

- [Anton Obukhov](https://www.obukhov.ai/) contributed the pipelines and documentation into diffusers (enabled and supported by [YiYi Xu](https://yiyixuxu.github.io/) and [Sayak Paul](https://sayak.dev/)).

|

||||

@@ -28,7 +28,7 @@ The abstract from the paper is:

## Available Pipelines

Each pipeline supports one Computer Vision task, which takes an RGB image as input and produces a *prediction* of the modality of interest, such as a depth map of the input image.
Currently, the following tasks are implemented:

| Pipeline | Predicted Modalities | Demos |

@@ -39,7 +39,7 @@ Currently, the following tasks are implemented:

## Available Checkpoints

The original checkpoints can be found under the [PRS-ETH](https://huggingface.co/prs-eth/) Hugging Face organization.

<Tip>


@@ -49,11 +49,11 @@ Make sure to check out the Schedulers [guide](../../using-diffusers/schedulers)

<Tip warning={true}>

Marigold pipelines were designed and tested only with `DDIMScheduler` and `LCMScheduler`.
Depending on the scheduler, the number of inference steps required to get reliable predictions varies, and there is no universal value that works best across schedulers.
Because of that, the default value of `num_inference_steps` in the `__call__` method of the pipeline is set to `None` (see the API reference).
Unless set explicitly, its value will be taken from the checkpoint configuration `model_index.json`.
This is done to ensure high-quality predictions when calling the pipeline with just the `image` argument.

</Tip>
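
The fallback described in this tip can be sketched as follows. This is a minimal illustration of the "explicit value wins, otherwise use the checkpoint default" rule, not the pipeline's actual code; the function name `resolve_num_inference_steps`, its parameters, and the step counts in the usage lines are all hypothetical.

```python
from typing import Optional


def resolve_num_inference_steps(requested: Optional[int], checkpoint_default: Optional[int]) -> int:
    """Pick the step count: an explicit argument wins, else the checkpoint's default."""
    if requested is not None:
        return requested
    if checkpoint_default is None:
        raise ValueError("num_inference_steps was not given and the checkpoint defines no default")
    return checkpoint_default


# Illustrative values only: a checkpoint whose config suggests 4 steps.
print(resolve_num_inference_steps(None, 4))  # falls back to the checkpoint default
print(resolve_num_inference_steps(10, 4))    # the explicit value takes precedence
```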


@@ -37,7 +37,7 @@ Make sure to check out the Schedulers [guide](../../using-diffusers/schedulers.m

## Inference with under 8GB GPU VRAM

Run the [`PixArtAlphaPipeline`] with under 8GB GPU VRAM by loading the text encoder in 8-bit precision. Let's walk through a full-fledged example.

First, install the [bitsandbytes](https://github.com/TimDettmers/bitsandbytes) library:


@@ -75,10 +75,10 @@ with torch.no_grad():

    prompt_embeds, prompt_attention_mask, negative_embeds, negative_prompt_attention_mask = pipe.encode_prompt(prompt)
```

Since text embeddings have been computed, remove the `text_encoder` and `pipe` from memory, and free up some GPU VRAM:

```python
import gc

def flush():
    gc.collect()

@@ -99,7 +99,7 @@ pipe = PixArtAlphaPipeline.from_pretrained(

).to("cuda")

latents = pipe(
    negative_prompt=None,
    prompt_embeds=prompt_embeds,
    negative_prompt_embeds=negative_embeds,
    prompt_attention_mask=prompt_attention_mask,

@@ -146,4 +146,3 @@ While loading the `text_encoder`, you set `load_in_8bit` to `True`. You could al

[[autodoc]] PixArtAlphaPipeline
  - all
  - __call__


@@ -39,7 +39,7 @@ Make sure to check out the Schedulers [guide](../../using-diffusers/schedulers)

|

||||

|

||||

## Inference with under 8GB GPU VRAM

|

||||

|

||||

Run the [`PixArtSigmaPipeline`] with under 8GB GPU VRAM by loading the text encoder in 8-bit precision. Let's walk through a full-fledged example.

|

||||

Run the [`PixArtSigmaPipeline`] with under 8GB GPU VRAM by loading the text encoder in 8-bit precision. Let's walk through a full-fledged example.

|

||||

|

||||

First, install the [bitsandbytes](https://github.com/TimDettmers/bitsandbytes) library:

|

||||

|

||||

@@ -59,7 +59,6 @@ text_encoder = T5EncoderModel.from_pretrained(

|

||||

subfolder="text_encoder",

|

||||

load_in_8bit=True,

|

||||

device_map="auto",

|

||||

|

||||

)

|

||||

pipe = PixArtSigmaPipeline.from_pretrained(

|

||||

"PixArt-alpha/PixArt-Sigma-XL-2-1024-MS",

|

||||

@@ -77,10 +76,10 @@ with torch.no_grad():

|

||||

prompt_embeds, prompt_attention_mask, negative_embeds, negative_prompt_attention_mask = pipe.encode_prompt(prompt)

|

||||

```

|

||||

|

||||

Since text embeddings have been computed, remove the `text_encoder` and `pipe` from the memory, and free up som GPU VRAM:

|

||||

Since text embeddings have been computed, remove the `text_encoder` and `pipe` from the memory, and free up some GPU VRAM:

|

||||

|

||||

```python

|

||||

import gc

|

||||

import gc

|

||||

|

||||

def flush():

|

||||

gc.collect()

|

||||

@@ -101,7 +100,7 @@ pipe = PixArtSigmaPipeline.from_pretrained(

|

||||

).to("cuda")

|

||||

|

||||

latents = pipe(

|

||||

negative_prompt=None,

|

||||

negative_prompt=None,

|

||||

prompt_embeds=prompt_embeds,

|

||||

negative_prompt_embeds=negative_embeds,

|

||||

prompt_attention_mask=prompt_attention_mask,

|

||||

@@ -148,4 +147,3 @@ While loading the `text_encoder`, you set `load_in_8bit` to `True`. You could al

|

||||

[[autodoc]] PixArtSigmaPipeline

|

||||

- all

|

||||

- __call__

|

||||

|

||||

@@ -177,7 +177,7 @@ inpaint = StableDiffusionInpaintPipeline(**text2img.components)

|

||||

|

||||

The Stable Diffusion pipelines are automatically supported in [Gradio](https://github.com/gradio-app/gradio/), a library that makes creating beautiful and user-friendly machine learning apps on the web a breeze. First, make sure you have Gradio installed:

|

||||

|

||||

```

|

||||

```sh

|

||||

pip install -U gradio

|

||||

```

|

||||

|

||||

@@ -209,4 +209,4 @@ gr.Interface.from_pipeline(pipe).launch()

|

||||

```

|

||||

|

||||

By default, the web demo runs on a local server. If you'd like to share it with others, you can generate a temporary public

|

||||

link by setting `share=True` in `launch()`. Or, you can host your demo on [Hugging Face Spaces](https://huggingface.co/spaces) for a permanent link.

|

||||

link by setting `share=True` in `launch()`. Or, you can host your demo on [Hugging Face Spaces](https://huggingface.co/spaces) for a permanent link.

|

||||

@@ -12,7 +12,7 @@ specific language governing permissions and limitations under the License.

|

||||

|

||||

# EDMDPMSolverMultistepScheduler

|

||||

|

||||

`EDMDPMSolverMultistepScheduler` is a [Karras formulation](https://huggingface.co/papers/2206.00364) of `DPMSolverMultistep`, a multistep scheduler from [DPM-Solver: A Fast ODE Solver for Diffusion Probabilistic Model Sampling in Around 10 Steps](https://huggingface.co/papers/2206.00927) and [DPM-Solver++: Fast Solver for Guided Sampling of Diffusion Probabilistic Models](https://huggingface.co/papers/2211.01095) by Cheng Lu, Yuhao Zhou, Fan Bao, Jianfei Chen, Chongxuan Li, and Jun Zhu.

|

||||

`EDMDPMSolverMultistepScheduler` is a [Karras formulation](https://huggingface.co/papers/2206.00364) of `DPMSolverMultistepScheduler`, a multistep scheduler from [DPM-Solver: A Fast ODE Solver for Diffusion Probabilistic Model Sampling in Around 10 Steps](https://huggingface.co/papers/2206.00927) and [DPM-Solver++: Fast Solver for Guided Sampling of Diffusion Probabilistic Models](https://huggingface.co/papers/2211.01095) by Cheng Lu, Yuhao Zhou, Fan Bao, Jianfei Chen, Chongxuan Li, and Jun Zhu.

|

||||

|

||||

DPMSolver (and the improved version DPMSolver++) is a fast dedicated high-order solver for diffusion ODEs with convergence order guarantee. Empirically, DPMSolver sampling with only 20 steps can generate high-quality

|

||||

samples, and it can generate quite good samples even in 10 steps.

|

||||

|

||||

@@ -12,7 +12,7 @@ specific language governing permissions and limitations under the License.

|

||||

|

||||

# DPMSolverMultistepScheduler

|

||||

|

||||

`DPMSolverMultistep` is a multistep scheduler from [DPM-Solver: A Fast ODE Solver for Diffusion Probabilistic Model Sampling in Around 10 Steps](https://huggingface.co/papers/2206.00927) and [DPM-Solver++: Fast Solver for Guided Sampling of Diffusion Probabilistic Models](https://huggingface.co/papers/2211.01095) by Cheng Lu, Yuhao Zhou, Fan Bao, Jianfei Chen, Chongxuan Li, and Jun Zhu.

|

||||

`DPMSolverMultistepScheduler` is a multistep scheduler from [DPM-Solver: A Fast ODE Solver for Diffusion Probabilistic Model Sampling in Around 10 Steps](https://huggingface.co/papers/2206.00927) and [DPM-Solver++: Fast Solver for Guided Sampling of Diffusion Probabilistic Models](https://huggingface.co/papers/2211.01095) by Cheng Lu, Yuhao Zhou, Fan Bao, Jianfei Chen, Chongxuan Li, and Jun Zhu.

|

||||

|

||||

DPMSolver (and the improved version DPMSolver++) is a fast dedicated high-order solver for diffusion ODEs with convergence order guarantee. Empirically, DPMSolver sampling with only 20 steps can generate high-quality

|

||||

samples, and it can generate quite good samples even in 10 steps.

|

||||

|

||||

@@ -36,7 +36,7 @@ Then load and enable the [`DeepCacheSDHelper`](https://github.com/horseee/DeepCa

|

||||

image = pipe("a photo of an astronaut on a moon").images[0]

|

||||

```

|

||||

|

||||

The `set_params` method accepts two arguments: `cache_interval` and `cache_branch_id`. `cache_interval` means the frequency of feature caching, specified as the number of steps between each cache operation. `cache_branch_id` identifies which branch of the network (ordered from the shallowest to the deepest layer) is responsible for executing the caching processes.

|

||||

The `set_params` method accepts two arguments: `cache_interval` and `cache_branch_id`. `cache_interval` means the frequency of feature caching, specified as the number of steps between each cache operation. `cache_branch_id` identifies which branch of the network (ordered from the shallowest to the deepest layer) is responsible for executing the caching processes.

|

||||

Opting for a lower `cache_branch_id` or a larger `cache_interval` can lead to faster inference speed at the expense of reduced image quality (ablation experiments of these two hyperparameters can be found in the [paper](https://arxiv.org/abs/2312.00858)). Once those arguments are set, use the `enable` or `disable` methods to activate or deactivate the `DeepCacheSDHelper`.
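To make the effect of `cache_interval` concrete, here is a rough sketch that labels each denoising step as a full forward pass or a cache-reuse pass. It assumes a uniform schedule in which features are refreshed every `cache_interval` steps; the function `cache_schedule` is hypothetical and is not part of `DeepCacheSDHelper`, whose internal schedule may differ.

```python
def cache_schedule(num_inference_steps, cache_interval):
    """Label each step "full" (features recomputed and cached) or "cached" (features reused).

    Assumes a uniform schedule: a refresh happens on every `cache_interval`-th step.
    """
    if cache_interval < 1:
        raise ValueError("cache_interval must be >= 1")
    return [
        "full" if step % cache_interval == 0 else "cached"
        for step in range(num_inference_steps)
    ]


schedule = cache_schedule(num_inference_steps=6, cache_interval=3)
print(schedule)
print(f"full passes: {schedule.count('full')} of {len(schedule)}")
```

Under this assumption, a larger `cache_interval` means fewer full passes, which is where the speed-up and the quality trade-off both come from.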

|

||||

|

||||

<div class="flex justify-center">

|

||||

|

||||

@@ -188,7 +188,7 @@ def latents_to_rgb(latents):

|

||||

```py

|

||||

def decode_tensors(pipe, step, timestep, callback_kwargs):

|

||||

latents = callback_kwargs["latents"]

|

||||

|

||||

|

||||

image = latents_to_rgb(latents)

|

||||

image.save(f"{step}.png")

|

||||

|

||||

|

||||

@@ -138,15 +138,15 @@ Because Marigold's latent space is compatible with the base Stable Diffusion, it

|

||||

```diff

|

||||

import diffusers

|

||||

import torch

|

||||

|

||||

|

||||

pipe = diffusers.MarigoldDepthPipeline.from_pretrained(

|

||||

"prs-eth/marigold-depth-lcm-v1-0", variant="fp16", torch_dtype=torch.float16

|

||||

).to("cuda")

|

||||

|

||||

|

||||

+ pipe.vae = diffusers.AutoencoderTiny.from_pretrained(

|

||||

+ "madebyollin/taesd", torch_dtype=torch.float16

|

||||

+ ).cuda()

|

||||

|

||||

|

||||

image = diffusers.utils.load_image("https://marigoldmonodepth.github.io/images/einstein.jpg")

|

||||

depth = pipe(image)

|

||||

```

|

||||

@@ -156,13 +156,13 @@ As suggested in [Optimizations](../optimization/torch2.0#torch.compile), adding

|

||||

```diff

|

||||

import diffusers

|

||||

import torch

|

||||

|

||||

|

||||

pipe = diffusers.MarigoldDepthPipeline.from_pretrained(

|

||||

"prs-eth/marigold-depth-lcm-v1-0", variant="fp16", torch_dtype=torch.float16

|

||||

).to("cuda")

|

||||

|

||||

|

||||

+ pipe.unet = torch.compile(pipe.unet, mode="reduce-overhead", fullgraph=True)

|

||||

|

||||

|

||||

image = diffusers.utils.load_image("https://marigoldmonodepth.github.io/images/einstein.jpg")

|

||||

depth = pipe(image)

|

||||

```

|

||||

@@ -208,7 +208,7 @@ model_paper_kwargs = {

|

||||

diffusers.schedulers.LCMScheduler: {

|

||||

"num_inference_steps": 4,

|

||||

"ensemble_size": 5,

|

||||

},

|

||||

},

|

||||

}

|

||||

|

||||

image = diffusers.utils.load_image("https://marigoldmonodepth.github.io/images/einstein.jpg")

|

||||

@@ -261,7 +261,7 @@ model_paper_kwargs = {

|

||||

diffusers.schedulers.LCMScheduler: {

|

||||

"num_inference_steps": 4,

|

||||

"ensemble_size": 10,

|

||||

},

|

||||

},

|

||||

}

|

||||

|

||||

image = diffusers.utils.load_image("https://marigoldmonodepth.github.io/images/einstein.jpg")

|

||||

@@ -415,7 +415,7 @@ image = diffusers.utils.load_image(

|

||||

|

||||

pipe = diffusers.MarigoldDepthPipeline.from_pretrained(

|

||||

"prs-eth/marigold-depth-lcm-v1-0", torch_dtype=torch.float16, variant="fp16"

|

||||

).to("cuda")

|

||||

).to(device)

|

||||

|

||||

depth_image = pipe(image, generator=generator).prediction

|

||||

depth_image = pipe.image_processor.visualize_depth(depth_image, color_map="binary")

|

||||

@@ -423,10 +423,10 @@ depth_image[0].save("motorcycle_controlnet_depth.png")

|

||||

|

||||

controlnet = diffusers.ControlNetModel.from_pretrained(

|

||||

"diffusers/controlnet-depth-sdxl-1.0", torch_dtype=torch.float16, variant="fp16"

|

||||

).to("cuda")

|

||||

).to(device)

|

||||

pipe = diffusers.StableDiffusionXLControlNetPipeline.from_pretrained(

|

||||

"SG161222/RealVisXL_V4.0", torch_dtype=torch.float16, variant="fp16", controlnet=controlnet

|

||||

).to("cuda")

|

||||

).to(device)

|

||||

pipe.scheduler = diffusers.DPMSolverMultistepScheduler.from_config(pipe.scheduler.config, use_karras_sigmas=True)

|

||||

|

||||

controlnet_out = pipe(

|

||||

|

||||

@@ -267,3 +267,216 @@ pipeline.save_pretrained()

|

||||

```

|

||||

|

||||

Lastly, there are also Spaces, such as [SD To Diffusers](https://hf.co/spaces/diffusers/sd-to-diffusers) and [SD-XL To Diffusers](https://hf.co/spaces/diffusers/sdxl-to-diffusers), that provide a more user-friendly interface for converting models to Diffusers-multifolder layout. This is the easiest and most convenient option for converting layouts, and it'll open a PR on your model repository with the converted files. However, this option is not as reliable as running a script, and the Space may fail for more complicated models.

|

||||

|

||||

## Single-file layout usage

|

||||

|

||||

Now that you're familiar with the differences between the Diffusers-multifolder and single-file layout, this section shows you how to load models and pipeline components, customize configuration options for loading, and load local files with the [`~loaders.FromSingleFileMixin.from_single_file`] method.

|

||||

|

||||

### Load a pipeline or model

|

||||

|

||||

Pass the file path of the pipeline or model to the [`~loaders.FromSingleFileMixin.from_single_file`] method to load it.

|

||||

|

||||

<hfoptions id="pipeline-model">

|

||||

<hfoption id="pipeline">

|

||||

|

||||

```py

|

||||

from diffusers import StableDiffusionXLPipeline

|

||||

|

||||

ckpt_path = "https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0/blob/main/sd_xl_base_1.0_0.9vae.safetensors"

|

||||

pipeline = StableDiffusionXLPipeline.from_single_file(ckpt_path)

|

||||

```

|

||||

|

||||

</hfoption>

|

||||

<hfoption id="model">

|

||||

|

||||

```py

|

||||

from diffusers import StableCascadeUNet

|

||||

|

||||

ckpt_path = "https://huggingface.co/stabilityai/stable-cascade/blob/main/stage_b_lite.safetensors"

|

||||

model = StableCascadeUNet.from_single_file(ckpt_path)

|

||||

```

|

||||

|

||||

</hfoption>

|

||||

</hfoptions>

|

||||

|

||||

Customize components in the pipeline by passing them directly to the [`~loaders.FromSingleFileMixin.from_single_file`] method. For example, you can use a different scheduler in a pipeline.

|

||||

|

||||

```py

|

||||

from diffusers import StableDiffusionXLPipeline, DDIMScheduler

|

||||

|

||||

ckpt_path = "https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0/blob/main/sd_xl_base_1.0_0.9vae.safetensors"

|

||||

scheduler = DDIMScheduler()

|

||||

pipeline = StableDiffusionXLPipeline.from_single_file(ckpt_path, scheduler=scheduler)

|

||||

```

|

||||

|

||||

Or you could use a ControlNet model in the pipeline.

|

||||

|

||||

```py

|

||||

from diffusers import StableDiffusionControlNetPipeline, ControlNetModel

|

||||

|

||||

ckpt_path = "https://huggingface.co/runwayml/stable-diffusion-v1-5/blob/main/v1-5-pruned-emaonly.safetensors"

|

||||

controlnet = ControlNetModel.from_pretrained("lllyasviel/control_v11p_sd15_canny")

|

||||

pipeline = StableDiffusionControlNetPipeline.from_single_file(ckpt_path, controlnet=controlnet)

|

||||

```

|

||||

|

||||

### Customize configuration options

|

||||

|

||||

Models have a configuration file that defines their attributes, like the number of inputs in a UNet. Pipeline configuration options are available in the pipeline's class. For example, if you look at the [`StableDiffusionXLInstructPix2PixPipeline`] class, there is an option to scale the image latents with the `is_cosxl_edit` parameter.

|

||||

|

||||

These configuration files can be found in the model's Hub repository or in another location from which the configuration file originated (for example, a GitHub repository or locally on your device).

|

||||

|

||||

<hfoptions id="config-file">

|

||||

<hfoption id="Hub configuration file">

|

||||

|

||||

> [!TIP]

|

||||

> The [`~loaders.FromSingleFileMixin.from_single_file`] method automatically maps the checkpoint to the appropriate model repository, but there are cases where it is useful to use the `config` parameter: for example, when the model components in the checkpoint differ from the original checkpoint, or when a checkpoint lacks the metadata needed to correctly determine the configuration to use for the pipeline.

|

||||

|

||||

The [`~loaders.FromSingleFileMixin.from_single_file`] method automatically determines the configuration to use from the configuration file in the model repository. You could also explicitly specify the configuration to use by providing the repository id to the `config` parameter.

|

||||

|

||||

```py

|

||||

from diffusers import StableDiffusionXLPipeline

|

||||

|

||||

ckpt_path = "https://huggingface.co/segmind/SSD-1B/blob/main/SSD-1B.safetensors"

|

||||

repo_id = "segmind/SSD-1B"

|

||||

|

||||

pipeline = StableDiffusionXLPipeline.from_single_file(ckpt_path, config=repo_id)

|

||||

```

|

||||

|

||||

The model loads the configuration file for the [UNet](https://huggingface.co/segmind/SSD-1B/blob/main/unet/config.json), [VAE](https://huggingface.co/segmind/SSD-1B/blob/main/vae/config.json), and [text encoder](https://huggingface.co/segmind/SSD-1B/blob/main/text_encoder/config.json) from their respective subfolders in the repository.

|

||||

|

||||

</hfoption>

|

||||

<hfoption id="original configuration file">

|

||||

|

||||

The [`~loaders.FromSingleFileMixin.from_single_file`] method can also load the original configuration file of a pipeline that is stored elsewhere. Pass a local path or URL of the original configuration file to the `original_config` parameter.

|

||||

|

||||

```py

|

||||

from diffusers import StableDiffusionXLPipeline

|

||||

|

||||

ckpt_path = "https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0/blob/main/sd_xl_base_1.0_0.9vae.safetensors"

|

||||

original_config = "https://raw.githubusercontent.com/Stability-AI/generative-models/main/configs/inference/sd_xl_base.yaml"

|

||||

|

||||

pipeline = StableDiffusionXLPipeline.from_single_file(ckpt_path, original_config=original_config)

|

||||

```

|

||||

|

||||

> [!TIP]

|

||||

> Diffusers attempts to infer the pipeline components based on the type signatures of the pipeline class when you use `original_config` with `local_files_only=True`, instead of fetching the configuration files from the model repository on the Hub. This prevents backward breaking changes in code that can't connect to the internet to fetch the necessary configuration files.

|

||||

>

|

||||

> This is not as reliable as providing a path to a local model repository with the `config` parameter, and might lead to errors during pipeline configuration. To avoid errors, run the pipeline with `local_files_only=False` once to download the appropriate pipeline configuration files to the local cache.

|

||||

|

||||

</hfoption>

|

||||

</hfoptions>

|

||||

|

||||

While the configuration files specify the pipeline's or model's default parameters, you can override them by providing the parameters directly to the [`~loaders.FromSingleFileMixin.from_single_file`] method. Any parameter supported by the model or pipeline class can be configured in this way.

|

||||

|

||||

<hfoptions id="override">

|

||||

<hfoption id="pipeline">

|

||||

|

||||

For example, to scale the image latents in [`StableDiffusionXLInstructPix2PixPipeline`] pass the `is_cosxl_edit` parameter.

|

||||

|

||||

```python

|

||||

from diffusers import StableDiffusionXLInstructPix2PixPipeline

|

||||

|

||||

ckpt_path = "https://huggingface.co/stabilityai/cosxl/blob/main/cosxl_edit.safetensors"

|

||||

pipeline = StableDiffusionXLInstructPix2PixPipeline.from_single_file(ckpt_path, config="diffusers/sdxl-instructpix2pix-768", is_cosxl_edit=True)

|

||||

```

|

||||

|

||||

</hfoption>

|

||||

<hfoption id="model">

|

||||

|

||||

For example, to upcast the attention dimensions in a [`UNet2DConditionModel`] pass the `upcast_attention` parameter.

|

||||

|

||||

```python

|

||||

from diffusers import UNet2DConditionModel

|

||||

|

||||

ckpt_path = "https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0/blob/main/sd_xl_base_1.0_0.9vae.safetensors"

|

||||

model = UNet2DConditionModel.from_single_file(ckpt_path, upcast_attention=True)

|

||||

```

|

||||

|

||||

</hfoption>

|

||||

</hfoptions>
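The override behavior shown in the options above can be summarized with a small sketch. The helper `apply_overrides` and the `sample_size` value are hypothetical and only illustrate the idea that values passed directly take precedence over the defaults from a configuration file; this is not how Diffusers implements it.

```python
def apply_overrides(default_config, **overrides):
    """Return a copy of `default_config` with caller-supplied values applied.

    Only parameters already known to the configuration are accepted, mirroring
    the idea that only parameters supported by the class can be configured.
    """
    unknown = set(overrides) - set(default_config)
    if unknown:
        raise TypeError(f"Unsupported parameters: {sorted(unknown)}")
    return {**default_config, **overrides}


# Hypothetical defaults, standing in for values read from a config file.
defaults = {"upcast_attention": False, "sample_size": 128}

config = apply_overrides(defaults, upcast_attention=True)
print(config)
```

Returning a new dictionary leaves the defaults untouched, so the same configuration file can be reused with different overrides.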

|

||||

|

||||

### Local files

|

||||

|

||||

In Diffusers>=v0.28.0, the [`~loaders.FromSingleFileMixin.from_single_file`] method attempts to configure a pipeline or model by inferring the model type from the keys in the checkpoint file. The inferred model type is used to determine the appropriate model repository on the Hugging Face Hub to configure the model or pipeline.

|

||||

|

||||

For example, any single file checkpoint based on the Stable Diffusion XL base model will use the [stabilityai/stable-diffusion-xl-base-1.0](https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0) model repository to configure the pipeline.
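As a toy illustration of this idea, the sketch below maps checkpoint keys to a model type and then to a default repository. The key prefixes in `HYPOTHETICAL_MARKERS` and the helper `infer_default_repo` are invented for illustration; the real detection logic in Diffusers is not shown in this document and is likely more involved.

```python
# Hypothetical key prefixes; the real markers used by Diffusers may differ.
HYPOTHETICAL_MARKERS = {
    "xl_base": "conditioner.embedders.1.",
    "v1": "cond_stage_model.",
}

# Repository ids taken from the examples in this guide.
DEFAULT_REPOS = {
    "xl_base": "stabilityai/stable-diffusion-xl-base-1.0",
    "v1": "runwayml/stable-diffusion-v1-5",
}


def infer_default_repo(checkpoint_keys):
    """Return the repository id for the first model type whose marker prefixes a key."""
    for model_type, marker in HYPOTHETICAL_MARKERS.items():
        if any(key.startswith(marker) for key in checkpoint_keys):
            return DEFAULT_REPOS[model_type]
    raise ValueError("Could not infer the model type from the checkpoint keys")


keys = ["conditioner.embedders.1.model.ln_final.weight", "model.diffusion_model.out.2.bias"]
print(infer_default_repo(keys))
```

When inference like this fails or picks the wrong repository, passing `config` explicitly sidesteps the guess.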

|

||||

|

||||

But if you're working in an environment with restricted internet access, you should download the configuration files with the [`~huggingface_hub.snapshot_download`] function, and the model checkpoint with the [`~huggingface_hub.hf_hub_download`] function. By default, these files are downloaded to the Hugging Face Hub [cache directory](https://huggingface.co/docs/huggingface_hub/en/guides/manage-cache), but you can specify a preferred directory to download the files to with the `local_dir` parameter.

|

||||

|

||||

Pass the configuration and checkpoint paths to the [`~loaders.FromSingleFileMixin.from_single_file`] method to load locally.

|

||||

|

||||

<hfoptions id="local">

|

||||

<hfoption id="Hub cache directory">

|

||||

|

||||

```python

|

||||

from diffusers import StableDiffusionXLPipeline
from huggingface_hub import hf_hub_download, snapshot_download

|

||||

|

||||

my_local_checkpoint_path = hf_hub_download(

|

||||

repo_id="segmind/SSD-1B",

|

||||

filename="SSD-1B.safetensors"

|

||||

)

|

||||

|

||||

my_local_config_path = snapshot_download(

|

||||

repo_id="segmind/SSD-1B",

|

||||

allow_patterns=["*.json", "**/*.json", "*.txt", "**/*.txt"]

|

||||

)

|

||||

|

||||

pipeline = StableDiffusionXLPipeline.from_single_file(my_local_checkpoint_path, config=my_local_config_path, local_files_only=True)

|

||||

```

|

||||

|

||||

</hfoption>

|

||||

<hfoption id="specific local directory">

|

||||

|

||||

```python

|

||||

from diffusers import StableDiffusionXLPipeline
from huggingface_hub import hf_hub_download, snapshot_download

|

||||

|

||||

my_local_checkpoint_path = hf_hub_download(

|

||||

repo_id="segmind/SSD-1B",

|

||||

filename="SSD-1B.safetensors",

|

||||

local_dir="my_local_checkpoints"

|

||||

)

|

||||

|

||||

my_local_config_path = snapshot_download(

|

||||

repo_id="segmind/SSD-1B",

|

||||

allow_patterns=["*.json", "**/*.json", "*.txt", "**/*.txt"],

|

||||

local_dir="my_local_config"

|

||||

)

|

||||

|

||||

pipeline = StableDiffusionXLPipeline.from_single_file(my_local_checkpoint_path, config=my_local_config_path, local_files_only=True)

|

||||

```

|

||||

|

||||

</hfoption>

|

||||

</hfoptions>
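To see which files the glob patterns passed to `snapshot_download` above would keep, here is a standard-library sketch. It assumes shell-style matching as implemented by Python's `fnmatch`; treat that as an assumption about how the filtering behaves, and note that the file list is made up for illustration.

```python
from fnmatch import fnmatch

PATTERNS = ["*.json", "**/*.json", "*.txt", "**/*.txt"]


def select_files(repo_files, patterns):
    """Keep the files whose path matches at least one shell-style pattern."""
    return [name for name in repo_files if any(fnmatch(name, p) for p in patterns)]


# Hypothetical repository listing.
repo_files = [
    "model_index.json",
    "unet/config.json",
    "tokenizer/merges.txt",
    "SSD-1B.safetensors",
    "unet/diffusion_pytorch_model.safetensors",
]

print(select_files(repo_files, PATTERNS))
```

Only the small configuration and tokenizer files are selected, so the large weight files are not downloaded a second time alongside the single-file checkpoint.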

|

||||

|

||||

#### Local files without symlink

|

||||

|

||||

> [!TIP]

|

||||

> In huggingface_hub>=v0.23.0, the `local_dir_use_symlinks` argument isn't necessary for the [`~huggingface_hub.hf_hub_download`] and [`~huggingface_hub.snapshot_download`] functions.

|

||||

|

||||

The [`~loaders.FromSingleFileMixin.from_single_file`] method relies on the [huggingface_hub](https://hf.co/docs/huggingface_hub/index) caching mechanism to fetch and store checkpoints and configuration files for models and pipelines. If you're working with a file system that does not support symlinking, you should download the checkpoint file to a local directory first, and disable symlinking with the `local_dir_use_symlinks=False` parameter in the [`~huggingface_hub.hf_hub_download`] and [`~huggingface_hub.snapshot_download`] functions.

|

||||

|

||||

```python

|

||||

from huggingface_hub import hf_hub_download, snapshot_download

|

||||

|

||||

my_local_checkpoint_path = hf_hub_download(

|

||||

repo_id="segmind/SSD-1B",

|

||||

filename="SSD-1B.safetensors",

|

||||

local_dir="my_local_checkpoints",

|

||||

local_dir_use_symlinks=False

|

||||

)

|

||||

print("My local checkpoint: ", my_local_checkpoint_path)

|

||||

|

||||

my_local_config_path = snapshot_download(

|

||||

repo_id="segmind/SSD-1B",

|

||||

allow_patterns=["*.json", "**/*.json", "*.txt", "**/*.txt"],

|

||||

local_dir_use_symlinks=False,

|

||||

)

|

||||

print("My local config: ", my_local_config_path)

|

||||

|

||||

```

|

||||

|

||||

Then you can pass the local paths to the `pretrained_model_link_or_path` and `config` parameters.

|

||||

|

||||

```python

|

||||

from diffusers import StableDiffusionXLPipeline
pipeline = StableDiffusionXLPipeline.from_single_file(my_local_checkpoint_path, config=my_local_config_path, local_files_only=True)

|

||||

```

|

||||

|

||||

@@ -134,7 +134,7 @@ sigmas = [14.615, 6.315, 3.771, 2.181, 1.342, 0.862, 0.555, 0.380, 0.234, 0.113,

|

||||

prompt = "anthropomorphic capybara wearing a suit and working with a computer"

|

||||

generator = torch.Generator(device='cuda').manual_seed(123)

|

||||

image = pipeline(

|

||||

prompt=prompt,

|

||||

prompt=prompt,

|

||||

num_inference_steps=10,

|

||||

sigmas=sigmas,

|

||||

generator=generator

|

||||

|

||||

@@ -34,7 +34,7 @@ Stable Diffusion XL은 Dustin Podell, Zion English, Kyle Lacey, Andreas Blattman

|

||||

Before using SDXL, install `transformers`, `accelerate`, `safetensors`, and `invisible_watermark`.

|

||||

You can install the libraries as follows:

|

||||

|

||||

```

|

||||

```sh

|

||||

pip install transformers

|

||||

pip install accelerate

|

||||

pip install safetensors

|

||||

@@ -46,7 +46,7 @@ pip install invisible-watermark>=0.2.0

|

||||

We recommend adding an invisible watermark to images generated with Stable Diffusion XL, because it can help downstream applications identify whether an image was machine-synthesized. To do so, install the [invisible_watermark library](https://pypi.org/project/invisible-watermark/):

|

||||

|

||||

|

||||

```

|

||||

```sh

|

||||

pip install "invisible-watermark>=0.2.0"

|

||||

```

|

||||

|

||||

@@ -75,11 +75,11 @@ prompt = "Astronaut in a jungle, cold color palette, muted colors, detailed, 8k"

|

||||

image = pipe(prompt=prompt).images[0]

|

||||

```

|

||||

|

||||

### Image-to-image

|

||||

### Image-to-image

|

||||

|

||||

You can use SDXL for *image-to-image* as follows:

|

||||

|

||||

```py

|

||||

```py

|

||||

import torch

|

||||

from diffusers import StableDiffusionXLImg2ImgPipeline

|

||||

from diffusers.utils import load_image

|

||||

@@ -99,7 +99,7 @@ image = pipe(prompt, image=init_image).images[0]

|

||||

|

||||

You can use SDXL for *inpainting* as follows:

|

||||

|

||||

```py

|

||||

```py

|

||||

import torch

|

||||

from diffusers import StableDiffusionXLInpaintPipeline

|

||||

from diffusers.utils import load_image

|

||||

@@ -352,7 +352,7 @@ If you get an out-of-memory error, [`StableDiffusionXLPipeline.enable_model_cpu_

|

||||

|

||||

**Note** To run Stable Diffusion XL with a `torch` version below 2.0, use xformers attention:

|

||||

|

||||

```

|

||||

```sh

|

||||

pip install xformers

|

||||

```

|

||||

|

||||

|

||||

@@ -93,13 +93,13 @@ cd diffusers

|

||||

|

||||

**For PyTorch**

|

||||

|

||||

```

|

||||

```sh

|

||||

pip install -e ".[torch]"

|

||||

```

|

||||

|

||||

**For Flax**

|

||||

|

||||

```

|

||||

```sh

|

||||

pip install -e ".[flax]"

|

||||

```

|

||||

|

||||

|

||||

@@ -19,13 +19,13 @@ specific language governing permissions and limitations under the License.

|

||||

|

||||

Install 🤗 Optimum with ONNX Runtime support using the following command:

|

||||

|

||||

```

|

||||

```sh

|

||||

pip install optimum["onnxruntime"]

|

||||

```

|

||||

|

||||

## Stable Diffusion inference

|

||||

|

||||

The code below shows how to use ONNX Runtime. Use `OnnxStableDiffusionPipeline` instead of `StableDiffusionPipeline`.

|

||||

The code below shows how to use ONNX Runtime. Use `OnnxStableDiffusionPipeline` instead of `StableDiffusionPipeline`.

|

||||

To load a PyTorch model and convert it to the ONNX format on the fly, set `export=True`.

|

||||

|

||||

```python

|

||||

@@ -38,7 +38,7 @@ images = pipe(prompt).images[0]

|

||||

pipe.save_pretrained("./onnx-stable-diffusion-v1-5")

|

||||

```

|

||||

|

||||

To export the pipeline to the ONNX format offline and use it later for inference,

|

||||

To export the pipeline to the ONNX format offline and use it later for inference,

|

||||

you can use the [`optimum-cli export`](https://huggingface.co/docs/optimum/main/en/exporters/onnx/usage_guides/export_a_model#exporting-a-model-to-onnx-using-the-cli) command:

|

||||

|

||||

```bash

|

||||

@@ -47,7 +47,7 @@ optimum-cli export onnx --model runwayml/stable-diffusion-v1-5 sd_v15_onnx/

|

||||

|

||||

Then perform inference:

|

||||

|

||||

```python

|

||||

```python

|

||||

from optimum.onnxruntime import ORTStableDiffusionPipeline

|

||||

|

||||

model_id = "sd_v15_onnx"

|

||||

|

||||

@@ -19,7 +19,7 @@ specific language governing permissions and limitations under the License.

|

||||

|

||||

Install 🤗 Optimum with the following command:

|

||||

|

||||

```

|

||||

```sh

|

||||

pip install optimum["openvino"]

|

||||

```

|

||||

|

||||

|

||||

@@ -59,7 +59,7 @@ image

|

||||

|

||||

First, you need to install the `compel` library:

|

||||

|

||||

```

|

||||

```sh

|

||||

pip install compel

|

||||

```

|

||||

|

||||

|

||||

@@ -95,13 +95,13 @@ cd diffusers

|

||||

|

||||

**PyTorch**

|

||||

|

||||

```

|

||||

```sh

|

||||

pip install -e ".[torch]"

|

||||

```

|

||||

|

||||

**Flax**

|

||||

|

||||

```

|

||||

```sh

|

||||

pip install -e ".[flax]"

|

||||

```

|

||||

|

||||

|

||||

@@ -25,7 +25,7 @@ from diffusers.utils.torch_utils import randn_tensor

|

||||

|

||||

EXAMPLE_DOC_STRING = """

|

||||

Examples:

|

||||

```

|

||||

```py

|

||||

from io import BytesIO

|

||||

|

||||

import requests

|

||||

|

||||

@@ -113,9 +113,9 @@ accelerate launch train_lcm_distill_lora_sdxl_wds.py \

|

||||

--push_to_hub \

|

||||

```

|

||||

|

||||

We provide another version for LCM LoRA SDXL that follows best practices of `peft` and leverages the `datasets` library for quick experimentation. The script doesn't load two UNets unlike `train_lcm_distill_lora_sdxl_wds.py` which reduces the memory requirements quite a bit.

|

||||

We provide another version for LCM LoRA SDXL that follows best practices of `peft` and leverages the `datasets` library for quick experimentation. The script doesn't load two UNets unlike `train_lcm_distill_lora_sdxl_wds.py` which reduces the memory requirements quite a bit.

|

||||

|

||||

Below is an example training command that trains an LCM LoRA on the [Pokemons dataset](https://huggingface.co/datasets/lambdalabs/naruto-blip-captions):

|

||||

Below is an example training command that trains an LCM LoRA on the [Narutos dataset](https://huggingface.co/datasets/lambdalabs/naruto-blip-captions):

|

||||

|

||||

```bash

|

||||

export MODEL_NAME="stabilityai/stable-diffusion-xl-base-1.0"

|

||||

@@ -125,7 +125,7 @@ export VAE_PATH="madebyollin/sdxl-vae-fp16-fix"

|

||||

accelerate launch train_lcm_distill_lora_sdxl.py \

|

||||

--pretrained_teacher_model=${MODEL_NAME} \

|

||||

--pretrained_vae_model_name_or_path=${VAE_PATH} \

|

||||

--output_dir="pokemons-lora-lcm-sdxl" \

|

||||

--output_dir="narutos-lora-lcm-sdxl" \

|

||||

--mixed_precision="fp16" \

|

||||

--dataset_name=$DATASET_NAME \

|

||||

--resolution=1024 \

|

||||

|

||||

@@ -101,7 +101,7 @@ accelerate launch train_controlnet.py \

|

||||

`accelerate` allows for seamless multi-GPU training. Follow the instructions [here](https://huggingface.co/docs/accelerate/basic_tutorials/launch)

|

||||

for running distributed training with `accelerate`. Here is an example command:

|

||||